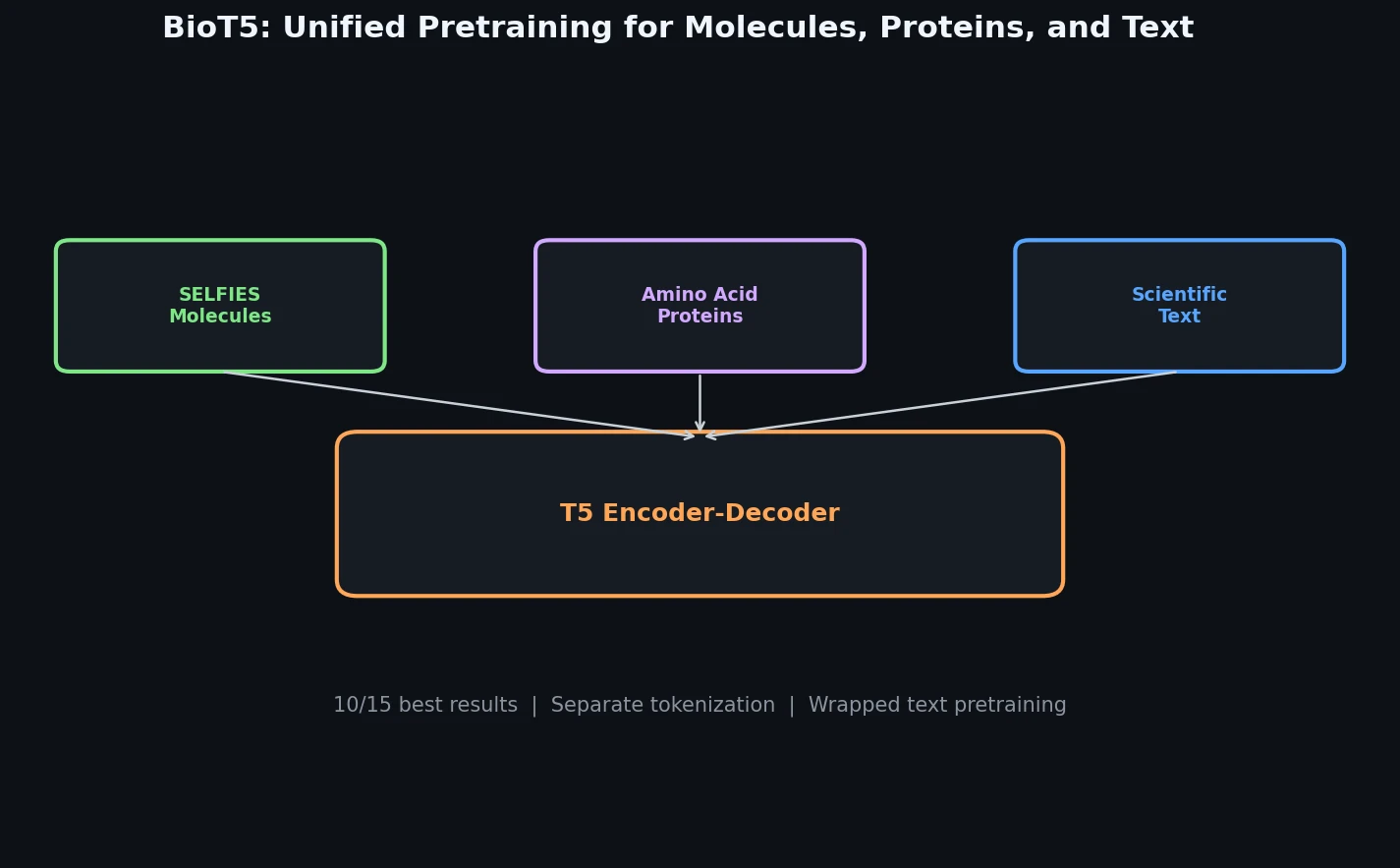

BioT5: Cross-Modal Integration of Biology and Chemistry

BioT5 represents molecules as SELFIES strings and tokenizes each modality separately, pre-training a single unified T5 model across molecules, proteins, and text. It achieves state-of-the-art results on 10 of 15 downstream tasks.
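
To make "separate tokenization" concrete, here is a minimal sketch of how molecule, protein, and text inputs might be kept in disjoint token spaces before being fed to one shared model. It is an illustration under stated assumptions, not the BioT5 implementation: the boundary markers (`<bom>`, `<eom>`, `<bop>`, `<eop>`), the `<p>` amino-acid prefix, and all function names are hypothetical, and whitespace splitting stands in for a real subword text tokenizer.

```python
import re
from typing import List

# Hypothetical modality markers (assumed for illustration only).
MOL_START, MOL_END = "<bom>", "<eom>"
PROT_START, PROT_END = "<bop>", "<eop>"

# A SELFIES string is a sequence of bracketed symbols such as [C] or [=O].
SELFIES_SYMBOL = re.compile(r"\[[^\[\]]+\]")


def tokenize_selfies(selfies: str) -> List[str]:
    """Split a SELFIES string into one token per bracketed symbol."""
    symbols = SELFIES_SYMBOL.findall(selfies)
    # Reject input that is not made up entirely of bracketed symbols.
    if "".join(symbols) != selfies:
        raise ValueError(f"not a well-formed SELFIES string: {selfies!r}")
    return [MOL_START, *symbols, MOL_END]


def tokenize_protein(sequence: str) -> List[str]:
    """Emit one prefixed token per amino acid so 'A' the residue
    never collides with 'A' the English word."""
    return [PROT_START, *(f"<p>{aa}" for aa in sequence), PROT_END]


def tokenize_text(text: str) -> List[str]:
    """Placeholder for a subword tokenizer: split on whitespace."""
    return text.split()


if __name__ == "__main__":
    print(tokenize_selfies("[C][C][O]"))   # ethanol written in SELFIES
    print(tokenize_protein("MKT"))
    print(tokenize_text("binds to the receptor"))
```

Because every molecule token is bracketed and every residue token is prefixed, the three vocabularies cannot overlap, which lets a single embedding table serve all modalities without ambiguity.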