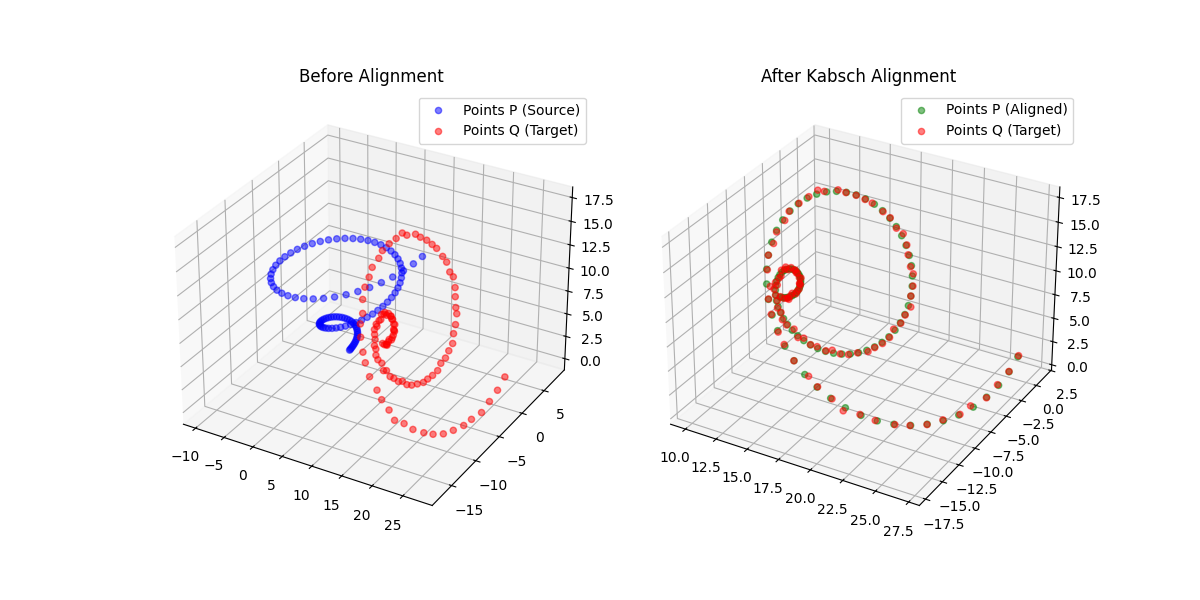

Kabsch-Horn Cookbook: Differentiable Alignment

A differentiable point-set alignment library implementing N-dimensional Kabsch, Horn quaternion, and Umeyama scaling algorithms with per-point weights, batch dimensions, and custom autograd across NumPy, PyTorch, JAX, TensorFlow, and MLX.