How Does Congress Actually Work? Data from 15K Bills

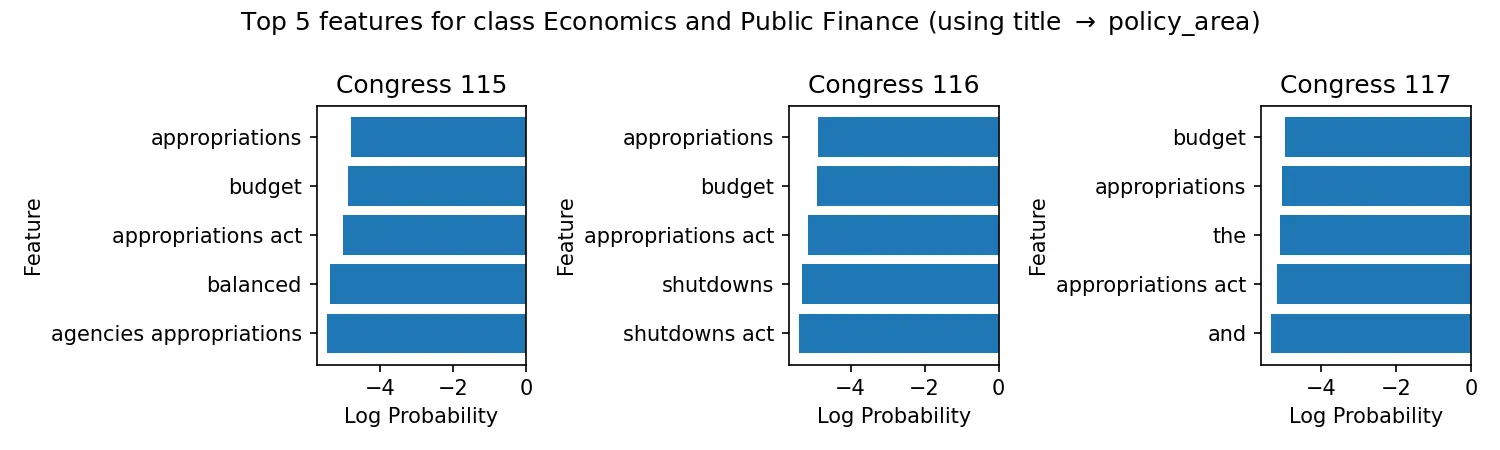

Only 2% of congressional bills become law. We analyze 15K bills from 2021-2023 to understand what drives legislative success and failure.

Learn to align molecular structures and point clouds using the Kabsch algorithm, with differentiable implementations for modern ML frameworks.
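As a rough illustration of the alignment step, here is a minimal NumPy sketch of the Kabsch algorithm. It is not the post's differentiable implementation. The function name `kabsch` and the row-per-point array layout are assumptions made for this example.

```python
import numpy as np

def kabsch(P, Q):
    """Find rotation R and translation t so that P @ R.T + t best matches Q.

    P and Q are (N, 3) arrays of corresponding points (layout assumed here).
    """
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)

    # Center both point sets on their centroids.
    p_mean = P.mean(axis=0)
    q_mean = Q.mean(axis=0)

    # Cross-covariance matrix and its singular value decomposition.
    H = (P - p_mean).T @ (Q - q_mean)
    U, _, Vt = np.linalg.svd(H)

    # Flip the last axis if needed so R is a proper rotation, not a reflection.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T

    t = q_mean - R @ p_mean
    return R, t

# Usage: recover a known 30-degree rotation about z plus a shift.
theta = np.pi / 6
R_true = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                   [np.sin(theta),  np.cos(theta), 0.0],
                   [0.0,            0.0,           1.0]])
t_true = np.array([1.0, -2.0, 0.5])
P = np.array([[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
Q = P @ R_true.T + t_true
R, t = kabsch(P, Q)
```

A differentiable version would follow the same steps using a framework's own SVD, with extra care around degenerate singular values.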

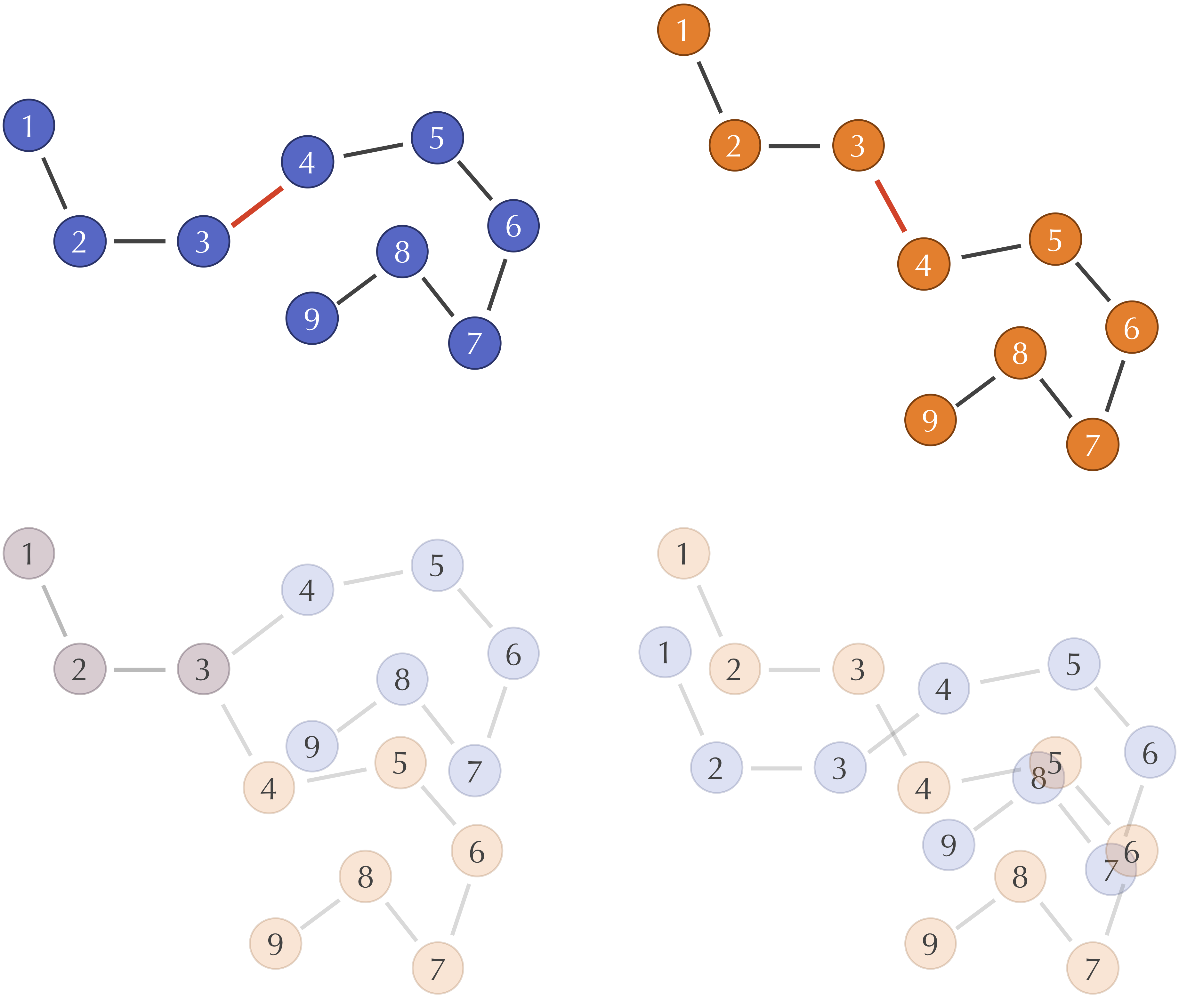

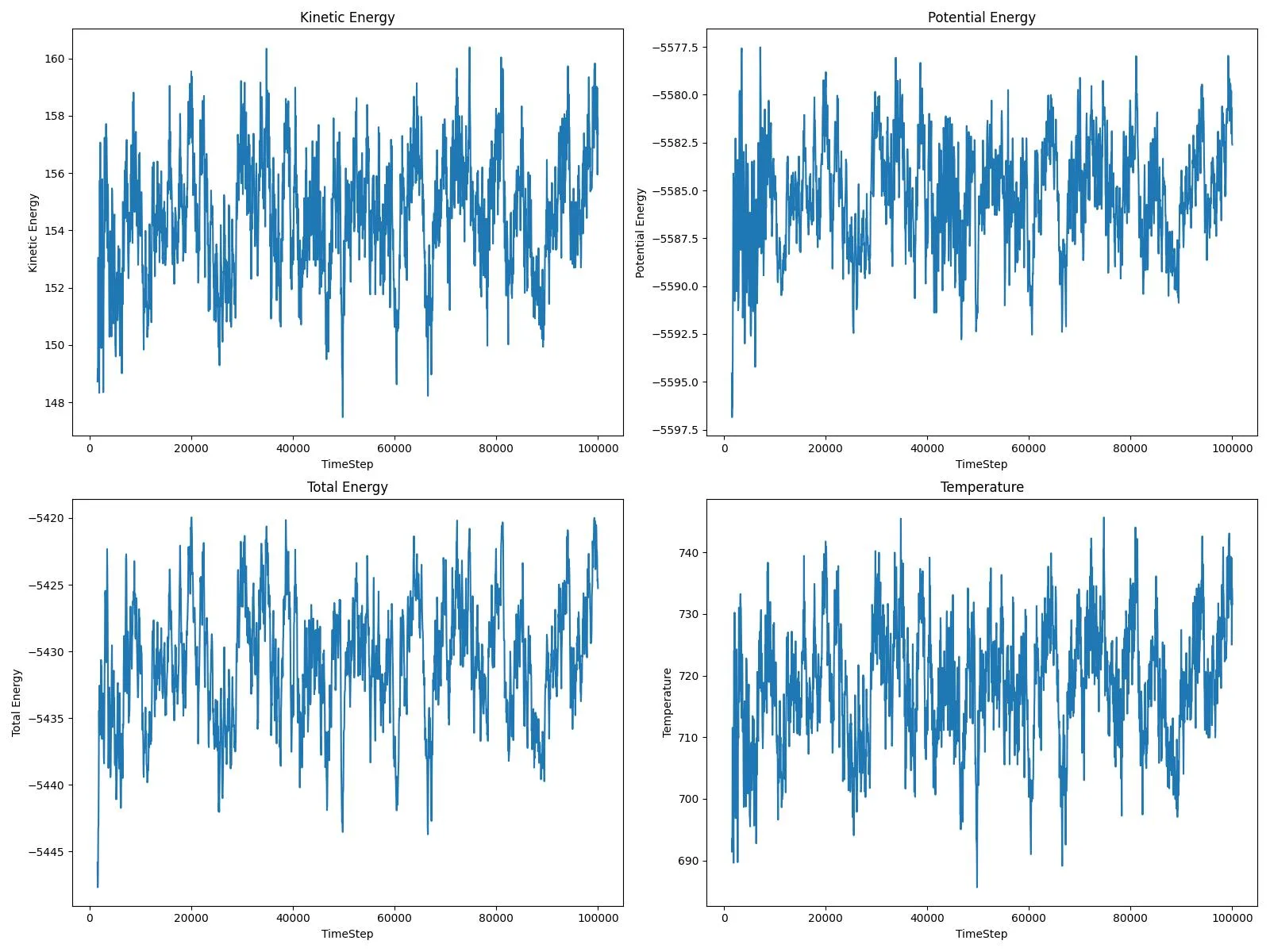

A complete input-to-analysis workflow for simulating adatom diffusion on FCC metal surfaces using LAMMPS and EAM potentials. It provides comparative datasets for copper and platinum that demonstrate how atomic mass and bonding strength affect surface dynamics, with automated Python analysis that generates publication-ready visualizations.

An automated GROMACS pipeline for generating high-fidelity molecular dynamics datasets suitable for machine learning, simulating capped dipeptides across nine residue types with 0.1 ps resolution and atomic force extraction optimized for training Neural Network Potentials.

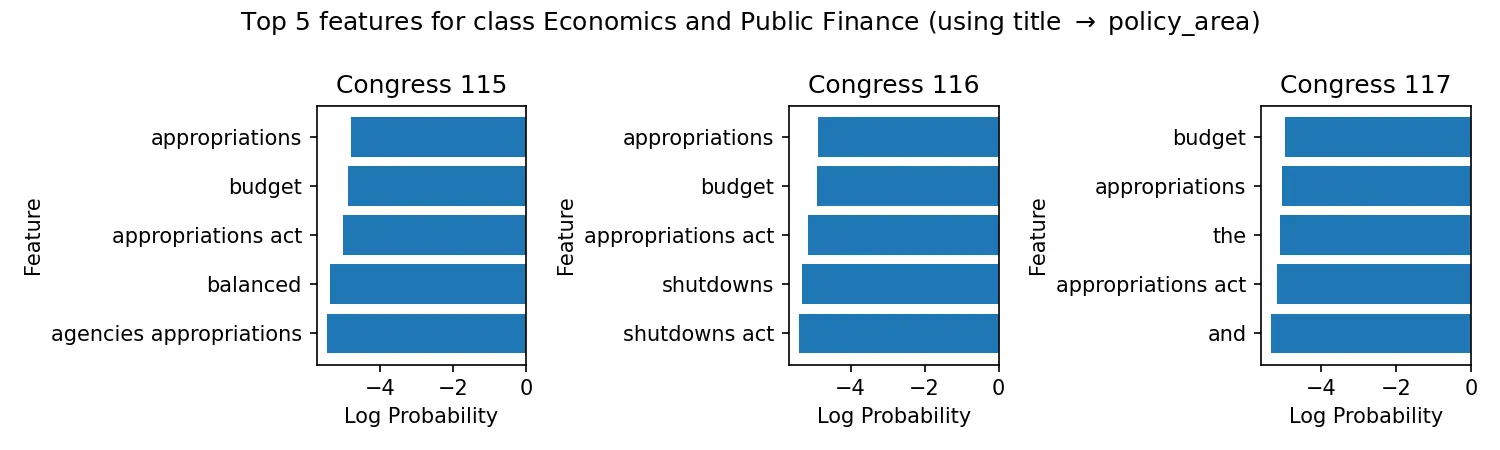

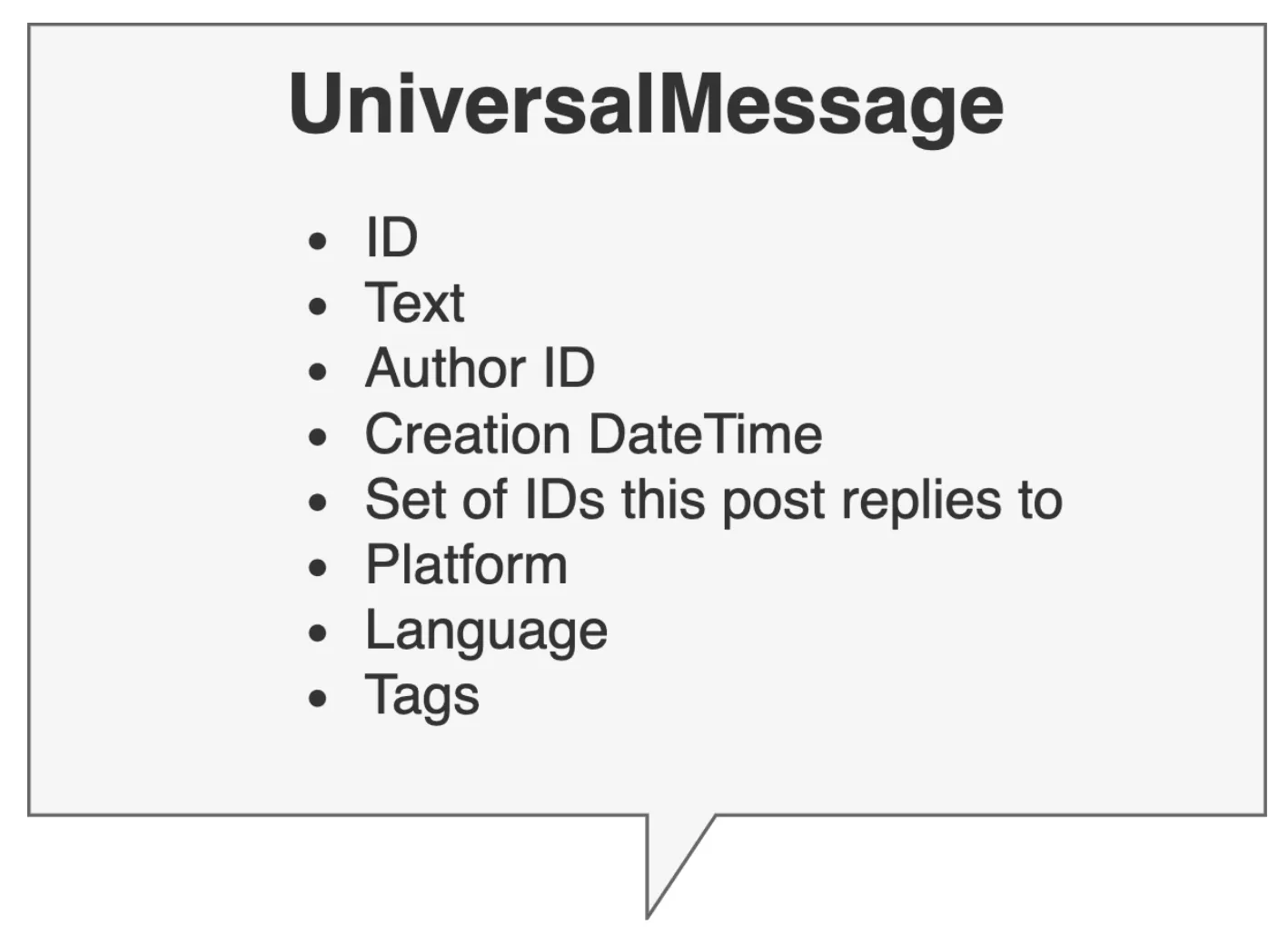

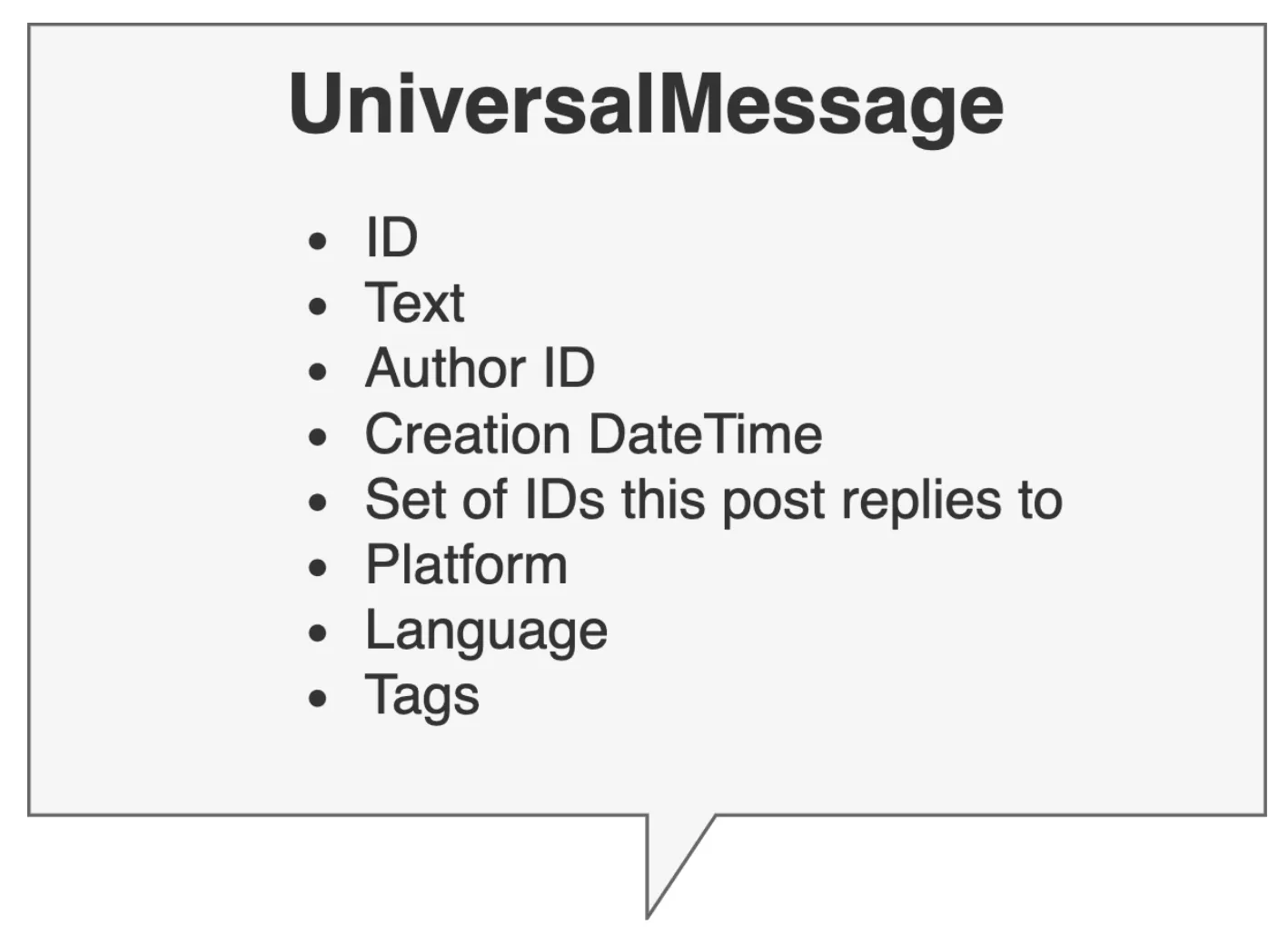

Bachelor’s thesis introducing PyConversations, an open-source library that normalizes over 308 million posts from Twitter, Reddit, Facebook, and 4chan into a unified data model for cross-platform social media research.

Research project that investigated how different NLP models perform on social media data, finding that domain-specific approaches often outperform large pre-trained models. Includes PyConversations, a Python module for analyzing conversations across social media platforms.

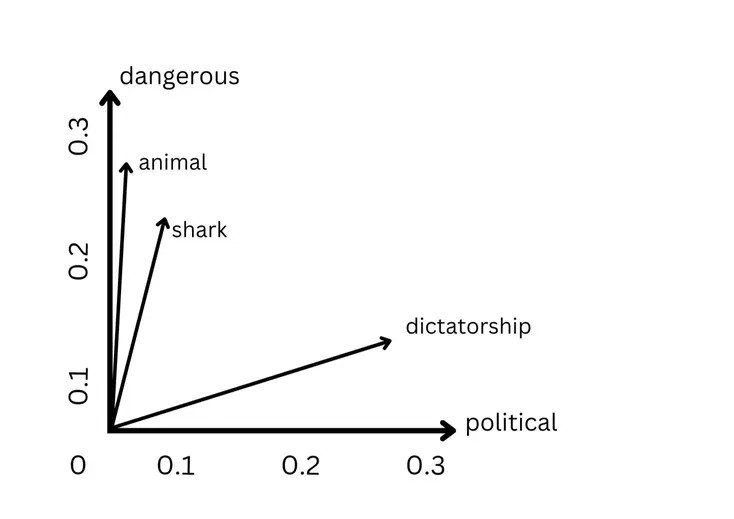

Learn how computers understand words through mathematical vectors, from simple counting methods to contextual embeddings that power modern NLP.

A freshman-year automation tool that treats university scheduling as a constraint satisfaction problem. It scrapes Drexel’s Term Master Schedule and uses a recursive backtracking algorithm to generate every valid schedule that satisfies user-defined hard and soft constraints. Used throughout undergrad, 2016-2020.
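To make the backtracking idea concrete, here is a small sketch, not the original tool's code. The data model (each course is a list of sections, each section a list of `(day, start, end)` meetings) and the `early_penalty` soft constraint are hypothetical choices for illustration.

```python
def conflicts(a, b):
    """True if any meeting in section a overlaps any meeting in section b."""
    return any(d1 == d2 and s1 < e2 and s2 < e1
               for d1, s1, e1 in a
               for d2, s2, e2 in b)

def all_schedules(courses, chosen=None):
    """Yield every pick of one section per course with no time conflicts."""
    if chosen is None:
        chosen = []
    if len(chosen) == len(courses):
        yield list(chosen)
        return
    for section in courses[len(chosen)]:
        # Hard constraint: the new section must not clash with earlier picks.
        if all(not conflicts(section, other) for other in chosen):
            chosen.append(section)
            yield from all_schedules(courses, chosen)
            chosen.pop()  # backtrack and try the next section

def early_penalty(schedule, preferred_start=10):
    """Soft constraint (assumed): count meetings that start before preferred_start."""
    return sum(start < preferred_start
               for section in schedule
               for _, start, _ in section)

# Usage: two courses with two sections each (made-up times, 24-hour clock).
courses = [
    [[("M", 8, 9)], [("T", 10, 11)]],
    [[("M", 8, 9)], [("W", 13, 14)]],
]
schedules = list(all_schedules(courses))
best = min(schedules, key=early_penalty)
```

Hard constraints prune the search as it runs, while soft constraints only rank the schedules that survive.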

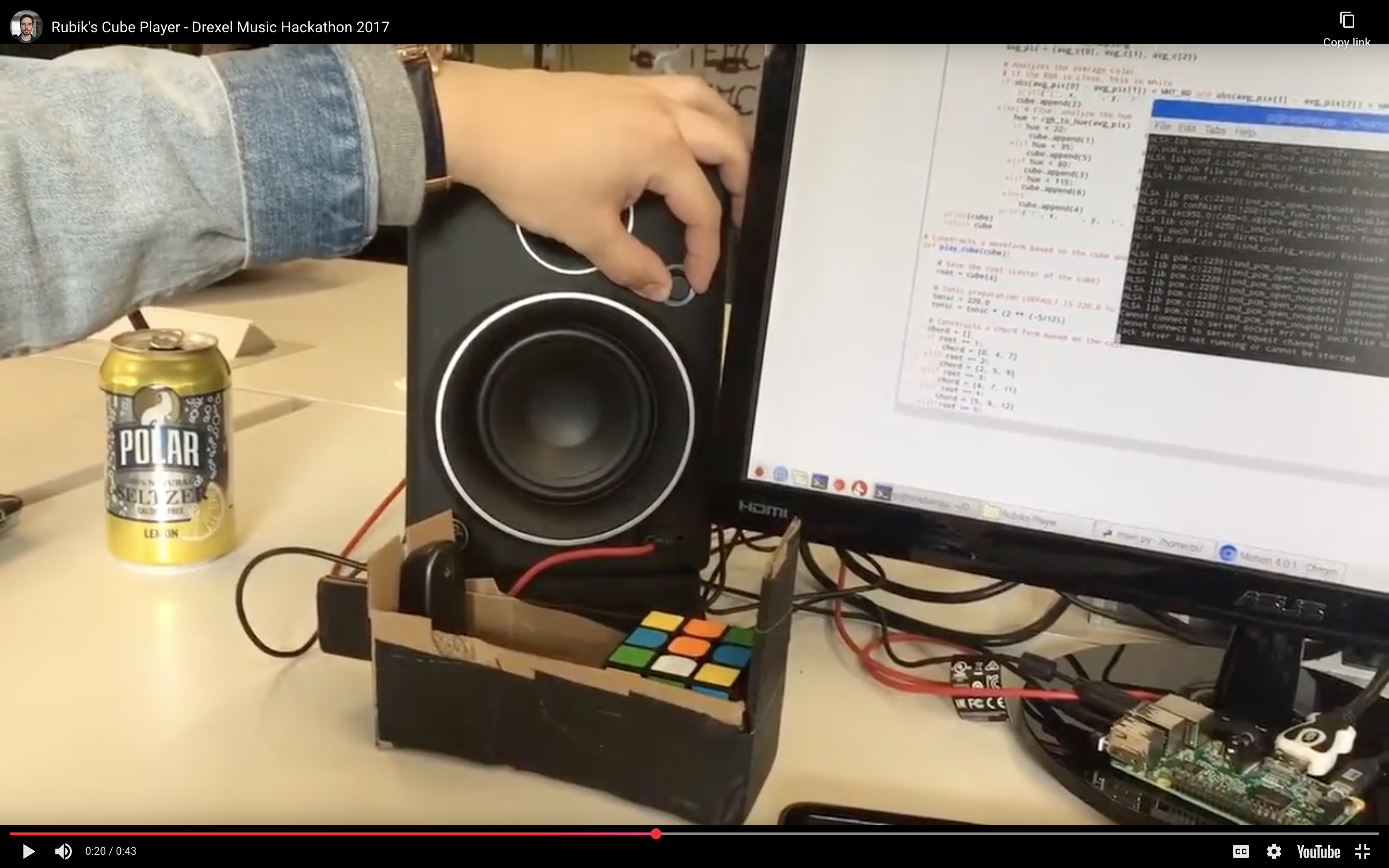

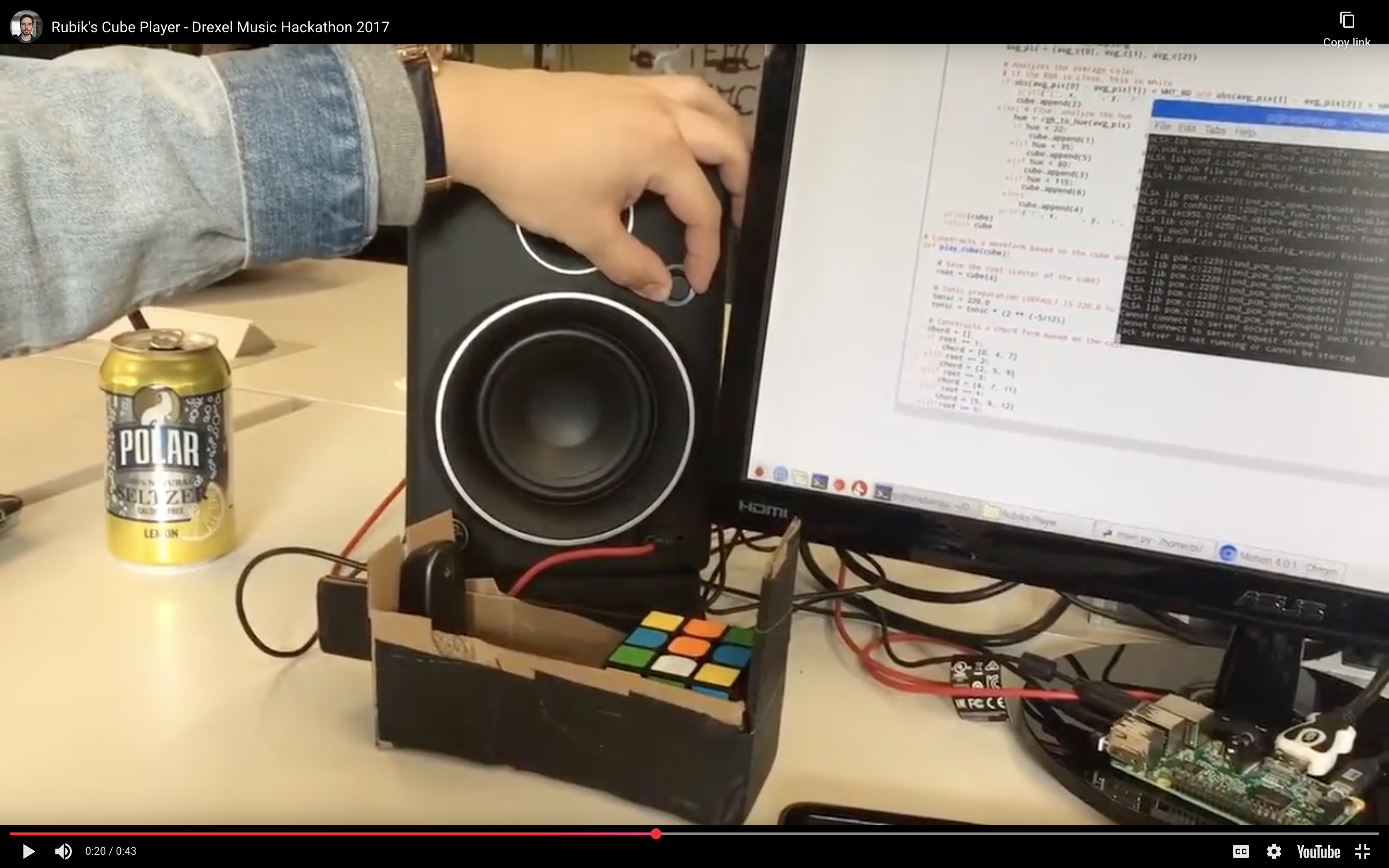

A project I built with Emmanuel Espino and Jason Zogheb at the 2017 Drexel Music Hackathon. It uses computer vision to read a Rubik’s cube and generates music based on how solved each face is.

A 24-hour hackathon project exploring algorithmic musicology: a webcam scans a Rubik’s cube and the program generates audio from its color configuration. It implements waveform synthesis from first principles, with byte-by-byte PCM generation and equal temperament frequency calculations, to map visual entropy to harmonic resolution.
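The two audio building blocks named above can be sketched in a few lines. This is an illustrative example, not the hackathon code: the function names, the A4 = 440 Hz reference, and the 16-bit little-endian sample format are assumptions, and the mapping from cube colors to notes is not shown.

```python
import math
import struct

def note_frequency(semitones_from_a4, a4=440.0):
    """Equal temperament: each semitone multiplies frequency by 2 ** (1 / 12)."""
    return a4 * 2 ** (semitones_from_a4 / 12)

def sine_pcm(freq, seconds, rate=44100, amplitude=0.5):
    """Build signed 16-bit little-endian mono PCM for a sine tone, sample by sample."""
    out = bytearray()
    for i in range(int(seconds * rate)):
        sample = amplitude * math.sin(2 * math.pi * freq * i / rate)
        out += struct.pack("<h", int(sample * 32767))
    return bytes(out)

# Usage: one second of the A one octave above A4, at an 8 kHz sample rate.
octave_up = note_frequency(12)
pcm = sine_pcm(octave_up, seconds=1, rate=8000)
```

The resulting bytes can be written to a WAV file with the standard library `wave` module by setting one channel, a sample width of 2, and the same frame rate.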