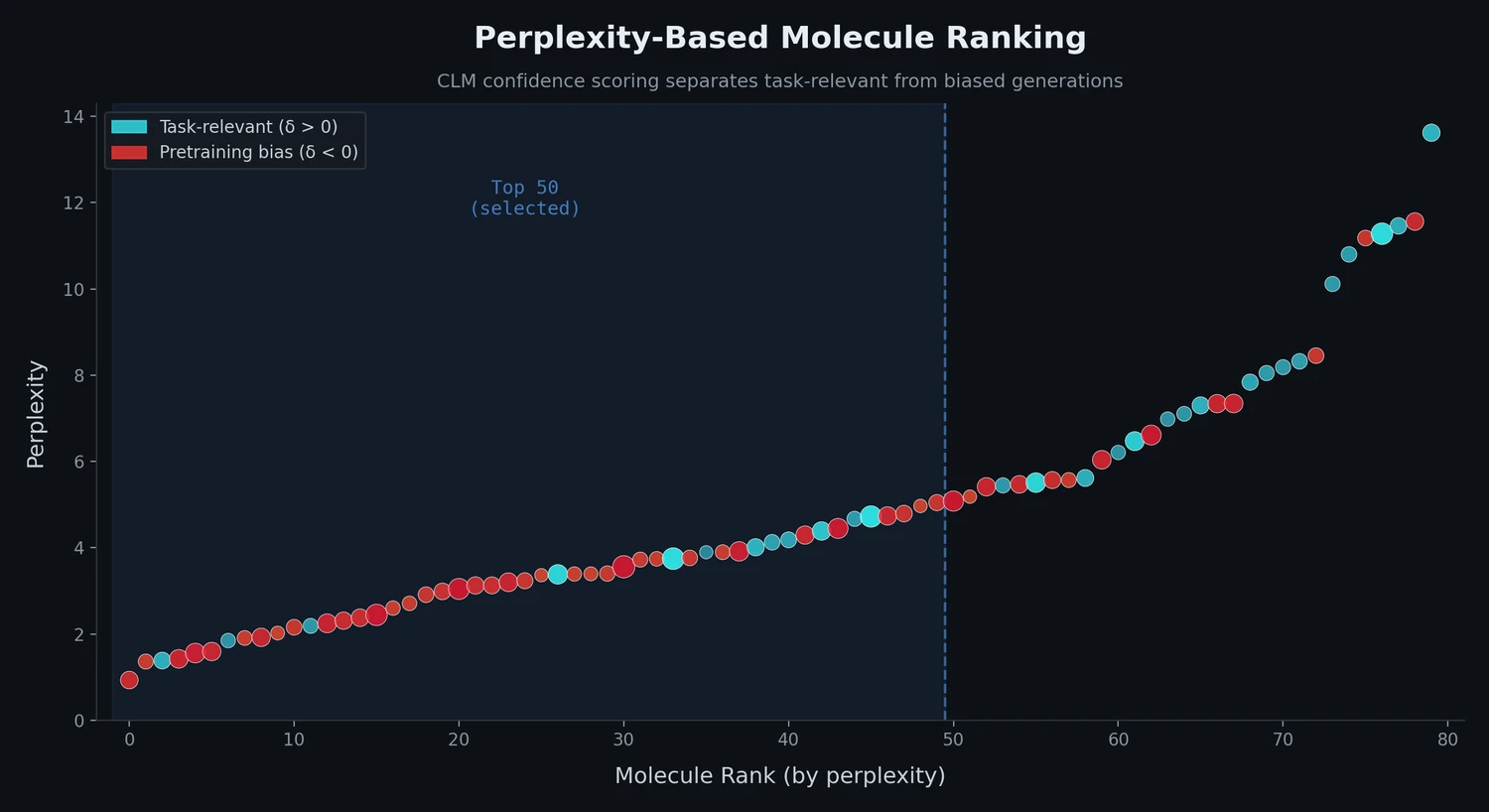

Perplexity for Molecule Ranking and CLM Bias Detection

This study applies perplexity, a model-intrinsic metric from natural language processing (NLP), to rank de novo molecular designs generated by SMILES-based chemical language models (CLMs). It also introduces a delta score to detect pretraining bias in transfer-learned CLMs.
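As a rough illustration of the ranking idea, perplexity can be computed from the per-token probabilities a language model assigns to a sequence, using the standard definition: the exponential of the negative mean log-probability. The sketch below is not the study's implementation. The function names, the example SMILES strings, and the probability values are all hypothetical, and it assumes that a lower perplexity (the model is more confident in the string) ranks a molecule higher.

```python
import math


def perplexity(token_probs):
    """Perplexity of one sequence from its per-token probabilities.

    Standard definition: exp(-(1/N) * sum(ln p_i)) over the N tokens.
    """
    if not token_probs:
        raise ValueError("need at least one token probability")
    mean_log_prob = sum(math.log(p) for p in token_probs) / len(token_probs)
    return math.exp(-mean_log_prob)


def rank_by_perplexity(scored):
    """Return (smiles, perplexity) pairs sorted from lowest to highest perplexity.

    `scored` is an iterable of (smiles, token_probs) pairs. Treating low
    perplexity as "better" is an assumption of this sketch.
    """
    pairs = [(smiles, perplexity(probs)) for smiles, probs in scored]
    return sorted(pairs, key=lambda pair: pair[1])


# Hypothetical per-token probabilities; a real CLM would supply these.
candidates = [
    ("c1ccccc1", [0.5, 0.4, 0.6, 0.5, 0.5, 0.4, 0.6, 0.5]),
    ("CCO", [0.9, 0.8, 0.9]),
]

ranking = rank_by_perplexity(candidates)
for smiles, ppl in ranking:
    print(f"{smiles}\t{ppl:.3f}")
```

Because the log-probabilities are averaged over the token count, strings of different lengths are scored on a comparable per-token scale, which is what makes the metric usable for ranking a mixed set of generated molecules.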