SMILES2Vec: Interpretable Chemical Property Prediction

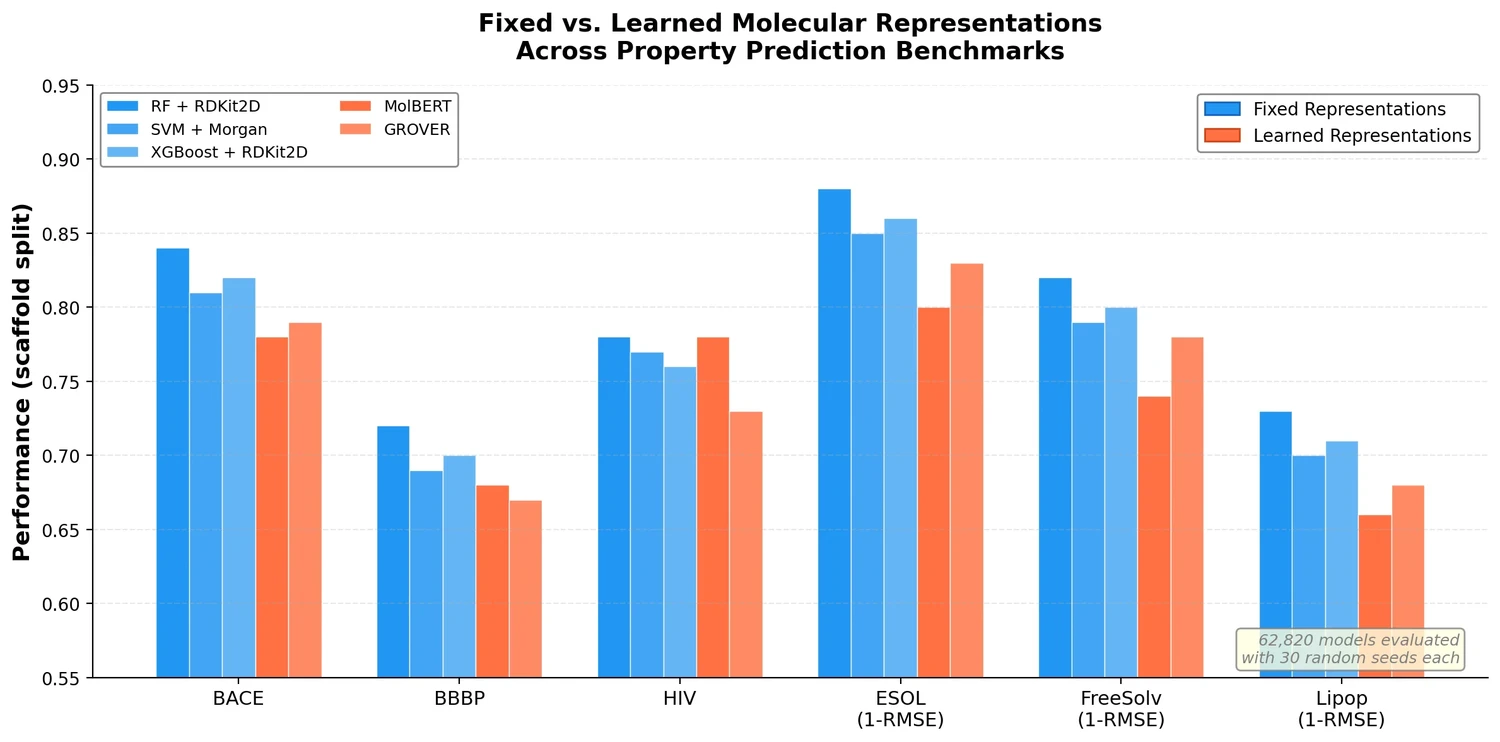

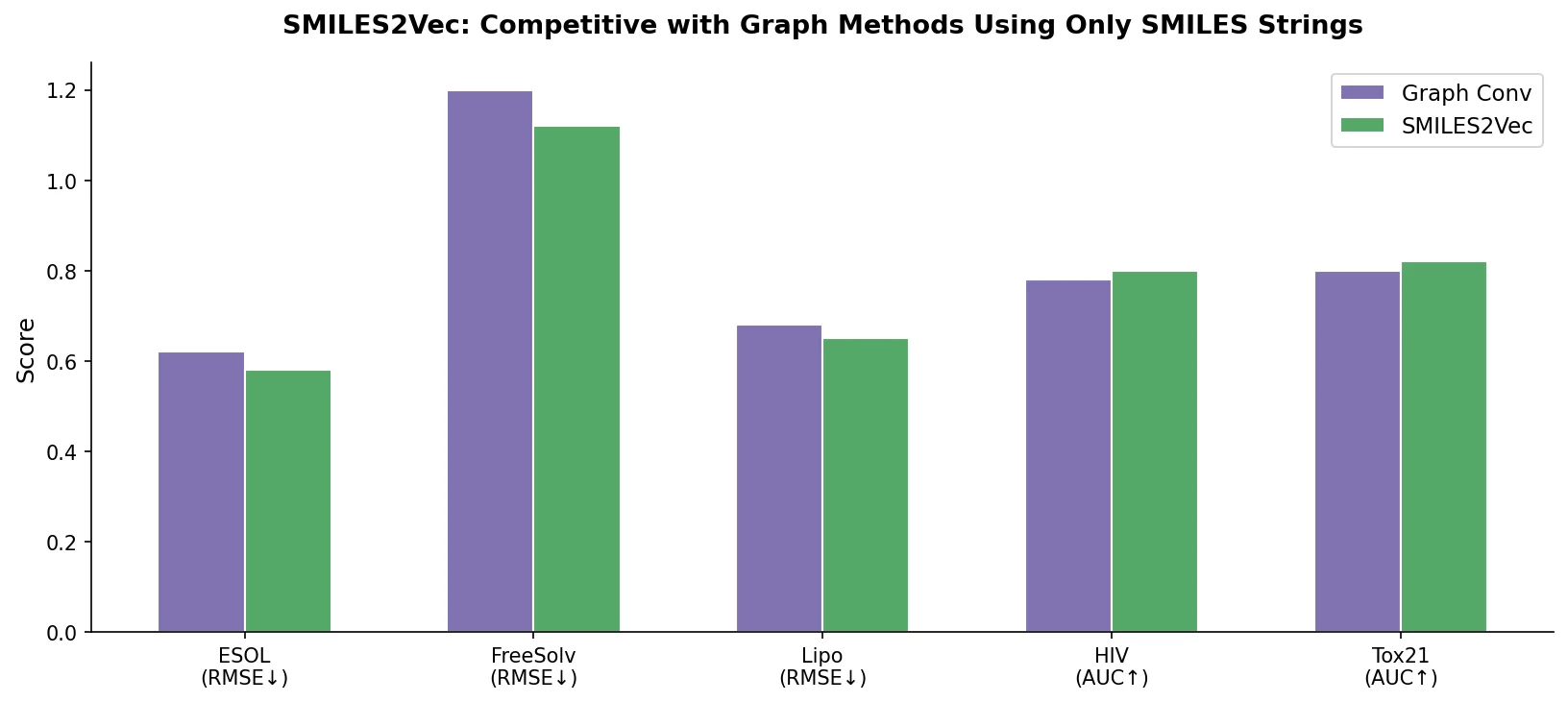

SMILES2Vec is a deep recurrent neural network (RNN) that learns chemical features directly from SMILES strings, using a CNN-GRU architecture whose design was selected by Bayesian optimization. It matches graph convolution baselines on toxicity and activity prediction, and its explanation mask identifies chemically meaningful functional groups with 88% accuracy.
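
To make "learns directly from SMILES strings" concrete, the following is a minimal sketch of how a SMILES string might be turned into a fixed-length integer sequence before it reaches an embedding layer and the CNN-GRU stack. It is not the authors' preprocessing code: the helper names `build_vocab` and `encode`, the per-character tokenization, the padding id `0`, and `max_len=10` are all assumptions chosen for illustration.

```python
# Hypothetical sketch: character-level encoding of SMILES strings.
# Names, padding id, and max_len are illustrative assumptions, not the paper's settings.

def build_vocab(smiles_list):
    """Map each distinct character to a positive integer; 0 is reserved for padding."""
    chars = sorted({ch for s in smiles_list for ch in s})
    return {ch: i + 1 for i, ch in enumerate(chars)}


def encode(smiles, vocab, max_len):
    """Convert a SMILES string to a fixed-length list of integer ids.

    Strings longer than max_len are truncated; shorter ones are right-padded with 0.
    Characters missing from the vocabulary raise KeyError.
    """
    ids = [vocab[ch] for ch in smiles[:max_len]]
    return ids + [0] * (max_len - len(ids))


smiles_list = ["CCO", "c1ccccc1", "CC(=O)O"]  # ethanol, benzene, acetic acid
vocab = build_vocab(smiles_list)
encoded = [encode(s, vocab, max_len=10) for s in smiles_list]

for s, ids in zip(smiles_list, encoded):
    print(s, ids)
```

Splitting on single characters is a simplification: two-letter atoms such as `Cl` or `Br` would be broken into separate tokens, so a real pipeline may prefer a tokenizer that is aware of SMILES grammar. The resulting integer sequences are the kind of input a learned embedding layer would consume ahead of the convolutional and recurrent layers.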