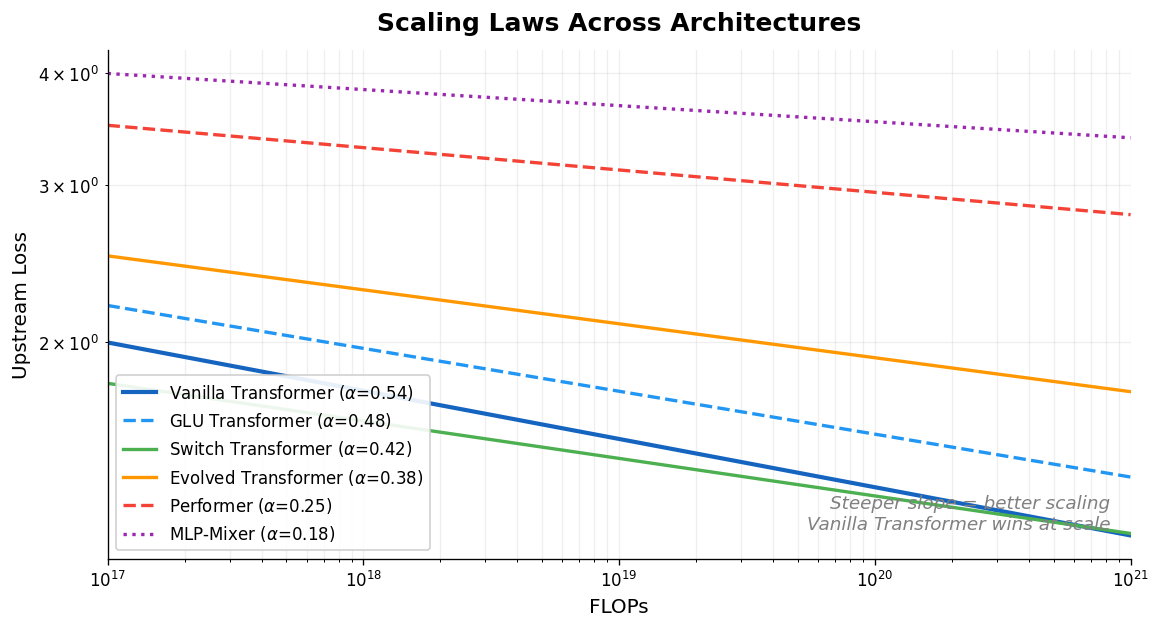

Scaling Laws vs Model Architectures: Inductive Bias

Tay et al. systematically compare scaling laws across ten diverse architectures (Transformers, Switch Transformers, Performers, MLP-Mixers, and others), finding that the vanilla Transformer has the best scaling coefficient and that the best-performing architecture changes across compute regions.
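A higher scaling coefficient and a changing leader can coexist. The sketch below shows how, assuming the common power-law form loss ≈ a·C^(−b), where C is compute and b is the scaling coefficient. Tay et al.'s exact fitting procedure may differ. The two models and their coefficients are made-up, hypothetical values, not results from the paper. The model with the steeper slope starts out worse, because its prefactor a is larger. It overtakes the flatter model only after the crossover compute C* = (a₁/a₂)^(1/(b₁−b₂)).

```python
import math


def fit_power_law(compute, loss):
    """Fit loss ~= a * compute**(-b) by least squares in log-log space; return (a, b)."""
    xs = [math.log(c) for c in compute]
    ys = [math.log(l) for l in loss]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    slope = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    intercept = my - slope * mx
    # log(loss) = log(a) - b * log(compute), so the fitted slope is -b.
    return math.exp(intercept), -slope


def crossover_compute(a1, b1, a2, b2):
    """Compute C where a1 * C**-b1 == a2 * C**-b2 (requires b1 != b2)."""
    return (a1 / a2) ** (1.0 / (b1 - b2))


def best_model(fits, c):
    """Return the name of the model with the lowest predicted loss at compute c."""
    return min(fits, key=lambda name: fits[name][0] * c ** (-fits[name][1]))


# Hypothetical, synthetic curves (NOT numbers from Tay et al.):
# "steep" has the better scaling coefficient b but a worse prefactor a.
compute = [10.0 ** k for k in range(3, 9)]
true_params = {"steep": (10.0, 0.10), "flat": (5.0, 0.05)}
fits = {
    name: fit_power_law(compute, [a * c ** (-b) for c in compute])
    for name, (a, b) in true_params.items()
}

if __name__ == "__main__":
    for name, (a, b) in fits.items():
        print(f"{name}: a={a:.3f}, b={b:.3f}")
    c_star = crossover_compute(*fits["steep"], *fits["flat"])
    print(f"crossover compute ~ {c_star:.3e}")
    print("best at 1e3:", best_model(fits, 1e3))
    print("best at 1e9:", best_model(fits, 1e9))
```

With these toy numbers, "flat" wins at low compute and "steep" wins once compute passes roughly 2^20. Ranking architectures by performance at a single compute budget can therefore disagree with ranking them by scaling coefficient.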