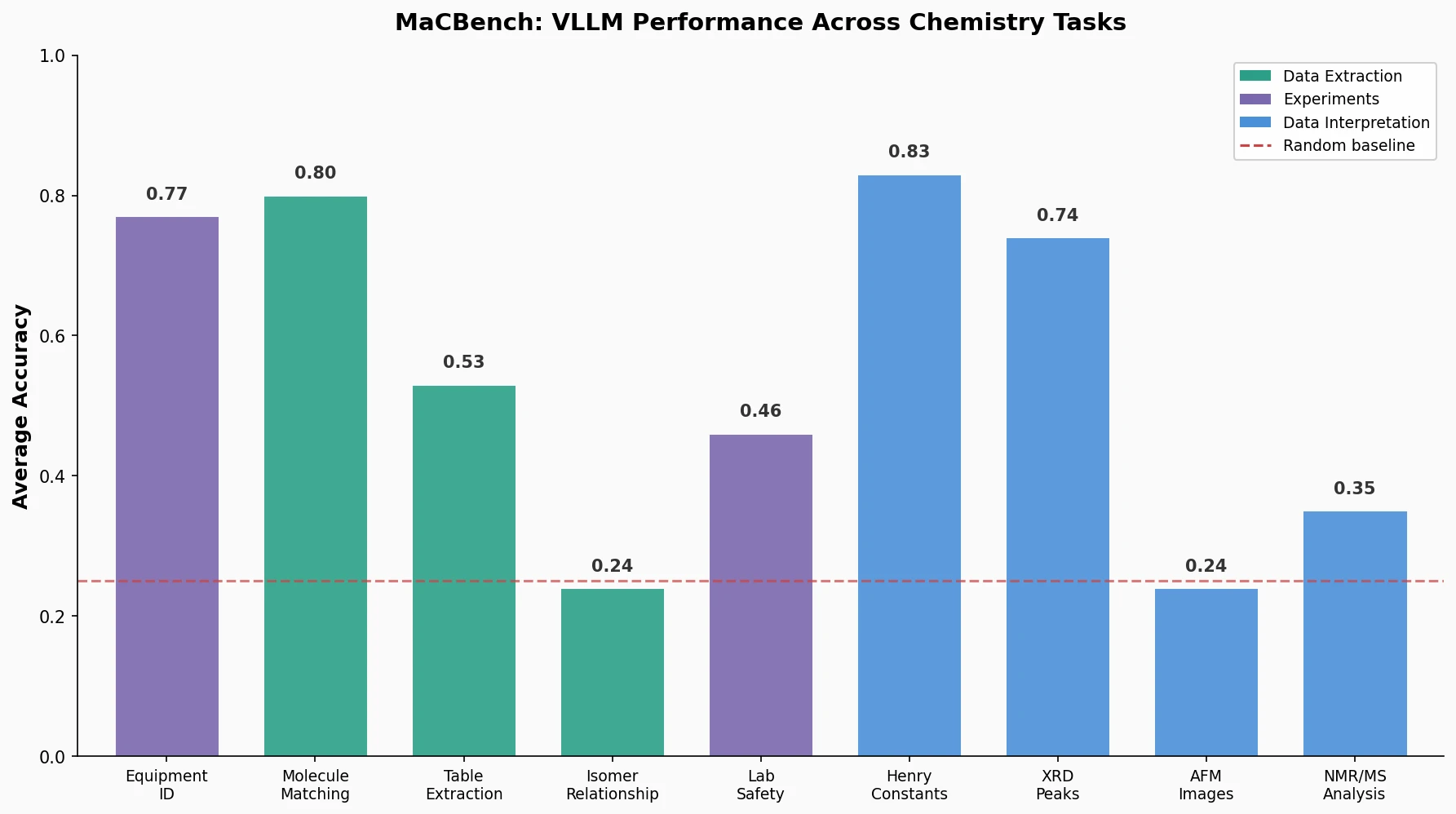

MaCBench: Multimodal Chemistry and Materials Benchmark

MaCBench evaluates frontier vision-language models on 1,153 chemistry and materials science tasks spanning data extraction, experimental execution, and data interpretation, and uncovers fundamental limitations in spatial reasoning and cross-modal integration.