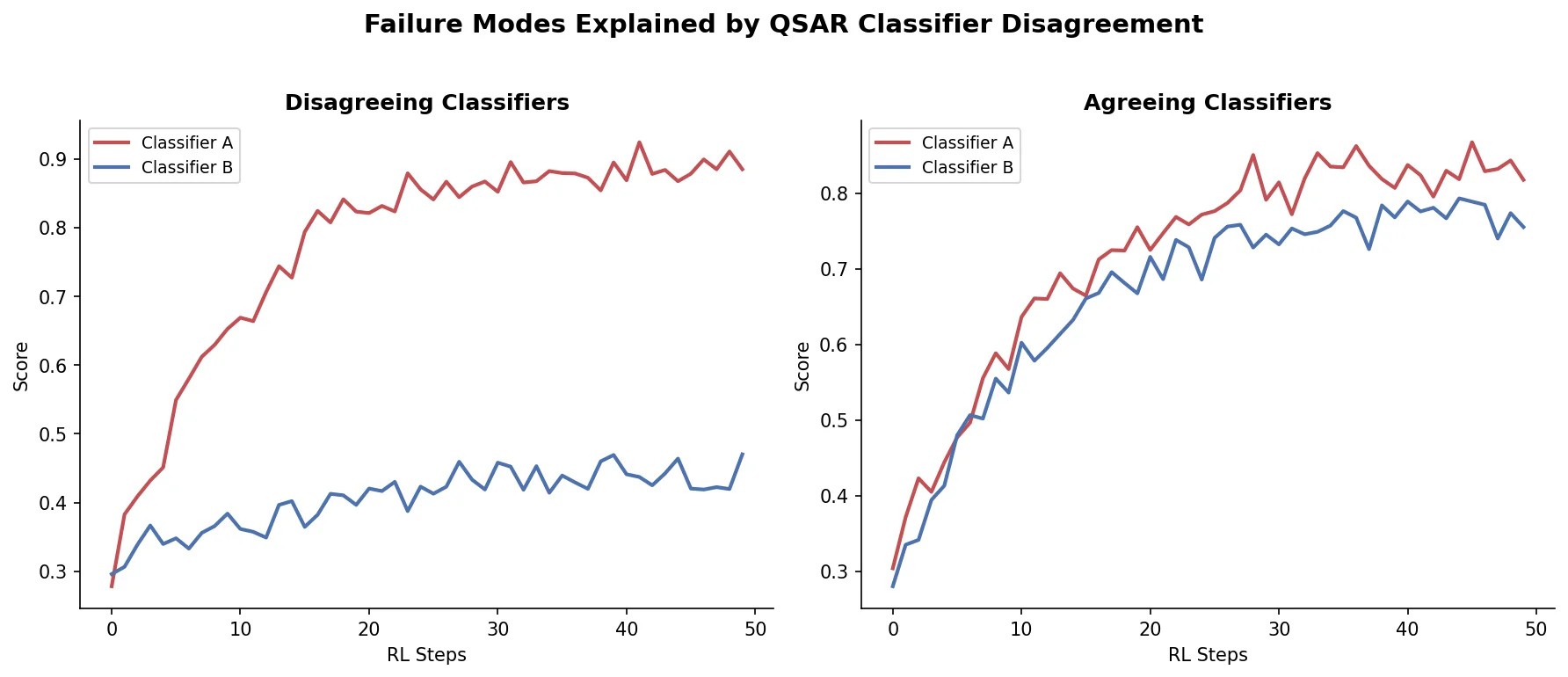

Avoiding Failure Modes in Goal-Directed Generation

This paper shows that the divergence between optimization and control scores during goal-directed molecular generation is explained by pre-existing disagreement among QSAR models on the training distribution, rather than by the generation algorithm exploiting model-specific biases.
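One way to make the claim above concrete is to compare how far two score models disagree on molecules from the training distribution with how far they disagree on generated molecules. The sketch below is only an illustration under stated assumptions: the function name `score_disagreement`, the choice of mean absolute gap as the disagreement measure, and all score values are hypothetical and are not taken from the paper.

```python
from statistics import mean


def score_disagreement(opt_scores, ctrl_scores):
    """Mean absolute gap between two models' scores on the same molecules.

    Hypothetical helper: the paper may quantify disagreement differently.
    """
    if len(opt_scores) != len(ctrl_scores) or not opt_scores:
        raise ValueError("score lists must be non-empty and of equal length")
    return mean(abs(o - c) for o, c in zip(opt_scores, ctrl_scores))


# Made-up scores for illustration only (not data from the paper).
# Each pair of lists scores the same molecules with two QSAR models:
# the optimization model (used to guide generation) and a control model.
train_opt = [0.9, 0.2, 0.7, 0.4]
train_ctrl = [0.6, 0.3, 0.5, 0.4]
gen_opt = [0.95, 0.9, 0.85, 0.9]
gen_ctrl = [0.7, 0.6, 0.65, 0.6]

# Gap on the training distribution, before any generation happens.
baseline_gap = score_disagreement(train_opt, train_ctrl)

# Gap on generated molecules.
generated_gap = score_disagreement(gen_opt, gen_ctrl)

print(f"baseline gap:  {baseline_gap:.4f}")
print(f"generated gap: {generated_gap:.4f}")
```

If the gap on generated molecules is of similar size to the gap already present on the training distribution, that is consistent with the divergence reflecting model disagreement rather than exploitation by the generator. A single summary number like this is a coarse check; a real analysis would look at the full score distributions.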