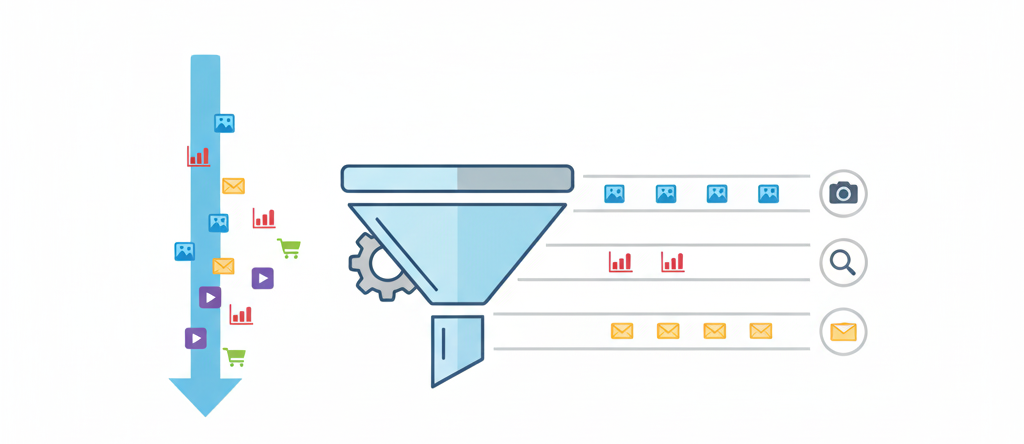

The Evolution of Page Stream Segmentation: Rules to LLMs

We trace the history of Page Stream Segmentation (PSS) through three eras (Heuristic, Encoder, and Decoder) and explain how privacy-preserving, localized LLMs enable true semantic processing.

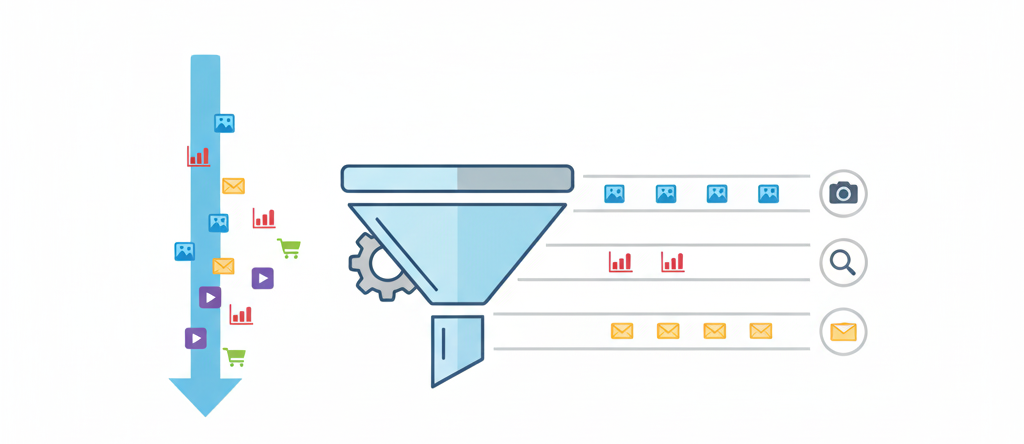

This paper introduces NOMINATE, a probabilistic spatial model that recovers metric coordinates for legislators and roll calls from nominal voting data, demonstrating that a single liberal-conservative dimension explains the vast majority of Congressional voting behavior.

Sussman and Wisdom’s 1992 study used the Supercomputer Toolkit and symplectic mapping to integrate the entire Solar System for 100 million years, confirming chaotic behavior with an exponential divergence timescale of ~4 million years and demonstrating that long-term planetary motion is fundamentally unpredictable.

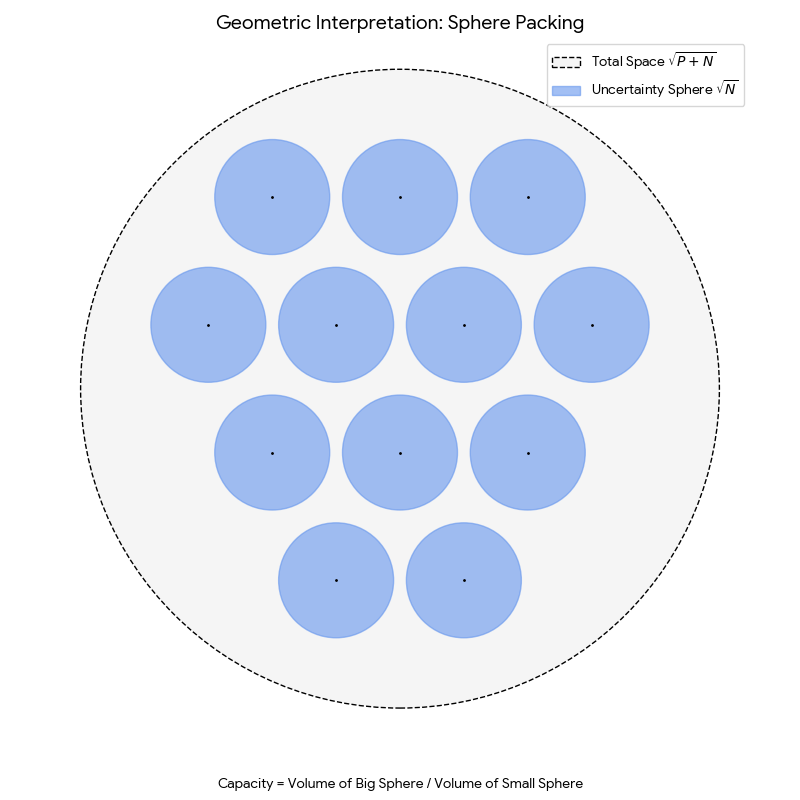

Geoffrey Hinton’s 1984 technical report formally derives the efficiency of distributed representations (coarse coding) and demonstrates their properties of automatic generalization, content-addressability, and robustness to damage.

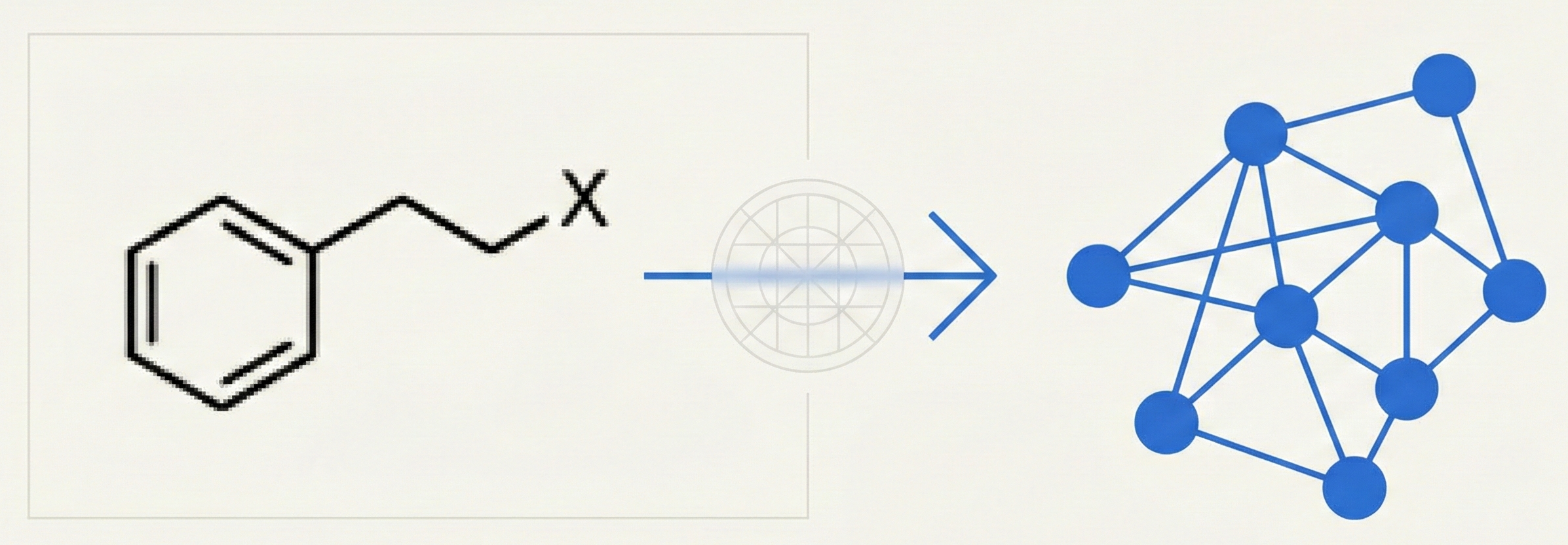

This 1990 paper presents an early OCR pipeline for converting hand-drawn or printed chemical structures into connectivity tables. It introduces novel sweeping algorithms for graph perception and a matrix-based feature extraction method for character recognition.

Proposes the Hyperbolic Algorithm for Euclidean field theory simulations. By adding a second-order fictitious time derivative to the Langevin equation, the method reduces systematic errors from O(ε) down to O(ε²).

This paper established the three-domain classification system (Bacteria, Archaea, Eucarya) based on molecular evidence from ribosomal RNA sequences, arguing that the prokaryote-eukaryote dichotomy obscures the deep evolutionary divergence of Archaea from Bacteria.

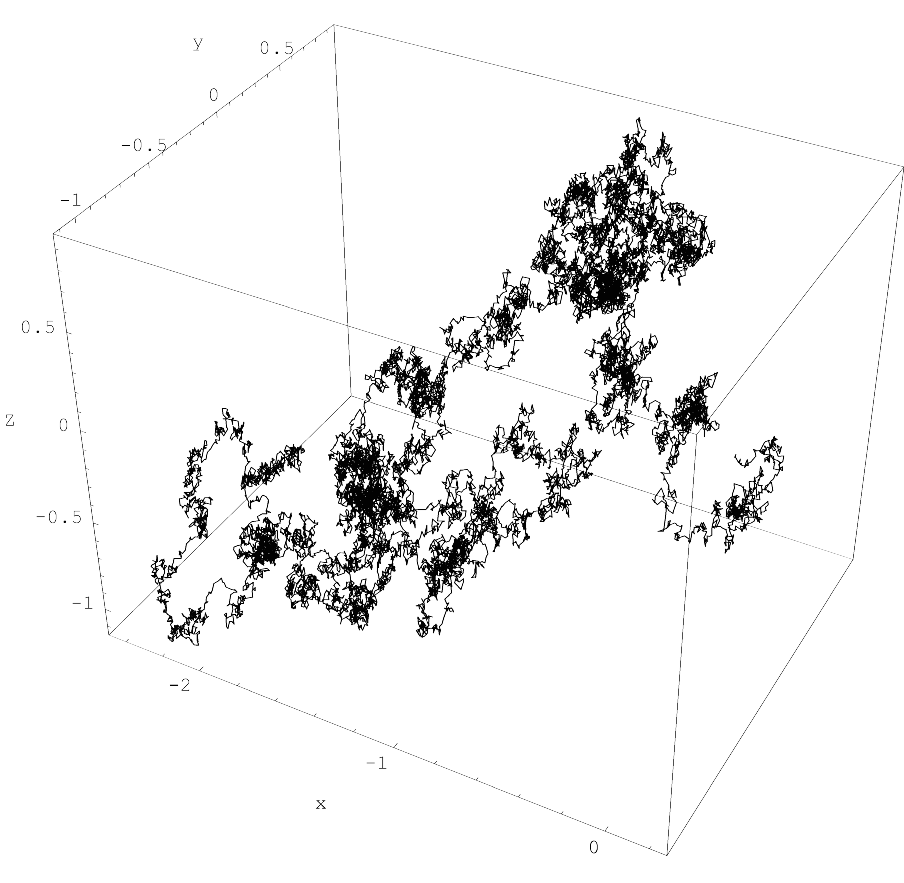

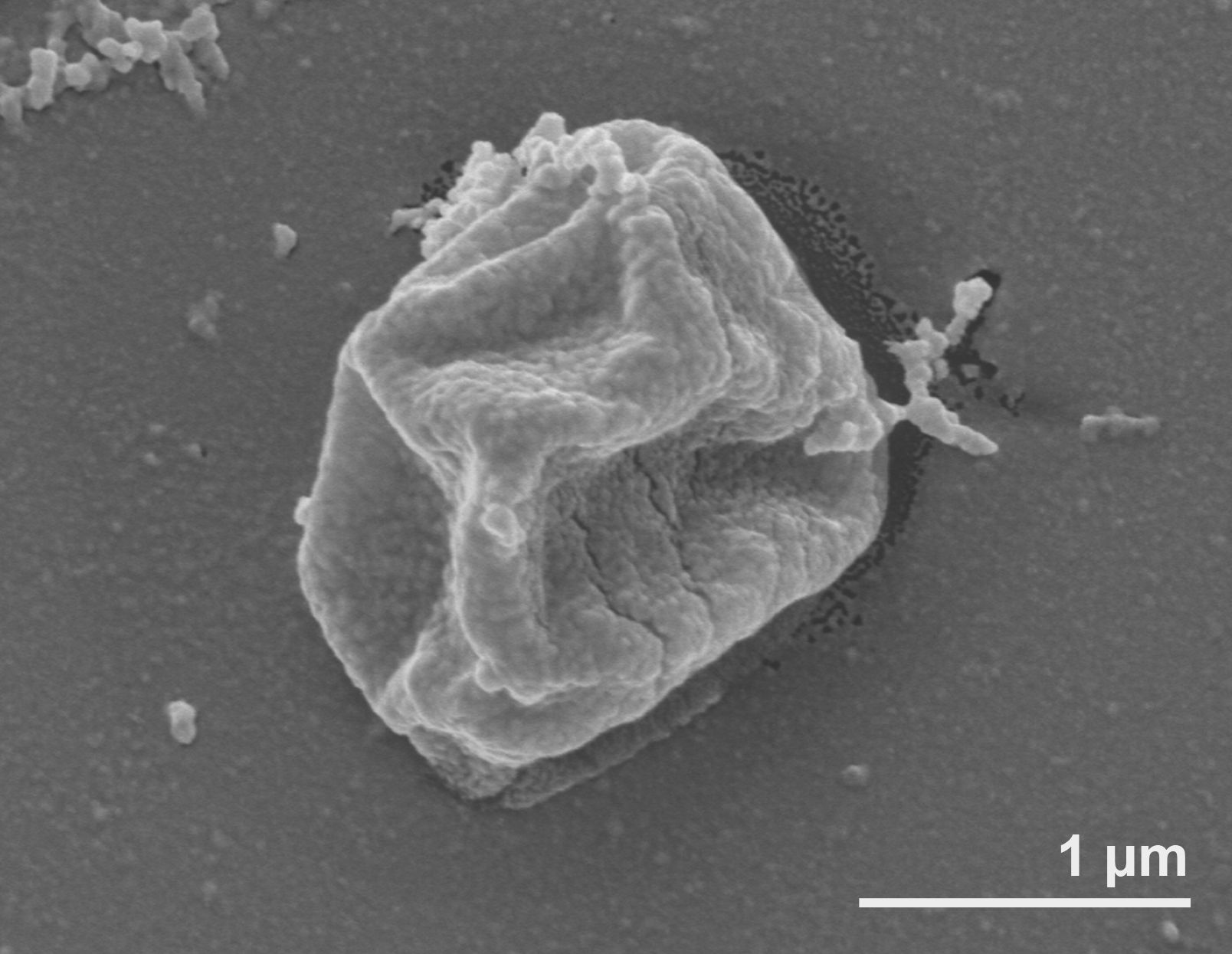

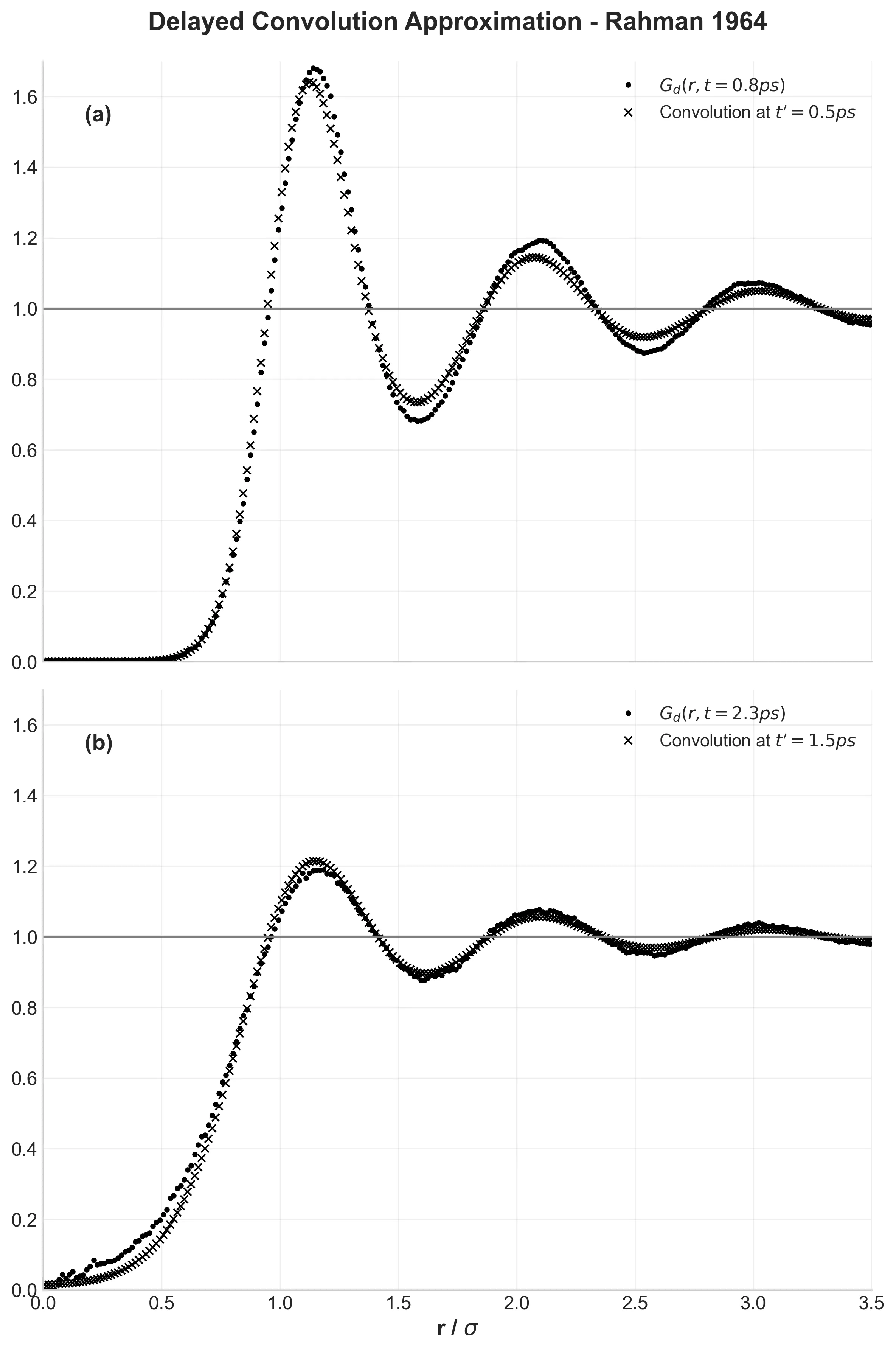

This work validated classical Molecular Dynamics for simulating liquids, revealing the ‘cage effect’ in velocity autocorrelation and establishing predictor-corrector integration algorithms for N-body problems.

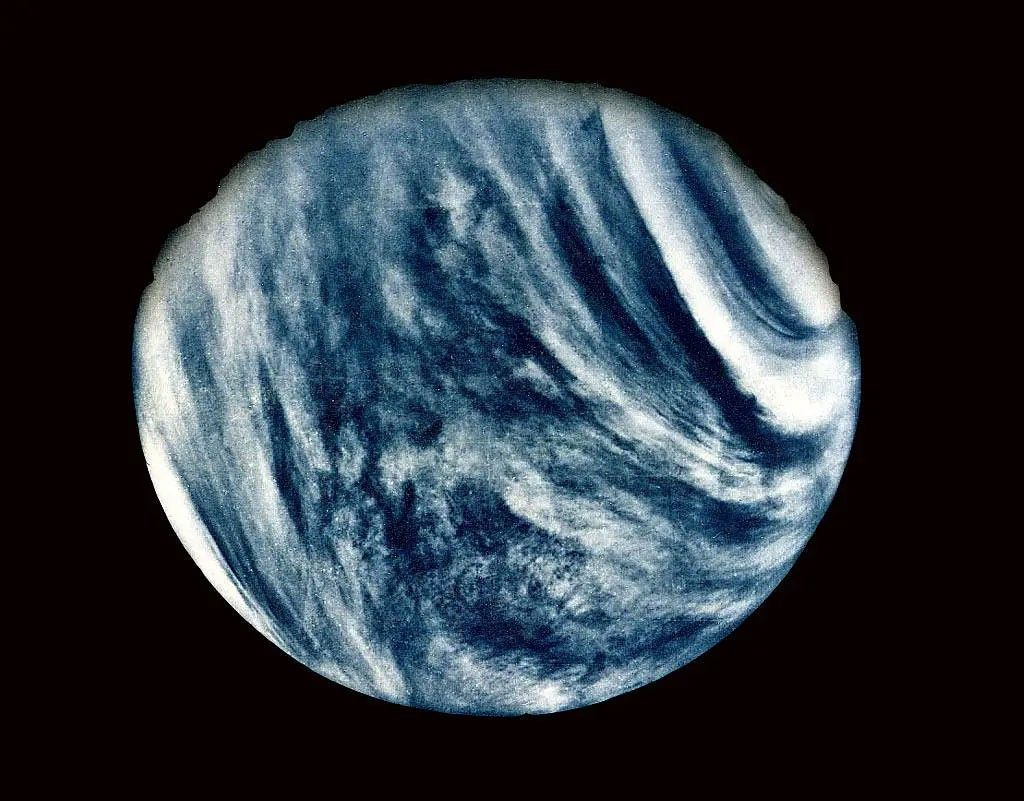

A deep dive into the physical limits of life on Venus, reviewing Charles Cockell’s foundational 1999 analysis while connecting it to modern developments such as the 2020 phosphine detection and the upcoming DAVINCI+ mission.

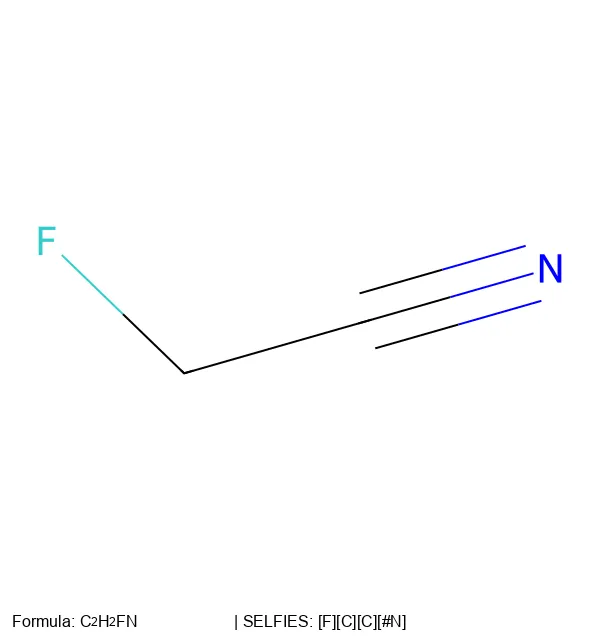

The 2020 paper that introduced SELFIES: Mario Krenn and colleagues created a molecular representation that solves SMILES validity problems. It guarantees every generated string corresponds to a valid chemical structure.

David Weininger introduced SMILES notation in 1988, establishing encoding rules for representing chemical structures as compact, human-readable strings.

Shannon’s foundational 1949 paper establishes the mathematical framework for modern information theory. It defines channel capacity as the fundamental limit for reliable communication over noisy channels and introduces the Nyquist-Shannon sampling theorem that underpins digital signal processing.