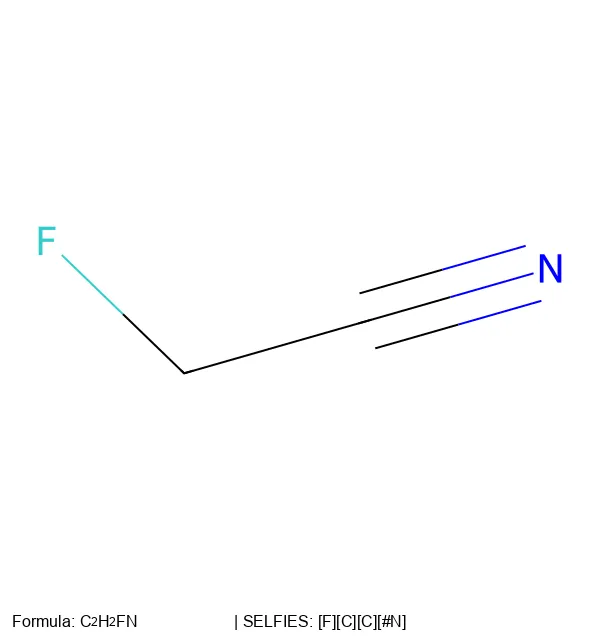

SELFIES and the Future of Molecular String Representations

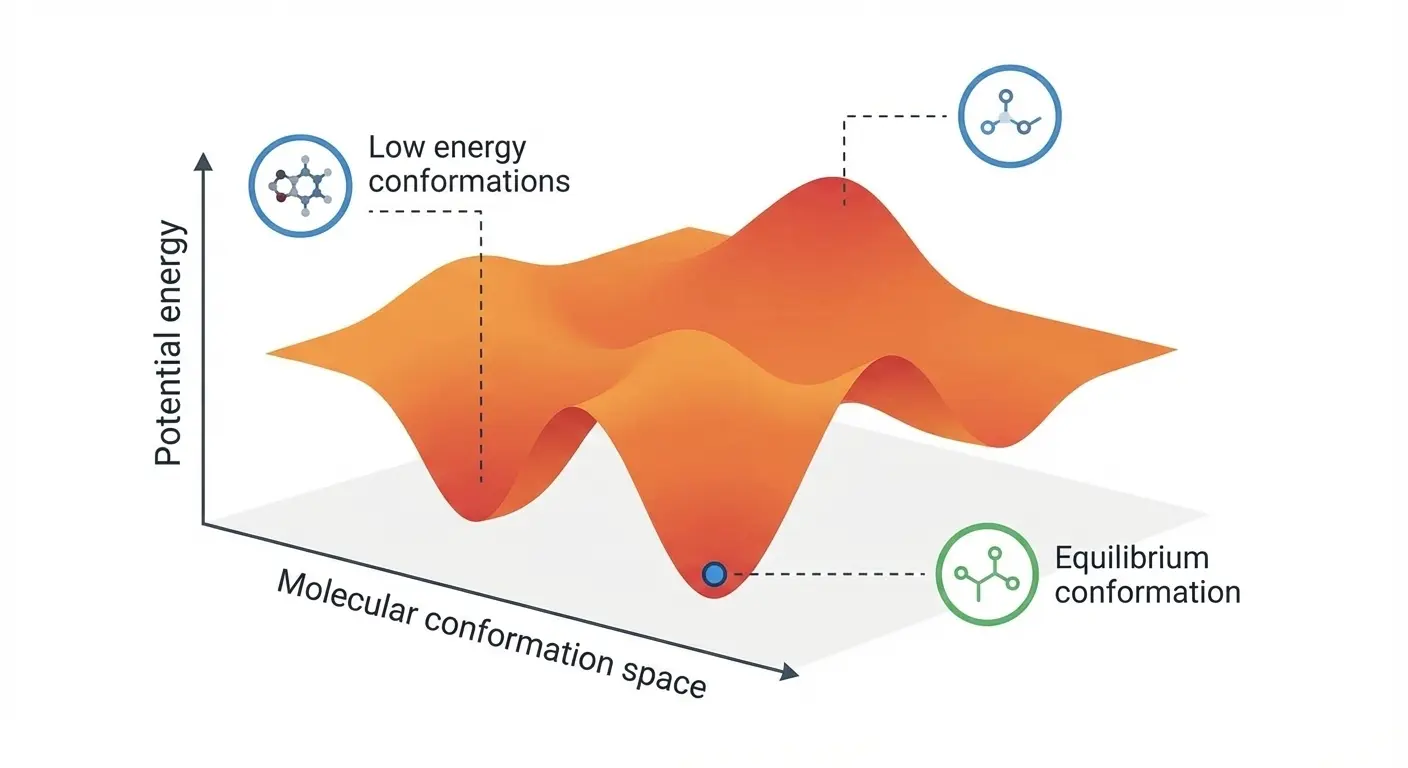

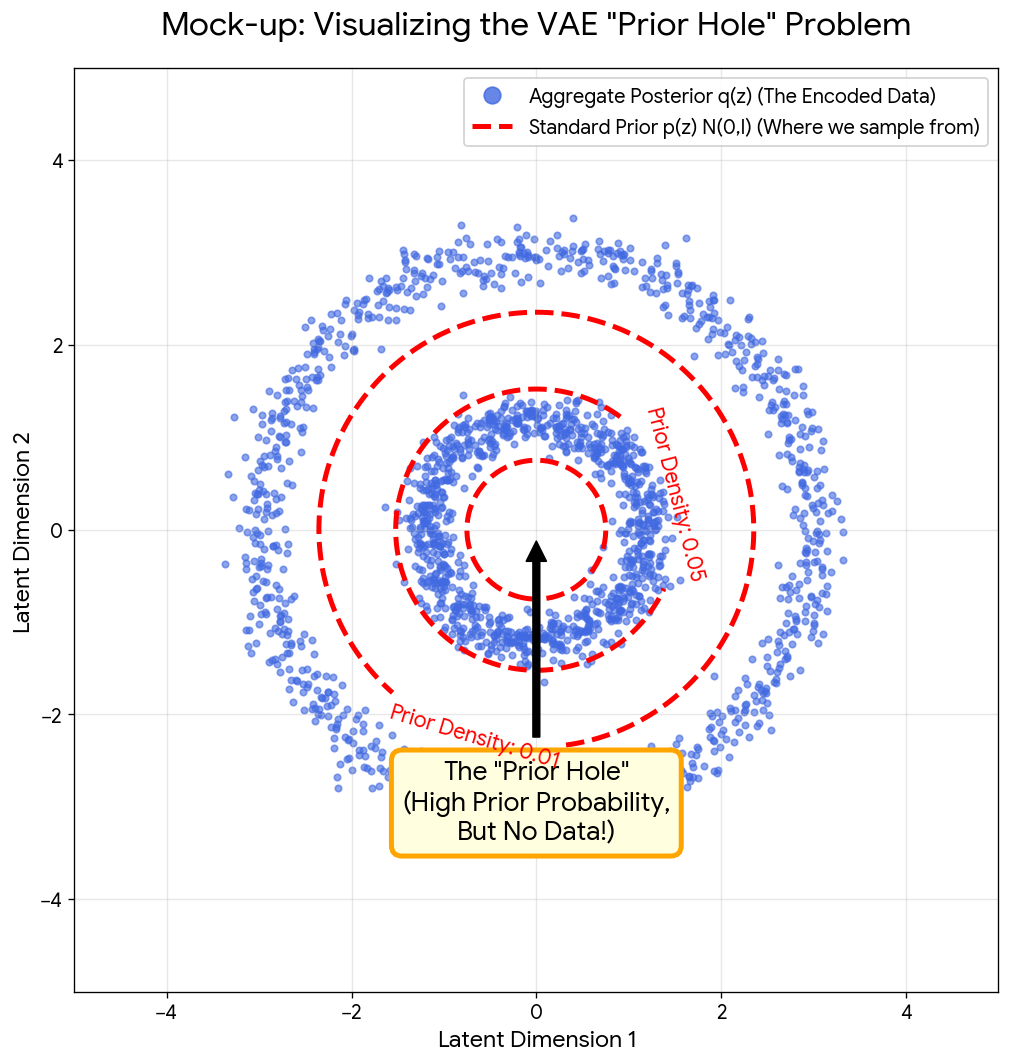

This 2022 perspective paper reviews 250 years of chemical notation and proposes 16 concrete research projects for extending SELFIES beyond traditional organic chemistry to polymers, crystals, and reactions.