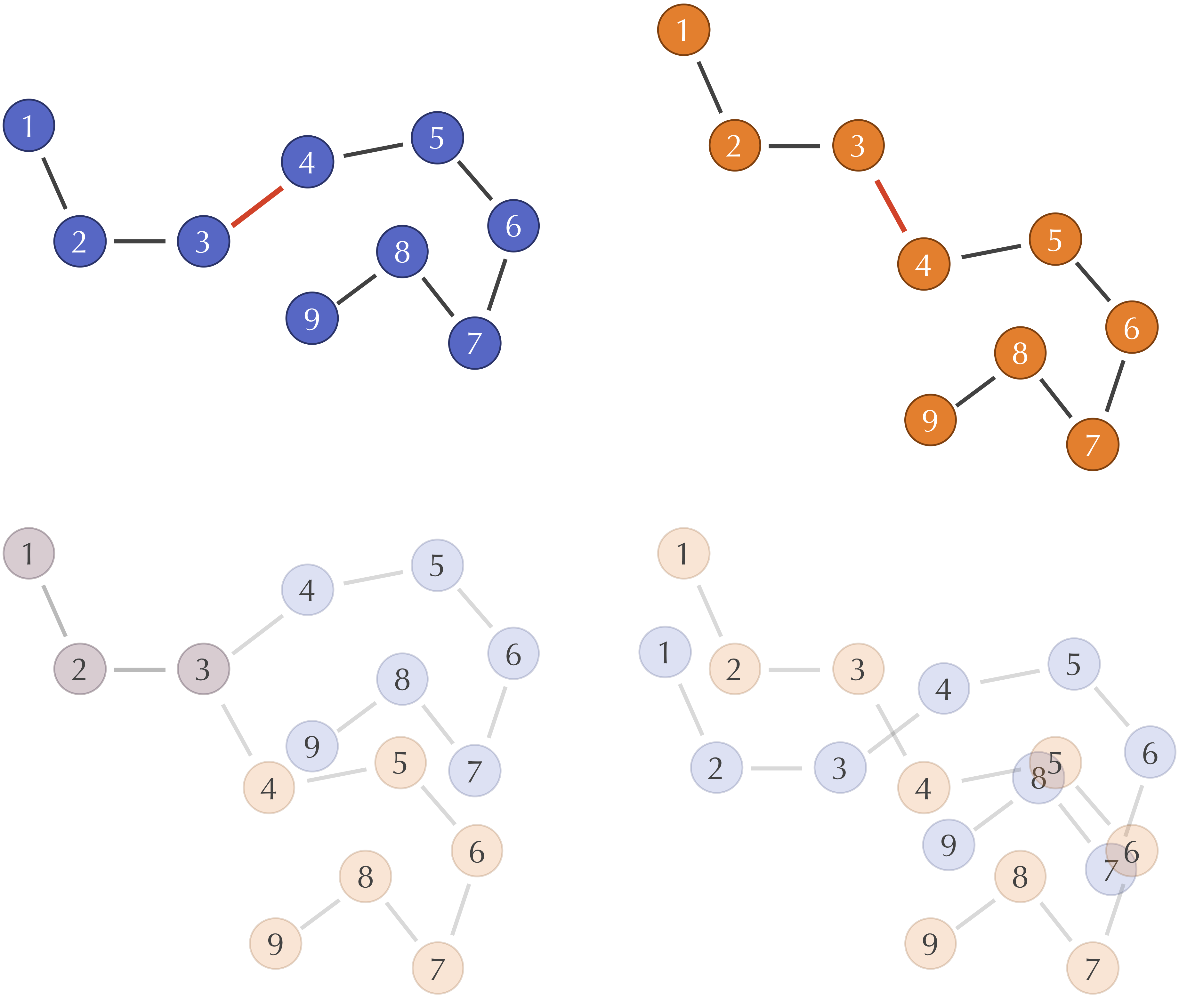

Kabsch Algorithm: NumPy, PyTorch, TensorFlow, and JAX

Learn to align molecular structures and point clouds using the Kabsch algorithm, with differentiable implementations for modern ML frameworks.
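As a taste of what the alignment involves, here is a minimal NumPy sketch of the core Kabsch step. It is an illustration under stated assumptions, not the code from the linked post: the function name `kabsch_align` and the row-per-point `(N, 3)` array layout are hypothetical choices made for this example.

```python
import numpy as np

def kabsch_align(P, Q):
    """Find rotation R and translation t so that P @ R.T + t best matches Q.

    P and Q are (N, 3) arrays of corresponding points (assumed layout).
    """
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)

    # Center both point sets on their centroids.
    p_mean = P.mean(axis=0)
    q_mean = Q.mean(axis=0)
    Pc = P - p_mean
    Qc = Q - q_mean

    # 3x3 cross-covariance matrix and its singular value decomposition.
    H = Pc.T @ Qc
    U, _, Vt = np.linalg.svd(H)

    # Flip the last axis if needed so R is a proper rotation (det = +1),
    # not a reflection.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T

    # Translation that maps the rotated centroid of P onto the centroid of Q.
    t = q_mean - R @ p_mean
    return R, t
```

Applying the result as `P @ R.T + t` gives the aligned copy of `P`. The same steps carry over to PyTorch, TensorFlow, or JAX by swapping in each framework's SVD routine.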


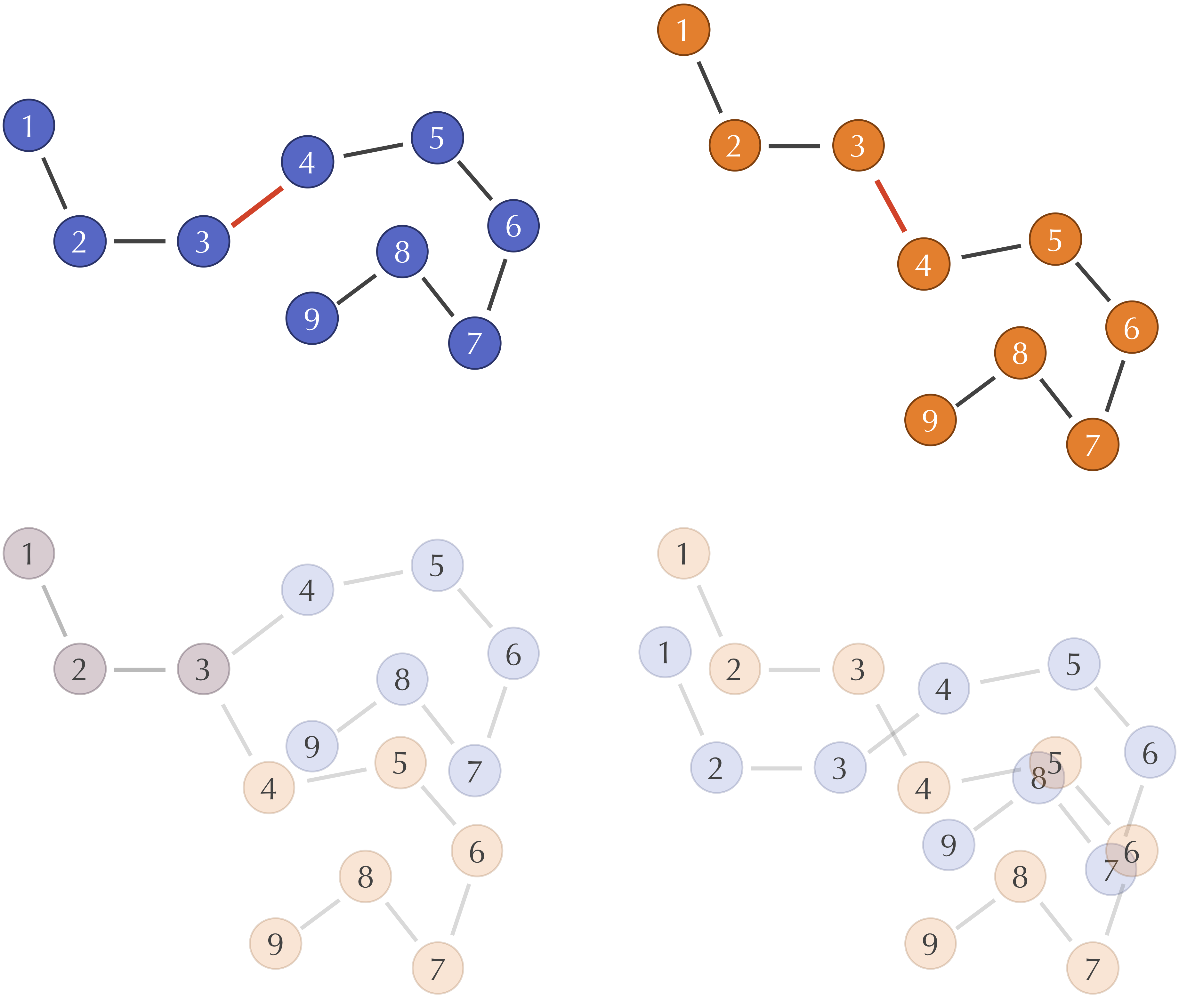

Step-by-step LAMMPS tutorial for simulating copper and platinum adatom diffusion. Covers surface dynamics simulation, trajectory analysis, and how atomic mass affects diffusion, with output suited to machine learning datasets.

A complete input-to-analysis workflow for simulating adatom diffusion on FCC metal surfaces using LAMMPS and EAM potentials. It provides comparative datasets for copper and platinum that demonstrate how atomic mass and bonding strength affect surface dynamics, and its automated Python analysis generates publication-ready visualizations.

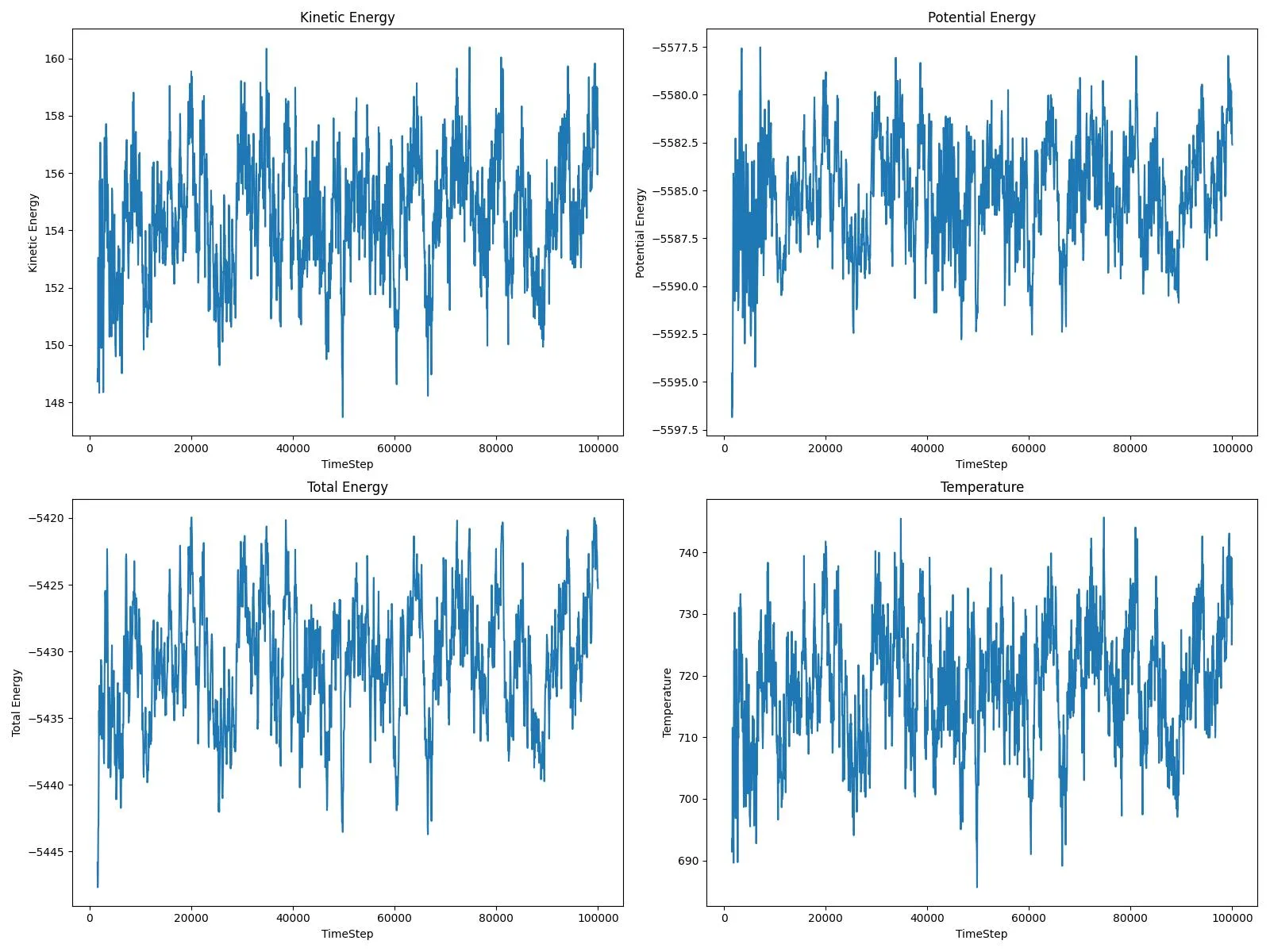

A practical guide to simulating mini-proteins using GROMACS, from alanine dipeptide to tryptophan systems, for ML training data generation.

An automated GROMACS pipeline for generating high-fidelity molecular dynamics datasets suitable for machine learning, simulating capped dipeptides across nine residue types with 0.1 ps resolution and atomic force extraction optimized for training Neural Network Potentials.

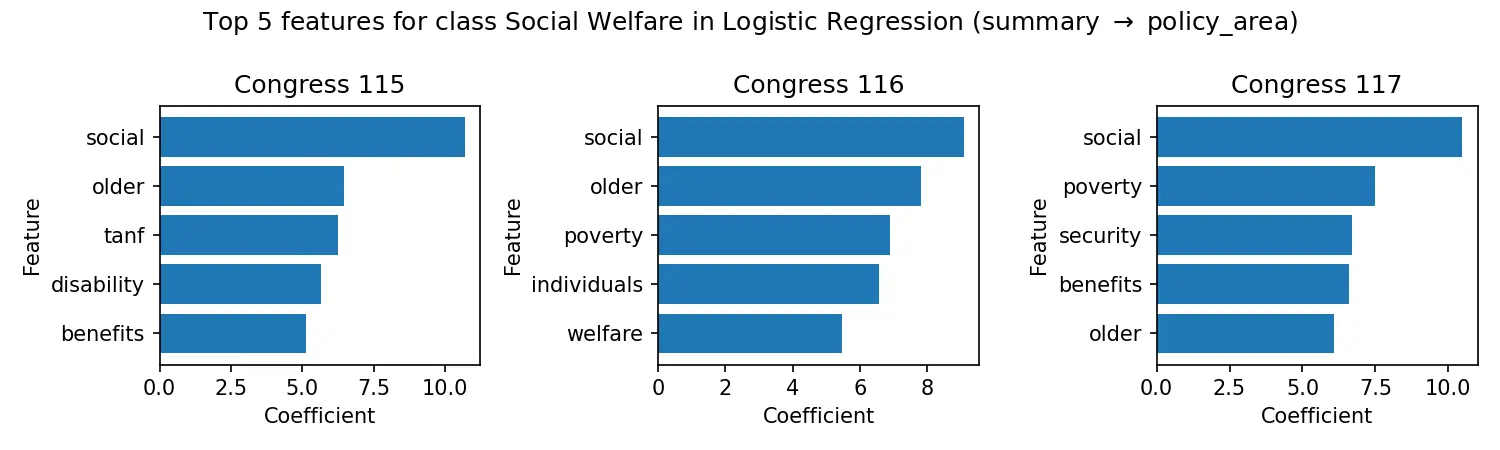

A computational social science project that engineered a custom extraction engine to build a 47,000+ bill knowledge graph from Congress.gov (115th-117th Congresses), creating a novel legislative graph with co-sponsorship networks and establishing an 87% accuracy benchmark for policy area classification now available on Hugging Face.

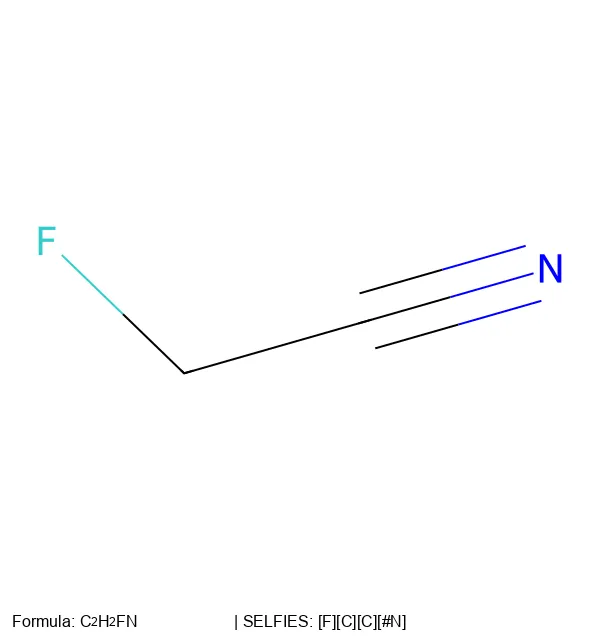

This 2022 perspective paper reviews 250 years of chemical notation evolution and proposes 16 concrete research projects to extend SELFIES beyond traditional organic chemistry into polymers, crystals, and reactions.

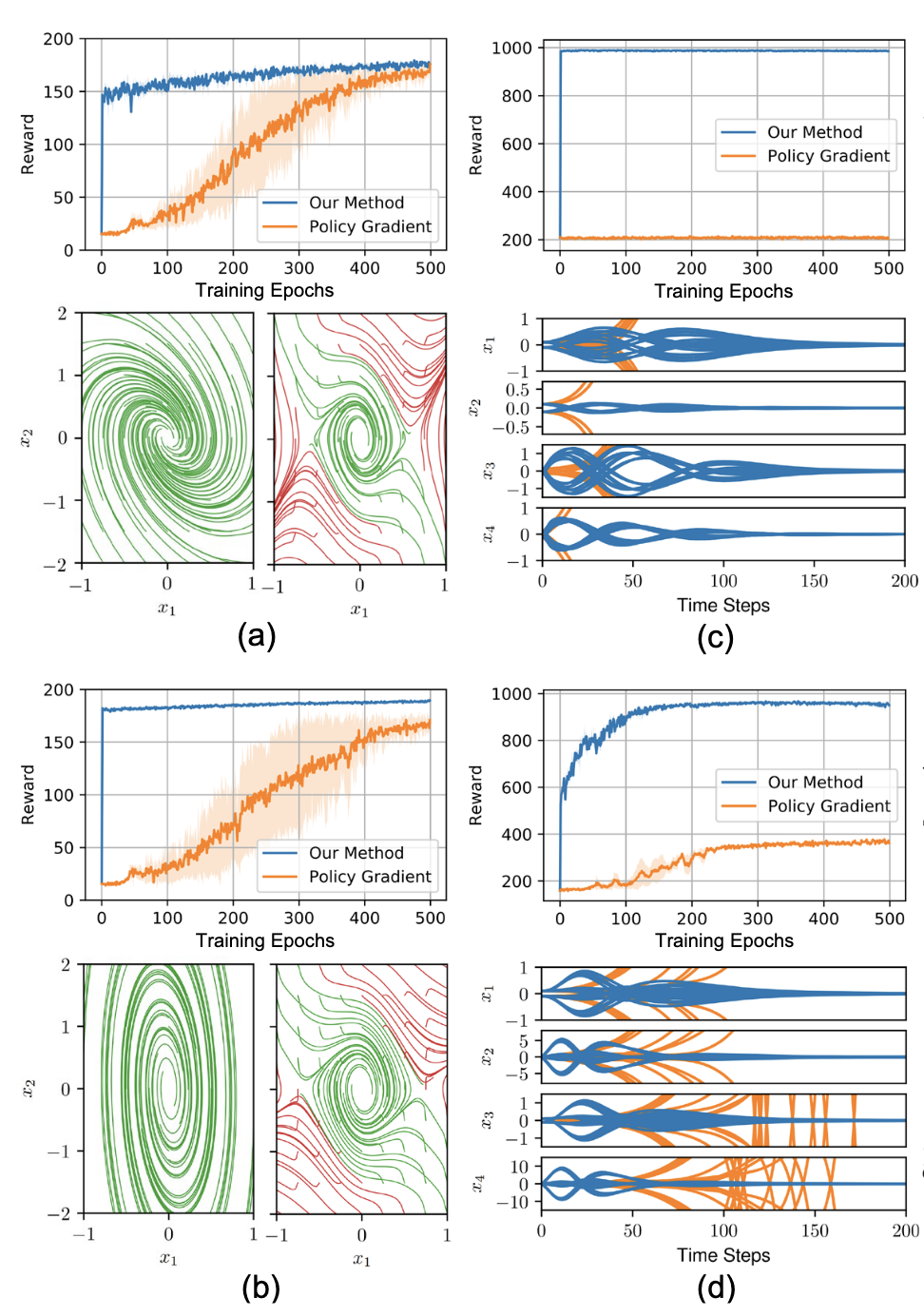

A PyTorch implementation that enforces strict Lyapunov stability guarantees on recurrent neural network controllers through Integral Quadratic Constraints. It bridges 1990s robust control theory with modern deep reinforcement learning by solving semidefinite programs inside the gradient descent loop, providing mathematical certificates of safety.

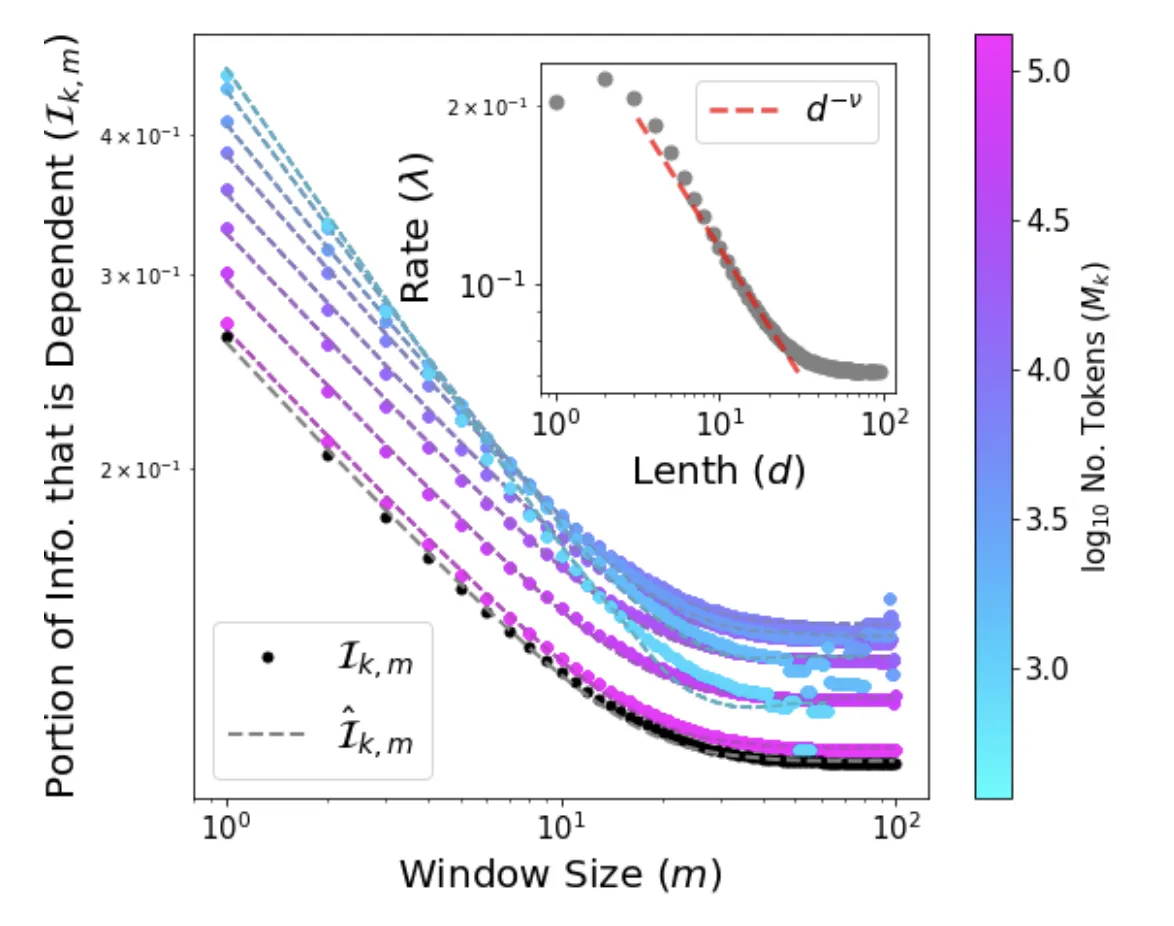

We provide the first analytical solution to Word2Vec’s softmax skip-gram objective, introducing the Independent Frequencies Model and deriving a low-cost, training-free method for measuring semantic bias directly from corpus statistics.

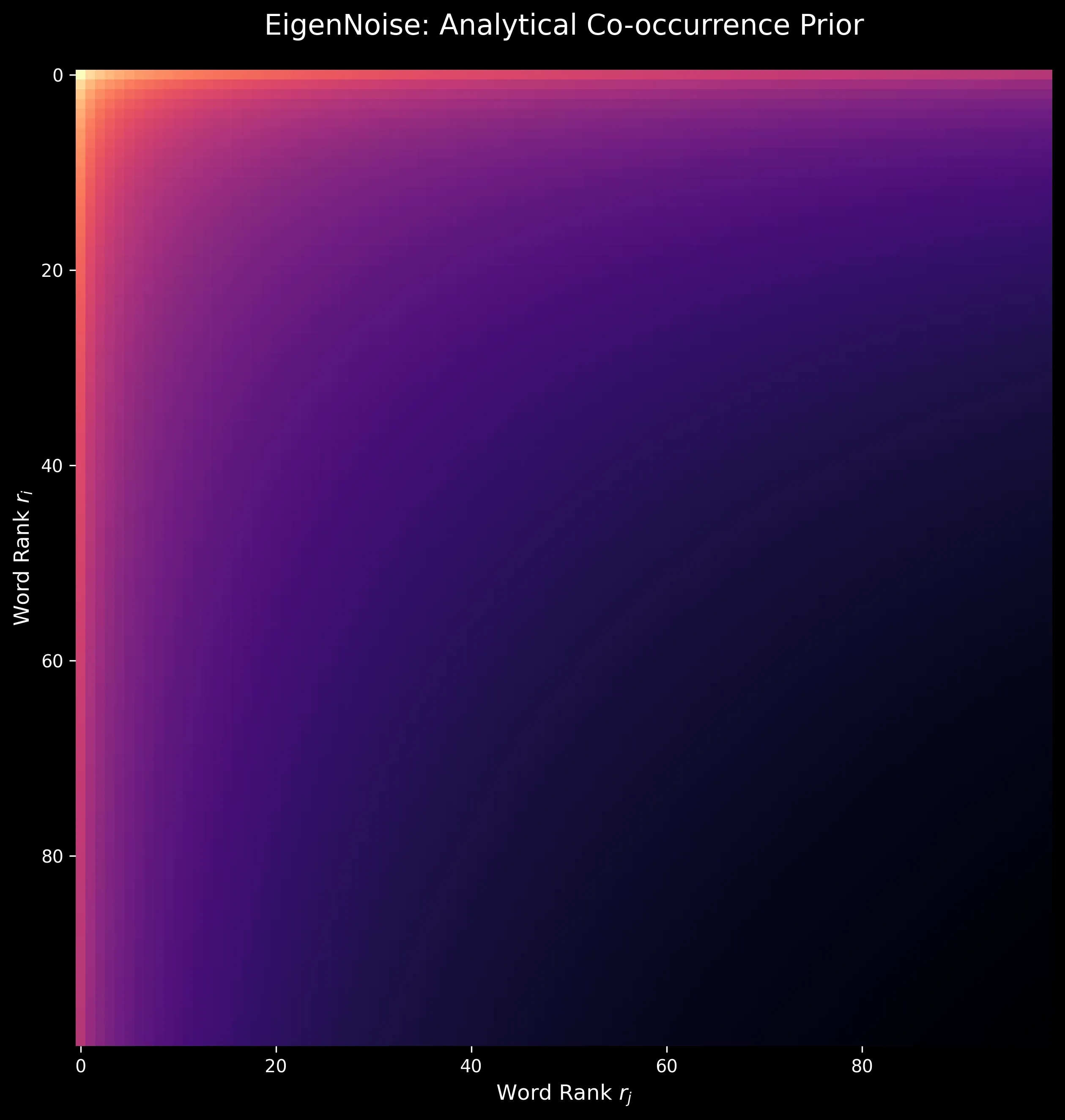

We develop EigenNoise, a zero-data initialization method for word vectors that synthesizes representations from Zipf’s Law alone, demonstrating performance competitive with GloVe after fine-tuning without requiring any pre-training corpus.

Bachelor’s thesis introducing PyConversations, an open-source library that normalizes over 308 million posts from Twitter, Reddit, Facebook, and 4chan into a unified data model for cross-platform social media research.

Research project that investigated how different NLP models perform on social media data, finding that domain-specific approaches often outperform large pre-trained models. Includes PyConversations, a Python module for analyzing conversations across social media platforms.