What kind of paper is this?

This is a method paper that introduces the theory and implementation of convolutional neural networks on the sphere. The key contribution is defining spherical cross-correlation that is SO(3)-equivariant and can be computed efficiently using generalized Fast Fourier Transforms from non-commutative harmonic analysis.

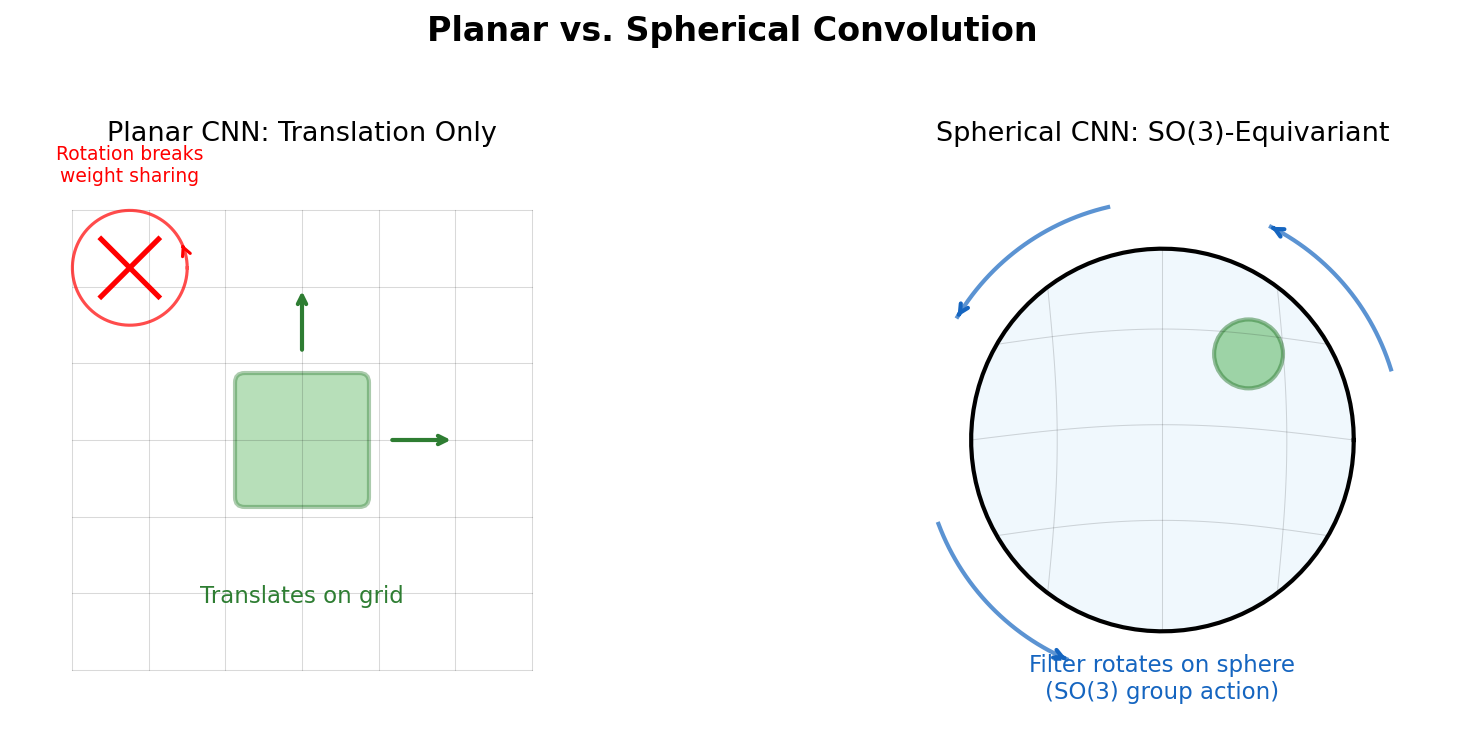

Why planar convolutions fail on spherical data

Many problems require analyzing spherical signals: omnidirectional vision for robots and autonomous vehicles, molecular regression, and global weather modeling. A naive approach of projecting spherical data to a plane introduces space-varying distortions that break translational weight sharing. Rotating a spherical signal cannot be emulated by translating its planar projection.

The fundamental issue is geometric: patterns on a plane move via translations, but patterns on a sphere move via 3D rotations. A spherical CNN should detect patterns regardless of how they are rotated over the sphere. The relevant symmetry group is SO(3) (the group of all 3D rotations).

Spherical cross-correlation and the SO(3) output space

The paper defines spherical cross-correlation by replacing filter translations with rotations. For spherical signals $f$ on $S^2$ (the unit sphere) and filter $\psi$, the correlation is:

$$\lbrack\psi \star f\rbrack(R) = \langle L_R \psi, f \rangle = \int_{S^2} \sum_{k=1}^{K} \psi_k(R^{-1}x) f_k(x) \, dx$$

where $L_R$ is the rotation operator $\lbrack L_R f\rbrack(x) = f(R^{-1}x)$.

A crucial subtlety: whereas the space of moves for the plane (2D translations) is isomorphic to the plane itself, the space of moves for the sphere (3D rotations) is SO(3), a different three-dimensional manifold. The output of a spherical correlation is therefore a function on SO(3), not on $S^2$. This means subsequent layers must use SO(3) correlation:

$$\lbrack\psi \star f\rbrack(R) = \int_{\text{SO}(3)} \sum_{k=1}^{K} \psi_k(R^{-1}Q) f_k(Q) \, dQ$$
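To make the $S^2$ correlation and its SO(3)-valued output concrete, here is a minimal single-channel sketch that approximates the integral by quadrature in plain Python. The helper names (`euler_zyz`, `sphere_quadrature`, `correlate`), the ZYZ Euler-angle convention, the midpoint grid, and the toy signal and filter are all assumptions made for illustration. This is the naive direct evaluation, not the paper's FFT-based implementation.

```python
import math

def rot_z(a):
    c, s = math.cos(a), math.sin(a)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]

def rot_y(b):
    c, s = math.cos(b), math.sin(b)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]

def matmul(A, B):
    return [[sum(A[i][k] * B[k][j] for k in range(3)) for j in range(3)]
            for i in range(3)]

def matvec(A, v):
    return tuple(sum(A[i][k] * v[k] for k in range(3)) for i in range(3))

def transpose(A):
    return [list(col) for col in zip(*A)]

def euler_zyz(alpha, beta, gamma):
    # Assumed convention: R = Z(alpha) Y(beta) Z(gamma).
    return matmul(rot_z(alpha), matmul(rot_y(beta), rot_z(gamma)))

def sphere_quadrature(n_theta=24, n_phi=48):
    # Midpoint rule in theta, uniform in phi; weights approximate dx on S^2.
    d_theta, d_phi = math.pi / n_theta, 2.0 * math.pi / n_phi
    quad = []
    for i in range(n_theta):
        theta = (i + 0.5) * d_theta
        weight = math.sin(theta) * d_theta * d_phi
        for j in range(n_phi):
            phi = j * d_phi
            x = (math.sin(theta) * math.cos(phi),
                 math.sin(theta) * math.sin(phi),
                 math.cos(theta))
            quad.append((x, weight))
    return quad

def correlate(psi, f, R, quad):
    # [psi * f](R) ~= sum_x w(x) psi(R^{-1} x) f(x), single channel (K = 1).
    R_inv = transpose(R)  # the inverse of a rotation matrix is its transpose
    return sum(w * psi(matvec(R_inv, x)) * f(x) for x, w in quad)

# Toy signal and filter: both equal to the z-coordinate of the point.
f = lambda x: x[2]
psi = lambda x: x[2]
quad = sphere_quadrature()

# The output is indexed by three Euler angles, i.e. it lives on SO(3).
alphas = [0.0, 2.0]
betas = [0.0, math.pi / 3, math.pi / 2]
gammas = [0.0, 1.0]
out = [[[correlate(psi, f, euler_zyz(a, b, g), quad) for g in gammas]
        for b in betas] for a in alphas]
```

For this toy choice the integral can be done by hand and equals $(4\pi/3)\cos\beta$, so the values in `out` should vary with $\beta$ only, up to quadrature error. A general filter gives an output that depends on all three angles, which is why the later layers operate on SO(3).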

Equivariance proof

Equivariance follows from the unitarity of $L_R$ in a single line:

$$\lbrack\psi \star \lbrack L_Q f\rbrack\rbrack(R) = \langle L_R \psi, L_Q f \rangle = \langle L_{Q^{-1}R} \psi, f \rangle = \lbrack\psi \star f\rbrack(Q^{-1}R) = \lbrack L_Q\lbrack\psi \star f\rbrack\rbrack(R)$$

This holds for both $S^2$ and SO(3) correlation.
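The middle equality is the step where unitarity enters. Written out for the $S^2$ case with the substitution $x = Qy$, and using the rotation invariance of the measure on the sphere ($dx = dy$), it reads:

$$\langle L_R \psi, L_Q f \rangle = \int_{S^2} \sum_{k=1}^{K} \psi_k(R^{-1}x) f_k(Q^{-1}x) \, dx = \int_{S^2} \sum_{k=1}^{K} \psi_k(R^{-1}Qy) f_k(y) \, dy = \langle L_{Q^{-1}R} \psi, f \rangle$$

The last step uses $(Q^{-1}R)^{-1} = R^{-1}Q$. The SO(3) case follows from the same substitution, with the invariance of the Haar measure $dQ$ in place of $dx$.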

Efficient computation via generalized FFT

A naive SO(3) correlation is $O(n^6)$: each of the $O(n^3)$ output rotations requires a sum over $O(n^3)$ input samples. The paper addresses this using the generalized Fourier transform (GFT) from non-commutative harmonic analysis.

The GFT projects functions onto orthogonal basis functions: spherical harmonics $Y_m^l(x)$ for $S^2$, and Wigner D-functions $D_{mn}^l(R)$ for SO(3). Both satisfy generalized Fourier theorems:

- SO(3) convolution theorem: $\widehat{\psi \star f}^l = \hat{f}^l \cdot \hat{\psi}^{l\dagger}$ (matrix multiplication of the degree-$l$ blocks of Fourier coefficients)

- $S^2$ convolution theorem: $\widehat{\psi \star f}^l = \hat{f}^l \cdot \hat{\psi}^{l\dagger}$ (outer product of $S^2$ Fourier coefficient vectors)
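The two theorems above differ only in the shape of the per-degree coefficients. The following sketch shows the block-wise products in plain Python, using random complex numbers as placeholder coefficients. No actual Fourier transform is computed; the function names and the `bandwidth` value are illustrative assumptions.

```python
import random

def random_complex(rng):
    return complex(rng.gauss(0, 1), rng.gauss(0, 1))

def s2_block(f_hat_l, psi_hat_l):
    # Outer product f_hat^l (psi_hat^l)^dagger:
    # two vectors of length 2l+1 give a (2l+1) x (2l+1) block.
    return [[a * b.conjugate() for b in psi_hat_l] for a in f_hat_l]

def so3_block(F_l, Psi_l):
    # Matrix product F^l (Psi^l)^dagger of two (2l+1) x (2l+1) blocks.
    n = len(F_l)
    return [[sum(F_l[m][k] * Psi_l[j][k].conjugate() for k in range(n))
             for j in range(n)] for m in range(n)]

rng = random.Random(0)
bandwidth = 4  # degrees l = 0 .. bandwidth - 1 (placeholder value)

s2_inputs, s2_out, so3_out = [], [], []
for l in range(bandwidth):
    size = 2 * l + 1
    f_vec = [random_complex(rng) for _ in range(size)]
    psi_vec = [random_complex(rng) for _ in range(size)]
    s2_inputs.append((f_vec, psi_vec))

    # First layer: S^2 signal and filter give an SO(3) feature-map block.
    block = s2_block(f_vec, psi_vec)
    s2_out.append(block)

    # Later layers: SO(3) feature map and SO(3) filter give an SO(3) block.
    psi_mat = [[random_complex(rng) for _ in range(size)] for _ in range(size)]
    so3_out.append(so3_block(block, psi_mat))

# Coefficient counts: vectors per degree on S^2, matrices per degree on SO(3).
n_s2_coeffs = sum(2 * l + 1 for l in range(bandwidth))
n_so3_coeffs = sum((2 * l + 1) ** 2 for l in range(bandwidth))
```

Each degree $l$ is handled independently, so the spectral product amounts to one small outer product or matrix product per degree.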

The SO(3) FFT works in two steps: (1) standard 2D FFT over the $\alpha$ and $\gamma$ Euler angles, then (2) linear contraction of the $\beta$ axis with precomputed Wigner-d function samples, implemented as a custom GPU kernel.

Experiments

Equivariance error

Since the theory applies to continuous functions but the implementation is discretized, the authors rigorously measure equivariance error. The approximation error grows with resolution and depth but stays manageable for practical bandwidths. With ReLU activations, the error is higher but stays flat across layers, indicating the error comes from feature map rotation (exact only for bandlimited functions) rather than accumulating through the network.
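The idea of such a measurement can be sketched on a much simpler stand-in. The example below uses cyclic shifts of a 1D signal in place of rotations and a circular correlation with ReLU in place of a spherical layer. It compares "transform then apply the layer" against "apply the layer then transform". The normalization (standard deviation of the difference divided by standard deviation of the output) and every name here are assumptions made for illustration, not the paper's code.

```python
import random
import statistics

def shift(signal, s):
    # Cyclic shift: the group action standing in for the rotation L_R.
    n = len(signal)
    return [signal[(i - s) % n] for i in range(n)]

def correlate_relu(signal, kernel):
    # Circular cross-correlation followed by ReLU; shift-equivariant.
    n = len(signal)
    return [max(0.0, sum(kernel[j] * signal[(i + j) % n]
                         for j in range(len(kernel))))
            for i in range(n)]

def position_scaled(signal, kernel):
    # Deliberately non-equivariant variant: scales each output by its index.
    out = correlate_relu(signal, kernel)
    return [v * i / len(out) for i, v in enumerate(out)]

def equivariance_error(layer, signal, kernel, s):
    reference = layer(signal, kernel)
    moved_output = shift(reference, s)                  # L Phi(f)
    output_of_moved = layer(shift(signal, s), kernel)   # Phi(L f)
    diff = [a - b for a, b in zip(moved_output, output_of_moved)]
    return statistics.pstdev(diff) / statistics.pstdev(reference)

rng = random.Random(0)
signal = [rng.gauss(0, 1) for _ in range(32)]
kernel = [rng.gauss(0, 1) for _ in range(5)]

err_equivariant = equivariance_error(correlate_relu, signal, kernel, 5)
err_broken = equivariance_error(position_scaled, signal, kernel, 5)
```

On this discrete ring the shift is exact, so the equivariant layer should show essentially zero error. On the sphere, rotating a sampled feature map involves interpolation, which is where the nonzero error discussed above comes from.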

Spherical MNIST

MNIST digits are projected onto the sphere and evaluated in non-rotated (NR) and rotated (R) settings, with ~165K parameters per model:

| Train / Test | Planar CNN | Spherical CNN |
|---|---|---|
| NR / NR | 99% | 91% |
| R / R | 45% | 91% |
| NR / R | 9% | 85% |

The planar CNN collapses to chance when trained on non-rotated data and tested on rotated data. The spherical CNN maintains strong performance across all settings.

3D shape recognition (SHREC17)

3D meshes are projected onto an enclosing sphere via ray casting. For each point on the sphere, a ray is cast toward the origin, and three values are collected at the intersection: the ray length and the cosine and sine of the surface angle. The same three channels are computed for the convex hull, giving 6 channels total. The network (~1.4M parameters) placed 2nd on recall, mAP, and NDCG, and 3rd on precision and F1 in the SHREC17 competition, competing against methods with highly task-specialized architectures.
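A minimal sketch of the per-point ray cast, assuming a triangle mesh and the standard Moller-Trumbore intersection test. The function names, the interpretation of "surface angle" as the angle between the ray and the triangle normal, and the `None` return for a miss are assumptions for illustration; the paper's own pipeline may differ.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(a):
    norm = math.sqrt(dot(a, a))
    return (a[0] / norm, a[1] / norm, a[2] / norm)

def ray_triangle(origin, direction, tri, eps=1e-9):
    # Moller-Trumbore test; returns the distance along the ray, or None.
    v0, v1, v2 = tri
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:          # ray is parallel to the triangle plane
        return None
    inv = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv
    return t if t > eps else None

def cast_channels(point, triangles):
    # Cast from a point on the enclosing sphere toward the origin and
    # return (ray length, cos, sin) at the nearest hit, or None on a miss.
    direction = normalize((-point[0], -point[1], -point[2]))
    best = None
    for tri in triangles:
        t = ray_triangle(point, direction, tri)
        if t is not None and (best is None or t < best[0]):
            normal = normalize(cross(sub(tri[1], tri[0]), sub(tri[2], tri[0])))
            best = (t, normal)
    if best is None:
        return None
    t, normal = best
    cos_a = abs(dot(normal, direction))   # assumed: angle to the surface normal
    sin_a = math.sqrt(max(0.0, 1.0 - cos_a * cos_a))
    return (t, cos_a, sin_a)

# One horizontal triangle at height z = 0.5, seen from the north pole.
mesh = [((-1.0, -1.0, 0.5), (1.0, -1.0, 0.5), (0.0, 1.0, 0.5))]
channels = cast_channels((0.0, 0.0, 1.0), mesh)
```

Running the same function on the mesh's convex hull would give the remaining three channels.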

Molecular atomization energy (QM7)

Molecules are represented as spherical potential functions around each atom (generalizing the Coulomb matrix). A deep ResNet-style $S^2$CNN with DeepSets-style permutation-invariant aggregation over atoms achieved an RMSE of 8.47, outperforming all kernel-based approaches and sorted Coulomb matrix methods.
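The permutation-invariant aggregation can be illustrated with a toy sketch: embed each atom independently, sum the embeddings, and apply a readout to the sum. The `atom_embedding` and `readout` functions below are hypothetical stand-ins (the paper uses a spherical CNN per atom), and the input tuples are made-up features rather than QM7 data.

```python
import math

def atom_embedding(features):
    # Hypothetical per-atom map, standing in for the per-atom spherical CNN.
    a, b = features
    return (math.tanh(a + 2.0 * b), a * b, 1.0)

def readout(pooled):
    # Hypothetical readout applied to the summed embedding.
    return 0.5 * pooled[0] - 1.5 * pooled[1] + 0.1 * pooled[2]

def predict(atoms):
    embedded = [atom_embedding(x) for x in atoms]
    # Sum over atoms: the result does not depend on the order of the atoms.
    pooled = tuple(math.fsum(column) for column in zip(*embedded))
    return readout(pooled)

molecule = [(1.0, 0.3), (6.0, -0.2), (8.0, 0.7)]
shuffled = [molecule[2], molecule[0], molecule[1]]

energy = predict(molecule)
energy_shuffled = predict(shuffled)
```

Because the only interaction between atoms is the sum, relabeling the atoms leaves the prediction unchanged, and molecules with different numbers of atoms need no padding.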

Discussion and future directions

The authors highlight several avenues for future work. For volumetric tasks like 3D model recognition, extending beyond SO(3) to the roto-translation group SE(3) could improve results. They also note that a Steerable CNN for the sphere would enable analysis of vector fields (e.g., global wind directions). Omnidirectional vision is mentioned as a compelling application as 360-degree sensors become more prevalent.

Reproducibility

The official PyTorch implementation is publicly available. The code does not support recent PyTorch versions due to changes in the FFT interface.

| Artifact | Type | License | Notes |
|---|---|---|---|
| s2cnn | Code | MIT | Official PyTorch implementation (deprecated for modern PyTorch) |

Hardware requirements from the paper: the SHREC17 model uses 8 GB of GPU memory at batch size 16 and takes 50 hours to train. The QM7 model uses 7 GB at batch size 20 and takes 3 hours to train. The datasets used (Spherical MNIST, SHREC17, QM7) are all publicly available.

Paper Information

Citation: Cohen, T. S., Geiger, M., Köhler, J., & Welling, M. (2018). Spherical CNNs. International Conference on Learning Representations (ICLR 2018).

Publication: ICLR 2018

Citation

@inproceedings{cohen2018spherical,
  title={Spherical {CNNs}},
  author={Cohen, Taco S. and Geiger, Mario and K{\"o}hler, Jonas and Welling, Max},
  booktitle={International Conference on Learning Representations},
  year={2018}
}