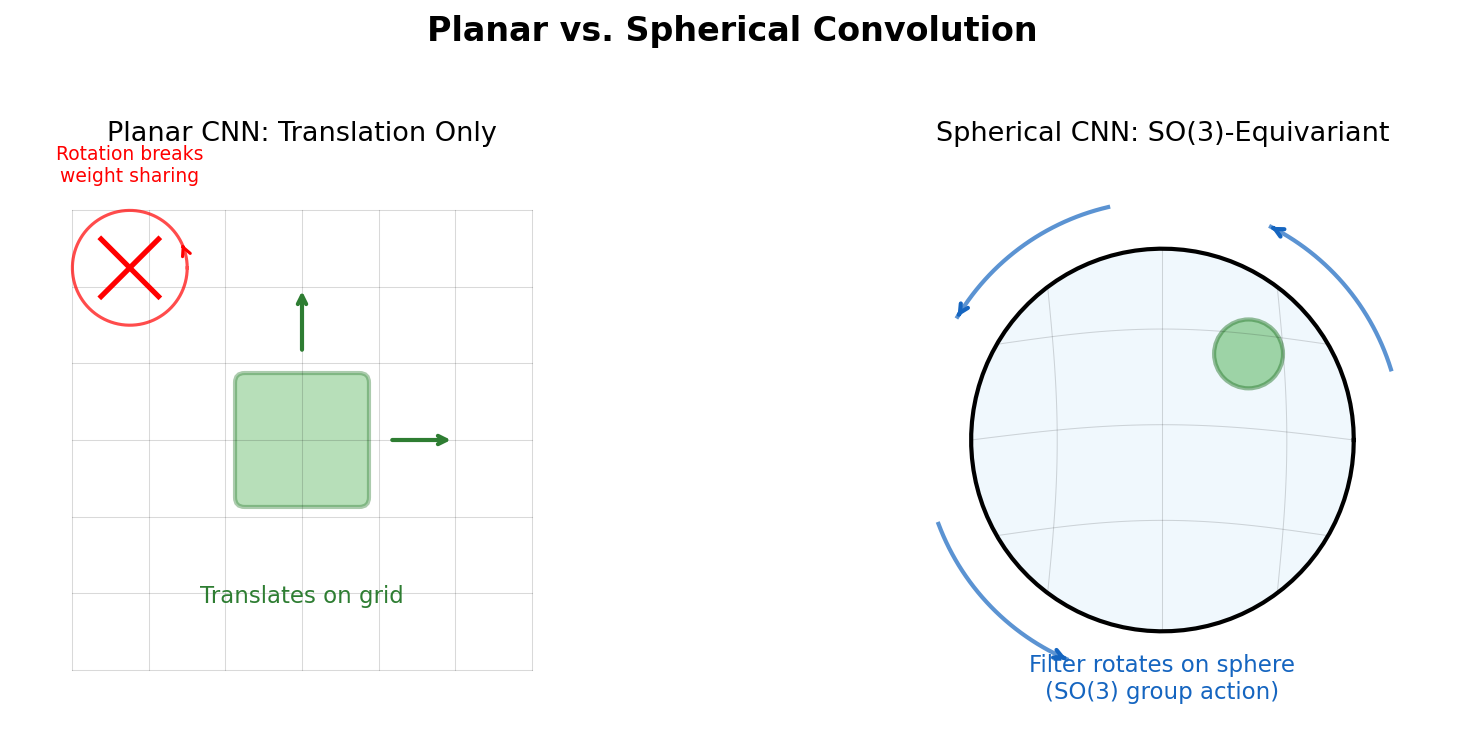

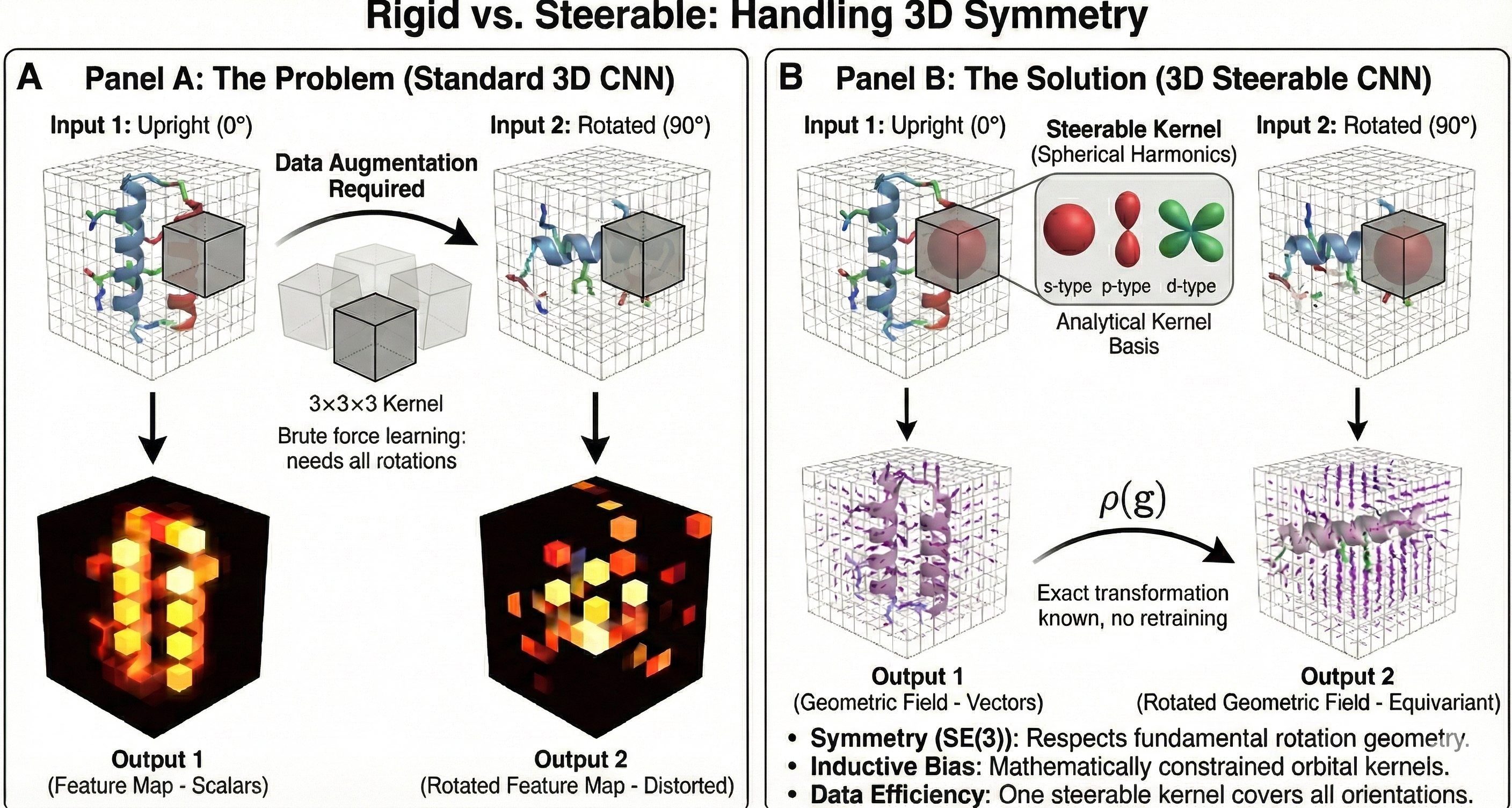

Many problems in chemistry and biology involve data with geometric structure: molecules have 3D coordinates, proteins have orientations, and physical forces transform predictably under rotation. Geometric deep learning addresses this by building symmetries directly into model architectures. Notes in this section cover equivariant networks, focusing on SO(3) and SE(3) equivariance achieved through group representation theory and spherical harmonics. The goal is to understand how these models work and why the symmetry constraints matter for data efficiency and physical correctness.
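For reference, here is the standard statement of the symmetry constraint these notes build on. The notation $\rho_{\text{in}}$, $\rho_{\text{out}}$ for the input and output representations is chosen here for illustration. A map $f$ is equivariant to a group $G$ if

$$
f\big(\rho_{\text{in}}(g)\,x\big) = \rho_{\text{out}}(g)\,f(x) \qquad \text{for all } g \in G.
$$

For SE(3), $g = (R, t)$ with $R \in \mathrm{SO}(3)$ and $t \in \mathbb{R}^3$ acts on coordinates as $x \mapsto Rx + t$. A degree-$\ell$ (type-$\ell$) feature has $2\ell + 1$ components and rotates by the Wigner-D matrix $D^{\ell}(R)$. Type-0 features are invariant scalars, and type-1 features rotate like 3D vectors (in a Cartesian basis, by $R$ itself). Invariance is the special case $\rho_{\text{out}}(g) = I$.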

SE(3)-Transformers: Equivariant Attention for 3D Data

Fuchs et al. introduce the SE(3)-Transformer, which combines self-attention with SE(3)-equivariance for 3D point clouds and graphs. Rotation-invariant attention weights modulate equivariant value messages built from tensor field network (TFN) kernels. Because these weights depend on the input, they relax the strong angular constraints that equivariance places on TFN filters. The result is data-adaptive, anisotropic processing that remains equivariant.
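To make the mechanism concrete, here is a minimal NumPy sketch of equivariant attention. It is not the authors' implementation, and it simplifies heavily. It only maps scalar (type-0) input features to vector (type-1) outputs. Its values are an invariant radial weight times the unit direction of $x_j - x_i$, which matches the degree-1 spherical harmonic up to normalization and component order. It uses invariant keys directly instead of equivariant query/key features. The Gaussian distance embedding, the function names, and the parameter names `W_q`, `W_k`, `W_v`, `centers` are hypothetical choices for illustration.

```python
import numpy as np

def rbf(d, centers, gamma=4.0):
    """Gaussian radial basis expansion of distances (invariant to rotation and translation)."""
    return np.exp(-gamma * (d[..., None] - centers) ** 2)

def equivariant_attention(x, s, params):
    """Toy SE(3)-equivariant attention (hypothetical sketch): type-0 inputs -> type-1 outputs.

    x: (N, 3) positions; s: (N, F) invariant node features.
    Returns out: (N, C, 3) vector features and alpha: (N, N) attention weights.
    """
    W_q, W_k, W_v, centers = params["W_q"], params["W_k"], params["W_v"], params["centers"]
    n = x.shape[0]

    rel = x[None, :, :] - x[:, None, :]            # rel[i, j] = x_j - x_i, shape (N, N, 3)
    dist = np.linalg.norm(rel, axis=-1)             # (N, N), invariant
    safe = np.where(dist > 0, dist, 1.0)            # avoid division by zero on self-edges
    unit = rel / safe[..., None]                    # unit directions (degree-1 harmonic up to basis/normalization)

    # Invariant edge inputs: neighbour features concatenated with a distance embedding.
    s_j = np.broadcast_to(s[None, :, :], (n, n, s.shape[1]))
    edge_in = np.concatenate([s_j, rbf(dist, centers)], axis=-1)   # (N, N, F + B)

    # Invariant attention scores (simplification: invariant keys, not equivariant ones).
    q = s @ W_q                                     # (N, D)
    k = edge_in @ W_k                               # (N, N, D)
    logits = np.einsum("id,ijd->ij", q, k)
    logits[np.arange(n), np.arange(n)] = -np.inf    # only attend to neighbours j != i
    logits -= logits.max(axis=1, keepdims=True)
    alpha = np.exp(logits)
    alpha /= alpha.sum(axis=1, keepdims=True)       # softmax over neighbours

    # Equivariant values: invariant radial weights times direction vectors (type-0 -> type-1 kernel).
    radial = np.tanh(edge_in @ W_v)                 # (N, N, C)
    values = radial[..., None] * unit[:, :, None, :]   # (N, N, C, 3)

    out = np.einsum("ij,ijcv->icv", alpha, values)  # weighted sum of messages, (N, C, 3)
    return out, alpha

# Usage: check invariance of alpha and equivariance of out under a random rigid motion.
rng = np.random.default_rng(0)
N, F, B, D, C = 6, 4, 8, 16, 3
params = {
    "W_q": rng.normal(size=(F, D)),
    "W_k": rng.normal(size=(F + B, D)),
    "W_v": rng.normal(size=(F + B, C)),
    "centers": np.linspace(0.0, 3.0, B),
}
x = rng.normal(size=(N, 3))
s = rng.normal(size=(N, F))

Q, _ = np.linalg.qr(rng.normal(size=(3, 3)))
R = Q * np.sign(np.linalg.det(Q))                   # proper rotation, det(R) = +1
t = rng.normal(size=3)

out, alpha = equivariant_attention(x, s, params)
out_rot, alpha_rot = equivariant_attention(x @ R.T + t, s, params)
print(np.allclose(alpha, alpha_rot))                # True: attention weights are invariant
print(np.allclose(out_rot, out @ R.T))              # True: vector outputs rotate with the input
```

The final check is the practical test for any equivariant layer. After rotating and translating the input, the invariant quantities (here the attention weights) should be unchanged, and every type-1 output should rotate by the same $R$.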