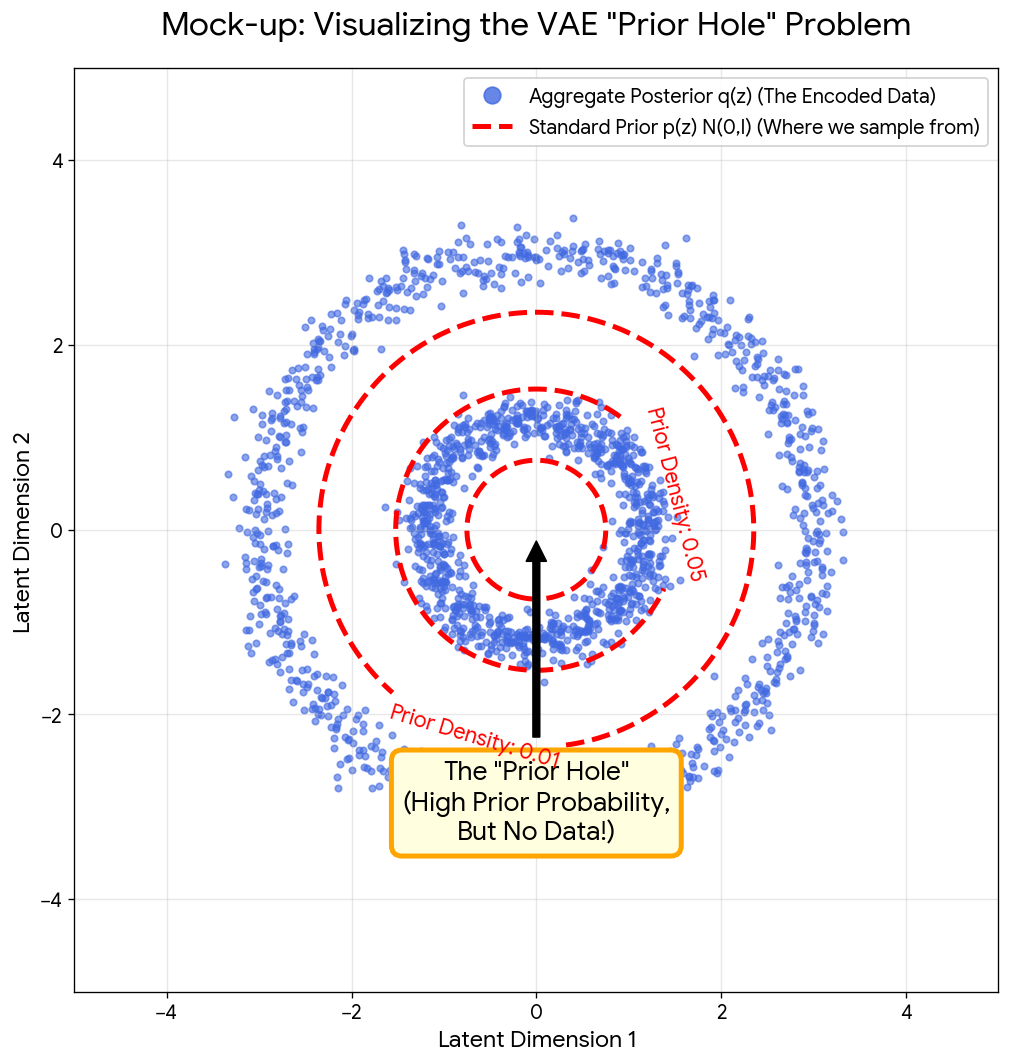

This section covers the core families of generative models used in modern machine learning. Notes begin with the foundational variational autoencoder (VAE) and its extensions (importance-weighted objectives, contrastive priors), then move through continuous normalizing flows, neural ODEs, score-based and diffusion models, and flow matching. The thread connecting these works is the shared goal of learning to sample from complex distributions, and each set of notes tries to make the mathematical connections between approaches explicit rather than treating them as isolated methods.

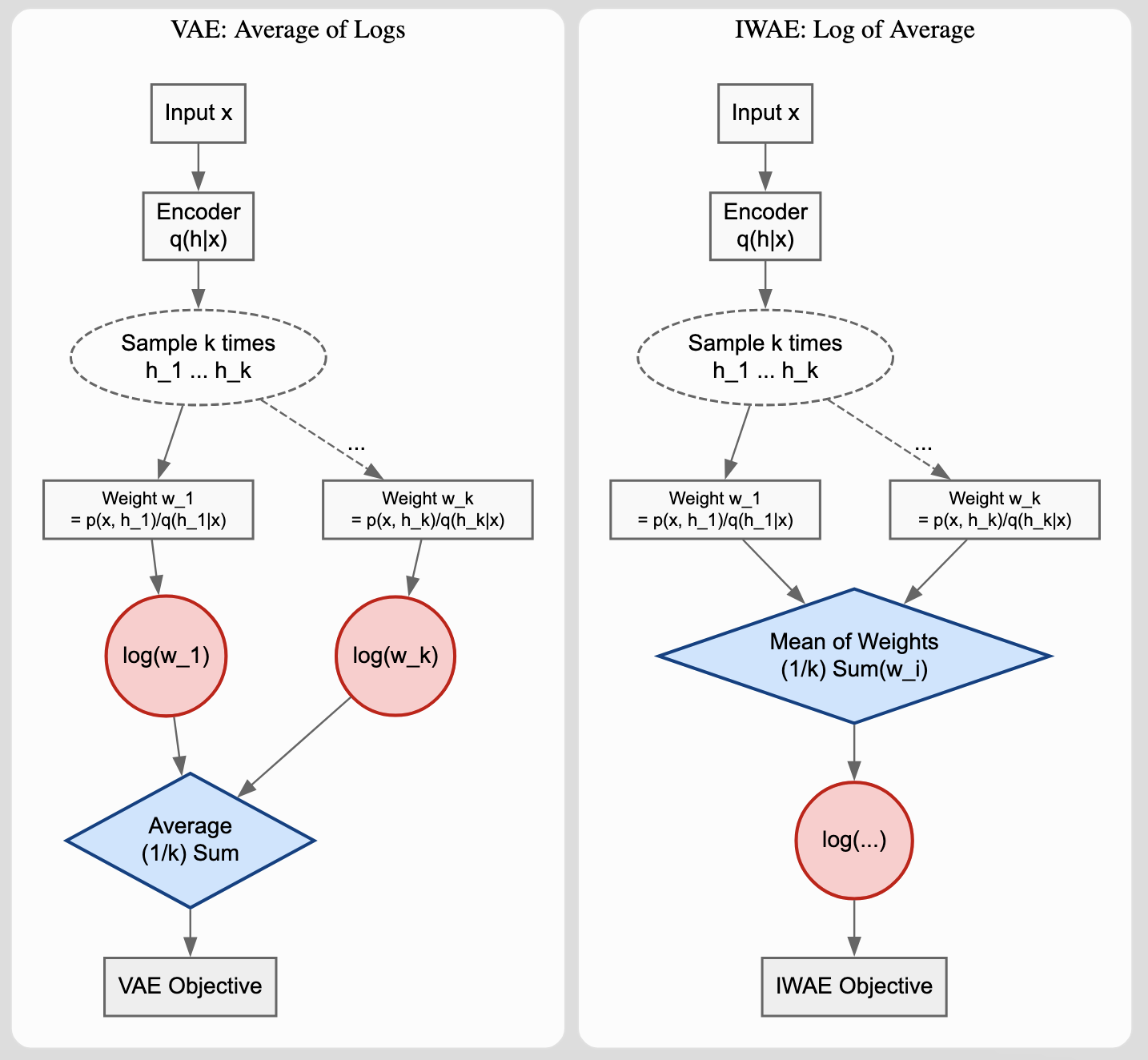

Importance Weighted Autoencoders (IWAE) for Tighter Bounds

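Notes on Burda et al.’s ICLR 2016 paper introducing Importance Weighted Autoencoders (IWAE). IWAE averages K importance-weighted samples from the recognition network to obtain a log-likelihood lower bound that is at least as tight as the standard VAE bound (the K = 1 case) and that tightens as K grows. The paper reports richer latent representations, with more active latent dimensions, and improved test log-likelihood. The model architecture is the same as a VAE; only the training objective changes.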
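To make the bound concrete, here is a minimal NumPy sketch of the K-sample importance-weighted estimate, `log((1/K) * sum_k p(x, z_k) / q(z_k | x))`, on a toy model. The toy model (prior `z ~ N(0, 1)`, likelihood `x | z ~ N(z, 1)`, so that the exact `log p(x)` is known), the deliberately mismatched Gaussian proposal, and the helper names `log_normal`, `iwae_bound`, and `estimate_bound` are all assumptions made for illustration and are not taken from the paper.

```python
import numpy as np


def log_normal(x, mean, std):
    """Log density of a univariate Gaussian N(mean, std^2) evaluated at x."""
    return -0.5 * np.log(2.0 * np.pi) - np.log(std) - 0.5 * ((x - mean) / std) ** 2


def iwae_bound(log_w):
    """Numerically stable log of the mean importance weight over the last axis.

    log_w has shape (..., K) and holds log p(x, z_k) - log q(z_k | x).
    """
    m = np.max(log_w, axis=-1, keepdims=True)
    return np.squeeze(m, axis=-1) + np.log(np.mean(np.exp(log_w - m), axis=-1))


def estimate_bound(x, k, q_mean, q_std, n_runs=20000, seed=0):
    """Monte Carlo estimate of the expected K-sample bound for the toy model.

    Toy model (illustrative assumption): z ~ N(0, 1), x | z ~ N(z, 1).
    Proposal (illustrative assumption): q(z | x) = N(q_mean, q_std^2).
    """
    rng = np.random.default_rng(seed)
    # Draw K proposal samples for each of n_runs independent repetitions.
    z = q_mean + q_std * rng.standard_normal((n_runs, k))
    log_joint = log_normal(z, 0.0, 1.0) + log_normal(x, z, 1.0)
    log_w = log_joint - log_normal(z, q_mean, q_std)
    # Average the per-repetition bounds to approximate the expectation over q.
    return iwae_bound(log_w).mean()


if __name__ == "__main__":
    x = 1.0
    # Marginal of the toy model is N(0, 2), so log p(x) is available exactly.
    print("exact log p(x):", log_normal(x, 0.0, np.sqrt(2.0)))
    for k in (1, 5, 50):
        print(f"K={k:>2} bound:", estimate_bound(x, k, q_mean=0.0, q_std=1.0))
```

With `K = 1` the estimate reduces to the standard single-sample ELBO. Increasing `K` moves the estimate toward the exact `log p(x)` even though the proposal is left unchanged, which is the sense in which the objective changes while the architecture does not.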