This section collects notes on papers that established discipline-wide frameworks in machine learning and related fields. The curation criterion is scope: a note belongs here when the paper introduced a concept, formalism, or structure that became a field-level reference, cited and reused across subfields rather than only within a single technique area. Shannon’s formalization of channel capacity and of the sampling theorem is one example: it underpins information-theoretic reasoning throughout ML. Papers that are historically important within a specific technique or architecture family are filed under their technique subcategory instead. Reading these originals is useful both for historical perspective and for grounding intuitions that later work often assumes without stating.

Distributed Representations: A Foundational Theory

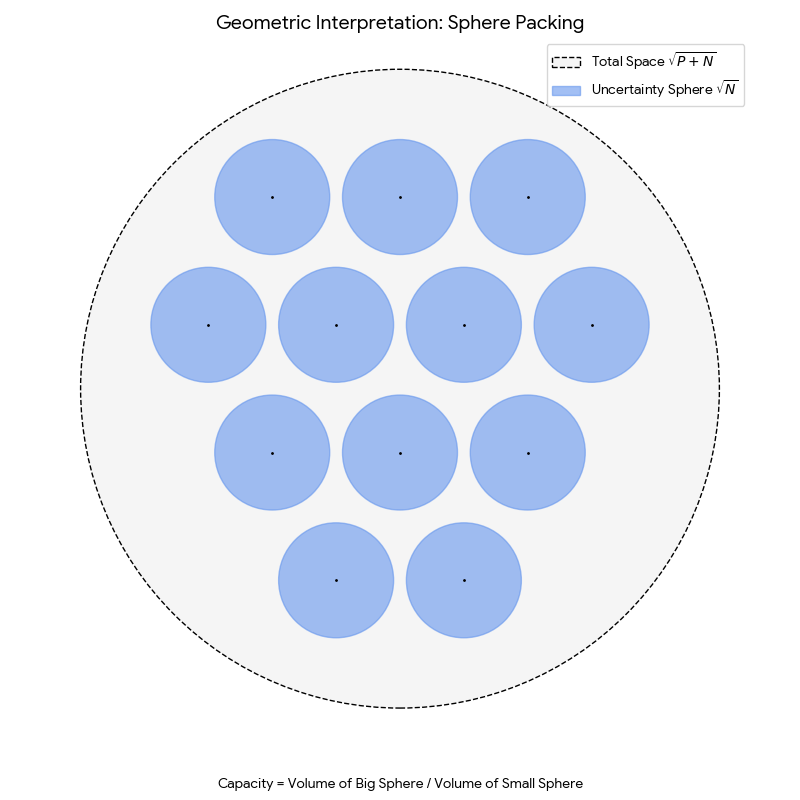

Geoffrey Hinton’s 1984 technical report formally derives the efficiency of distributed representations (coarse coding) and shows that they provide automatic generalization, content-addressability, and robustness to damage.
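The following is a toy one-dimensional sketch of coarse coding. It assumes binary units with evenly spaced centers and a fixed receptive-field radius. All values, and the `encode`/`decode`/`max_error` helpers, are hypothetical illustrations and not the construction used in Hinton’s report. The sketch shows two of the properties named above. First, with the same 11 units, overlapping fields localize a value more finely than non-overlapping ones. Second, knocking out one unit only coarsens the estimate nearby instead of leaving a hole.

```python
import numpy as np

# Hypothetical toy setup (values are illustrative, not from the report):
# one scalar feature x in [0, 1] and 11 binary units with evenly spaced centers.
CENTERS = np.linspace(0.0, 1.0, 11)  # center spacing d = 0.1


def encode(x, radius):
    """Coarse code: a unit is active when x lies inside its receptive field."""
    return np.abs(x - CENTERS) < radius


def decode(code, radius, alive=None):
    """Midpoint of the interval of x values consistent with every intact unit.

    Active units imply |x - c| < radius; silent units imply |x - c| >= radius.
    Units marked False in `alive` are treated as damaged and ignored. Returns
    None if no surviving unit is active (the code then says nothing about x).
    """
    if alive is None:
        alive = np.ones(CENTERS.shape, dtype=bool)
    active = CENTERS[code & alive]
    if active.size == 0:
        return None
    # Intersect the receptive fields of the surviving active units.
    lo = max(0.0, active.max() - radius)
    hi = min(1.0, active.min() + radius)
    # Silent units rule out their own fields; the nearest silent unit on each
    # side of the active run tightens the bound on that side.
    silent = ~code & alive
    below = CENTERS[silent & (CENTERS < active.min())]
    above = CENTERS[silent & (CENTERS > active.max())]
    if below.size:
        lo = max(lo, below.max() + radius)
    if above.size:
        hi = min(hi, above.min() - radius)
    return (lo + hi) / 2


def max_error(radius, alive=None):
    """Worst-case decoding error over interior test points (edges excluded)."""
    xs = np.linspace(0.1005, 0.8995, 800)
    return max(abs(decode(encode(x, radius), radius, alive) - x) for x in xs)


if __name__ == "__main__":
    local, coarse = 0.05, 0.125  # non-overlapping vs. overlapping fields
    print(max_error(local))      # just under d/2 = 0.05
    print(max_error(coarse))     # just under d/4 = 0.025 with the same 11 units

    damaged = np.ones(CENTERS.shape, dtype=bool)
    damaged[5] = False           # knock out the unit centered at x = 0.5
    print(max_error(coarse, damaged))                  # just under 0.05; every x still decodes
    print(decode(encode(0.5, local), local, damaged))  # None: the local code loses x = 0.5
```

The resolution gain here comes from counting field edges. Each unit contributes two edges, and when those edges interleave (as with a radius of 0.125 and a spacing of 0.1), the line splits into cells half as wide as with non-overlapping fields. In one dimension, the number of edges does not grow with the radius. The sketch therefore illustrates the mechanism, not the report’s general efficiency derivation.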