Fixing the Reflection Problem in SVD-Based Alignment

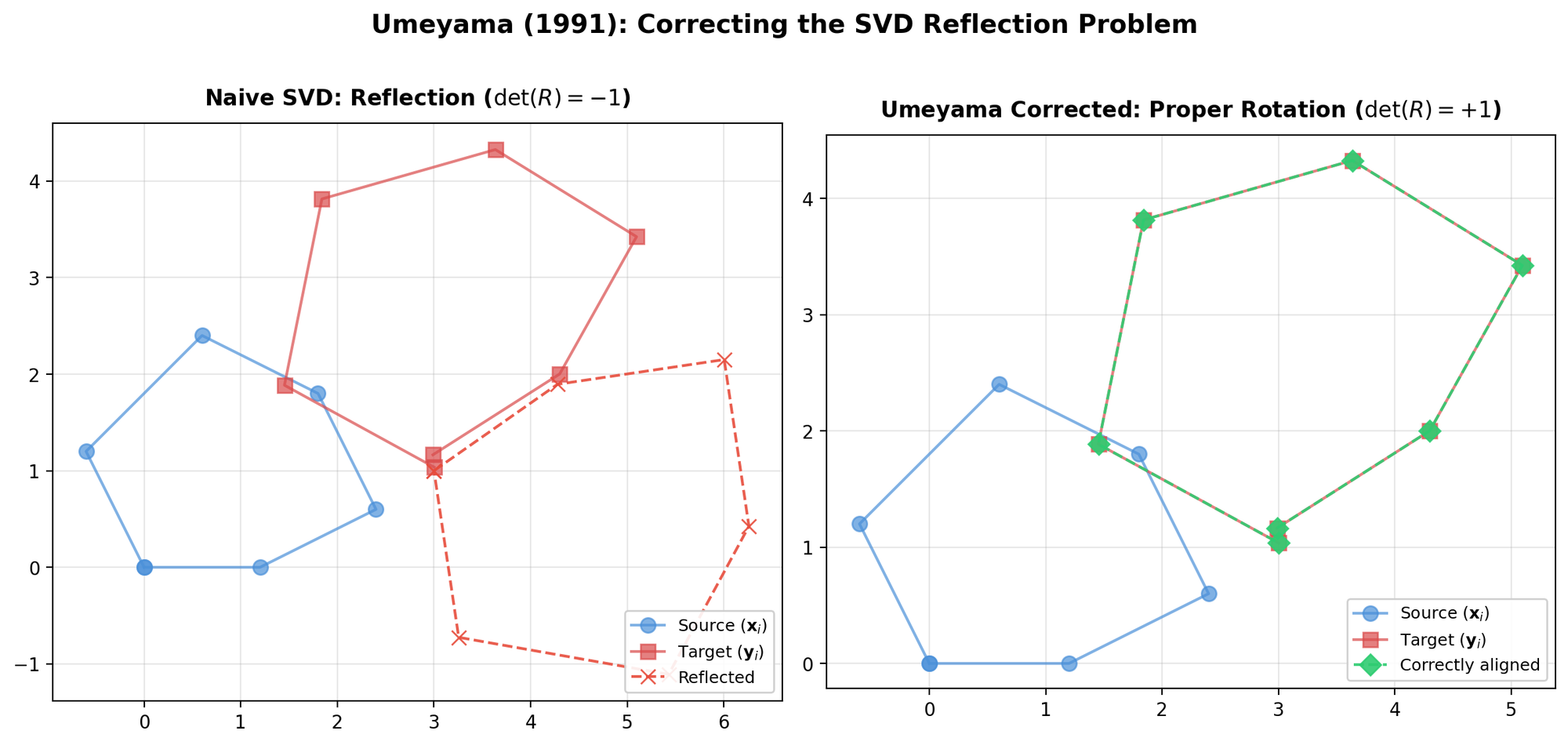

This Method paper addresses a specific failure mode in prior SVD-based solutions to the point set registration problem. Both Arun et al. (1987) and Horn, Hilden, and Negahdaripour (1988) presented SVD-based methods for finding the optimal rotation between two point patterns. (Note: this is a different paper from Horn’s 1987 quaternion method, which does not suffer from this issue.) These SVD-based methods can produce a reflection ($\det(R) = -1$) instead of a proper rotation when the data is severely corrupted. Umeyama provides a corrected formulation that always yields a proper rotation matrix.

The Similarity Transformation Problem

Given two point sets ${\mathbf{x}_i}$ and ${\mathbf{y}_i}$ ($i = 1, \ldots, n$) in $m$-dimensional space, find the similarity transformation parameters (rotation $R$, translation $\mathbf{t}$, and scale $c$) minimizing the mean squared error:

$$ e^2(R, \mathbf{t}, c) = \frac{1}{n} \sum_{i=1}^{n} \lVert \mathbf{y}_i - (cR\mathbf{x}_i + \mathbf{t}) \rVert^2 $$

This generalizes the Kabsch problem (rotation only) and the absolute orientation problem (rotation + translation + scale) to arbitrary dimensions $m$.

The Core Lemma: Corrected SVD Rotation

The key contribution is a lemma for finding the rotation $R$ minimizing $\lVert A - RB \rVert^2$. Given the SVD of $AB^T = UDV^T$ (with $d_1 \geq d_2 \geq \cdots \geq d_m \geq 0$), define the correction matrix:

$$ S = \begin{cases} I & \text{if } \det(AB^T) \geq 0 \\ \operatorname{diag}(1, 1, \ldots, 1, -1) & \text{if } \det(AB^T) < 0 \end{cases} $$

The minimum value is:

$$ \min_{R} \lVert A - RB \rVert^2 = \lVert A \rVert^2 + \lVert B \rVert^2 - 2\operatorname{tr}(DS) $$

When $\operatorname{rank}(AB^T) \geq m - 1$, the optimal rotation is uniquely determined as:

$$ R = USV^T $$

The critical insight is that when $\det(AB^T) = 0$ (i.e., $\operatorname{rank}(AB^T) = m - 1$), the matrix $S$ must instead be chosen based on $\det(U)\det(V)$:

$$ S = \begin{cases} I & \text{if } \det(U)\det(V) = 1 \\ \operatorname{diag}(1, 1, \ldots, 1, -1) & \text{if } \det(U)\det(V) = -1 \end{cases} $$

This handles the degenerate case where the sign of $\det(AB^T)$ is unreliable.

Complete Similarity Transformation Solution

Umeyama derives the full solution using centered coordinates and the covariance matrix $\Sigma_{xy} = \frac{1}{n} \sum_i (\mathbf{y}_i - \boldsymbol{\mu}_y)(\mathbf{x}_i - \boldsymbol{\mu}_x)^T$.

Given the SVD $\Sigma_{xy} = UDV^T$:

Rotation:

$$ R = USV^T $$

Scale:

$$ c = \frac{1}{\sigma_x^2} \operatorname{tr}(DS) $$

Translation:

$$ \mathbf{t} = \boldsymbol{\mu}_y - cR\boldsymbol{\mu}_x $$

Minimum error:

$$ \varepsilon^2 = \sigma_y^2 - \frac{\operatorname{tr}(DS)^2}{\sigma_x^2} $$

where $\sigma_x^2$ and $\sigma_y^2$ are the variances of the respective point sets around their centroids.

Why Prior Methods Fail

The methods of Arun et al. and Horn et al. use $R = UV^T$ directly from the SVD. This works when $\det(UV^T) = 1$ (proper rotation). When $\det(UV^T) = -1$, these methods either produce a reflection or apply an ad hoc correction (flipping the sign of the last column of $U$). Umeyama shows that the correct fix depends on $\det(\Sigma_{xy})$:

- If $\det(\Sigma_{xy}) \geq 0$: set $S = I$, so $R = UV^T$

- If $\det(\Sigma_{xy}) < 0$: set $S = \operatorname{diag}(1, \ldots, 1, -1)$, flipping the last singular value’s contribution

This distinction matters because corrupted data can make $\det(UV^T) = -1$ even when the true transformation is a proper rotation. Simply flipping a column of $U$ does not always yield the correct least-squares solution.

Generality

The formulation works for any dimension $m$, covering both 2D and 3D registration problems. The proof uses Lagrange multipliers with explicit enforcement of both orthogonality ($R^T R = I$) and the proper rotation constraint ($\det(R) = 1$), which prior methods enforced only partially.

Paper Information

Citation: Umeyama, S. (1991). Least-squares estimation of transformation parameters between two point patterns. IEEE Transactions on Pattern Analysis and Machine Intelligence, 13(4), 376-380. https://doi.org/10.1109/34.88573

Publication: IEEE TPAMI, 1991

Additional Resources:

- Kabsch Algorithm: NumPy, PyTorch, TensorFlow, and JAX (tutorial with implementations including the Kabsch-Umeyama scaling extension)

@article{umeyama1991least,

title={Least-squares estimation of transformation parameters between two point patterns},

author={Umeyama, Shinji},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

volume={13},

number={4},

pages={376--380},

year={1991},

publisher={IEEE},

doi={10.1109/34.88573}

}