SVD for 3D Point Set Registration

This Method paper presents a concise algorithm for finding the least-squares rotation and translation between two 3D point sets using the singular value decomposition (SVD) of a $3 \times 3$ cross-covariance matrix. The approach is closely related to the earlier Kabsch algorithm (1976), which used eigendecomposition, and was developed independently of Horn’s quaternion method (1987). The paper also identifies a reflection degeneracy that Umeyama later provided a complete fix for.

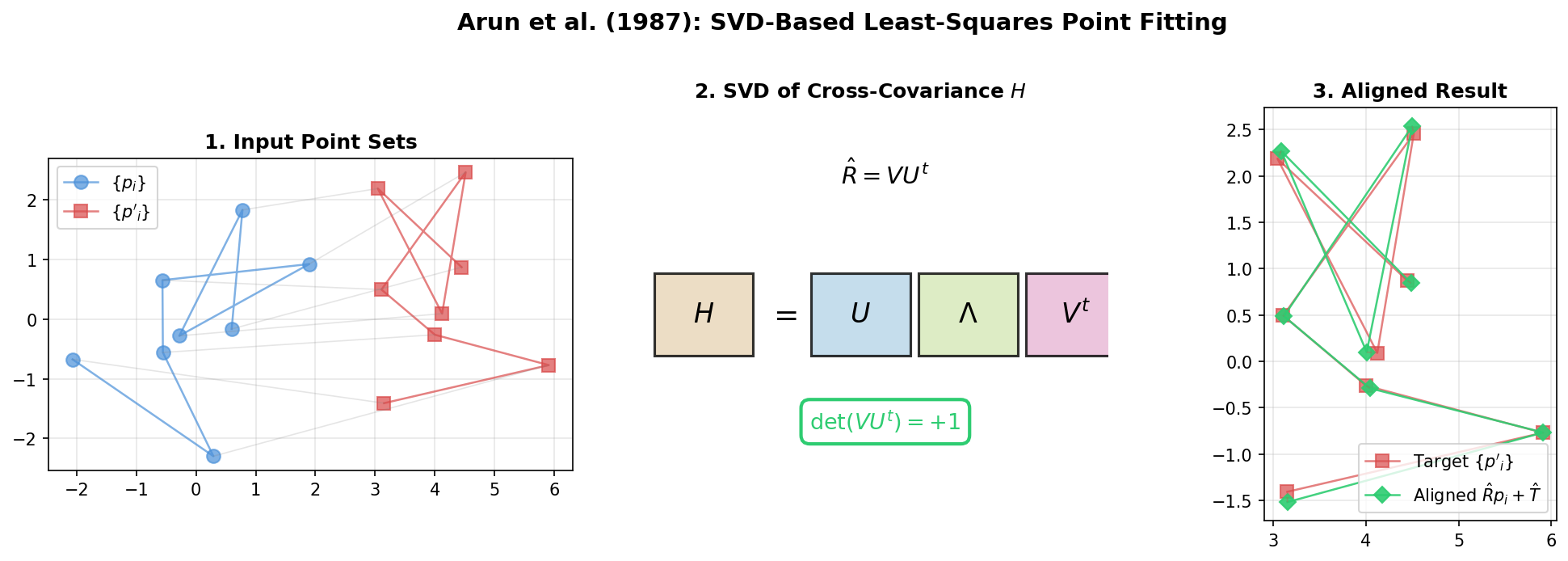

Problem Formulation

Given two 3D point sets ${p_i}$ and ${p’_i}$ ($i = 1, \ldots, N$) related by:

$$ p’_i = R p_i + T + N_i $$

where $R$ is a rotation matrix, $T$ is a translation vector, and $N_i$ is noise, find $\hat{R}$ and $\hat{T}$ minimizing:

$$ \Sigma^2 = \sum_{i=1}^{N} \lVert p’_i - (R p_i + T) \rVert^2 $$

Decoupling Translation and Rotation

The translation is eliminated by centering both point sets at their centroids $p$ and $p’$. Defining centered coordinates $q_i = p_i - p$ and $q’_i = p’_i - p’$, the problem reduces to:

$$ \Sigma^2 = \sum_{i=1}^{N} \lVert q’_i - R q_i \rVert^2 $$

Once $\hat{R}$ is found, the translation follows as $\hat{T} = p’ - \hat{R} p$.

The SVD Algorithm

The algorithm proceeds in five steps:

- Center both point sets by subtracting centroids

- Compute the $3 \times 3$ cross-covariance matrix: $H = \sum_{i=1}^{N} q_i q’^t_i$

- Compute the SVD: $H = U \Lambda V^t$

- Form the candidate rotation: $X = V U^t$

- Check $\det(X)$: if $+1$, then $\hat{R} = X$; if $-1$, the result is a reflection

The key insight is that minimizing $\Sigma^2$ is equivalent to maximizing $\operatorname{Trace}(RH)$. Using a lemma based on the Cauchy-Schwarz inequality, Arun et al. show that $X = VU^t$ maximizes this trace over all orthonormal matrices.

The Reflection Problem

When $\det(VU^t) = -1$, the SVD produces a reflection rather than a proper rotation. Arun et al. analyze three cases:

Noiseless, non-coplanar points: The SVD always gives a proper rotation ($\det = +1$). No issue arises.

Coplanar points (including $N = 3$): One singular value of $H$ is zero. Both a rotation and a reflection achieve $\Sigma^2 = 0$. The fix is to flip the sign of the column of $V$ corresponding to the zero singular value:

$$ V’ = [v_1, v_2, -v_3], \quad X’ = V’ U^t $$

Noisy, non-coplanar points with $\det = -1$: The paper acknowledges this case cannot be handled by the algorithm. The reflection genuinely minimizes $\Sigma^2$ over all orthonormal matrices, meaning no rotation achieves a lower error. The authors suggest this only occurs with very large noise and recommend RANSAC-like approaches.

This last case is precisely what Umeyama (1991) later resolved with a corrected formulation using a sign matrix $S$ conditioned on $\det(\Sigma_{xy})$.

Computational Comparison

The paper includes VAX 11/780 benchmarks comparing three methods:

| Points | SVD (ms) | Quaternion (ms) | Iterative (ms) |

|---|---|---|---|

| 3 | 54.6 | 26.6 | 126.8 |

| 11 | 37.0 | 41.0 | 105.2 |

| 30 | 44.2 | 48.3 | 111.0 |

The SVD and quaternion methods have comparable speed, both significantly faster than the iterative approach. SVD becomes faster than quaternion for larger point sets since its core computation operates on a $3 \times 3$ matrix regardless of $N$.

Paper Information

Citation: Arun, K. S., Huang, T. S., & Blostein, S. D. (1987). Least-Squares Fitting of Two 3-D Point Sets. IEEE Transactions on Pattern Analysis and Machine Intelligence, PAMI-9(5), 698-700. https://doi.org/10.1109/TPAMI.1987.4767965

Publication: IEEE TPAMI, 1987

Additional Resources:

- Kabsch Algorithm: NumPy, PyTorch, TensorFlow, and JAX (tutorial with differentiable implementations)

@article{arun1987least,

title={Least-Squares Fitting of Two 3-D Point Sets},

author={Arun, K. S. and Huang, T. S. and Blostein, S. D.},

journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},

volume={PAMI-9},

number={5},

pages={698--700},

year={1987},

publisher={IEEE},

doi={10.1109/TPAMI.1987.4767965}

}