Paper Information

Citation: Xu, Z., Li, J., Yang, Z. et al. (2022). SwinOCSR: end-to-end optical chemical structure recognition using a Swin Transformer. Journal of Cheminformatics, 14, 41. https://doi.org/10.1186/s13321-022-00624-5

Publication: Journal of Cheminformatics 2022


Contribution: Methodological Architecture and Datasets

This is a Methodological Paper with a significant Resource component.

- Method: It proposes a novel architecture (a Swin Transformer backbone) and a modified loss function (Multi-label Focal Loss) for Optical Chemical Structure Recognition (OCSR).

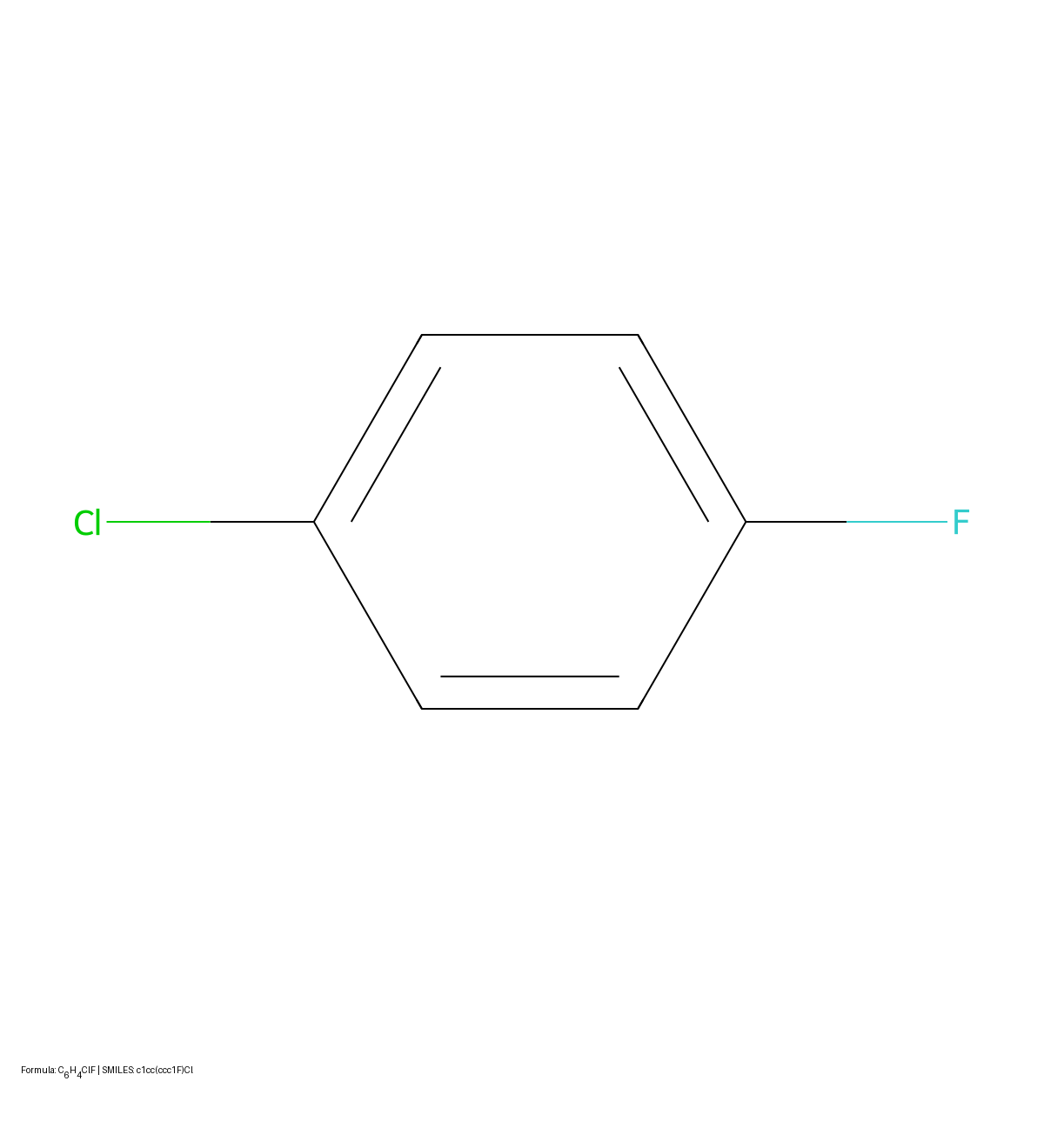

- Resource: It constructs a large-scale synthetic dataset of 5 million molecules, specifically designing it to cover complex cases like substituents and aromatic rings.

Motivation: Addressing Visual Context and Data Imbalance

- Problem: OCSR (converting images of chemical structures to SMILES) is difficult due to complex chemical patterns and long sequences. Existing deep learning methods (often CNN-based) struggle to achieve satisfactory recognition rates.

- Technical Gap: Standard CNN backbones (like ResNet or EfficientNet) focus on local feature extraction and miss global dependencies required for interpreting complex molecular diagrams.

- Data Imbalance: Chemical strings suffer from severe class imbalance (e.g., ‘C’ and ‘H’ are frequent; ‘Br’ or ‘Cl’ are rare), which causes standard Cross Entropy loss to underperform.

Core Innovation: Swin Transformers and Focal Loss

- Swin Transformer Backbone: SwinOCSR replaces the standard CNN backbone with a Swin Transformer, using shifted window attention to capture both local and global image features more effectively.

- Multi-label Focal Loss (MFL): The paper introduces a modified Focal Loss to OCSR, which the authors describe as the first explicit attempt to address token imbalance in OCSR. Focal Loss down-weights the loss on well-classified tokens (typically the frequent ones), so training concentrates on hard, often rare, tokens and counteracts the “long-tail” distribution of chemical elements. In the standard formulation, $p_t$ is the predicted probability of the true class, $\alpha_t$ is a class-balancing weight, and $\gamma \ge 0$ is a focusing parameter: $$ FL(p_t) = -\alpha_t (1 - p_t)^\gamma \log(p_t) $$

- Structured Synthetic Dataset: Creation of a dataset explicitly balanced across four structural categories: Kekule rings, Aromatic rings, and their combinations with substituents.
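To make the effect of the focal term concrete, here is a minimal sketch that evaluates the standard Focal Loss formula above for single predictions and compares it with cross-entropy. The values $\alpha_t = 1$ and $\gamma = 2$ are illustrative assumptions, not the settings reported for SwinOCSR.

```python
import math


def cross_entropy(p_t: float) -> float:
    """Standard cross-entropy for one prediction: -log(p_t)."""
    return -math.log(p_t)


def focal_loss(p_t: float, alpha_t: float = 1.0, gamma: float = 2.0) -> float:
    """Focal loss for one prediction; p_t is the probability given to the true class.

    alpha_t = 1.0 and gamma = 2.0 are illustrative defaults (assumed, not from the paper).
    """
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


if __name__ == "__main__":
    # Easy (high p_t) vs. hard (low p_t) predictions: the modulating factor
    # (1 - p_t)^gamma shrinks the loss much more for the easy case.
    for p_t in (0.95, 0.5, 0.1):
        ce, fl = cross_entropy(p_t), focal_loss(p_t)
        print(f"p_t={p_t:.2f}  CE={ce:.4f}  FL={fl:.4f}  FL/CE={fl / ce:.4f}")
```

With $\alpha_t = 1$ the ratio FL/CE is exactly $(1 - p_t)^\gamma$, so an easy token at $p_t = 0.95$ keeps only 0.25% of its cross-entropy loss while a hard token at $p_t = 0.1$ keeps 81%. Setting $\gamma = 0$ and $\alpha_t = 1$ recovers plain cross-entropy.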

Experimental Setup and Baselines

- Backbone Comparison: The authors benchmarked SwinOCSR against the backbones of leading competitors: ResNet-50 (used in Image2SMILES) and EfficientNet-B3 (used in DECIMER 1.0).

- Loss Function Ablation: They compared the performance of standard Cross Entropy (CE) loss against their proposed Multi-label Focal Loss (MFL).

- Category Stress Test: Performance was evaluated separately on molecules with/without substituents and with/without aromaticity to test robustness.

- Real-world Evaluation: The model was tested on 100 images manually extracted from the literature (with manually labeled SMILES), and separately on 100 CDK-generated images from those same SMILES, to measure the domain gap between synthetic and real-world data.

Results and Limitations

- Synthetic test set performance: With Multi-label Focal Loss (MFL), SwinOCSR achieved 98.58% accuracy on the synthetic test set, compared to 97.36% with standard CE loss. Both ResNet-50 (89.17%) and EfficientNet-B3 (86.70%) backbones scored lower when using CE loss (Table 3).

- Handling of long sequences: The model maintained high accuracy (94.76%) even on very long DeepSMILES strings (76-100 characters), indicating effective global feature extraction.

- Per-category results: Performance was consistent across molecule categories: Category 1 (Kekule, 98.20%), Category 2 (Aromatic, 98.46%), Category 3 (Kekule + Substituents, 98.76%), Category 4 (Aromatic + Substituents, 98.89%). The model performed slightly better on molecules with substituents and aromatic rings.

- Domain shift: While performance on synthetic data was strong, accuracy dropped to 25% on 100 real-world literature images. On 100 CDK-generated images of the same SMILES strings, accuracy was 94%, indicating that the gap stems from differences between real-world and CDK-rendered images rather than from the molecules themselves. The authors attribute this to noise, low resolution, and variations such as condensed structural formulas and abbreviations.

Reproducibility Details

Data

- Source: The first 8.5 million structures from PubChem were downloaded, yielding ~6.9 million unique SMILES.

- Generation Pipeline:

  - Tools: CDK (Chemistry Development Kit) for image rendering; RDKit for SMILES canonicalization.

  - Augmentation: To ensure diversity, the dataset was split into 4 categories (1.25M each): (1) Kekule, (2) Aromatic, (3) Kekule + Substituents, (4) Aromatic + Substituents. Substituents were randomly added from a list of 224 common patent substituents.

  - Preprocessing: Images were rendered as binary, resized to 224 × 224, and copied to 3 channels (RGB simulation).
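The preprocessing step can be sketched in plain Python as below. This is an illustration under stated assumptions, not the SwinOCSR pipeline: the input is assumed to be a grayscale image given as rows of 0-255 values, the binarization threshold of 128 and the nearest-neighbour resize are hypothetical choices, and the real pipeline renders with CDK and would typically use an imaging library.

```python
from typing import List

Gray = List[List[int]]        # rows of 0-255 grayscale values (assumed input format)
RGB = List[List[List[int]]]   # rows of [r, g, b] pixels


def binarize(img: Gray, threshold: int = 128) -> Gray:
    """Map each pixel to 0 (ink) or 255 (background); the threshold is an assumption."""
    return [[255 if px >= threshold else 0 for px in row] for row in img]


def resize_nearest(img: Gray, size: int = 224) -> Gray:
    """Nearest-neighbour resize to size x size (interpolation method assumed)."""
    h, w = len(img), len(img[0])
    return [[img[r * h // size][c * w // size] for c in range(size)] for r in range(size)]


def to_three_channels(img: Gray) -> RGB:
    """Copy the single channel into R, G and B so a 3-channel backbone accepts it."""
    return [[[px, px, px] for px in row] for row in img]


def preprocess(img: Gray, size: int = 224) -> RGB:
    """Binarize, resize to size x size, then replicate to 3 channels."""
    return to_three_channels(resize_nearest(binarize(img), size))


if __name__ == "__main__":
    # Hypothetical 300 x 400 gradient image standing in for a rendered molecule.
    demo = [[(r + c) % 256 for c in range(400)] for r in range(300)]
    out = preprocess(demo)
    print(len(out), len(out[0]), len(out[0][0]))
```

Nearest-neighbour sampling keeps the image strictly binary after resizing; an interpolating resize would reintroduce gray values unless the image were re-thresholded afterwards.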

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Training | Synthetic (PubChem-derived) | 4,500,000 | 18:1:1 split (Train/Val/Test) |
| Validation | Synthetic (PubChem-derived) | 250,000 | |
| Test | Synthetic (PubChem-derived) | 250,000 | |
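The 18:1:1 ratio in the table corresponds to giving validation and test one twentieth of the data each. A minimal sketch of such a split is below; the shuffle and fixed seed are assumptions for illustration, since how the authors assigned molecules to each split is not described here.

```python
import random
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def split_sizes(n: int) -> Tuple[int, int, int]:
    """Train/val/test sizes for an 18:1:1 ratio (val and test each get n // 20)."""
    n_val = n_test = n // 20
    return n - n_val - n_test, n_val, n_test


def split_18_1_1(items: Sequence[T], seed: int = 0) -> Tuple[List[T], List[T], List[T]]:
    """Shuffle a copy of items with a fixed seed (assumed), then cut it 18:1:1."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_train, n_val, _ = split_sizes(len(shuffled))
    return (shuffled[:n_train],
            shuffled[n_train:n_train + n_val],
            shuffled[n_train + n_val:])


if __name__ == "__main__":
    print(split_sizes(5_000_000))
    train, val, test = split_18_1_1(range(2000))
    print(len(train), len(val), len(test))
```

`split_sizes(5_000_000)` returns `(4500000, 250000, 250000)`, matching the table without materializing the dataset.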

Algorithms

- Loss Function: Multi-label Focal Loss (MFL). The single-label classification task was cast as multi-label to apply Focal Loss, using a sigmoid activation on logits.

- Optimization:

  - Optimizer: Adam with an initial learning rate of 5e-4.

  - Schedulers: Cosine decay for the Swin Transformer backbone; step decay for the Transformer encoder/decoder.

  - Regularization: Dropout rate of 0.1.

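Casting token prediction as multi-label means each vocabulary entry gets its own sigmoid and a binary focal term, with the true token as the only positive label. The sketch below illustrates that idea for a sequence of token positions. The weights $\alpha = 0.25$, $\gamma = 2$, summing over the vocabulary, and averaging over positions are assumptions borrowed from the common sigmoid focal loss convention, not confirmed details of MFL.

```python
import math
from typing import Sequence


def sigmoid(x: float) -> float:
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def multilabel_focal_loss(logits: Sequence[Sequence[float]], targets: Sequence[int],
                          alpha: float = 0.25, gamma: float = 2.0,
                          eps: float = 1e-12) -> float:
    """Sigmoid focal loss with one-hot targets (alpha, gamma and reduction assumed).

    logits[i] holds one score per vocabulary token at position i;
    targets[i] is the index of the true token at that position.
    """
    total = 0.0
    for row, target in zip(logits, targets):
        for k, z in enumerate(row):
            p = sigmoid(z)
            if k == target:
                # Positive label: down-weight when p is already close to 1.
                total += -alpha * (1.0 - p) ** gamma * math.log(max(p, eps))
            else:
                # Negative label: down-weight when p is already close to 0.
                total += -(1.0 - alpha) * p ** gamma * math.log(max(1.0 - p, eps))
    return total / len(targets)


if __name__ == "__main__":
    good = [[5.0, -5.0, -5.0]]   # confident and correct for target 0
    bad = [[-5.0, 5.0, -5.0]]    # confident and wrong for target 0
    print(multilabel_focal_loss(good, [0]), multilabel_focal_loss(bad, [0]))
```

With `alpha=0.5` and `gamma=0.0`, each per-class term reduces to half the ordinary binary cross-entropy, which is a quick sanity check on the implementation.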

Models

- Backbone (Encoder 1): Swin Transformer.

  - Patch size: $4 \times 4$.

  - Linear embedding dimension: 192.

  - Structure: 4 stages with Swin Transformer Blocks (Window MSA + Shifted Window MSA).

  - Output: Flattened patch sequence $S_b$.

- Transformer Encoder (Encoder 2): 6 standard Transformer encoder layers. Uses Positional Embedding + Multi-Head Attention + MLP.

- Transformer Decoder: 6 standard Transformer decoder layers. Uses Masked Multi-Head Attention (to prevent look-ahead) + Multi-Head Attention (connecting to encoder output $S_e$).

- Tokenization: DeepSMILES format (syntactically more robust than SMILES). Vocabulary size: 76 tokens, one per unique character found in the dataset. Embedding dimension: 256.
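The backbone bullets can be made concrete with two small helpers. The first computes token-grid shapes per stage, assuming the standard Swin design in which each stage after the first applies patch merging (halving height and width, doubling channels). The second shows window partitioning and the cyclic shift used by Shifted Window MSA on a toy grid. The toy 4 × 4 grid, window size 2, and shift 1 are hypothetical; the standard Swin window size at 224 × 224 input is 7 with a shift of 3, which is assumed rather than confirmed for SwinOCSR. The attention mask Swin applies to wrapped-around regions is omitted.

```python
from typing import List, Tuple

Grid = List[List[int]]


def swin_stage_shapes(image_size: int = 224, patch_size: int = 4,
                      embed_dim: int = 192, num_stages: int = 4) -> List[Tuple[int, int, int]]:
    """(height, width, channels) of the token grid after each stage (standard Swin assumed)."""
    side, dim = image_size // patch_size, embed_dim
    shapes = []
    for stage in range(num_stages):
        if stage > 0:
            side //= 2   # patch merging: each 2x2 group of tokens becomes one token
            dim *= 2     # ... and a linear layer doubles the channel count
        shapes.append((side, side, dim))
    return shapes


def cyclic_shift(grid: Grid, shift: int) -> Grid:
    """Roll a square grid up and left by `shift`, like torch.roll(x, (-shift, -shift))."""
    n = len(grid)
    return [[grid[(r + shift) % n][(c + shift) % n] for c in range(n)] for r in range(n)]


def window_partition(grid: Grid, window: int) -> List[Grid]:
    """Split a square grid into non-overlapping window x window blocks, row-major."""
    n = len(grid)
    return [[row[c:c + window] for row in grid[r:r + window]]
            for r in range(0, n, window) for c in range(0, n, window)]


if __name__ == "__main__":
    print(swin_stage_shapes())
    toy = [[4 * r + c for c in range(4)] for r in range(4)]   # token ids 0..15
    print(window_partition(toy, 2)[0])                         # regular window
    print(window_partition(cyclic_shift(toy, 1), 2)[0])        # shifted window
```

With the listed settings the stages produce 56 × 56 × 192, 28 × 28 × 384, 14 × 14 × 768 and 7 × 7 × 1536 grids. On the toy grid, the first regular window contains tokens 0, 1, 4, 5, while the first shifted window contains 5, 6, 9, 10, which came from four different regular windows; this is how shifted windows let information cross window boundaries.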

Evaluation

- Metrics: Accuracy (Exact Match), Tanimoto Similarity (PubChem fingerprints), BLEU, ROUGE.

| Metric | SwinOCSR (CE) | SwinOCSR (MFL) | ResNet-50 (CE) | EfficientNet-B3 (CE) |
|---|---|---|---|---|
| Accuracy | 97.36% | 98.58% | 89.17% | 86.70% |
| Tanimoto | 99.65% | 99.77% | 98.79% | 98.46% |
| BLEU | 99.46% | 99.59% | 98.62% | 98.37% |
| ROUGE | 99.64% | 99.78% | 98.87% | 98.66% |
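The two chemistry-relevant metrics can be sketched as follows. Exact-match accuracy is the fraction of predicted strings identical to the reference; Tanimoto similarity is the intersection-over-union of two fingerprints' on-bits. Representing fingerprints as Python sets of bit indices is an assumption for illustration (the paper uses PubChem fingerprints, which this sketch does not compute), and whether strings were canonicalized before comparison is not stated here, so the comparison below is literal.

```python
from typing import Sequence, Set


def exact_match_accuracy(predictions: Sequence[str], references: Sequence[str]) -> float:
    """Fraction of predicted strings identical to their reference string."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    hits = sum(p == r for p, r in zip(predictions, references))
    return hits / len(references)


def tanimoto(bits_a: Set[int], bits_b: Set[int]) -> float:
    """Tanimoto similarity between two sets of on-bit indices: |A & B| / |A | B|."""
    union = bits_a | bits_b
    if not union:
        return 1.0  # convention assumed here: two empty fingerprints count as identical
    return len(bits_a & bits_b) / len(union)


if __name__ == "__main__":
    preds = ["CC=O", "CCN", "CCC"]   # toy strings, not data from the paper
    refs = ["CC=O", "CCO", "CCC"]
    print(exact_match_accuracy(preds, refs))
    print(tanimoto({1, 2, 3, 4}, {2, 3, 4, 5}))
```

Because a single wrong character fails exact match but may leave most fingerprint bits unchanged, Tanimoto scores sit well above accuracy in the table, especially for the weaker backbones.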

Hardware

- GPU: Trained on NVIDIA Tesla V100-PCIE.

- Training Length: 30 epochs.

- Batch Size: 256 images ($224 \times 224$ pixels).
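The two learning-rate schedules listed under Algorithms can be illustrated over the 30-epoch run. This sketch uses the reported initial rate of 5e-4 with closed-form cosine and step decay (the same formulas PyTorch's `CosineAnnealingLR` and `StepLR` follow when stepped once per epoch). The cosine floor `min_lr = 0`, the step size of 10 epochs, and the decay factor of 0.5 are hypothetical, since those settings are not given here.

```python
import math

BASE_LR = 5e-4      # initial Adam learning rate reported above
TOTAL_EPOCHS = 30   # training length reported above


def cosine_lr(epoch: int, base_lr: float = BASE_LR, total_epochs: int = TOTAL_EPOCHS,
              min_lr: float = 0.0) -> float:
    """Cosine decay from base_lr at epoch 0 to min_lr at total_epochs (min_lr assumed)."""
    progress = min(epoch, total_epochs) / total_epochs
    return min_lr + 0.5 * (base_lr - min_lr) * (1.0 + math.cos(math.pi * progress))


def step_lr(epoch: int, base_lr: float = BASE_LR, step_size: int = 10,
            gamma: float = 0.5) -> float:
    """Multiply the rate by gamma every step_size epochs (both values hypothetical)."""
    return base_lr * gamma ** (epoch // step_size)


if __name__ == "__main__":
    # Backbone uses cosine decay; Transformer encoder/decoder uses step decay.
    for epoch in (0, 10, 20, 29):
        print(f"epoch {epoch:2d}: backbone {cosine_lr(epoch):.2e}  enc/dec {step_lr(epoch):.2e}")
```

Cosine decay lowers the backbone's rate smoothly toward zero by the final epoch, while step decay holds the encoder/decoder rate constant within each block of epochs and cuts it in discrete jumps.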

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| SwinOCSR | Code + Data | Unknown | Official implementation with dataset and trained models |