Paper Information

Citation: Chang, Q., Chen, M., Pi, C., Hu, P., Zhang, Z., Ma, J., Du, J., Yin, B., & Hu, J. (2025). RFL: Simplifying Chemical Structure Recognition with Ring-Free Language. In Proceedings of the AAAI Conference on Artificial Intelligence (AAAI 2025). https://doi.org/10.48550/arXiv.2412.07594

Publication: AAAI 2025 (Oral)

Methodological Contribution

This is a Methodological paper ($\Psi_{\text{Method}}$). It introduces a novel representation system (Ring-Free Language) and a specialized neural architecture (Molecular Skeleton Decoder) designed to solve specific limitations in converting 2D images to 1D chemical strings. The paper validates this method through direct comparison with existing baselines and ablation studies.

Motivation: Limitations of 1D Serialization

Current Optical Chemical Structure Recognition (OCSR) methods typically rely on “unstructured modeling,” where 2D molecular graphs are flattened into 1D strings like SMILES or SSML. While simple, these linear formats struggle to explicitly capture complex spatial relationships, particularly in molecules with multiple rings and branches. End-to-end models often fail to “understand” the graph structure when forced to predict these implicit 1D sequences, leading to error accumulation in complex scenarios.

Innovation: Ring-Free Language (RFL) and Molecular Skeleton Decoder (MSD)

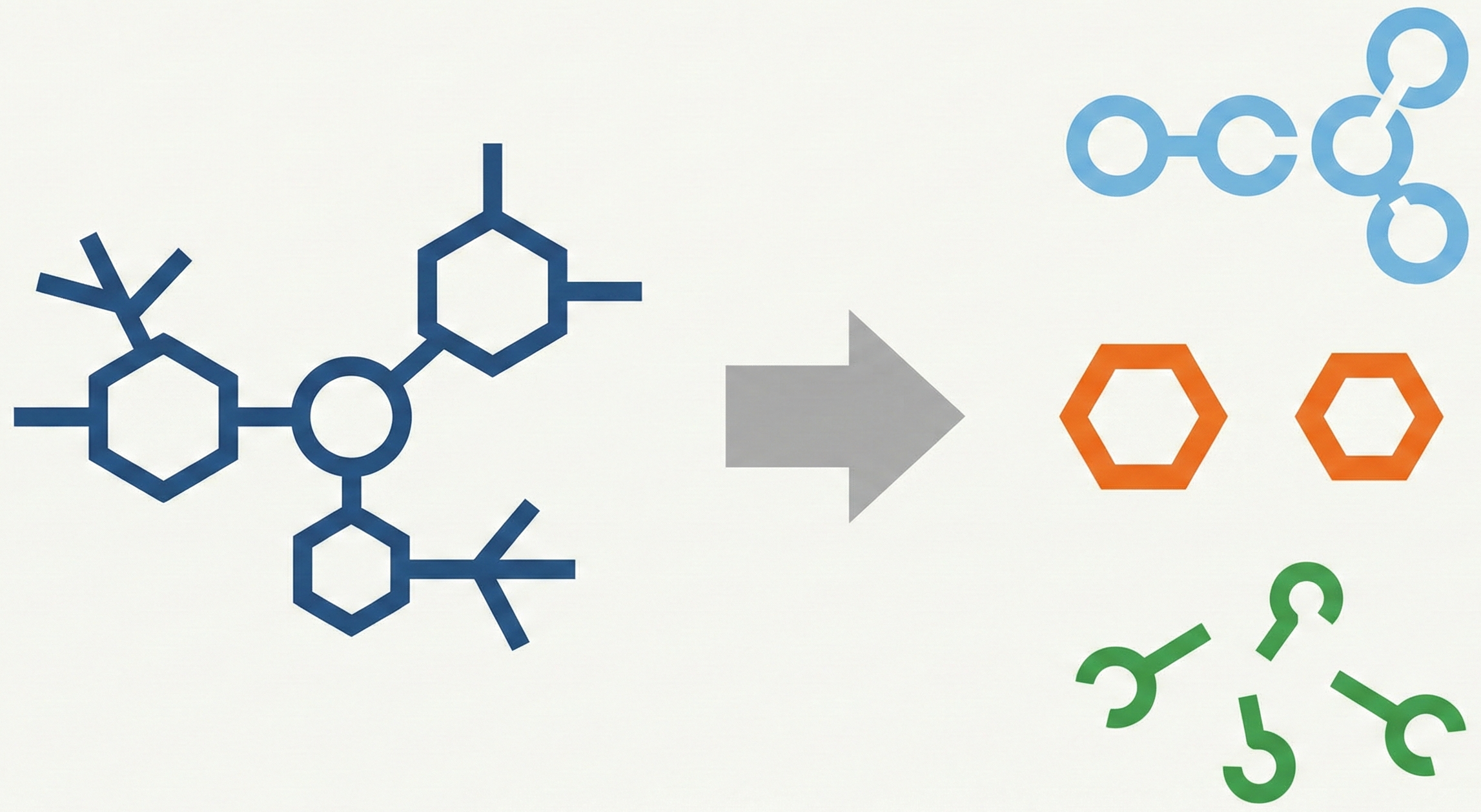

The authors propose two primary contributions to decouple spatial complexity:

- Ring-Free Language (RFL): A divide-and-conquer representation that splits a molecular graph $G$ into three explicit components: a molecular skeleton $\mathcal{S}$, individual ring structures $\mathcal{R}$, and branch information $\mathcal{F}$. This allows rings to be collapsed into “SuperAtoms” or “SuperBonds” during initial parsing.

- Molecular Skeleton Decoder (MSD): A hierarchical architecture that progressively predicts the skeleton first, then the individual rings (using SuperAtom features as conditions), and finally classifies the branch connections.

Methodology and Experiments

The method was evaluated on both handwritten and printed chemical structures against two baselines: DenseWAP (Zhang et al. 2018) and RCGD (Hu et al. 2023).

- Datasets:
  - EDU-CHEMC: ~49k handwritten samples (challenging, diverse styles)
  - Mini-CASIA-CSDB: ~89k printed samples (from ChEMBL)
  - Synthetic Complexity Dataset: A custom split of ChEMBL data grouped by structural complexity (atoms + bonds + rings) to test generalization

- Ablation Studies (Table 2, on EDU-CHEMC with MSD-DenseWAP): Without MSD or `[conn]`, EM=38.70%. Adding `[conn]` alone raised EM to 44.02%. Adding MSD alone raised EM to 52.76%. Both together achieved EM=64.96%, confirming each component’s contribution.

Outcomes and Conclusions

- New best results: MSD-RCGD achieved 65.39% EM on EDU-CHEMC (handwritten) and 95.23% EM on Mini-CASIA-CSDB (printed), outperforming the RCGD baseline (62.86% and 95.01%, respectively). MSD-DenseWAP surpassed the previous best on EDU-CHEMC by 2.06 percentage points of EM (64.92% vs. 62.86%).

- Consistent improvement across baselines: Applying MSD/RFL to DenseWAP improved its accuracy from 61.35% to 64.92% EM on EDU-CHEMC and from 92.09% to 94.10% EM on Mini-CASIA-CSDB. Together with the RCGD gains, this suggests the method is not tied to a particular base model.

- Complexity handling: When trained on low-complexity molecules only (levels 1-2), MSD-DenseWAP still recognized higher-complexity unseen structures, while standard DenseWAP could hardly recognize them at all (Figure 6 in the paper).

The authors describe this as the first end-to-end solution that decouples and models chemical structures in a structured form. Future work aims to extend structure-based modeling to other tasks such as tables, flowcharts, and diagrams.

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| RFL-MSD | Code | MIT | Official PyTorch implementation |

Reproducibility Details

Data

The authors utilized one handwritten and one printed dataset, plus a synthetic set for stress-testing complexity.

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Training/Test | EDU-CHEMC | 48,998 Train / 2,992 Test | Handwritten images from educational scenarios |
| Training/Test | Mini-CASIA-CSDB | 89,023 Train / 8,287 Test | Printed images rendered from ChEMBL using RDKit |
| Generalization | ChEMBL Subset | 5 levels of complexity | Custom split based on the score $N_{atom} + N_{bond} + 12 \times N_{ring}$ |
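To make the complexity score in the last table row concrete, here is a minimal Python sketch of the formula. The function name is hypothetical, and counting only heavy atoms (hydrogens omitted) is an assumption of this example; the thresholds that map scores to the five levels are not given here, so none are modeled.

```python
def complexity_score(n_atoms: int, n_bonds: int, n_rings: int) -> int:
    """Score = N_atom + N_bond + 12 * N_ring, as quoted in the dataset table."""
    if min(n_atoms, n_bonds, n_rings) < 0:
        raise ValueError("counts must be non-negative")
    return n_atoms + n_bonds + 12 * n_rings


# Heavy-atom counts for two familiar molecules (assumption: hydrogens omitted).
benzene = complexity_score(n_atoms=6, n_bonds=6, n_rings=1)        # 6 + 6 + 12
naphthalene = complexity_score(n_atoms=10, n_bonds=11, n_rings=2)  # 10 + 11 + 24
print(benzene, naphthalene)
```

The weight of 12 means a single ring contributes as much as a six-membered ring's atoms and bonds combined, so ring count dominates the score for polycyclic molecules.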

Algorithms

RFL Splitting (Encoding):

- Detect Rings: Use DFS to find all non-nested rings $\mathcal{R}$.
- Determine Adjacency ($\gamma$): Calculate shared edges between rings.
- Merge:
  - If $\gamma(r_i) = 0$ (isolated), merge ring into a SuperAtom node.
  - If $\gamma(r_i) > 0$ (adjacent), merge ring into a SuperBond edge.
- Update: Record connection info in $\mathcal{F}$ and remove ring details from the main graph to form Skeleton $\mathcal{S}$.
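The adjacency and merge-decision steps above can be sketched in plain Python. This is an illustration under stated assumptions, not the authors' implementation: rings are taken as already detected (the DFS step is skipped), $\gamma$ is read as the number of edges a ring shares with other rings, and the function names are hypothetical. The sketch only labels each ring in a single pass; it does not rewrite the graph or record $\mathcal{F}$.

```python
from itertools import combinations


def ring_edges(ring):
    """Return the undirected edges of a ring given as an ordered atom cycle."""
    n = len(ring)
    return {frozenset((ring[i], ring[(i + 1) % n])) for i in range(n)}


def classify_rings(rings):
    """Label each ring SuperAtom (gamma == 0) or SuperBond (gamma > 0).

    Assumption: gamma counts the edges a ring shares with any other ring.
    """
    edges = [ring_edges(r) for r in rings]
    gamma = [0] * len(rings)
    for i, j in combinations(range(len(rings)), 2):
        shared = len(edges[i] & edges[j])
        gamma[i] += shared
        gamma[j] += shared
    labels = ["SuperAtom" if g == 0 else "SuperBond" for g in gamma]
    return labels, gamma


# A fused pair of six-membered rings sharing the edge 4-5, plus one isolated ring.
rings = [
    [0, 1, 2, 3, 4, 5],
    [4, 5, 6, 7, 8, 9],
    [10, 11, 12, 13, 14, 15],
]
labels, gamma = classify_rings(rings)
print(labels, gamma)
```

Here the two fused rings each share one edge and are marked as SuperBond candidates, while the isolated ring collapses to a SuperAtom.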

MSD Decoding:

- Hierarchical Prediction: The model predicts the Skeleton $\mathcal{S}$ first.

- Contextual Ring Prediction: When a SuperAtom/Bond token is predicted, its hidden state $f^s$ is stored. After the skeleton is finished, $f^s$ is used as a condition to autoregressively decode the specific ring structure.

- Token `[conn]`: A special token separates connected ring bonds from unconnected ones to sparsify the branch classification task.
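The control flow of the two-stage decoding can be illustrated with stub decoders. Everything below is a toy sketch: the token names `<SuperAtom>`, `<SuperBond>`, and `<eos>`, the function signatures, and the scripted "model" are assumptions made for the example, and real hidden states would be GRU vectors, not tuples.

```python
SUPER_TOKENS = {"<SuperAtom>", "<SuperBond>"}  # hypothetical token names


def decode_hierarchically(skeleton_step, ring_decoder, max_len=50):
    """Decode the skeleton first, then one ring per stored super-token state."""
    skeleton, stored_states = [], []
    state = None
    for _ in range(max_len):
        token, state = skeleton_step(skeleton, state)
        if token == "<eos>":
            break
        skeleton.append(token)
        if token in SUPER_TOKENS:
            # Keep the hidden state f^s at the position of the super token.
            stored_states.append((len(skeleton) - 1, state))
    # Only after the skeleton is complete, decode each ring conditioned on f^s.
    rings = {pos: ring_decoder(f_s) for pos, f_s in stored_states}
    return skeleton, rings


def toy_skeleton_step(prefix, state):
    """Stand-in for one decoder step: replays a fixed script of tokens."""
    script = ["C", "<SuperAtom>", "O", "<eos>"]
    step = len(prefix)
    return script[step], ("h", step)


def toy_ring_decoder(f_s):
    """Stand-in ring decoder: pretends every ring is a six-carbon ring."""
    return ["C"] * 6


skeleton, rings = decode_hierarchically(toy_skeleton_step, toy_ring_decoder)
print(skeleton, rings)
```

Deferring ring decoding until the skeleton is finished keeps the skeleton sequence short, which is the point of collapsing rings into single tokens.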

Models

The architecture follows a standard Image-to-Sequence pattern but with a forked decoder.

- Encoder: DenseNet (Growth rate=24, Depth=32 per block)
- Decoder (MSD):
  - Core: GRU with Attention (Hidden dim=256, Embedding dim=256, Dropout=0.15)
  - Skeleton Module: Autoregressively predicts sequence tokens. Uses Maxout activation.
  - Branch Module: A binary classifier (MLP) taking concatenated features of skeleton bonds $f_{bs}$ and ring bonds $f_{br}$ to predict connectivity matrix $\mathcal{F}$.
- Loss Function: $\mathcal{O} = \lambda_1 \mathcal{L}_{ce} + \lambda_2 \mathcal{L}_{cls}$ (where $\lambda_1 = \lambda_2 = 1$)
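As a worked illustration of the combined objective, the sketch below evaluates $\mathcal{O}$ on a few hand-written probabilities in pure Python. It assumes $\mathcal{L}_{ce}$ is token-level cross-entropy and $\mathcal{L}_{cls}$ is binary cross-entropy over candidate connections, each averaged over its items; those choices and all function names are assumptions of the example, not confirmed details of the implementation.

```python
import math


def cross_entropy(probs, target_index):
    """Negative log-likelihood of the target token under a probability vector."""
    return -math.log(probs[target_index])


def binary_cross_entropy(p, label):
    """BCE for one predicted connection probability p and a 0/1 label."""
    return -(label * math.log(p) + (1 - label) * math.log(1 - p))


def total_loss(token_steps, branch_pairs, lambda_1=1.0, lambda_2=1.0):
    """O = lambda_1 * L_ce + lambda_2 * L_cls, with mean reduction (assumed)."""
    l_ce = sum(cross_entropy(p, t) for p, t in token_steps) / len(token_steps)
    l_cls = sum(binary_cross_entropy(p, y) for p, y in branch_pairs) / len(branch_pairs)
    return lambda_1 * l_ce + lambda_2 * l_cls


# Two decoding steps (probability vector, target index) and two candidate
# skeleton-bond/ring-bond pairs (predicted probability, 0/1 label).
token_steps = [([0.7, 0.2, 0.1], 0), ([0.1, 0.8, 0.1], 1)]
branch_pairs = [(0.9, 1), (0.2, 0)]
loss = total_loss(token_steps, branch_pairs)
print(loss)
```

With $\lambda_1 = \lambda_2 = 1$ the two terms are simply added, so neither the sequence loss nor the branch classifier is down-weighted.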

Evaluation

Both metrics measure whether the prediction exactly matches the ground truth: EM over the full predicted output, and Struct-EM over the chemical structure alone.

| Metric | Description | Notes |
|---|---|---|
| EM (Exact Match) | % of images where predicted graph exactly matches ground truth. | Primary metric |
| Struct-EM | % of correctly identified chemical structures (ignoring non-chemical text). | Auxiliary metric |
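Exact Match reduces to a simple count once predictions and references are in a comparable form. The sketch below assumes both sides have already been converted to the same canonical string representation, so plain equality stands in for graph equality; the function name and the sample strings are illustrative only.

```python
def exact_match(predictions, references):
    """Percentage of samples whose prediction equals the reference exactly."""
    if len(predictions) != len(references):
        raise ValueError("predictions and references must have the same length")
    if not references:
        raise ValueError("at least one sample is required")
    hits = sum(p == r for p, r in zip(predictions, references))
    return 100.0 * hits / len(references)


# Four toy samples; the last prediction differs from its reference.
predictions = ["c1ccccc1", "CCO", "CC(=O)O", "CCN"]
references = ["c1ccccc1", "CCO", "CC(=O)O", "CCC"]
em = exact_match(predictions, references)
print(em)
```

Because a single wrong token makes the whole sample count as a miss, EM is a strict metric and drops quickly as structures grow more complex.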

Hardware

- Compute: 4 × NVIDIA Tesla V100 (32 GB memory each)
- Training Configuration:
  - Batch size: 8 (Handwritten), 32 (Printed)
  - Epochs: 50
  - Optimizer: Adam ($lr=2\times10^{-4}$, decayed by 0.5 via MultiStepLR)
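The step-decay schedule can be reproduced without any framework. The sketch below uses the closed form of a multi-step schedule, where the rate is multiplied by the decay factor once for every milestone the epoch counter has reached. The milestone epochs `[20, 40]` are hypothetical placeholders, since the summary does not list them.

```python
from bisect import bisect_right


def lr_at_epoch(epoch, milestones, base_lr=2e-4, gamma=0.5):
    """Learning rate after multiplying by gamma at every milestone reached."""
    return base_lr * gamma ** bisect_right(sorted(milestones), epoch)


MILESTONES = [20, 40]  # hypothetical; the actual milestone epochs are not stated
schedule = [lr_at_epoch(epoch, MILESTONES) for epoch in range(50)]
print(schedule[0], schedule[20], schedule[40])
```

Under these placeholder milestones the rate halves twice over the 50 epochs, ending at a quarter of its initial value.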

Citation

@inproceedings{changRFLSimplifyingChemical2025,
  title = {RFL: Simplifying Chemical Structure Recognition with Ring-Free Language},
  shorttitle = {RFL},
  author = {Chang, Qikai and Chen, Mingjun and Pi, Changpeng and Hu, Pengfei and Zhang, Zhenrong and Ma, Jiefeng and Du, Jun and Yin, Baocai and Hu, Jinshui},
  year = {2025},
  booktitle = {Proceedings of the AAAI Conference on Artificial Intelligence},
  eprint = {2412.07594},
  primaryclass = {cs},
  doi = {10.48550/arXiv.2412.07594},
  archiveprefix = {arXiv}
}