Paper Information

Citation: Rajan, K., Brinkhaus, H.O., Zielesny, A., & Steinbeck, C. (2024). Advancements in hand-drawn chemical structure recognition through an enhanced DECIMER architecture. Journal of Cheminformatics, 16(1), 78. https://doi.org/10.1186/s13321-024-00872-7

Publication: Journal of Cheminformatics 2024


Method Contribution: Architectural Optimization

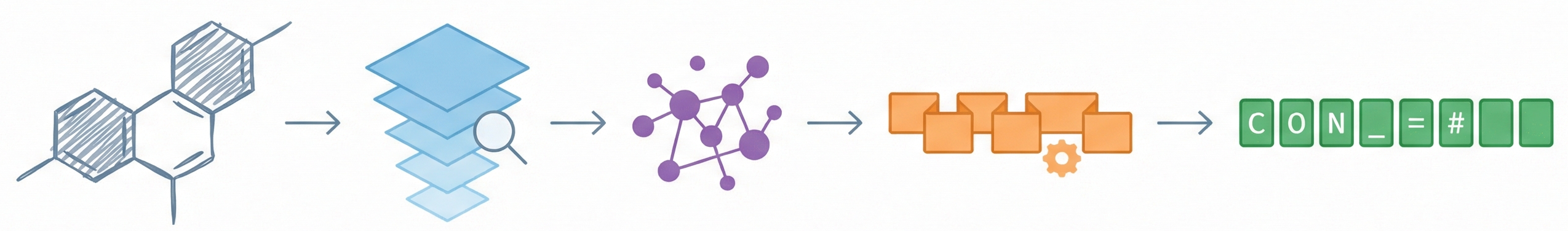

This is a Method paper. It proposes an enhanced neural network architecture (EfficientNetV2 + Transformer) specifically designed to solve the problem of recognizing hand-drawn chemical structures. The primary contribution is architectural optimization and a data-driven training strategy, validated through ablation studies (comparing encoders) and benchmarked against existing rule-based and deep learning tools.

Motivation: Digitizing “Dark” Chemical Data

Chemical information in legacy laboratory notebooks and modern tablet-based inputs often exists as hand-drawn sketches.

- Gap: Existing Optical Chemical Structure Recognition (OCSR) tools (particularly rule-based ones) lack robustness and fail when images have variability in style, line thickness, or noise.

- Need: There is a critical need for automated tools to digitize this “dark data” effectively to preserve it and make it machine-readable and searchable.

Core Innovation: Decoder-Only Design and Synthetic Scaling

The core novelty is the architectural enhancement and synthetic training strategy:

- Decoder-Only Transformer: Using only the decoder part of the Transformer (instead of a full encoder-decoder Transformer) improved average accuracy across OCSR benchmarks from 61.28% to 69.27% (Table 3 in the paper).

- EfficientNetV2 Integration: Replacing standard CNNs or EfficientNetV1 with EfficientNetV2-M provided better feature extraction and roughly 2x faster training.

- Scale of Synthetic Data: The authors demonstrate that scaling synthetic training data (up to 152 million images generated by RanDepict) directly correlates with improved generalization to real-world hand-drawn images, without ever training on real hand-drawn data.

Experimental Setup: Ablation and Real-World Baselines

- Model Selection (Ablation): Tested three architectures (EfficientNetV2-M + Full Transformer, EfficientNetV1-B7 + Decoder-only, EfficientNetV2-M + Decoder-only) on standard benchmarks (JPO, CLEF, USPTO, UOB).

- Data Scaling: Trained the best model on four progressively larger datasets (from 4M to 152M images) to measure performance gains.

- Real-World Benchmarking: Validated the final model on the DECIMER Hand-Drawn dataset (5,088 real images drawn by volunteers) and compared it against nine other tools (OSRA, MolVec, Img2Mol, MolScribe, etc.).

Results and Conclusions: Strong Accuracy on Hand-Drawn Scans

- Strong Performance: The final DECIMER model achieved 99.72% valid predictions and 73.25% exact accuracy on the hand-drawn benchmark. The next best non-DECIMER tool was MolGrapher at 10.81% accuracy, followed by MolScribe at 7.65%.

- Robustness: Deep learning methods outperform rule-based methods (which scored 3% or less accuracy) on hand-drawn data.

- Data Saturation: Quadrupling the dataset from 38M to 152M images yielded only marginal gains (about 3 percentage points in accuracy), suggesting current synthetic data strategies may be hitting a plateau.

Reproducibility

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| DECIMER Image Transformer (GitHub) | Code | MIT | Official TensorFlow implementation |
| Model Weights (Zenodo) | Model | Unknown | Pre-trained hand-drawn model weights |
| DECIMER PyPi Package | Code | MIT | Installable Python package |
| RanDepict (GitHub) | Code | MIT | Synthetic hand-drawn image generation toolkit |

Data

The model was trained entirely on synthetic data generated using the RanDepict toolkit. No real hand-drawn images were used for training.

| Dataset | Source | Molecules | Total Images | Notes |
|---|---|---|---|---|
| 1 | ChEMBL | 2,187,669 | 4,375,338 | 1 augmented + 1 clean per molecule |
| 2 | ChEMBL | 2,187,669 | 13,126,014 | 2 augmented + 4 clean per molecule |
| 3 | PubChem | 9,510,000 | 38,040,000 | 1 augmented + 3 clean per molecule |
| 4 | PubChem | 38,040,000 | 152,160,000 | 1 augmented + 3 clean per molecule |
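The image totals in the table follow from the per-molecule image counts. A quick arithmetic check in Python; the variable and function names are illustrative and not taken from the paper or from RanDepict:

```python
# Rows copied from the table above:
# (dataset id, molecules, augmented per molecule, clean per molecule, reported total)
DATASETS = [
    (1, 2_187_669, 1, 1, 4_375_338),
    (2, 2_187_669, 2, 4, 13_126_014),
    (3, 9_510_000, 1, 3, 38_040_000),
    (4, 38_040_000, 1, 3, 152_160_000),
]


def total_images(molecules, augmented, clean):
    """Images in a dataset: each molecule is depicted (augmented + clean) times."""
    return molecules * (augmented + clean)


for dataset_id, molecules, augmented, clean, reported in DATASETS:
    computed = total_images(molecules, augmented, clean)
    status = "ok" if computed == reported else "mismatch"
    print(f"Dataset {dataset_id}: {computed:,} images ({status})")
```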

A separate model selection experiment used a 1,024,000-molecule subset of ChEMBL to compare the three architectures (Table 1 in the paper). The DECIMER Hand-Drawn evaluation dataset consists of 5,088 real hand-drawn images from 23 volunteers.

Preprocessing:

- SMILES strings restricted to fewer than 300 characters.

- Images resized to $512 \times 512$.

- Images generated with and without “hand-drawn style” augmentations.
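The text side of this preprocessing can be sketched in a few lines: the length filter from the list above, plus the start/end/pad wrapping described under Algorithms. This is a minimal sketch, not DECIMER's tokenizer. The splitting rule (two-letter halogens kept whole, everything else one character per token) and the names `keep_smiles`, `tokenize`, and `pad` are assumptions made for illustration.

```python
import re

MAX_SMILES_CHARS = 300  # from the list above: keep SMILES shorter than 300 characters

# Simplified splitting rule (an assumption): "Br" and "Cl" stay whole, every other
# character (atom, bracket, bond symbol, ring digit) becomes its own token.
TOKEN_PATTERN = re.compile(r"Br|Cl|.")


def keep_smiles(smiles):
    """Length filter: True if the SMILES string is under the character limit."""
    return len(smiles) < MAX_SMILES_CHARS


def tokenize(smiles):
    """Split a SMILES string and wrap it in <start>/<end> markers."""
    return ["<start>"] + TOKEN_PATTERN.findall(smiles) + ["<end>"]


def pad(tokens, length):
    """Right-pad a token list with <pad> up to a fixed length."""
    if len(tokens) > length:
        raise ValueError("token list is longer than the target length")
    return tokens + ["<pad>"] * (length - len(tokens))


example = "CC(=O)Oc1ccccc1C(=O)O"  # aspirin
if keep_smiles(example):
    print(pad(tokenize(example), 26))
```

Joining the tokens between `<start>` and `<end>` reproduces the input string, which makes the rule easy to sanity-check on any SMILES.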

Algorithms

- Tokenization: SMILES split by heavy atoms, brackets, bond symbols, and special characters. Start `<start>` and end `<end>` tokens added; padded with `<pad>`.

- Optimization: Adam optimizer with a custom learning rate schedule (as specified in the original Transformer paper). A dropout rate of 0.1 was used.

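- Loss Function: Trained using focal loss to address class imbalance for rare tokens. The focal loss down-weights well-classified examples so that training concentrates on hard ones: $$ \text{FL}(p_{\text{t}}) = -\alpha_{\text{t}} (1 - p_{\text{t}})^\gamma \log(p_{\text{t}}) $$ Here $p_{\text{t}}$ is the predicted probability of the correct token, $\alpha_{\text{t}}$ is a class-weighting factor, and $\gamma$ is the focusing parameter.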

- Augmentations: RanDepict applied synthetic distortions to mimic handwriting (wobbly lines, variable thickness, etc.).
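Two of the items above reduce to short formulas: the focal loss and the learning rate schedule from the original Transformer paper. The sketch below evaluates both in plain Python. The values `alpha_t=0.25`, `gamma=2.0`, and `warmup_steps=4000` are the defaults from the focal loss and Transformer papers and are assumptions here, since this summary does not state the values used for DECIMER. `d_model=512` matches the embedding dimension listed under Models.

```python
import math


def focal_loss(p_t, alpha_t=0.25, gamma=2.0):
    """FL(p_t) = -alpha_t * (1 - p_t)^gamma * log(p_t) for a single token.

    p_t is the probability the model assigns to the correct token.
    alpha_t and gamma are assumed defaults, not values from the paper.
    """
    if not 0.0 < p_t <= 1.0:
        raise ValueError("p_t must lie in (0, 1]")
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


def transformer_lr(step, d_model=512, warmup_steps=4000):
    """Linear warmup followed by step^-0.5 decay (original Transformer schedule).

    warmup_steps=4000 is an assumption for DECIMER.
    """
    if step < 1:
        raise ValueError("step counts from 1")
    return d_model ** -0.5 * min(step ** -0.5, step * warmup_steps ** -1.5)


for p in (0.1, 0.5, 0.9):
    cross_entropy = -math.log(p)
    print(f"p_t={p}: cross-entropy={cross_entropy:.4f}, focal={focal_loss(p):.4f}")

for step in (1, 1000, 4000, 16000):
    print(f"step {step}: lr={transformer_lr(step):.6f}")
```

With these assumed values, the factor multiplying the cross-entropy term is 0.25 × 0.1² = 0.0025 at $p_{\text{t}} = 0.9$ and 0.25 × 0.9² = 0.2025 at $p_{\text{t}} = 0.1$, so confidently correct tokens contribute far less to the loss than poorly predicted ones.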

Models

The final architecture (Model 3) pairs a CNN image encoder with a Transformer decoder (no Transformer encoder stack):

- Encoder: EfficientNetV2-M (pretrained ImageNet backbone).
  - Input: $512 \times 512 \times 3$ image.
  - Output Features: $16 \times 16 \times 512$ (reshaped to sequence length 256, dimension 512).
  - Note: The final fully connected layer of the CNN is removed.

- Decoder: Transformer (Decoder-only).
  - Layers: 6
  - Attention Heads: 8
  - Embedding Dimension: 512
  - Output: Predicted SMILES string token by token.
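The shape bookkeeping between encoder and decoder can be traced without any deep learning library. The sketch below uses a zero-filled placeholder in place of the real CNN output. The total stride of 32 is inferred from 512 / 16, and the even split of the embedding across heads follows standard multi-head attention; both are assumptions rather than details confirmed by the paper, and the function names are illustrative.

```python
def feature_map_size(image_size, total_stride=32):
    """Spatial side length of the CNN feature map for a square input.

    total_stride=32 is inferred from 512 / 16 and is an assumption here.
    """
    if image_size % total_stride != 0:
        raise ValueError("image size must be divisible by the stride")
    return image_size // total_stride


def flatten_feature_map(feature_map):
    """Turn an H x W grid of feature vectors into a row-major sequence of H*W vectors."""
    return [vector for row in feature_map for vector in row]


side = feature_map_size(512)
channels = 512

# Zero-filled placeholder standing in for the encoder output.
feature_map = [[[0.0] * channels for _ in range(side)] for _ in range(side)]
sequence = flatten_feature_map(feature_map)

heads = 8
head_dim = channels // heads  # standard multi-head attention splits the embedding evenly

print(f"feature map: {side} x {side} x {channels}")
print(f"decoder memory: {len(sequence)} positions of dimension {len(sequence[0])}")
print(f"per-head dimension: {head_dim}")
```

Each of the 256 sequence positions corresponds to one cell of the 16 × 16 grid, which is what the decoder attends over while emitting SMILES tokens.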

Evaluation

Metrics used for evaluation:

- Valid Predictions (%): Percentage of outputs that are syntactically valid SMILES.

- Exact Match Accuracy (%): Percentage of predictions whose canonical SMILES string is identical to that of the ground truth.

- Tanimoto Similarity: Fingerprint similarity (PubChem fingerprints) between ground truth and prediction.
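Two of these metrics are simple to compute once canonical SMILES and fingerprints are available. The sketch below assumes both inputs have already been produced by a cheminformatics toolkit: SMILES lists are canonicalized, and fingerprints are given as sets of on-bit indices. The function names and the toy inputs are illustrative and are not real PubChem fingerprints.

```python
def exact_match_accuracy(predicted, reference):
    """Percentage of predictions whose canonical SMILES equals the reference.

    Both lists are assumed to be canonicalized already.
    """
    if len(predicted) != len(reference) or not reference:
        raise ValueError("need two non-empty lists of equal length")
    matches = sum(p == r for p, r in zip(predicted, reference))
    return 100.0 * matches / len(reference)


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity of two fingerprints given as collections of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = a | b
    if not union:
        return 1.0  # convention chosen here for two empty fingerprints
    return len(a & b) / len(union)


predicted = ["CCO", "c1ccccc1", "CCN"]
reference = ["CCO", "c1ccccc1", "CCC"]
print(f"exact match: {exact_match_accuracy(predicted, reference):.2f}%")

# Toy fingerprints: 2 shared bits out of 5 distinct bits.
print(f"Tanimoto: {tanimoto({1, 4, 7, 9}, {1, 4, 8}):.2f}")
```

Tanimoto similarity gives partial credit to near-miss predictions, which is why it is reported alongside the stricter exact-match accuracy.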

Data Scaling Results (Hand-Drawn Dataset, Table 4 in the paper):

| Dataset | Training Images | Valid Predictions | Exact Accuracy | Tanimoto |
|---|---|---|---|---|
| 1 (ChEMBL) | 4,375,338 | 96.21% | 5.09% | 0.490 |
| 2 (ChEMBL) | 13,126,014 | 97.41% | 26.08% | 0.690 |
| 3 (PubChem) | 38,040,000 | 99.67% | 70.34% | 0.939 |
| 4 (PubChem) | 152,160,000 | 99.72% | 73.25% | 0.942 |
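The diminishing return at the largest scale is easier to see as per-step differences. A small Python sketch that derives the data growth factor and the accuracy gain between consecutive datasets from the table above (names are illustrative):

```python
# (dataset id, training images, exact accuracy in %) copied from the table above
RESULTS = [
    (1, 4_375_338, 5.09),
    (2, 13_126_014, 26.08),
    (3, 38_040_000, 70.34),
    (4, 152_160_000, 73.25),
]


def scaling_steps(results):
    """For each consecutive pair: (from id, to id, data growth factor, accuracy gain in points)."""
    steps = []
    for (id_a, images_a, acc_a), (id_b, images_b, acc_b) in zip(results, results[1:]):
        steps.append((id_a, id_b, images_b / images_a, round(acc_b - acc_a, 2)))
    return steps


for id_a, id_b, growth, gain in scaling_steps(RESULTS):
    print(f"Dataset {id_a} -> {id_b}: {growth:.1f}x images, {gain:+.2f} points exact accuracy")
```

The step from Dataset 2 to Dataset 3 also switches the molecule source from ChEMBL to PubChem, so that gain cannot be attributed to image count alone.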

Comparison with Other Tools (Hand-Drawn Dataset, Table 5 in the paper):

| OCSR Tool | Method | Valid Predictions | Exact Accuracy | Tanimoto |
|---|---|---|---|---|
| DECIMER (Ours) | Deep Learning | 99.72% | 73.25% | 0.94 |
| DECIMER.ai | Deep Learning | 96.07% | 26.98% | 0.69 |
| MolGrapher | Deep Learning | 99.94% | 10.81% | 0.51 |
| MolScribe | Deep Learning | 95.66% | 7.65% | 0.59 |
| Img2Mol | Deep Learning | 98.96% | 5.25% | 0.52 |
| SwinOCSR | Deep Learning | 97.37% | 5.11% | 0.64 |
| ChemGrapher | Deep Learning | 69.56% | N/A | 0.09 |
| Imago | Rule-based | 43.14% | 2.99% | 0.22 |
| MolVec | Rule-based | 71.86% | 1.30% | 0.23 |
| OSRA | Rule-based | 54.66% | 0.57% | 0.17 |

Hardware

- Compute: Google Cloud TPU v4-128 pod slice.

- Training Time:
  - EfficientNetV2-M model trained ~2x faster than EfficientNetV1-B7.
  - Average training time per epoch: 34 minutes (for Model 3 on 1M dataset subset).

- Epochs: Models trained for 25 epochs.

Citation

@article{rajanAdvancementsHanddrawnChemical2024,
  title = {Advancements in Hand-Drawn Chemical Structure Recognition through an Enhanced {{DECIMER}} Architecture},
  author = {Rajan, Kohulan and Brinkhaus, Henning Otto and Zielesny, Achim and Steinbeck, Christoph},
  year = 2024,
  month = jul,
  journal = {Journal of Cheminformatics},
  volume = {16},
  number = {1},
  pages = {78},
  issn = {1758-2946},
  doi = {10.1186/s13321-024-00872-7}
}