Methodological Contribution: MOFFlow Architecture

This is a Methodological Paper ($\Psi_{\text{Method}}$).

It introduces MOFFlow, a generative architecture and training framework designed specifically for the structure prediction of Metal-Organic Frameworks (MOFs). The paper focuses on the algorithmic innovation of decomposing the problem into rigid-body assembly on a Riemannian manifold, validates this through comparison against existing baselines, and performs ablation studies to justify architectural choices. While it leverages the theory of flow matching, its primary contribution is the application-specific architecture and the handling of modular constraints.

Motivation: Scaling Limits of Atom-Level Generation

The primary motivation is to overcome the scalability and accuracy limitations of existing methods for MOF structure prediction.

- Computational Cost of DFT: Conventional approaches rely on ab initio calculations (DFT) combined with random search, which are computationally prohibitive for large, complex systems like MOFs.

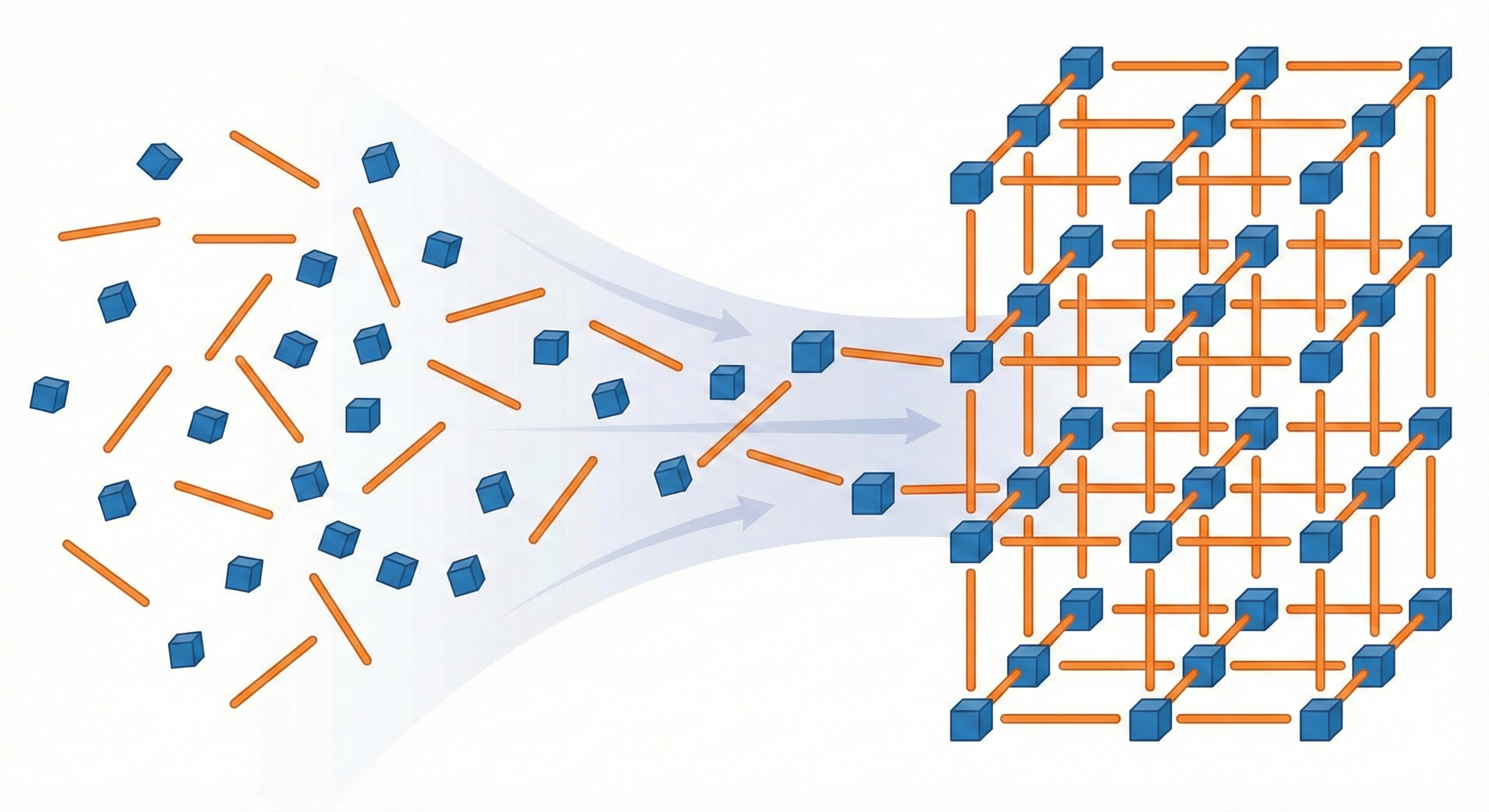

- Failure of General CSP: Existing deep generative models for general Crystal Structure Prediction (CSP) operate on an atom-by-atom basis. They fail to scale to MOFs, which often contain hundreds or thousands of atoms per unit cell, and do not exploit the inherent modular nature (building blocks) of MOFs.

- Tunability: MOFs have applications in carbon capture and drug delivery due to their tunable porosity, making automated design tools valuable.

Core Innovation: Rigid-Body Flow Matching on SE(3)

MOFFlow introduces a hierarchical, rigid-body flow matching framework tailored for MOFs.

- Rigid Body Decomposition: MOFFlow treats metal nodes and organic linkers as rigid bodies. This reduces the search space from $3N$ coordinates for $N$ atoms to $6M$ rotation and translation parameters for $M$ building blocks, with $M$ typically much smaller than $N$.

- Riemannian Flow Matching on $SE(3)$: It is the first end-to-end model to jointly generate block-level rotations ($SO(3)$), translations ($\mathbb{R}^3$), and lattice parameters using Riemannian flow matching.

- MOFAttention: A custom attention module designed to encode the geometric relationships between building blocks, lattice parameters, and rotational constraints.

- Constraint Handling: It incorporates domain knowledge by operating on a mean-free system for translation invariance and using canonicalized coordinates for rotation invariance.
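The rigid-body view above can be made concrete with a small sketch. It assumes NumPy and uses hypothetical helper names (`to_local_frame`, `assemble`, `degrees_of_freedom`) that are not taken from the MOFFlow code base. A block is centered and expressed in its PCA axes, then placed back in the cell with a rotation and a translation. The symmetry correction the paper applies to the PCA axes is not reproduced here; only right-handedness is enforced.

```python
import numpy as np

def to_local_frame(coords):
    """Center a block at its centroid and express it in its PCA axes."""
    centroid = coords.mean(axis=0)
    centered = coords - centroid
    # Rows of vt are the principal axes, sorted by decreasing variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    axes = vt.T.copy()               # columns are the principal axes
    if np.linalg.det(axes) < 0:      # keep a proper rotation (det = +1)
        axes[:, -1] *= -1.0
    local = centered @ axes          # coordinates in the local frame
    return local, axes, centroid

def assemble(local, rotation, translation):
    """Place a rigid block: rotate its local coordinates, then translate."""
    return local @ rotation.T + translation

def degrees_of_freedom(num_atoms, num_blocks):
    """Atom-level (3N) versus block-level (6M) search-space size."""
    return 3 * num_atoms, 6 * num_blocks

rng = np.random.default_rng(0)
block = rng.normal(size=(5, 3))      # toy block with 5 atoms
local, rotation, translation = to_local_frame(block)
rebuilt = assemble(local, rotation, translation)

# Illustrative sizes only: 10 blocks of 20 atoms each.
atom_dof, block_dof = degrees_of_freedom(num_atoms=200, num_blocks=10)
```

In this toy case the rotation and translation recovered from the block reproduce the original coordinates, which is the sense in which only $q$ and $\tau$ need to be predicted once the local block geometry is known.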

Experimental Setup and Baselines

The authors evaluated MOFFlow on structure prediction accuracy, physical property preservation, and scalability.

- Dataset: The Boyd et al. (2019) dataset consisting of 324,426 hypothetical MOF structures, decomposed into building blocks using the MOFid algorithm. Filtered to structures with <200 blocks, yielding 308,829 structures (247,066 train / 30,883 val / 30,880 test). Structures contain up to approximately 2,400 atoms per unit cell.

- Baselines:

  - Optimization-based: Random Search (RS) and Evolutionary Algorithm (EA) using CrySPY and CHGNet.

  - Deep Learning: DiffCSP (deep generative model for general crystals).

  - Self-Assembly: A heuristic algorithm used in MOFDiff (adapted for comparison).

- Metrics:

  - Match Rate (MR): Percentage of generated structures matching ground truth within tolerance.

  - RMSE: Root mean squared displacement normalized by average free length per atom.

  - Structural Properties: Volumetric/Gravimetric Surface Area (VSA/GSA), Pore Limiting Diameter (PLD), Void Fraction, etc., calculated via Zeo++.

  - Scalability: Performance vs. number of atoms and building blocks.

Results and Generative Performance

MOFFlow outperformed all baselines in accuracy and efficiency, particularly for large structures.

- Accuracy: With a single sample, MOFFlow achieved a 31.69% match rate (stol=0.5) and 87.46% (stol=1.0) on the full test set (30,880 structures). With 5 samples, these rose to 44.75% (stol=0.5) and 100.0% (stol=1.0). RS and EA were evaluated on only 100 and 15 test structures respectively due to computational cost, with 20 candidates generated per structure, and achieved 0.00% MR at both tolerance levels. DiffCSP reached 0.09% (stol=0.5) and 23.12% (stol=1.0) with 1 sample.

- Speed: Inference took 1.94 seconds per structure, compared to 5.37s for DiffCSP, 332s for RS, and 1,959s for EA.

- Scalability: MOFFlow preserved high match rates across all system sizes, while DiffCSP’s match rate dropped sharply beyond 200 atoms.

- Property Preservation: The distributions of physical properties (e.g., surface area, void fraction) for MOFFlow-generated structures closely matched the ground truth. DiffCSP frequently reduced volumetric surface area and void fraction to zero.

- Self-Assembly Comparison: In a controlled comparison where the self-assembly (SA) algorithm received MOFFlow’s predicted translations and lattice, MOFFlow (MR=31.69%, RMSE=0.2820) outperformed SA (MR=30.04%, RMSE=0.3084), confirming the value of the learned rotational vector fields. In an extended scalability comparison, SA scaled better for structures with many building blocks, but MOFFlow achieved higher overall match rate (31.69% vs. 27.14%).

- Batch Implementation: A refactored Batch version achieves improved results: 32.73% MR (stol=0.5), RMSE of 0.2743, inference in 0.19s per structure (10x faster), and training in roughly 1/3 the GPU hours.
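The speed comparison can be checked with a few lines of arithmetic. The sketch below only restates the per-structure inference times quoted above; the dictionary keys and the helper name `slowdown_vs` are illustrative.

```python
# Average inference time per structure in seconds, as quoted in this summary.
SECONDS_PER_STRUCTURE = {
    "MOFFlow": 1.94,
    "MOFFlow (Batch)": 0.19,
    "DiffCSP": 5.37,
    "RS": 332.0,
    "EA": 1959.0,
}

def slowdown_vs(reference, times=SECONDS_PER_STRUCTURE):
    """How many times slower each method is than the reference method."""
    ref = times[reference]
    return {name: t / ref for name, t in times.items()}

relative_to_mofflow = slowdown_vs("MOFFlow")
batch_speedup = SECONDS_PER_STRUCTURE["MOFFlow"] / SECONDS_PER_STRUCTURE["MOFFlow (Batch)"]
```

The ratio of 1.94s to 0.19s is slightly above 10, consistent with the "10x faster" figure for the Batch implementation.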

Limitations

The paper identifies three key limitations:

- Hypothetical-only evaluation: All experiments use the Boyd et al. hypothetical database. Evaluation on more challenging real-world datasets remains needed.

- Rigid-body assumption: The model assumes that local building block structures are known, which may be impractical for rare building blocks whose structural information is missing from existing libraries or is inaccurate.

- Periodic invariance: The model is not invariant to periodic transformations of the input. Explicitly modeling periodic invariance could further improve performance.

Reproducibility Details

Data

- Source: MOF dataset by Boyd et al. (2019).

- Preprocessing: Structures were decomposed using the metal-oxo decomposition algorithm from MOFid.

- Filtering: Structures with fewer than 200 building blocks were used, yielding 308,829 structures.

- Splits: Train/Validation/Test ratio of 8:1:1 (247,066 / 30,883 / 30,880).

- Availability: Pre-processed dataset is available on Zenodo.

- Representations:

  - Atom-level: Tuple $(X, a, l)$ (coordinates, types, lattice).

  - Block-level: Tuple $(\mathcal{B}, q, \tau, l)$ (blocks, rotations, translations, lattice).
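As a rough illustration of the two representations and the reported split, the following sketch uses plain dataclasses. The class and field names are hypothetical, and storing the lattice as six parameters (three lengths, three angles) is an assumption based on the prior description in this summary, not a statement about the released data format.

```python
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Lattice = Tuple[float, float, float, float, float, float]  # assumed (a, b, c, alpha, beta, gamma)

@dataclass
class AtomLevelStructure:
    coords: List[Vec3]        # X: atom coordinates
    atom_types: List[int]     # a: atomic numbers
    lattice: Lattice          # l

@dataclass
class BuildingBlock:
    local_coords: List[Vec3]  # fixed geometry in the block's local frame
    atom_types: List[int]

@dataclass
class BlockLevelStructure:
    blocks: List[BuildingBlock]   # B
    rotations: List[List[Vec3]]   # q: one 3x3 rotation matrix per block
    translations: List[Vec3]      # tau: one translation per block
    lattice: Lattice              # l

    def num_atoms(self) -> int:
        return sum(len(b.atom_types) for b in self.blocks)

# Split sizes as reported above.
TOTAL_STRUCTURES = 308_829
SPLITS = {"train": 247_066, "val": 30_883, "test": 30_880}
split_fractions = {name: n / TOTAL_STRUCTURES for name, n in SPLITS.items()}
```

The three split sizes sum to the filtered total, and the fractions round to the stated 8:1:1 ratio.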

Algorithms

- Framework: Riemannian Flow Matching.

- Objective: Conditional Flow Matching (CFM) loss regressing to clean data $q_1, \tau_1, l_1$:

$$ \mathcal{L}(\theta) = \mathbb{E}_{t, \mathcal{S}^{(1)}} \left[ \frac{1}{(1-t)^2} \left( \lambda_1 \left\| \log_{q_t}(\hat{q}_1) - \log_{q_t}(q_1) \right\|^2 + \dots \right) \right] $$

- Priors:

  - Rotations ($q$): Uniform on $SO(3)$.

  - Translations ($\tau$): Standard normal on $\mathbb{R}^3$.

  - Lattice ($l$): Log-normal for lengths, Uniform(60°, 120°) for angles (Niggli reduced).

- Inference: ODE solver with 50 integration steps.

- Local Coordinates: Defined using PCA axes, corrected for symmetry to ensure consistency.
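The priors and the 50-step integration listed above can be sketched as follows, assuming NumPy. Several details are assumptions made for illustration: the log-normal parameters are placeholders (this summary does not give them), subtracting the mean of the translations reflects the mean-free constraint, and the Euclidean vector field $(\hat{x}_1 - x_t)/(1-t)$ is the standard choice when a model predicts clean data, which matches the $1/(1-t)^2$ weighting in the loss. Only the Euclidean components are integrated here; the $SO(3)$ update would use the corresponding geodesic step instead.

```python
import numpy as np

NUM_STEPS = 50  # integration steps reported above

def sample_uniform_rotation(rng):
    """Uniform rotation on SO(3) from a normalized 4-D Gaussian quaternion."""
    q = rng.normal(size=4)
    w, x, y, z = q / np.linalg.norm(q)
    return np.array([
        [1 - 2 * (y * y + z * z), 2 * (x * y - z * w), 2 * (x * z + y * w)],
        [2 * (x * y + z * w), 1 - 2 * (x * x + z * z), 2 * (y * z - x * w)],
        [2 * (x * z - y * w), 2 * (y * z + x * w), 1 - 2 * (x * x + y * y)],
    ])

def sample_prior(num_blocks, rng):
    rotations = np.stack([sample_uniform_rotation(rng) for _ in range(num_blocks)])
    translations = rng.normal(size=(num_blocks, 3))
    translations -= translations.mean(axis=0)             # mean-free system
    lengths = rng.lognormal(mean=0.0, sigma=1.0, size=3)  # placeholder parameters
    angles = rng.uniform(60.0, 120.0, size=3)             # degrees
    return rotations, translations, lengths, angles

def euler_integrate(x0, predict_clean, num_steps=NUM_STEPS):
    """Euler steps from t=0 to t=1 with velocity (x1_hat - x_t) / (1 - t)."""
    x = x0.copy()
    dt = 1.0 / num_steps
    for step in range(num_steps):
        t = step * dt
        velocity = (predict_clean(x, t) - x) / (1.0 - t)
        x = x + dt * velocity
    return x

rng = np.random.default_rng(0)
rotations, translations, lengths, angles = sample_prior(4, rng)
target = rng.normal(size=(4, 3))
# A stand-in "model" that always predicts the target exactly.
final = euler_integrate(translations, lambda x, t: target)
```

With a perfect predictor the last Euler step lands on the target, since at $t = 1 - \Delta t$ the step size and the $1/(1-t)$ factor cancel.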

Models

- Architecture: Hierarchical structure with two key modules.

  - Atom-level Update Layers: 4-layer EGNN-like structure to encode building block features $h_m$ from atomic graphs (cutoff 5 Å).

  - Block-level Update Layers: 6 layers that iteratively update $q, \tau, l$ using the MOFAttention module.

- MOFAttention: Modified Invariant Point Attention (IPA) that incorporates lattice parameters as offsets to the attention matrix.

- Hyperparameters:

  - Node dimension: 256 (block-level), 64 (atom-level).

  - Attention heads: 24.

  - Loss coefficients: $\lambda_1=1.0$ (rot), $\lambda_2=2.0$ (trans), $\lambda_3=0.1$ (lattice).

- Checkpoints: Pre-trained weights and models are openly provided on Zenodo.
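The idea of adding an offset to the attention matrix can be illustrated with generic single-head scaled dot-product attention. This is not MOFAttention or Invariant Point Attention: how the offset is computed from the lattice parameters is not described in this summary, so `bias` below is a hypothetical placeholder, and the function names are illustrative.

```python
import numpy as np

def softmax(x, axis=-1):
    shifted = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(shifted)
    return e / e.sum(axis=axis, keepdims=True)

def attention_with_bias(queries, keys, values, bias):
    """Scaled dot-product attention with an additive offset on the logits."""
    d = queries.shape[-1]
    scores = queries @ keys.T / np.sqrt(d) + bias  # offset enters before softmax
    weights = softmax(scores, axis=-1)
    return weights @ values, weights

rng = np.random.default_rng(0)
num_blocks, dim = 4, 8
h = rng.normal(size=(num_blocks, dim))   # toy block features

no_bias = np.zeros((num_blocks, num_blocks))
bias = no_bias.copy()
bias[0, 1] = -1e9                        # strongly discourage block 0 attending to block 1

out_plain, w_plain = attention_with_bias(h, h, h, no_bias)
out_biased, w_biased = attention_with_bias(h, h, h, bias)
```

Because the offset is added to the logits, it reshapes the attention weights without changing the value vectors, which is one plausible reason to inject global information such as the lattice this way.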

Evaluation

- Metrics:

  - Match Rate: Using `StructureMatcher` from `pymatgen`. Tolerances: `stol=0.5/1.0`, `ltol=0.3`, `angle_tol=10.0`.

  - RMSE: Normalized by average free length per atom.

- Tools: Zeo++ for structural property calculations (Surface Area, Pore Diameter, etc.).

| Metric | MOFFlow | DiffCSP | RS (20 cands) | EA (20 cands) |
|---|---|---|---|---|
| MR (stol=0.5, k=1) | 31.69% | 0.09% | 0.00% | 0.00% |
| MR (stol=1.0, k=1) | 87.46% | 23.12% | 0.00% | 0.00% |
| MR (stol=0.5, k=5) | 44.75% | 0.34% | - | - |
| MR (stol=1.0, k=5) | 100.0% | 38.94% | - | - |
| RMSE (stol=0.5, k=1) | 0.2820 | 0.3961 | - | - |
| Avg. time per structure | 1.94s | 5.37s | 332s | 1,959s |
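A minimal sketch of how match rate and RMSE could be aggregated over $k$ samples per structure is given below. It assumes the per-sample values come from something like pymatgen's `StructureMatcher(ltol=0.3, stol=0.5, angle_tol=10.0).get_rms_dist(...)`, which returns `None` when no match is found and otherwise a tuple whose first entry is the normalized RMS displacement. Counting a structure as matched if any sample matches, and keeping the smallest RMS among the matching samples, is an assumption about the protocol rather than something stated in this summary. The function name is hypothetical.

```python
from typing import List, Optional, Sequence, Tuple

def match_rate_and_rmse(
    rms_per_structure: Sequence[Sequence[Optional[float]]],
) -> Tuple[float, float]:
    """Aggregate per-sample RMS values (None = no match) into MR (%) and mean RMSE."""
    best: List[float] = []
    for samples in rms_per_structure:
        matched = [r for r in samples if r is not None]
        if matched:
            best.append(min(matched))  # best sample among the k candidates
    total = len(rms_per_structure)
    match_rate = 100.0 * len(best) / total if total else 0.0
    rmse = sum(best) / len(best) if best else float("nan")
    return match_rate, rmse

# Toy input: 4 test structures, k=2 samples each.
toy = [[0.2, None], [None, None], [0.4, 0.3], [None, 0.1]]
mr, rmse = match_rate_and_rmse(toy)
```

In the toy input three of four structures have at least one matching sample, and the RMSE is averaged over matched structures only, which is why RMSE and match rate have to be read together.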

Hardware

- Training Hardware: 8 $\times$ NVIDIA RTX 3090 (24GB VRAM).

- Training Time:

  - TimestepBatch version (main paper): ~5 days 15 hours.

  - Batch version: ~1 day 17 hours (332.74 GPU hours). The authors also release this refactored implementation, which achieves comparable performance with faster convergence.

- Batch Size: 160 (capped by $N^2$ where $N$ is the number of atoms, for memory management).
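The training-cost figures can be cross-checked with simple arithmetic. The sketch assumes all 8 GPUs were in use for the full wall-clock time, which this summary does not state explicitly, and treats the approximate durations as exact.

```python
NUM_GPUS = 8

def wall_clock_hours(days: int, hours: int) -> int:
    return 24 * days + hours

# Estimated GPU hours from the approximate wall-clock times listed above.
timestep_batch_gpu_hours = NUM_GPUS * wall_clock_hours(5, 15)
batch_gpu_hours_estimate = NUM_GPUS * wall_clock_hours(1, 17)

# Reported figure for the Batch version.
batch_gpu_hours_reported = 332.74
cost_ratio = batch_gpu_hours_reported / timestep_batch_gpu_hours
```

Under that assumption the Batch estimate lands within a few GPU hours of the reported 332.74, and the ratio to the TimestepBatch version is a little under one third.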

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| MOFFlow (GitHub) | Code | MIT | Official implementation built on DiffDock, EGNN, MOFDiff, and protein-frame-flow |
| Pre-processed dataset and checkpoints (Zenodo) | Dataset / Model | Unknown | Includes pre-processed MOF structures and trained model weights |

Paper Information

Citation: Kim, N., Kim, S., Kim, M., Park, J., & Ahn, S. (2025). MOFFlow: Flow Matching for Structure Prediction of Metal-Organic Frameworks. International Conference on Learning Representations (ICLR).

Publication: ICLR 2025

@inproceedings{kimMOFFlowFlowMatching2025,
  title={MOFFlow: Flow Matching for Structure Prediction of Metal-Organic Frameworks},
  author={Kim, Nayoung and Kim, Seongsu and Kim, Minsu and Park, Jinkyoo and Ahn, Sungsoo},
  booktitle={The Thirteenth International Conference on Learning Representations},
  year={2025},
  url={https://openreview.net/forum?id=dNT3abOsLo}
}

Additional Resources: