Core Innovation: Adaptive Sparsity in SE(3) Networks

This methodological paper introduces a new architecture and training curriculum for efficient geometric deep learning. It targets the dominant computational cost in modern SE(3)-equivariant graph neural networks, the tensor product operation, and proposes a generalizable remedy: adaptive network sparsification.

The Computational Bottleneck in DFT Hamiltonian Prediction

SE(3)-equivariant networks are accurate but unscalable for DFT Hamiltonian prediction due to two key bottlenecks:

- Atom Scaling: The number of Tensor Product (TP) operations grows quadratically with the number of atoms ($N^2$).

- Basis Set Scaling: Computational complexity grows with the sixth power of the angular momentum order ($L^6$). Larger basis sets (e.g., def2-TZVP) require higher orders ($L=6$), making them prohibitively slow.

Existing SE(3)-equivariant models cannot handle large molecules (40-100 atoms) with high-quality basis sets, limiting their practical applicability in computational chemistry.
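
To make the two scaling laws concrete, the sketch below compares the tensor product cost of one system against a reference, using only the $N^2$ and $L^6$ factors stated above. The function name `relative_tp_cost` is hypothetical, and constant factors and lower-order terms are ignored, so the result is an order-of-magnitude guide rather than a runtime prediction.

```python
def relative_tp_cost(n_atoms, l_max, ref_n_atoms, ref_l_max):
    """Cost ratio implied by N^2 atom scaling and L^6 angular-order scaling.

    Hypothetical helper: constants and lower-order terms are ignored.
    """
    atom_factor = (n_atoms / ref_n_atoms) ** 2
    order_factor = (l_max / ref_l_max) ** 6
    return atom_factor * order_factor


# Same molecule size, moving from L = 4 to L = 6.
print(relative_tp_cost(20, 6, 20, 4))

# Moving from 20 atoms at L = 4 to 100 atoms at L = 6.
print(relative_tp_cost(100, 6, 20, 4))
```

Under these assumptions, raising the order from 4 to 6 alone multiplies the cost by $1.5^6 \approx 11.4$, before any growth in molecule size is counted.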

SPHNet Architecture and the Three-Phase Sparsity Scheduler

SPHNet introduces Adaptive Sparsity to prune redundant computations at two levels (atom pairs and tensor product triplets), trained with a dedicated sparsity scheduler:

- Sparse Pair Gate: Learns which atom pairs to include in message passing, adapting the interaction graph based on importance.

- Sparse TP Gate: Filters which spherical harmonic triplets $(l_1, l_2, l_3)$ are computed in tensor product operations, pruning higher-order combinations that contribute less to accuracy.

- Three-Phase Sparsity Scheduler: A training curriculum (Random → Adaptive → Fixed) that enables stable convergence to high-performing sparse subnetworks.

Key insight: The Sparse Pair Gate learns to preserve long-range interactions (16-25 Angstrom) at higher rates than short-range ones. Short-range pairs are abundant and easier to learn, while rare long-range interactions require more samples for accurate representation, making them more critical to retain.

Benchmarks and Ablation Studies

The authors evaluated SPHNet on three datasets (MD17, QH9, and PubChemQH) with varying molecule sizes and basis set complexities. Baselines include SchNOrb, PhiSNet, QHNet, and WANet. SchNOrb and PhiSNet results are limited to MD17, as those models are designed for trajectory datasets. WANet was not open-sourced, so only partial metrics from its paper are reported.

Evaluation Metrics

- Hamiltonian MAE ($H$): Mean absolute error between predicted and DFT-computed Hamiltonian matrices, in Hartrees ($E_h$)

- Occupied Orbital Energy MAE ($\epsilon$): Mean absolute error of all occupied molecular orbital energies derived from the predicted Hamiltonian

- Orbital Coefficient Similarity ($\psi$): Cosine similarity of occupied molecular orbital coefficients between predicted and reference wavefunctions
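
The three metrics can be sketched in plain Python as follows. This is an illustrative version, not the authors' evaluation code: it assumes orbital energies and coefficients have already been obtained by diagonalizing the Hamiltonian (that step is omitted), and it scores a single orbital's coefficient vector. How similarities are aggregated across orbitals is not specified here.

```python
import math


def hamiltonian_mae(h_pred, h_ref):
    """Mean absolute error over all matrix entries (units follow the inputs)."""
    diffs = [
        abs(p - r)
        for row_p, row_r in zip(h_pred, h_ref)
        for p, r in zip(row_p, row_r)
    ]
    return sum(diffs) / len(diffs)


def orbital_energy_mae(eps_pred, eps_ref):
    """Mean absolute error over precomputed occupied orbital energies."""
    return sum(abs(p - r) for p, r in zip(eps_pred, eps_ref)) / len(eps_ref)


def cosine_similarity(c_pred, c_ref):
    """Cosine similarity between two orbital coefficient vectors."""
    dot = sum(p * r for p, r in zip(c_pred, c_ref))
    norm_pred = math.sqrt(sum(p * p for p in c_pred))
    norm_ref = math.sqrt(sum(r * r for r in c_ref))
    return dot / (norm_pred * norm_ref)


h_ref = [[1.0, 2.5], [2.5, 3.0]]
h_pred = [[1.0, 2.0], [2.0, 3.0]]
print(hamiltonian_mae(h_pred, h_ref))
```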

Ablation Studies

Sparse Gates (on PubChemQH):

| Configuration | $H$ [$10^{-6} E_h$] $\downarrow$ | Memory [GB] $\downarrow$ | Speedup $\uparrow$ |
|---|---|---|---|
| Both gates | 97.31 | 5.62 | 7.09x |
| Pair Gate only | 87.70 | 6.98 | 2.73x |
| TP Gate only | 94.31 | 8.04 | 3.98x |
| Neither gate | 86.35 | 10.91 | 1.73x |

Relative to the TP-Gate-only configuration, adding the Sparse Pair Gate contributes a 78% speedup with a 30% memory reduction. Relative to the Pair-Gate-only configuration, adding the Sparse TP Gate (pruning 70% of combinations) yields a 160% speedup. Both gates together achieve the highest speedup, though Hamiltonian MAE is higher than with no gating (97.31 vs 86.35).
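
The quoted percentages can be reproduced from the table rows. The pairing of rows used below (each gate's contribution measured by adding it to the configuration that has only the other gate) is an inference from the numbers, not something the table states explicitly.

```python
# Rows copied from the ablation table: (Hamiltonian MAE, memory in GB, speedup).
ablation = {
    "both": (97.31, 5.62, 7.09),
    "pair_only": (87.70, 6.98, 2.73),
    "tp_only": (94.31, 8.04, 3.98),
    "neither": (86.35, 10.91, 1.73),
}


def relative_gain(new, old):
    """Fractional increase of `new` over `old`."""
    return new / old - 1.0


# Adding the Pair Gate on top of the TP Gate.
pair_gate_speedup = relative_gain(ablation["both"][2], ablation["tp_only"][2])
pair_gate_memory_cut = 1.0 - ablation["both"][1] / ablation["tp_only"][1]

# Adding the TP Gate on top of the Pair Gate.
tp_gate_speedup = relative_gain(ablation["both"][2], ablation["pair_only"][2])

print(f"Pair Gate: +{pair_gate_speedup:.0%} speed, -{pair_gate_memory_cut:.0%} memory")
print(f"TP Gate:   +{tp_gate_speedup:.0%} speed")
```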

Three-Phase Scheduler: Removing the random phase leads to convergence to poorer local optima (Hamiltonian MAE of $112.68 \pm 10.75$ vs $97.31 \pm 0.52$ with the full scheduler, in $10^{-6} E_h$). Removing the adaptive phase increases variance and lowers accuracy ($122.79 \pm 19.02$). Removing the fixed phase has minimal accuracy impact but reduces speedup from 7.09x to 5.45x due to dynamic graph overhead.

Sparsity Rate: The critical sparsity threshold scales with system complexity: 30% for MD17 (small molecules), 40% for QH9 (medium), and 70% for PubChemQH (large). Beyond the threshold, MAE increases sharply. Computational cost decreases approximately linearly with sparsity rate.

Transferability to Other Models

To demonstrate the speedup is architecture-agnostic, the authors applied the Sparse Pair Gate and Sparse TP Gate to the QHNet baseline on PubChemQH:

| Configuration | $H$ [$10^{-6} E_h$] $\downarrow$ | Memory [GB] $\downarrow$ | Speedup $\uparrow$ |
|---|---|---|---|
| QHNet baseline | 123.74 | 22.50 | 1.00x |
| + TP Gate | 128.16 | 12.68 | 2.04x |
| + Pair Gate | 126.27 | 10.07 | 1.66x |
| + Both gates | 128.89 | 8.46 | 3.30x |

The gates reduced QHNet’s memory by 62% and improved speed by 3.3x with modest accuracy trade-off, confirming the gates are portable modules applicable to other SE(3)-equivariant architectures.
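
As a check on these figures, the memory reduction and the accuracy cost follow directly from the baseline and "+ Both gates" rows of the table:

$$ \begin{aligned} \text{memory reduction} &= 1 - \frac{8.46}{22.50} \approx 0.624 \\ \text{relative MAE increase} &= \frac{128.89 - 123.74}{123.74} \approx 0.042 \end{aligned} $$

So the roughly 62% memory saving and 3.3x speedup come at a cost of about 4% in Hamiltonian MAE.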

Performance Results

QH9 (134k molecules, $\leq$ 20 atoms)

SPHNet achieves 3.3x to 4.0x speedup over QHNet across all four QH9 splits, with improved Hamiltonian MAE and orbital energy MAE. Memory drops to 0.23 GB/sample (33% of QHNet’s 0.70 GB). On the stable-iid split, Hamiltonian MAE improves from 76.31 to 45.48 ($10^{-6} E_h$).

PubChemQH (50k molecules, 40-100 atoms)

| Model | $H$ [$10^{-6} E_h$] $\downarrow$ | $\epsilon$ [$E_h$] $\downarrow$ | $\psi$ [$10^{-2}$] $\uparrow$ | Memory [GB] $\downarrow$ | Speedup $\uparrow$ |
|---|---|---|---|---|---|
| QHNet | 123.74 | 3.33 | 2.32 | 22.5 | 1.0x |
| WANet | 99.98 | 1.17 | 3.13 | 15.0 | 2.4x |
| SPHNet | 97.31 | 2.16 | 2.97 | 5.62 | 7.1x |

SPHNet achieves the best Hamiltonian MAE and efficiency, though WANet outperforms on orbital energy MAE and coefficient similarity. The higher speedup on PubChemQH (vs QH9) reflects greater computational redundancy in larger systems with higher-order basis sets ($L_{max} = 6$ for def2-TZVP vs $L_{max} = 4$ for def2-SVP).

MD17 (Small Molecule Trajectories)

SPHNet achieves accuracy comparable to QHNet and PhiSNet on four MD17 molecules (water, ethanol, malondialdehyde, uracil; 3-12 atoms). MD17 represents a simpler task where baseline models already perform well, leaving limited room for improvement. For water (3 atoms), the number of interaction combinations is inherently small, limiting the benefit of adaptive sparsification.

Scaling Limit

SPHNet can train on systems with approximately 3000 atomic orbitals on a single A6000 GPU; the QHNet baseline runs out of memory at approximately 1800 orbitals. Memory consumption scales more favorably as molecule size increases.

Key Findings

- Adaptive sparsity scales with system complexity: The method is most effective for large systems where redundancy is high. For small molecules (e.g., water with only 3 atoms), every interaction is critical, so pruning hurts accuracy and yields negligible speedup.

- Long-range pair preservation: The Sparse Pair Gate selects long-range pairs (16-25 Angstrom) at higher rates than short-range ones. Short-range pairs are numerous and easier to learn, while rare long-range interactions are harder to represent and thus more critical to retain.

- Generalizable components: The sparsification techniques are portable modules, demonstrated by successful integration into QHNet with 3.3x speedup.

- Architecture ablation: Removing one Vectorial Node Interaction block or Spherical Node Interaction block significantly hurts accuracy, confirming the importance of the progressive order-increase design. Removing one Pair Construction block has less impact, suggesting room for further speedup.

Reproducibility Details

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| SPHNet (GitHub) | Code | MIT | Official implementation; archived by Microsoft (Dec 2025), read-only |
| PubChemQH (Hugging Face) | Dataset | MIT | 50k molecules, 40-100 atoms, def2-TZVP basis |

No pre-trained model weights are provided. MD17 and QH9 are publicly available community datasets. Training requires 4x NVIDIA A100 (80GB) GPUs; benchmarking uses a single NVIDIA RTX A6000 (46GB).

Data

The experiments evaluated SPHNet on three datasets with different molecular sizes and basis set complexities. All datasets use DFT calculations as ground truth, with MD17 using the PBE exchange-correlation functional and QH9/PubChemQH using B3LYP.

| Dataset | Molecules | Molecule Size | Basis Set | $L_{max}$ | Functional |
|---|---|---|---|---|---|
| MD17 | 4 systems | 3-12 atoms (water, ethanol, malondialdehyde, uracil) | def2-SVP | 4 | PBE |
| QH9 | 134k | $\leq$ 20 atoms (Stable/Dynamic splits) | def2-SVP | 4 | B3LYP |
| PubChemQH | 50k | 40-100 atoms | def2-TZVP | 6 | B3LYP |

Data Availability:

- MD17 & QH9: Publicly available

- PubChemQH: Publicly available on Hugging Face (EperLuo/PubChemQH)

Algorithms

Loss Function:

The model learns the residual $\Delta H$:

$$ \begin{aligned} \Delta H &= H_{\text{ref}} - H_{\text{init}} \\ \mathcal{L} &= \text{MAE}(H_{\text{ref}}, H_{\text{pred}}) + \text{MSE}(H_{\text{ref}}, H_{\text{pred}}) \end{aligned} $$

where $H_{\text{init}}$ is an inexpensive initial guess obtained with PySCF.
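
A minimal sketch of this loss on nested-list matrices is given below. It rests on one assumption not spelled out above: that the network outputs a residual which is added to $H_{\text{init}}$ to form $H_{\text{pred}}$. The names `hamiltonian_loss` and `delta_pred` are hypothetical.

```python
def _flatten(matrix):
    """Flatten a nested-list matrix into a single list of entries."""
    return [value for row in matrix for value in row]


def hamiltonian_loss(h_ref, h_init, delta_pred):
    """MAE + MSE between the reference and predicted Hamiltonians.

    Assumption: H_pred = H_init + delta_pred, where delta_pred is the
    network's estimate of the residual Delta H = H_ref - H_init.
    """
    ref = _flatten(h_ref)
    pred = [i + d for i, d in zip(_flatten(h_init), _flatten(delta_pred))]
    errors = [r - p for r, p in zip(ref, pred)]
    mae = sum(abs(e) for e in errors) / len(errors)
    mse = sum(e * e for e in errors) / len(errors)
    return mae + mse


h_ref = [[1.0, 0.0], [0.0, 1.0]]
h_init = [[0.5, 0.0], [0.0, 0.5]]
zero_residual = [[0.0, 0.0], [0.0, 0.0]]
print(hamiltonian_loss(h_ref, h_init, zero_residual))
```

With a zero residual the loss equals the error of the initial guess itself, which is the baseline the network has to improve on.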

Hyperparameters:

| Parameter | PubChemQH | QH9 | MD17 |
|---|---|---|---|
| Batch Size | 8 | 32 | 10 (uracil: 5) |
| Training Steps | 300k | 260k | 200k |
| Warmup Steps | 1k | 1k | 1k |
| Learning Rate | 1e-3 | 1e-3 | 5e-4 |
| Sparsity Rate | 0.7 | 0.4 | 0.1-0.3 |
| TSS Epoch $t$ | 3 | 3 | 3 |

Sparse Pair Gate: Adapts the interaction graph. It concatenates zero-order features and inner products of atom pairs, then passes them through a linear layer $F_p$ with sigmoid activation to learn a weight $W_p^{ij}$ for every pair. Pairs are kept only if selected by the scheduler ($U_p^{TSS}$). The overhead comes primarily from the linear layer $F_p$.

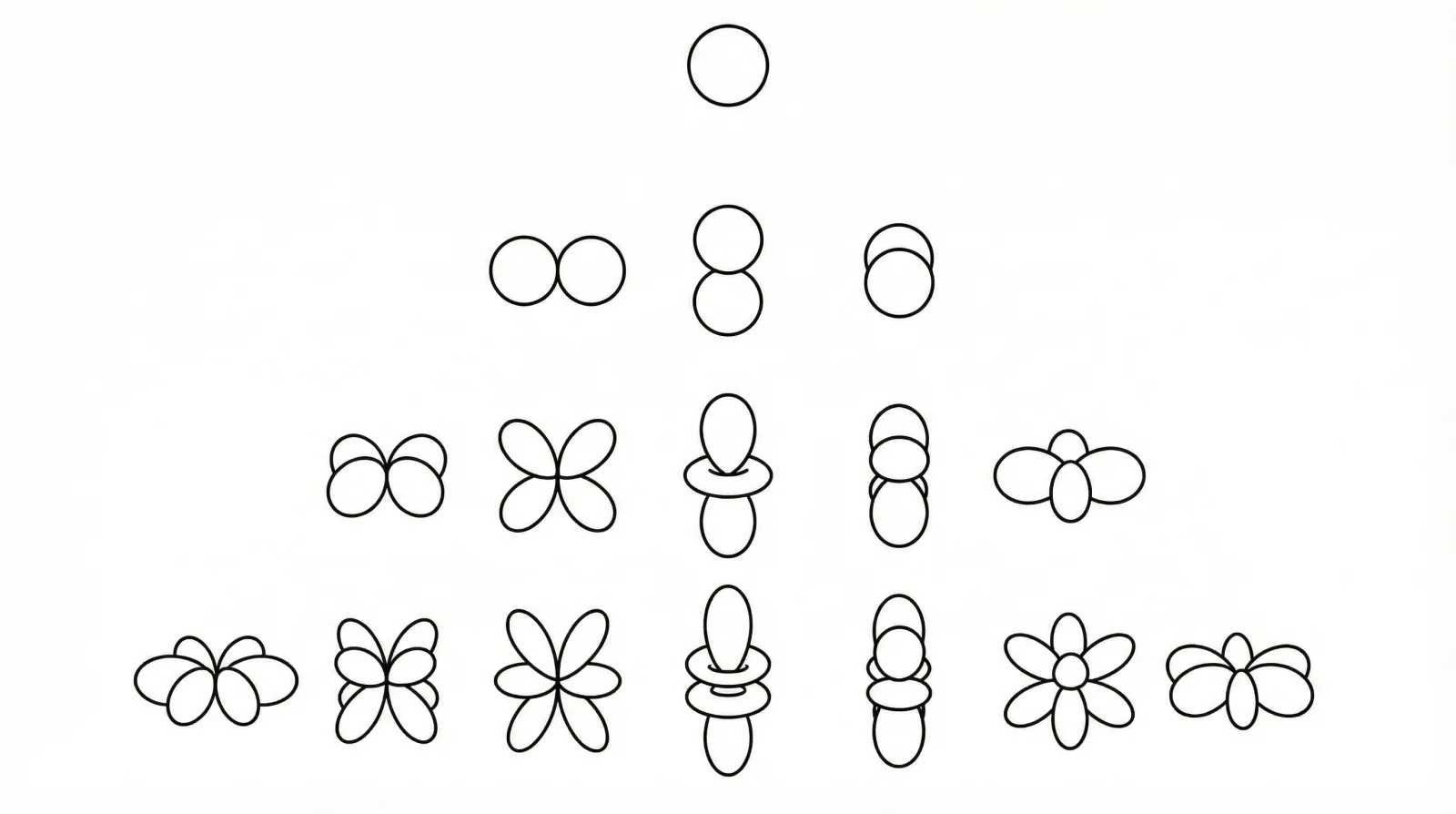
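
The Sparse Pair Gate computation can be sketched in plain Python. This is a minimal illustration, not the authors' implementation: the feature layout (scalar features of both atoms followed by a single inner product) and the names `pair_gate_weight`, `weights`, and `bias` are assumptions made for the example.

```python
import math


def pair_gate_weight(scalar_i, scalar_j, vec_i, vec_j, weights, bias):
    """Return a gate weight in (0, 1) for the atom pair (i, j).

    Assumed layout: zero-order (scalar) features of both atoms are
    concatenated with the inner product of their vector features.
    """
    inner = sum(a * b for a, b in zip(vec_i, vec_j))
    features = list(scalar_i) + list(scalar_j) + [inner]
    if len(features) != len(weights):
        raise ValueError("weights must match the concatenated feature length")
    # Linear layer F_p followed by a sigmoid.
    z = sum(w * f for w, f in zip(weights, features)) + bias
    return 1.0 / (1.0 + math.exp(-z))


w_ij = pair_gate_weight(
    scalar_i=[1.0],
    scalar_j=[2.0],
    vec_i=[1.0, 0.0, 0.0],
    vec_j=[1.0, 0.0, 0.0],
    weights=[0.1, 0.1, 1.0],
    bias=0.0,
)
print(w_ij)
```

The resulting weights are what the scheduler ranks when deciding which pairs to keep. Because the gate is a single linear layer per pair, its cost stays small next to the tensor products it removes.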

Sparse TP Gate: Filters triplets $(l_1, l_2, l_3)$ inside the TP operation. Higher-order combinations are more likely to be pruned. Gate overhead: $\mathcal{O}(L^3)$, matching the number of candidate triplets.
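
To show what the gate filters, the sketch below enumerates candidate triplets using the standard angular momentum selection rule $|l_1 - l_2| \leq l_3 \leq l_1 + l_2$ and then applies a keep mask. Capping $l_3$ at $L_{max}$ and ignoring parity are simplifying assumptions, and `allowed_triplets` and `apply_tp_gate` are hypothetical names.

```python
def allowed_triplets(l_max):
    """List (l1, l2, l3) with all orders <= l_max that satisfy the
    triangle rule |l1 - l2| <= l3 <= l1 + l2."""
    return [
        (l1, l2, l3)
        for l1 in range(l_max + 1)
        for l2 in range(l_max + 1)
        for l3 in range(abs(l1 - l2), min(l1 + l2, l_max) + 1)
    ]


def apply_tp_gate(triplets, keep_mask):
    """Keep only the triplets whose mask entry is True."""
    return [t for t, keep in zip(triplets, keep_mask) if keep]


triplets = allowed_triplets(2)
# Hypothetical mask that drops every triplet involving an order-2 input or output.
mask = [max(t) < 2 for t in triplets]
print(len(triplets), len(apply_tp_gate(triplets, mask)))
```

In the actual model the mask comes from learned weights rather than a hand-written rule; the example only illustrates that each pruned triplet removes one tensor product path.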

Three-Phase Sparsity Scheduler: Training curriculum designed to optimize the sparse gates effectively:

- Phase 1 (Random): Keeps each element at random with probability $1-k$, where $k$ is the sparsity rate, so that all gate weights receive unbiased updates. Complexity: $\mathcal{O}(|U|)$.

- Phase 2 (Adaptive): Keeps the top $(1-k)$ fraction of elements ranked by learned weight magnitude. Complexity: $\mathcal{O}(|U|\log|U|)$.

- Phase 3 (Fixed): Freezes the connectivity mask for maximum inference speed. No overhead.
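
The three phases can be sketched as a single selection function. This is a simplified illustration under stated assumptions: the phase is passed in explicitly (how training decides when to switch is not modeled), the random phase uses an independent draw per element, and `select_kept` and `frozen` are hypothetical names.

```python
import random


def select_kept(weights, sparsity_rate, phase, rng=None, frozen=None):
    """Return the sorted indices of elements kept under the given phase."""
    keep_fraction = 1.0 - sparsity_rate
    n = len(weights)
    if phase == "random":
        # One pass over the set: O(|U|).
        rng = rng or random.Random()
        return [i for i in range(n) if rng.random() < keep_fraction]
    if phase == "adaptive":
        # Sorting by magnitude dominates: O(|U| log |U|).
        n_keep = max(1, round(keep_fraction * n))
        ranked = sorted(range(n), key=lambda i: abs(weights[i]), reverse=True)
        return sorted(ranked[:n_keep])
    if phase == "fixed":
        # Reuse a previously computed mask: no selection overhead.
        if frozen is None:
            raise ValueError("fixed phase needs a frozen index list")
        return list(frozen)
    raise ValueError(f"unknown phase: {phase}")


weights = [0.1, 0.9, 0.5, 0.3]
adaptive = select_kept(weights, sparsity_rate=0.5, phase="adaptive")
fixed = select_kept(weights, sparsity_rate=0.5, phase="fixed", frozen=adaptive)
print(adaptive, fixed)
```

Freezing the adaptive result, as in the last two lines, is what lets the fixed phase skip the per-step sort.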

Weight Initialization: Learnable sparsity weights ($W$) are initialized as an all-ones vector.

Models

The model predicts the Hamiltonian matrix $H$ from atomic numbers $Z$ and coordinates $r$.

Inputs: Atomic numbers ($Z$) and 3D coordinates.

Backbone Structure:

- Vectorial Node Interaction (x4): Uses long-short range message passing. Extracts vectorial representations ($l=1$) without high-order TPs to save cost.

- Spherical Node Interaction (x2): Projects features to high-order spherical harmonics (up to $L_{max}$). The first block increases the maximum order from 0 to $L_{max}$ without the Sparse Pair Gate; the second block applies the Sparse Pair Gate to filter node pairs.

- Pair Construction Block (x2): Splits into Diagonal (self-interaction) and Non-Diagonal (cross-interaction) blocks. Both use the Sparse TP Gate to prune cross-order combinations $(l_1, l_2, l_3)$. The Non-Diagonal blocks also use the Sparse Pair Gate to filter atom pairs. The two Pair Construction blocks receive representations from the two Spherical Node Interaction blocks respectively, and their outputs are summed.

- Expansion Block: Reconstructs the full Hamiltonian matrix from the sparse irreducible representations, exploiting symmetry ($H_{ji} = H_{ij}^T$) to halve computations.
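
The symmetry shortcut in the Expansion Block can be illustrated with a small assembly routine. It covers only the mirroring step $H_{ji} = H_{ij}^T$ and assumes the per-pair orbital blocks already exist; converting irreducible representations into those blocks is not shown. The names `assemble_symmetric`, `blocks`, and `sizes` are hypothetical.

```python
def assemble_symmetric(blocks, sizes):
    """Build the full matrix from blocks given only for atom pairs i <= j.

    blocks[(i, j)] is a nested list of shape sizes[i] x sizes[j];
    the (j, i) block is filled in as its transpose.
    """
    offsets = [0]
    for size in sizes:
        offsets.append(offsets[-1] + size)
    n = offsets[-1]
    full = [[0.0] * n for _ in range(n)]
    for (i, j), block in blocks.items():
        for a, row in enumerate(block):
            for b, value in enumerate(row):
                full[offsets[i] + a][offsets[j] + b] = value
                if i != j:
                    # Mirror the off-diagonal block: H_ji = H_ij^T.
                    full[offsets[j] + b][offsets[i] + a] = value
    return full


blocks = {
    (0, 0): [[1.0]],
    (0, 1): [[2.0, 3.0]],
    (1, 1): [[4.0, 5.0], [5.0, 6.0]],
}
H = assemble_symmetric(blocks, sizes=[1, 2])
for row in H:
    print(row)
```

Only the blocks with $i \leq j$ need to be predicted, which is where the halving of off-diagonal work comes from.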

Hardware

- Training: 4x NVIDIA A100 (80GB)

- Benchmarking: Single NVIDIA RTX A6000 (46GB)

Paper Information

Citation: Luo, E., Wei, X., Huang, L., Li, Y., Yang, H., Xia, Z., Wang, Z., Liu, C., Shao, B., & Zhang, J. (2025). Efficient and Scalable Density Functional Theory Hamiltonian Prediction through Adaptive Sparsity. Proceedings of the 42nd International Conference on Machine Learning (ICML), Vancouver, Canada.

Publication: ICML 2025

@inproceedings{luo2025efficient,
  title={Efficient and Scalable Density Functional Theory Hamiltonian Prediction through Adaptive Sparsity},
  author={Luo, Erpai and Wei, Xinran and Huang, Lin and Li, Yunyang and Yang, Han and Xia, Zaishuo and Wang, Zun and Liu, Chang and Shao, Bin and Zhang, Jia},
  booktitle={Proceedings of the 42nd International Conference on Machine Learning},
  year={2025}
}

Additional Resources:

- ICML 2025 poster page

- OpenReview forum

- PDF on OpenReview

- GitHub Repository (Note: The official repository was archived by Microsoft in December 2025. It is available for reference but no longer actively maintained.)