Contribution: Systematic Assessment of Non-Conservative ML Force Models

This is a systematization paper. It catalogs the failure modes of existing non-conservative force models, quantifies them with a new diagnostic metric, and proposes a hybrid Multiple Time-Stepping (MTS) scheme that combines the speed of direct force prediction with the physical correctness of conservative models.

Motivation: The Speed-Accuracy Trade-off in ML Force Fields

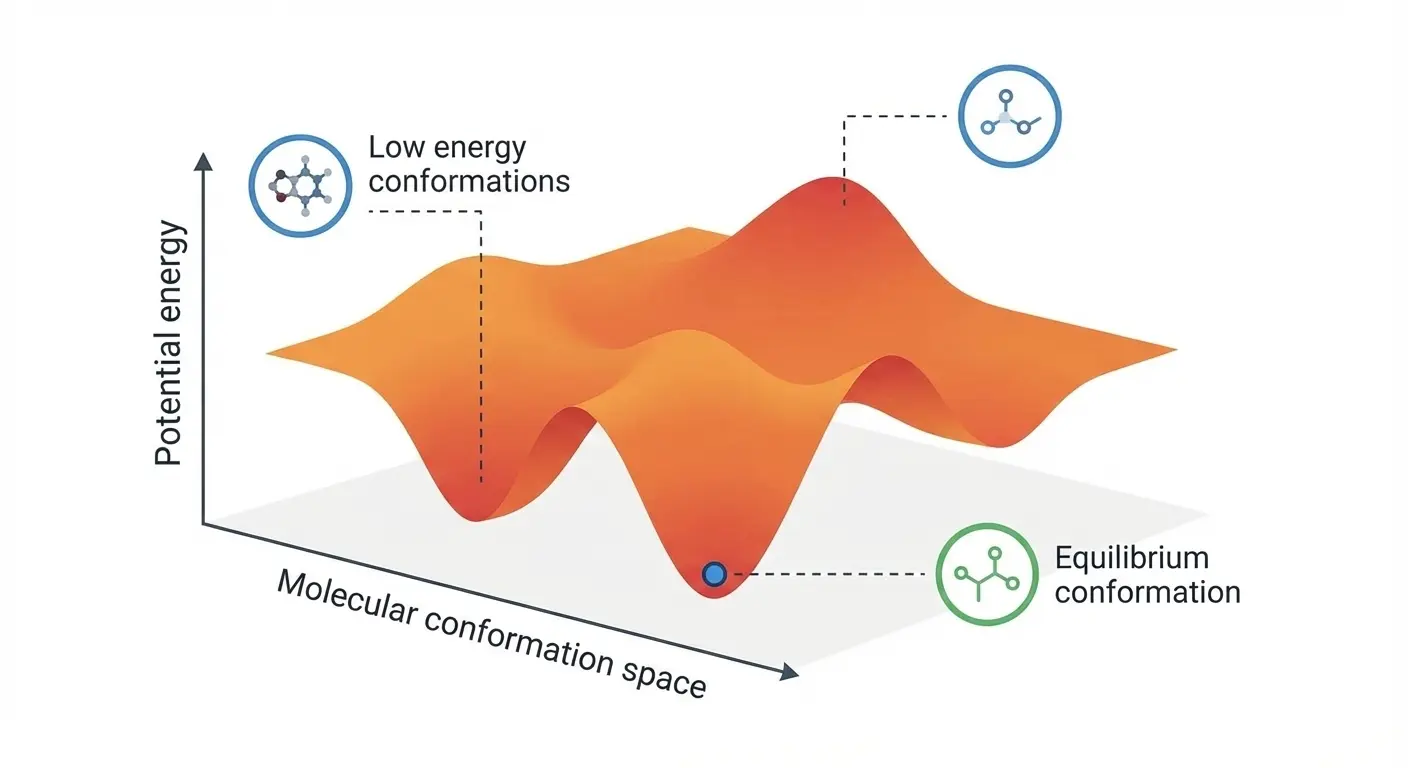

Many recent machine learning interatomic potential (MLIP) architectures predict forces directly ($F_\theta(r)$). This “non-conservative” approach avoids the computational overhead of automatic differentiation, yielding faster inference (typically 2-3x speedup) and faster training (up to 3x). However, it sacrifices energy conservation and rotational constraints, potentially destabilizing molecular dynamics simulations. The field lacks rigorous quantification of when this trade-off breaks down and how to mitigate the failures.

Novelty: Jacobian Asymmetry and Hybrid Architectures

Four key contributions:

Jacobian Asymmetry Metric ($\lambda$): A quantitative diagnostic for non-conservation. Since conservative forces derive from a scalar potential, $\mathbf{F} = -\nabla E$, their Jacobian $\mathbf{J} = \partial \mathbf{F} / \partial \mathbf{r}$ is the negative Hessian of the energy and must therefore be symmetric. The normalized Frobenius norm of the antisymmetric part quantifies the degree of violation:

$$ \lambda = \frac{\lVert \mathbf{J}_{\text{anti}} \rVert_F}{\lVert \mathbf{J} \rVert_F} $$

where $\mathbf{J}_{\text{anti}} = (\mathbf{J} - \mathbf{J}^\top)/2$, so $\lambda = 0$ for a conservative model and $\lambda = 1$ for a purely antisymmetric Jacobian. Measured values range from $\lambda \approx 0.004$ (PET-NC) to $\lambda \approx 0.032$ (SOAP-BPNN-NC), with ORB at 0.015 and EquiformerV2 at 0.017. Notably, the pairwise $\lambda_{ij}$ approaches 1 at large interatomic distances, meaning non-conservative artifacts disproportionately affect long-range and collective interactions.
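As a minimal sketch of how $\lambda$ could be evaluated numerically (this is not the authors' implementation), the Jacobian can be approximated by central finite differences and split into its antisymmetric part. The two 2D force fields below, `conservative_force` and `curl_force`, are hypothetical toy examples chosen so that the expected values are known analytically.

```python
import math

def jacobian(force, r, h=1e-5):
    """Central finite-difference Jacobian J[i][j] = dF_i / dr_j."""
    n = len(r)
    J = [[0.0] * n for _ in range(n)]
    for j in range(n):
        r_plus, r_minus = list(r), list(r)
        r_plus[j] += h
        r_minus[j] -= h
        f_plus, f_minus = force(r_plus), force(r_minus)
        for i in range(n):
            J[i][j] = (f_plus[i] - f_minus[i]) / (2 * h)
    return J

def jacobian_asymmetry(J):
    """lambda = ||(J - J^T)/2||_F / ||J||_F."""
    n = len(J)
    anti_sq = sum(((J[i][j] - J[j][i]) / 2) ** 2 for i in range(n) for j in range(n))
    full_sq = sum(J[i][j] ** 2 for i in range(n) for j in range(n))
    return math.sqrt(anti_sq / full_sq)

def conservative_force(r):
    # Toy assumption: F = -grad E with E = 0.5*x^2 + y^2 + x*y.
    x, y = r
    return [-(x + y), -(2 * y + x)]

def curl_force(r):
    # Toy assumption: a pure rotation field, which has no potential.
    x, y = r
    return [-y, x]

point = [0.3, -0.7]
lam_conservative = jacobian_asymmetry(jacobian(conservative_force, point))
lam_curl = jacobian_asymmetry(jacobian(curl_force, point))
```

In this toy setting the conservative field gives $\lambda$ numerically indistinguishable from 0 and the rotation field gives $\lambda = 1$. For a real model the Jacobian would more plausibly come from automatic differentiation than from finite differences.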

Systematic Failure Mode Catalog: First comprehensive demonstration that non-conservative models cause runaway heating in NVE ensembles (temperature drifts of ~7,000 billion K/s, i.e. about 7 K/ps, for PET-NC and roughly 10x larger for ORB) and equipartition violations in NVT ensembles, where different atom types equilibrate to different temperatures, which is physically impossible at equilibrium.
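The heating mechanism can be illustrated with a deliberately simple toy model; the sketch below is an assumption-laden illustration, not a reproduction of the paper's simulations. A unit-mass particle in a 2D harmonic well is integrated with velocity Verlet, once with the exact conservative force and once with a force that carries a small antisymmetric (curl) error of hypothetical strength `EPS`.

```python
EPS = 0.05  # hypothetical strength of the non-conservative (curl) error

def conservative_force(r):
    x, y = r
    return [-x, -y]

def non_conservative_force(r):
    # Harmonic force plus a small rotational term that has no potential.
    x, y = r
    return [-x - EPS * y, -y + EPS * x]

def velocity_verlet(force, r, v, dt, n_steps):
    """Integrate unit-mass dynamics; returns the final (r, v)."""
    r, v = list(r), list(v)
    f = force(r)
    for _ in range(n_steps):
        v = [vi + 0.5 * dt * fi for vi, fi in zip(v, f)]
        r = [ri + dt * vi for ri, vi in zip(r, v)]
        f = force(r)
        v = [vi + 0.5 * dt * fi for vi, fi in zip(v, f)]
    return r, v

def energy(r, v):
    # Energy of the reference harmonic well plus kinetic energy.
    return 0.5 * sum(x * x for x in r) + 0.5 * sum(x * x for x in v)

r0, v0 = [1.0, 0.0], [0.0, 0.0]
e0 = energy(r0, v0)
e_cons = energy(*velocity_verlet(conservative_force, r0, v0, 0.01, 10_000))
e_nc = energy(*velocity_verlet(non_conservative_force, r0, v0, 0.01, 10_000))
```

With the conservative force the energy stays at its initial value up to integration error, whereas the curl term pumps energy into the system at an exponential rate in this linear toy model. Real models have far more structured errors, so the toy only shows why a non-symmetric Jacobian is incompatible with energy conservation.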

Theoretical Analysis of Force vs. Energy Training: Force-only training overemphasizes high-frequency vibrational modes because force labels carry per-atom gradients that are dominated by stiff, short-range interactions. Energy labels provide a more balanced representation across the frequency spectrum. Additionally, conservative models benefit from backpropagation extending the effective receptive field to approximately 2x the interaction cutoff, while direct-force models are limited to the nominal cutoff radius.

Hybrid Training and Inference Protocol: A practical workflow that combines fast direct-force prediction with conservative corrections:

- Training: Pre-train on direct forces, then fine-tune on energy gradients (2-4x faster than training conservative models from scratch)

- Inference: Multiple Time-Stepping (MTS) where fast non-conservative forces are periodically corrected by slower conservative forces
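The inference scheme above can be sketched as an impulse-style (r-RESPA-like) integrator in which the fast model drives the inner steps and the difference between the conservative and fast forces is applied as a kick every `M` steps. This is a minimal sketch on a toy 2D harmonic system under stated assumptions: the force functions, `EPS`, and `DT` are hypothetical, and the authors' actual integrator may differ in detail.

```python
EPS = 0.05   # hypothetical size of the non-conservative error in the fast model
M = 8        # inner steps per conservative correction
DT = 0.01    # inner time step (arbitrary units, unit masses)

def conservative_force(r):
    x, y = r
    return [-x, -y]

def fast_force(r):
    # Stand-in for a direct-force model: correct force plus a curl error.
    x, y = r
    return [-x - EPS * y, -y + EPS * x]

def kick(v, f, h):
    return [vi + h * fi for vi, fi in zip(v, f)]

def correction(r):
    # Slow force: what the fast model gets wrong relative to the conservative one.
    return [fc - ff for fc, ff in zip(conservative_force(r), fast_force(r))]

def mts_step(r, v, corrected=True):
    """One outer step: half correction kick, M velocity-Verlet steps, half kick."""
    if corrected:
        v = kick(v, correction(r), 0.5 * M * DT)
    for _ in range(M):
        v = kick(v, fast_force(r), 0.5 * DT)
        r = [ri + DT * vi for ri, vi in zip(r, v)]
        v = kick(v, fast_force(r), 0.5 * DT)
    if corrected:
        v = kick(v, correction(r), 0.5 * M * DT)
    return r, v

def energy(r, v):
    return 0.5 * sum(x * x for x in r) + 0.5 * sum(x * x for x in v)

def run(corrected, n_outer=1250):
    r, v = [1.0, 0.0], [0.0, 0.0]
    for _ in range(n_outer):
        r, v = mts_step(r, v, corrected)
    return energy(r, v)

e0 = energy([1.0, 0.0], [0.0, 0.0])
e_mts = run(corrected=True)
e_fast_only = run(corrected=False)
```

The sketch recomputes forces redundantly for clarity; a practical implementation would cache the force at the end of each step and evaluate the conservative model only once per outer step, which is where the cost saving comes from.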

Methodology: Systematic Failure Mode Analysis

The evaluation systematically tests multiple state-of-the-art models across diverse simulation scenarios:

Models tested:

- PET-C/PET-NC (Point Edge Transformer, conservative and non-conservative variants)

- PET-M (hybrid variant jointly predicting both conservative and non-conservative forces)

- ORB-v2 (non-conservative, trained on Alexandria/MPtrj)

- EquiformerV2 (non-conservative equivariant Transformer)

- MACE-MP-0 (conservative message-passing)

- SevenNet (conservative message-passing)

- SOAP-BPNN-C/SOAP-BPNN-NC (descriptor-based baseline, both conservative and non-conservative variants)

Test scenarios:

- NVE stability tests on bulk liquid water, graphene, amorphous carbon, and FCC aluminum

- Thermostat artifact analysis with Langevin and GLE thermostats

- Geometry optimization on water snapshots and QM9 molecules using FIRE and L-BFGS

- MTS validation on OC20 catalysis dataset

- Species-resolved temperature measurements for equipartition testing

Key metrics:

- Jacobian asymmetry ($\lambda$)

- Kinetic temperature drift in NVE

- Velocity-velocity correlations

- Radial distribution functions

- Species-resolved temperatures

- Inference speed benchmarks

Results: Simulation Instability and Hybrid Solutions

Purely non-conservative models are unsuitable for production simulations due to uncontrollable unphysical artifacts that no thermostat can correct. Key findings:

Performance failures:

- Non-conservative models exhibited catastrophic temperature drift in NVE simulations: ~7,000 billion K/s for PET-NC and ~70,000 billion K/s for ORB, with EquiformerV2 comparable to PET-NC

- Strong Langevin thermostats ($\tau=10$ fs) damped diffusion by ~5x, negating the speed benefits of non-conservative models

- Advanced GLE thermostats also failed to control non-conservative drift (ORB reached 1181 K vs. 300 K target)

- Equipartition violations: under stochastic velocity rescaling, O and H atoms equilibrated at different temperatures. For ORB, H atoms reached 336 K and O atoms 230 K against a 300 K target. For PET-NC, deviations were smaller but still significant (H at 296 K, O at 310 K).

- Geometry optimization was more fragile with non-conservative forces: inaccurate NC models (SOAP-BPNN-NC) failed catastrophically, while more accurate ones (PET-NC) could converge with FIRE but showed large force fluctuations with L-BFGS. Non-conservative models consistently had lower success rates across water and QM9 benchmarks.

Hybrid solution success:

- MTS with non-conservative forces corrected every 8 steps ($M=8$) achieved conservative stability with only ~20% overhead compared to a purely non-conservative trajectory. Results were essentially indistinguishable from fully conservative simulations. Higher stride values ($M=16$) became unstable due to resonances between fast degrees of freedom and integration errors.

- Conservative fine-tuning achieved the accuracy of from-scratch training in about 1/3 the total training time (2-4x resource reduction)

- Validated on OC20 catalysis benchmark

Scaling caveat: The authors note that as training datasets grow and models become more expressive, non-conservative artifacts should diminish because accurate models naturally exhibit less non-conservative behavior. However, they argue the best path forward is hybrid approaches rather than waiting for scale to solve the problem.

Recommendation: The optimal production path is hybrid architectures using direct forces for acceleration (via MTS and pre-training) while anchoring models in conservative energy surfaces. This captures computational benefits without sacrificing physical reliability.

Reproducibility Details

Data

Primary training/evaluation:

- Bulk Liquid Water (Cheng et al., 2019): revPBE0-D3 calculations with over 250,000 force/energy targets, chosen for rigorous thermodynamic testing

Generalization tests:

- Graphene, amorphous carbon, FCC aluminum (tested with general-purpose foundation models)

Benchmarks:

- QM9: Geometry optimization tests

- OC20 (Open Catalyst): Oxygen on alloy surfaces for MTS validation

All datasets publicly available through cited sources.

Models

Point Edge Transformer (PET) variants:

- PET-C (Conservative): Forces via energy backpropagation

- PET-NC (Non-Conservative): Direct force prediction head, slightly higher parameter count

- PET-M (Hybrid): Jointly predicts both conservative and non-conservative forces, accuracy within ~10% of the best single-task models

Baseline comparisons:

| Model | Type | Training Data | Notes |
|---|---|---|---|
| ORB-v2 | Non-conservative | Alexandria/MPtrj | Rotationally unconstrained |
| EquiformerV2 | Non-conservative | Alexandria/MPtrj | Equivariant Transformer |
| MACE-MP-0 | Conservative | MPtrj | Equivariant message-passing |
| SevenNet | Conservative | MPtrj | Equivariant message-passing |
| SOAP-BPNN-C | Conservative | Bulk water | Descriptor-based baseline |
| SOAP-BPNN-NC | Non-conservative | Bulk water | Descriptor-based baseline |

Training details:

- Loss functions: PET-C uses joint Energy + Force $L^2$ loss; PET-NC uses Force-only $L^2$ loss

- Fine-tuning protocol: PET-NC converted to conservative via energy head fine-tuning

- MTS configuration: Non-conservative forces with conservative corrections every 8 steps ($M=8$)

Evaluation

Metrics & Software: Molecular dynamics evaluations were performed using i-PI, while geometry optimizations used ASE (Atomic Simulation Environment). Code is provided as an archived Zenodo snapshot; the authors did not link a live, public GitHub repository.

- Jacobian asymmetry ($\lambda$): Quantifies non-conservation via antisymmetric component

- Temperature drift: NVE ensemble stability

- Velocity-velocity correlation ($\hat{c}_{vv}(\omega)$): Thermostat artifact detection

- Radial distribution functions ($g(r)$): Structural accuracy

- Species-resolved temperature: Equipartition testing

- Inference speed: Wall-clock time per MD step

Key results:

| Model | Speed (ms/step) | NVE Stability | Notes |
|---|---|---|---|
| PET-NC | 8.58 | Failed | ~7,000 billion K/s drift |
| PET-C | 19.4 | Stable | 2.3x slower than PET-NC |
| SevenNet | 52.8 | Stable | Conservative baseline |
| PET Hybrid (MTS) | ~10.3 | Stable | ~20% overhead vs. pure NC |
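The ratios quoted in the Notes column follow directly from the per-step timings. A short check of that arithmetic, using only the numbers in the table (the variable names are illustrative):

```python
# Wall-clock cost per MD step in milliseconds, copied from the table above.
timings_ms = {
    "PET-NC": 8.58,
    "PET-C": 19.4,
    "SevenNet": 52.8,
    "PET Hybrid (MTS)": 10.3,
}

baseline = timings_ms["PET-NC"]

# Cost of each model relative to the pure non-conservative model.
relative_cost = {name: t / baseline for name, t in timings_ms.items()}

# Fractional overhead of the hybrid MTS scheme over pure PET-NC.
mts_overhead = relative_cost["PET Hybrid (MTS)"] - 1.0

# Speedup of the hybrid scheme over the fully conservative PET-C.
mts_speedup_vs_conservative = timings_ms["PET-C"] / timings_ms["PET Hybrid (MTS)"]
```

This gives a PET-C cost of about 2.3x PET-NC, an MTS overhead of about 20%, and a hybrid speedup of roughly 1.9x over PET-C.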

Thermostat artifacts:

- Langevin ($\tau=10$ fs) reduced diffusion by ~5x (weaker coupling at $\tau=100$ fs reduced diffusion by ~1.5x)

- GLE thermostats also failed to control non-conservative drift

- Equipartition violations under SVR: ORB showed H at 336 K and O at 230 K (target 300 K); PET-NC showed smaller but significant species-resolved deviations
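The species-resolved temperature behind the equipartition test can be sketched as the kinetic temperature evaluated separately for each element, $T_s = \sum_{i \in s} m_i |\mathbf{v}_i|^2 / (3 N_s k_B)$. The snippet below is a minimal illustration in SI units; it assumes three degrees of freedom per atom (no constraints or center-of-mass correction), and the helper `atom_at_temperature` and the synthetic velocities are hypothetical, built only to reproduce the H and O values quoted for ORB.

```python
import math

K_B = 1.380649e-23       # Boltzmann constant, J/K
AMU = 1.66053906660e-27  # atomic mass unit, kg

def species_temperatures(species, masses, velocities):
    """Kinetic temperature per species, assuming 3 degrees of freedom per atom."""
    sums, counts = {}, {}
    for s, m, v in zip(species, masses, velocities):
        sums[s] = sums.get(s, 0.0) + m * sum(c * c for c in v)
        counts[s] = counts.get(s, 0) + 1
    return {s: sums[s] / (3 * counts[s] * K_B) for s in sums}

def atom_at_temperature(mass, temperature):
    # Deterministic velocity whose kinetic energy is exactly (3/2) k_B T.
    s = math.sqrt(K_B * temperature / mass)
    return (s, s, s)

M_H, M_O = 1.008 * AMU, 15.999 * AMU
species = ["O", "H", "H"] * 2
masses = [M_O, M_H, M_H] * 2

# Synthetic velocities: O atoms at 230 K, H atoms at 336 K.
velocities = [
    atom_at_temperature(m, 230.0 if s == "O" else 336.0)
    for s, m in zip(species, masses)
]
temps = species_temperatures(species, masses, velocities)
```

In an equilibrated simulation with a correct force field, all entries of `temps` should agree with the thermostat target within statistical noise; a persistent gap between species is the signature of the equipartition violation described above.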

Optimization failures:

- Non-conservative models showed lower geometry optimization success rates across water and QM9 benchmarks, with inaccurate NC models failing catastrophically

Hardware

Compute resources:

- Training: From-scratch baseline models were trained on 4x Nvidia H100 GPUs for around two days.

- Fine-tuning: Conservative fine-tuning used a single Nvidia H100 GPU for one day.

- This hybrid fine-tuning approach achieved a 2-4x reduction in computational resources compared to training conservative models from scratch.

Reproduction resources:

| Artifact | Type | License | Notes |
|---|---|---|---|
| Zenodo repository | Code/Data | Unknown | Code and data to reproduce all results |
| MTS inference tutorial | Other | Unknown | Multiple time-stepping dynamics tutorial |
| Conservative fine-tuning tutorial | Other | Unknown | Fine-tuning workflow tutorial |

Paper Information

Citation: Bigi, F., Langer, M. F., & Ceriotti, M. (2025). The dark side of the forces: assessing non-conservative force models for atomistic machine learning. Proceedings of the 42nd International Conference on Machine Learning (ICML).

Publication: ICML 2025

@inproceedings{bigi2025dark,
  title={The dark side of the forces: assessing non-conservative force models for atomistic machine learning},
  author={Bigi, Filippo and Langer, Marcel F and Ceriotti, Michele},
  booktitle={Proceedings of the 42nd International Conference on Machine Learning},
  year={2025}
}
