A Systematization of Scientific Language Models

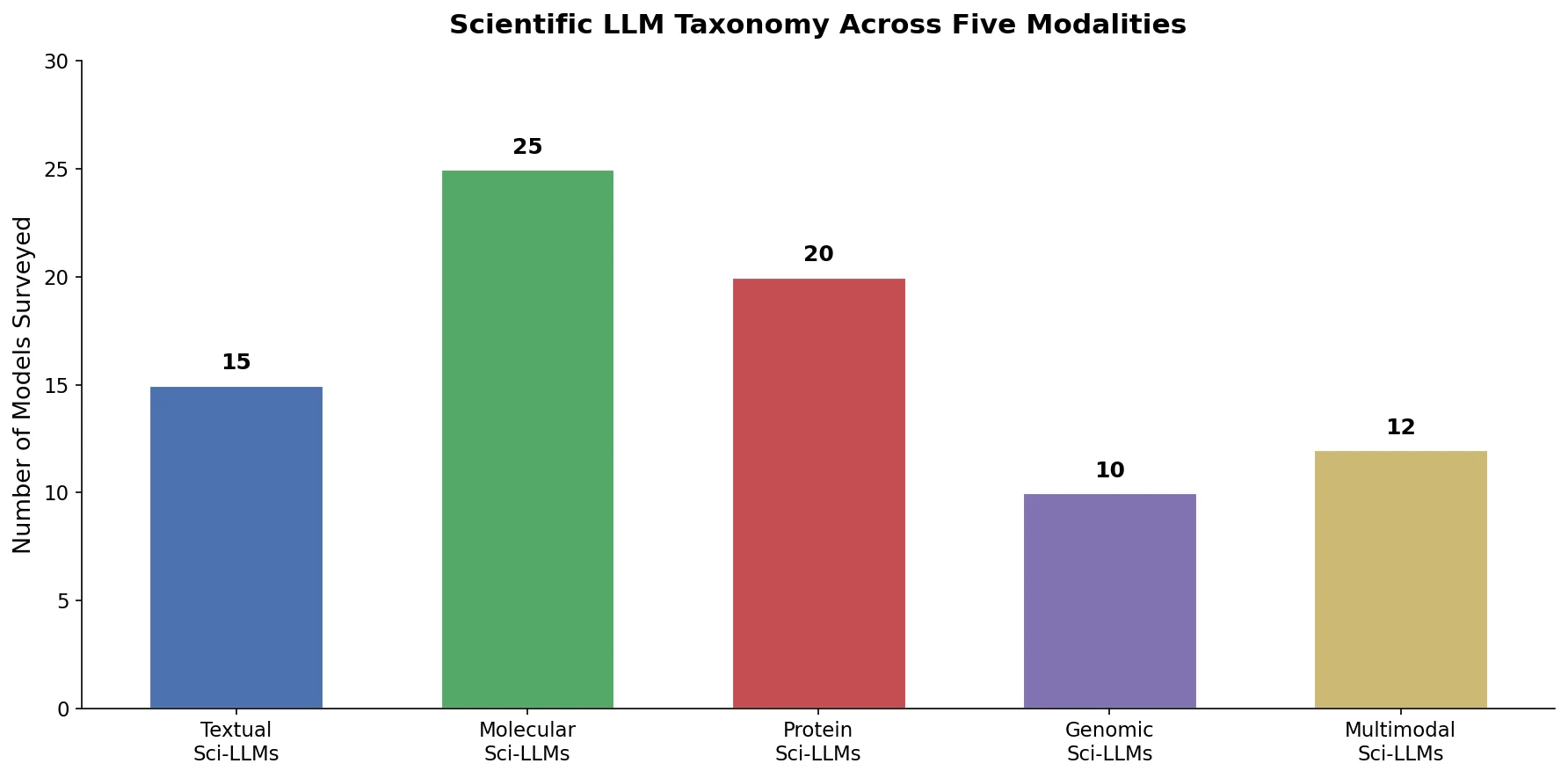

This paper is a systematization (survey) of scientific large language models (Sci-LLMs) designed for the biological and chemical domains. It covers five main branches of scientific language modeling: textual, molecular, protein, genomic, and multimodal LLMs. For each branch, the authors analyze model architectures, capabilities, training datasets, evaluation benchmarks, and assessment criteria, then identify open challenges and future research directions.

Motivation: Bridging Scientific Languages and LLMs

Large language models have demonstrated strong capabilities in natural language understanding, but scientific research involves specialized “languages” that differ fundamentally from natural text. Chemical molecules are expressed as SMILES or SELFIES strings, proteins as amino acid sequences, and genomes as nucleotide sequences. Each of these language systems has its own vocabulary and grammar. General-purpose LLMs like ChatGPT and GPT-4 often fail to properly handle these scientific data types because the semantics and grammar of scientific languages diverge substantially from natural language.

Prior surveys have focused on individual modalities (molecules, proteins, or genomes) in isolation. No comprehensive review had unified these language modeling advances into a single framework. This survey fills that gap by systematically covering all five modalities and, notably, the emerging area of multimodal Sci-LLMs that integrate multiple scientific languages.

Taxonomy of Scientific Language Models

The survey organizes Sci-LLMs into a clear taxonomic framework built on two axes: the scientific language modality and the model architecture type.

Scientific Language Modalities

The authors define five categories of Sci-LLMs:

Text-Sci-LLMs: LLMs trained on scientific textual corpora (medical, biological, chemical, and comprehensive domains). Examples include BioBERT, BioGPT, ChemBERT, SciBERT, and Galactica.

Mol-LLMs: Models that process molecular languages (SMILES, SELFIES, InChI). These include encoder-only models like ChemBERTa and MolFormer for property prediction, decoder-only models like MolGPT for molecular generation, and encoder-decoder models like Molecular Transformer and Chemformer for reaction prediction.

Prot-LLMs: Models operating on protein amino acid sequences. The ESM series (ESM-1b, ESM-2) and ProtTrans serve as encoders for function and structure prediction, while ProGen and ProtGPT2 generate novel protein sequences.

Gene-LLMs: Models for DNA and RNA sequences, including DNABERT, Nucleotide Transformer, HyenaDNA, and Evo, covering tasks from variant effect prediction to genome-scale sequence modeling.

MM-Sci-LLMs: Multimodal models integrating multiple scientific data types (molecule-text, protein-text, gene-cell-text, molecule-protein), such as MoleculeSTM, BioT5, Mol-Instructions, and BioMedGPT.
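To make the idea of separate "vocabularies" concrete, the following is a minimal sketch of how the three sequence languages might be split into tokens. The regular expression is a simplified assumption for illustration (it is not taken from the survey or from any specific model), and the function names are hypothetical. Character-level protein tokens and overlapping k-mers for DNA are common choices, but individual models differ.

```python
import re

# Simplified SMILES token pattern (an illustrative assumption, not a full grammar):
# bracket atoms, two-letter halogens, two-digit ring closures, organic-subset atoms,
# aromatic atoms, bond/branch symbols, and single-digit ring closures.
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]|Br|Cl|%\d{2}|[BCNOPSFI]|[bcnops]|[=#\-+\\/().:~@*$]|\d"
)


def tokenize_smiles(smiles: str) -> list[str]:
    """Split a SMILES string into atom, bond, branch, and ring-closure tokens."""
    tokens = SMILES_TOKEN.findall(smiles)
    # If any character was skipped by the pattern, the pieces will not rebuild the input.
    if "".join(tokens) != smiles:
        raise ValueError(f"unrecognized characters in SMILES: {smiles!r}")
    return tokens


def tokenize_protein(sequence: str) -> list[str]:
    """Treat each amino acid letter as one token."""
    return list(sequence)


def tokenize_dna_kmers(sequence: str, k: int = 3) -> list[str]:
    """Split a nucleotide sequence into overlapping k-mers with stride 1."""
    return [sequence[i:i + k] for i in range(len(sequence) - k + 1)]
```

For example, `tokenize_smiles("CC(=O)Cl")` keeps `Cl` as a single chlorine token instead of reading it as a carbon followed by an unknown symbol, which is the kind of grammar a natural-language tokenizer has no reason to respect.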

Architecture Classification

For each modality, models are categorized into three architecture types:

- Encoder-only: Based on BERT/RoBERTa, these models learn fixed-size representations via masked language modeling. They excel at discriminative tasks like property prediction and classification.

- Decoder-only: Based on GPT, these models perform autoregressive generation. They are used for de novo molecule design, protein sequence generation, and DNA sequence generation.

- Encoder-decoder: Based on architectures like T5 or BART, these handle sequence-to-sequence tasks such as reaction prediction, molecule captioning, and protein sequence-structure translation.
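The three architecture types differ mainly in what the model sees and what it is asked to predict. The sketch below builds toy training examples for each objective from a token list. It is a simplified illustration under stated assumptions: the `[MASK]` and `<s>` symbols and all function names are placeholders, and real pipelines choose mask positions at random and operate on token ids.

```python
MASK = "[MASK]"  # placeholder mask symbol (assumption)
START = "<s>"    # placeholder start-of-sequence symbol (assumption)


def masked_lm_example(tokens, mask_positions):
    """Encoder-only objective: hide some positions and predict them
    using context from both the left and the right."""
    positions = set(mask_positions)
    inputs = [MASK if i in positions else tok for i, tok in enumerate(tokens)]
    targets = {i: tokens[i] for i in sorted(positions)}
    return inputs, targets


def causal_lm_examples(tokens):
    """Decoder-only objective: predict each token from its left context only."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


def seq2seq_example(source_tokens, target_tokens):
    """Encoder-decoder objective: read the whole source, then generate
    the target one token at a time."""
    return {
        "encoder_input": list(source_tokens),
        "decoder_steps": causal_lm_examples([START] + list(target_tokens)),
    }
```

With a protein fragment such as `list("MKTAY")` and mask positions `[1, 3]`, the encoder-only example hides `K` and `A`, while the decoder-only examples only ever condition on the prefix to the left of the predicted residue.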

Comprehensive Catalog of Models, Datasets, and Benchmarks

A central contribution of the survey is its exhaustive cataloging of resources across all five modalities. The authors compile detailed summary tables covering over 100 Sci-LLMs, their parameter counts, base architectures, training data, and capabilities.

Molecular LLMs

The survey documents a rich landscape of Mol-LLMs:

Encoder-only models for property prediction include SMILES-BERT, ChemBERTa, ChemBERTa-2, MolBERT, MolFormer, MG-BERT, GROVER, MAT, Uni-Mol, and others. These models are pre-trained on ZINC, PubChem, or ChEMBL datasets and fine-tuned for molecular property prediction tasks on benchmarks like MoleculeNet.

Decoder-only models for molecular generation include MolGPT, SMILES GPT, iupacGPT, cMolGPT, and Taiga. These generate SMILES strings autoregressively, often combining GPT with reinforcement learning for property optimization.

Encoder-decoder models for reaction prediction include Molecular Transformer, Retrosynthesis Transformer, Chemformer, BARTSmiles, Graph2SMILES, and MOLGEN. These handle forward reaction prediction and retrosynthesis.
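The grouping above can be read as a small lookup structure keyed by architecture type. The sketch below encodes a handful of the models named in this section. The class and field names are hypothetical, and the entries are a partial illustration, not the survey's full tables.

```python
from dataclasses import dataclass
from enum import Enum


class Architecture(Enum):
    ENCODER_ONLY = "encoder-only"
    DECODER_ONLY = "decoder-only"
    ENCODER_DECODER = "encoder-decoder"


@dataclass(frozen=True)
class MolLLM:
    name: str
    architecture: Architecture
    task: str


# A few entries taken from the lists above (not exhaustive).
CATALOG = [
    MolLLM("ChemBERTa", Architecture.ENCODER_ONLY, "property prediction"),
    MolLLM("MolFormer", Architecture.ENCODER_ONLY, "property prediction"),
    MolLLM("MolGPT", Architecture.DECODER_ONLY, "molecular generation"),
    MolLLM("cMolGPT", Architecture.DECODER_ONLY, "molecular generation"),
    MolLLM("Molecular Transformer", Architecture.ENCODER_DECODER, "reaction prediction"),
    MolLLM("Chemformer", Architecture.ENCODER_DECODER, "reaction prediction"),
]


def by_architecture(catalog, architecture):
    """Return the names of all catalog entries with the given architecture."""
    return [m.name for m in catalog if m.architecture is architecture]
```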

Key Datasets Surveyed

The survey catalogs pre-training datasets and benchmarks for each modality:

| Modality | Pre-training Sources | Key Benchmarks |
|---|---|---|
| Text | PubMed, PMC, arXiv, Semantic Scholar | MMLU, MedQA, PubMedQA, SciEval |
| Molecule | ZINC, PubChem, ChEMBL, USPTO, GDB-17 | MoleculeNet, GuacaMol, MOSES, SPECTRA |
| Protein | UniRef50/90/100, BFD, PDB, AlphaFoldDB | CASP, TAPE, ProteinGym, FLIP, PEER |
| Genome | GRCh38, 1000 Genomes, ENCODE | NT-Bench, GenBench, BEACON |
| Multimodal | ChEBI-20, PubChemSTM, Mol-Instructions | Various cross-modal retrieval and generation tasks |

Evaluation Metrics

For molecular generation, the survey details standard metrics:

- Validity: percentage of chemically viable molecules

- Uniqueness: fraction of distinct generated structures

- Novelty: fraction not present in the training set

- Internal diversity: measured as

$$ \text{IntDiv}_{p}(G) = 1 - \sqrt[p]{\frac{1}{|G|^{2}} \sum_{m_{1}, m_{2} \in G} T(m_{1}, m_{2})^{p}} $$

where $T(m_{1}, m_{2})$ is the Tanimoto similarity between molecules $m_{1}$ and $m_{2}$.

- Frechet ChemNet Distance (FCD): comparing distributions of generated and reference molecules

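$$ \text{FCD}(G, R) = \| \mu_{G} - \mu_{R} \|_{2}^{2} + \text{Tr}\left[\Sigma_{G} + \Sigma_{R} - 2(\Sigma_{G}\Sigma_{R})^{1/2}\right] $$

where $\mu_{G}, \Sigma_{G}$ and $\mu_{R}, \Sigma_{R}$ are the mean and covariance of ChemNet activations for the generated set $G$ and the reference set $R$.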
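The metrics above can be sketched in a few lines of plain Python. Several choices here are assumptions made for illustration: fingerprints are represented as sets of feature ids, the validity check is passed in as a callable (a real pipeline would use a cheminformatics toolkit), uniqueness and novelty are computed over the molecules handed to them (benchmarks differ on whether the denominator is all generated or only valid molecules), and the Fréchet term is shown only for the special case of diagonal covariances, where the trace reduces to a sum of squared differences of standard deviations.

```python
from itertools import product
from math import sqrt


def tanimoto(a, b):
    """Tanimoto similarity between two fingerprints given as sets of feature ids."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def validity(generated, is_valid):
    """Fraction of generated strings accepted by the supplied validity check."""
    return sum(1 for m in generated if is_valid(m)) / len(generated)


def uniqueness(molecules):
    """Fraction of distinct structures among the given molecules."""
    return len(set(molecules)) / len(molecules)


def novelty(molecules, training_set):
    """Fraction of distinct molecules that do not appear in the training set."""
    unique = set(molecules)
    return len(unique - set(training_set)) / len(unique)


def internal_diversity(fingerprints, p=1):
    """IntDiv_p(G): one minus the p-th power mean of all pairwise similarities,
    with self-pairs included as in the 1/|G|^2 normalization."""
    n = len(fingerprints)
    total = sum(tanimoto(a, b) ** p for a, b in product(fingerprints, repeat=2))
    return 1 - (total / n ** 2) ** (1 / p)


def frechet_distance_diagonal(mu_g, var_g, mu_r, var_r):
    """Frechet distance when both covariances are diagonal (a simplification):
    Tr[S_G + S_R - 2 (S_G S_R)^(1/2)] becomes sum((sqrt(v_g) - sqrt(v_r))^2)."""
    mean_term = sum((g - r) ** 2 for g, r in zip(mu_g, mu_r))
    trace_term = sum((sqrt(g) - sqrt(r)) ** 2 for g, r in zip(var_g, var_r))
    return mean_term + trace_term
```

For two fingerprints `{1, 2}` and `{2, 3}` with `p=1`, the four pairwise similarities are 1, 1, 1/3, and 1/3, so the internal diversity is 1 - (8/3)/4 = 1/3. The full FCD uses dense covariance matrices of ChemNet activations and a matrix square root, which this sketch does not attempt.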

For protein generation, analogous metrics include perplexity, Frechet Protein Distance (FPD), foldability (pLDDT), sequence recovery, and novelty (sequence identity).
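Two of these protein metrics have simple closed forms. The sketch below assumes natural-log token probabilities for perplexity and equal-length, already aligned sequences for sequence recovery. Both function names are hypothetical, and FPD and pLDDT are omitted because they depend on external models.

```python
import math


def perplexity(token_log_probs):
    """Exponential of the average negative log-likelihood per token (natural log)."""
    return math.exp(-sum(token_log_probs) / len(token_log_probs))


def sequence_recovery(designed, native):
    """Fraction of positions where the designed residue matches the native one.
    Assumes the two sequences are already aligned and of equal length."""
    if len(designed) != len(native):
        raise ValueError("sequences must have equal length")
    matches = sum(d == n for d, n in zip(designed, native))
    return matches / len(native)
```

A model that assigns probability 1/4 to every residue has a perplexity of 4, and a designed sequence `"MKTA"` scored against a native sequence `"MKSA"` recovers three of four positions.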

Critical Challenges and Future Directions

The survey identifies four major challenges and seven future research directions for Sci-LLMs.

Challenges

Training data limitations: Sci-LLM training datasets are considerably smaller than those for general LLMs. For example, ProGen was trained on 280M protein sequences (tens of billions of tokens), while ChatGPT used approximately 570 billion tokens, roughly an order of magnitude more. Scaling laws suggest larger datasets would improve performance, and advances in sequencing technologies may help close this gap.
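The size gap can be checked with back-of-the-envelope arithmetic. In the sketch below, the sequence and token counts come from the text, while the mean sequence lengths of 100 and 300 residues are purely hypothetical bounds chosen to bracket "tens of billions of tokens" under one-token-per-residue tokenization.

```python
PROGEN_SEQUENCES = 280_000_000         # from the text
GENERAL_LLM_TOKENS = 570_000_000_000   # from the text

# Hypothetical mean sequence lengths (assumptions, not reported values).
ASSUMED_MEAN_LENGTHS = (100, 300)


def estimated_tokens(num_sequences, mean_length):
    """Token count if every residue is one token."""
    return num_sequences * mean_length


def scale_gap(general_tokens, scientific_tokens):
    """How many times larger the general-purpose corpus is."""
    return general_tokens / scientific_tokens


for length in ASSUMED_MEAN_LENGTHS:
    tokens = estimated_tokens(PROGEN_SEQUENCES, length)
    gap = scale_gap(GENERAL_LLM_TOKENS, tokens)
    print(f"mean length {length}: {tokens / 1e9:.0f}B tokens, gap {gap:.1f}x")
```

Under these assumed lengths the protein corpus lands between 28 and 84 billion tokens, which puts the gap at roughly 7x to 20x.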

Architecture mismatch: Standard Transformer architectures face difficulties with scientific languages. Scientific sequences (proteins with hundreds or thousands of amino acids, DNA with millions of base pairs) are far longer than typical natural language sentences. Additionally, 3D structural information is critical for function prediction but does not naturally map to sequence tokens. Autoregressive generation is also a poor fit since biological sequences function as a whole rather than being read left-to-right.
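One reason sequence length matters is that standard self-attention compares every token with every other token, so the number of attention scores grows with the square of the length. The sketch below illustrates that growth. The example lengths are round, hypothetical figures consistent with the ranges mentioned above, and the function names are placeholders.

```python
def attention_score_entries(sequence_length: int) -> int:
    """Number of pairwise scores in one full self-attention matrix."""
    return sequence_length * sequence_length


def relative_cost(sequence_length: int, baseline_length: int) -> float:
    """How many times more scores are needed than for the baseline length."""
    return attention_score_entries(sequence_length) / attention_score_entries(baseline_length)


# Illustrative lengths (assumptions): a short sentence, a protein, a long DNA region.
EXAMPLE_LENGTHS = {
    "sentence": 30,
    "protein": 1_000,
    "dna_region": 1_000_000,
}

for name, length in EXAMPLE_LENGTHS.items():
    print(f"{name}: {attention_score_entries(length):,} scores per head per layer")
```

Going from 1,000 to 1,000,000 tokens multiplies the per-head score count by a factor of one million, which is why long-context designs such as HyenaDNA move away from full attention.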

Evaluation gaps: Computational metrics for generated molecules and proteins provide only indirect quality measures. Wet-lab validation remains the gold standard but is beyond the scope of most AI research teams. Better computational evaluation methods that correlate with experimental outcomes are needed.

Ethics: Sensitive biological data raises privacy concerns. The potential for misuse (e.g., generating harmful substances) requires careful safeguards. Algorithmic bias and equitable access to Sci-LLM benefits also demand attention.

Future Directions

- Larger-scale, cross-modal training datasets with strong semantic alignment across modalities

- Incorporating 3D structural and temporal information into language-based modeling, including structural motifs as tokens

- Integration with external knowledge sources such as Gene Ontology and chemical knowledge graphs to reduce hallucination

- Coupling with physical simulation (e.g., molecular dynamics) to ground language models in physical reality

- Augmenting Sci-LLMs with specialized tools and agents, following the success of tool-augmented LLM agents such as ChemCrow

- Development of computational evaluation metrics that are both fast and accurate, enabling rapid research iteration

- Super-alignment with human ethics, ensuring ethical reasoning is deeply integrated into Sci-LLM behavior

Reproducibility Details

Data

This is a survey paper that does not present new experimental results. The authors catalog extensive datasets across five modalities (see tables in the paper for comprehensive listings). The survey itself is maintained as an open resource.

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| Scientific-LLM-Survey GitHub | Other | Not specified | Curated list of papers, models, and resources |

Hardware

Not applicable (survey paper).

Paper Information

Citation: Zhang, Q., Ding, K., Lyv, T., Wang, X., Yin, Q., Zhang, Y., Yu, J., Wang, Y., Li, X., Xiang, Z., Feng, K., Zhuang, X., Wang, Z., Qin, M., Zhang, M., Zhang, J., Cui, J., Huang, T., Yan, P., Xu, R., Chen, H., Li, X., Fan, X., Xing, H., & Chen, H. (2025). Scientific Large Language Models: A Survey on Biological & Chemical Domains. ACM Computing Surveys, 57(6), 1–38. https://doi.org/10.1145/3715318

@article{zhang2025scientific,
  title={Scientific Large Language Models: A Survey on Biological \& Chemical Domains},
  author={Zhang, Qiang and Ding, Keyan and Lyv, Tianwen and Wang, Xinda and Yin, Qingyu and Zhang, Yiwen and Yu, Jing and Wang, Yuhao and Li, Xiaotong and Xiang, Zhuoyi and Feng, Kehua and Zhuang, Xiang and Wang, Zeyuan and Qin, Ming and Zhang, Mengyao and Zhang, Jinlu and Cui, Jiyu and Huang, Tao and Yan, Pengju and Xu, Renjun and Chen, Hongyang and Li, Xiaolin and Fan, Xiaohui and Xing, Huabin and Chen, Huajun},
  journal={ACM Computing Surveys},
  volume={57},
  number={6},
  pages={1--38},
  year={2025},
  doi={10.1145/3715318}
}