A Resource for Chemistry Instruction Tuning

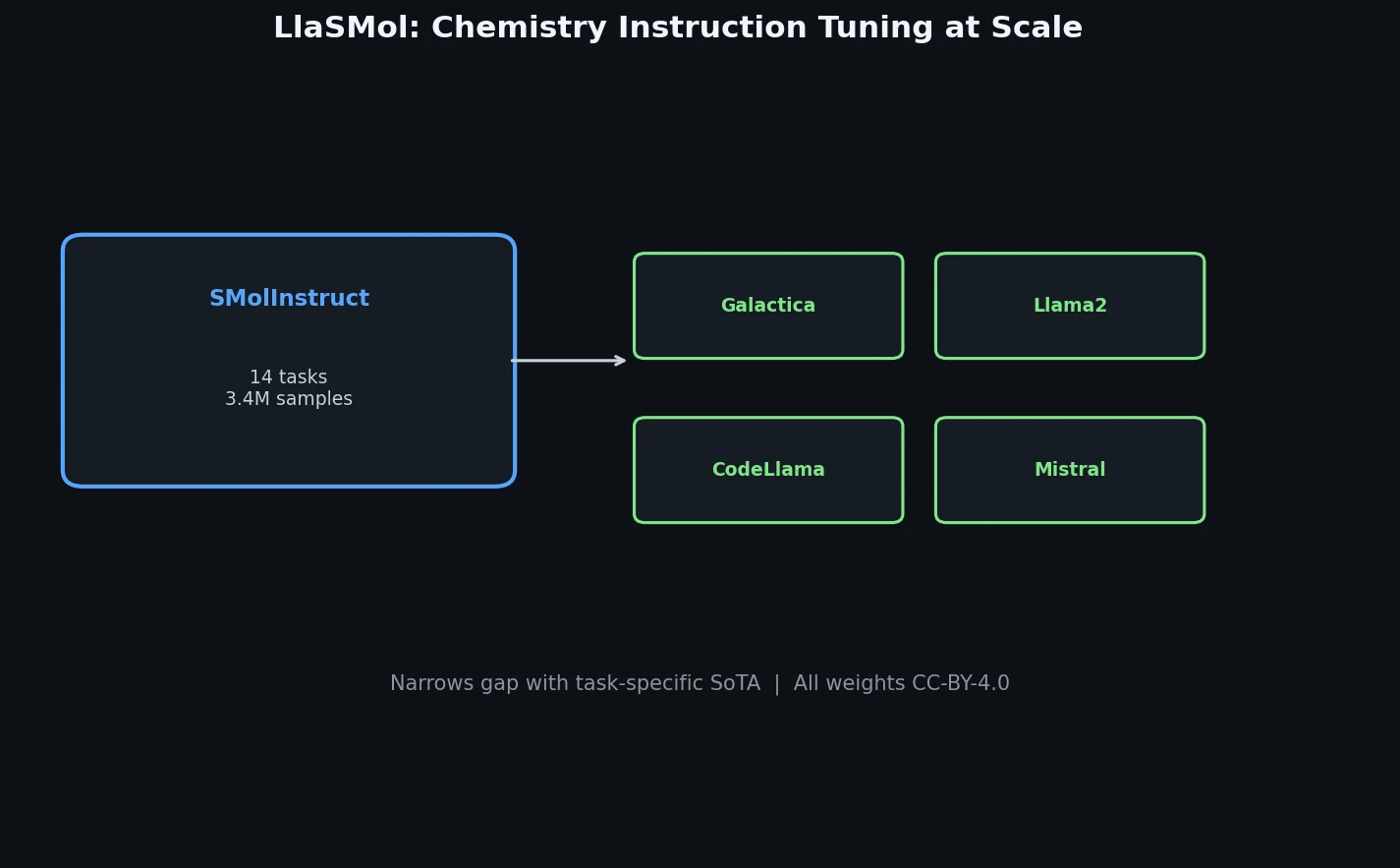

This is a Resource paper that contributes both a large-scale instruction tuning dataset (SMolInstruct) and a family of fine-tuned LLMs (LlaSMol) for chemistry tasks. The primary contribution is SMolInstruct, a dataset of 3.3 million samples across 14 chemistry tasks, paired with systematic experiments showing that instruction-tuned open-source LLMs can substantially outperform GPT-4 and Claude 3 Opus on chemistry benchmarks. The dataset construction methodology, quality control pipeline, and careful data splitting are central to the paper’s value.

Why LLMs Struggle with Chemistry Tasks

Prior work demonstrated that general-purpose LLMs perform poorly on chemistry tasks. Guo et al. (2023) found that GPT-4, while outperforming other LLMs, falls far short of task-specific deep learning models, particularly on tasks requiring precise understanding of SMILES representations. Fang et al. (2023) attempted instruction tuning with Mol-Instructions, but the resulting models still performed well below task-specific baselines.

These results raised a fundamental question: are LLMs inherently limited for chemistry, or is the problem simply insufficient training data? The authors argue it is the latter. Previous instruction tuning datasets suffered from limited scale (Mol-Instructions had 1.3M samples with fewer task types), lower quality (numerous low-quality molecular descriptions, mislabeled reactants/reagents in reaction data), and suboptimal design choices (using SELFIES instead of canonical SMILES, inconsistent data splitting that allowed leakage).

SMolInstruct: A Comprehensive Chemistry Instruction Dataset

The core innovation is the SMolInstruct dataset, which addresses the limitations of prior datasets through three design principles:

Scale and comprehensiveness. SMolInstruct contains 3.3M samples across 14 tasks organized into four categories:

- Name conversion (4 tasks): IUPAC-to-formula, IUPAC-to-SMILES, SMILES-to-formula, SMILES-to-IUPAC, sourced from PubChem

- Property prediction (6 tasks): ESOL, Lipo, BBBP, ClinTox, HIV, SIDER, sourced from MoleculeNet

- Molecule description (2 tasks): molecule captioning and molecule generation, sourced from ChEBI-20 and Mol-Instructions

- Chemical reactions (2 tasks): forward synthesis and retrosynthesis, sourced from USPTO-full

Quality control. The authors apply rigorous curation: invalid SMILES are filtered using RDKit, mislabeled reactants/reagents in USPTO-full are corrected by comparing atom mappings with products, low-quality molecular descriptions are removed using pattern-based rules, and duplicates are eliminated.

Careful data splitting. To prevent data leakage across related tasks (e.g., forward synthesis and retrosynthesis share the same reactions), the authors ensure matched samples across reverse tasks are placed together in either training or evaluation sets. Samples with identical inputs but different outputs are also grouped together to prevent exaggerated performance estimates.
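The grouping idea can be sketched as follows. This is a minimal illustration, not the authors' pipeline: the `group_key` field, the `split_by_group` helper, and the hash-based assignment are all assumptions introduced here. The point is only that a whole group of related samples lands on one side of the split.

```python
import hashlib
from collections import defaultdict


def split_by_group(samples, test_fraction=0.1):
    """Assign whole groups of related samples to train or test.

    Each sample is a dict with a hypothetical "group_key" field, e.g. the
    canonical reaction shared by a forward-synthesis sample and its
    retrosynthesis counterpart.
    """
    groups = defaultdict(list)
    for sample in samples:
        groups[sample["group_key"]].append(sample)

    train, test = [], []
    for key, members in groups.items():
        # Hash the key so the assignment is deterministic across runs.
        digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
        bucket = (int(digest, 16) % 10_000) / 10_000
        (test if bucket < test_fraction else train).extend(members)
    return train, test


samples = [
    {"task": "forward_synthesis", "group_key": "rxn-1"},
    {"task": "retrosynthesis", "group_key": "rxn-1"},
    {"task": "forward_synthesis", "group_key": "rxn-2"},
    {"task": "retrosynthesis", "group_key": "rxn-2"},
]
train, test = split_by_group(samples, test_fraction=0.5)
train_keys = {s["group_key"] for s in train}
test_keys = {s["group_key"] for s in test}
```

Because the split is decided per group rather than per sample, a reaction seen in forward synthesis during training cannot reappear as a retrosynthesis test case.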

Additionally, all SMILES representations are canonicalized, and special tags (e.g., `<SMILES>...</SMILES>`) encapsulate different information types within the instruction templates.
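One practical benefit of such tags is that tagged spans are easy to build and to parse back out of a model response. The sketch below assumes a made-up instruction template and two hypothetical helpers (`build_prompt`, `extract_tagged`); the actual SMolInstruct templates may differ.

```python
import re


def build_prompt(smiles):
    """Wrap a SMILES string in tags inside a hypothetical instruction template."""
    return f"What is the molecular formula of <SMILES> {smiles} </SMILES>?"


def extract_tagged(text, tag):
    """Return the contents of every <TAG>...</TAG> span in the text."""
    pattern = rf"<{re.escape(tag)}>\s*(.*?)\s*</{re.escape(tag)}>"
    return re.findall(pattern, text, flags=re.DOTALL)


prompt = build_prompt("CCO")
response = "The product is <SMILES> CC(=O)O </SMILES>."
answers = extract_tagged(response, "SMILES")
```

Here `answers` holds only the tagged molecule, so free-form text around it in the response does not interfere with scoring.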

Experimental Setup: Four Base Models and Comprehensive Baselines

The authors fine-tune four open-source LLMs using LoRA (applied to all attention and FFN linear layers, with rank and alpha both set to 16):

- Galactica 6.7B: pretrained on scientific text including chemistry data

- Llama 2 7B: general-purpose LLM

- Code Llama 7B: code-focused variant of Llama 2

- Mistral 7B: general-purpose LLM

Training uses 8-bit AdamW with learning rate 1e-4, cosine scheduler, and 3 epochs. Only 0.58% of parameters are fine-tuned (approximately 41.9M parameters). Beam search is used at inference.
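The 41.9M figure can be reproduced with a short calculation under assumed layer shapes. The sketch below uses the publicly documented Mistral 7B configuration (hidden size 4096, 32 layers, feed-forward size 14336, grouped-query key/value projections of width 1024); these shapes are not stated in this summary, and `lora_params` is a helper introduced here for illustration.

```python
def lora_params(d_in, d_out, rank):
    """LoRA adds A (rank x d_in) and B (d_out x rank) to a linear layer."""
    return rank * (d_in + d_out)


# Assumed Mistral 7B shapes (from its public config, not from the paper).
HIDDEN, KV_DIM, FFN, LAYERS, RANK = 4096, 1024, 14336, 32, 16

per_layer = sum(
    lora_params(d_in, d_out, RANK)
    for d_in, d_out in [
        (HIDDEN, HIDDEN),  # q_proj
        (HIDDEN, KV_DIM),  # k_proj (grouped-query attention)
        (HIDDEN, KV_DIM),  # v_proj
        (HIDDEN, HIDDEN),  # o_proj
        (HIDDEN, FFN),     # gate_proj
        (HIDDEN, FFN),     # up_proj
        (FFN, HIDDEN),     # down_proj
    ]
)
total = per_layer * LAYERS
print(f"{total:,} trainable LoRA parameters")
```

Under these assumptions the total is 41,943,040, which rounds to the reported 41.9M; against roughly 7.2B base parameters that is about 0.58%.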

Baselines include:

- General LLMs without fine-tuning: GPT-4, Claude 3 Opus, and the four base models

- Chemistry-specific LLMs: Molinst (Llama 2 tuned on Mol-Instructions), ChemLLM

- Task-specific non-LLM models: STOUT for name conversion, Uni-Mol for property prediction, MolT5 for molecule description, RSMILES and Molecular Transformer for reaction prediction

Main Results

| Task Category | Best LlaSMol | GPT-4 | Improvement |
|---|---|---|---|
| Name conversion (NC-I2F, EM%) | 87.9 (Mistral) | 8.7 | +79.2 |
| Name conversion (NC-I2S, EM%) | 70.1 (Mistral) | 3.3 | +66.8 |
| Property prediction (PP-ESOL, RMSE) | 1.150 (Mistral) | 2.570 | -1.42 (lower is better) |
| Property prediction (PP-BBBP, Acc%) | 74.6 (Mistral) | 62.9 | +11.7 |
| Molecule captioning (METEOR) | 0.452 (Mistral) | 0.188 | +0.264 |
| Molecule generation (FTS%) | 61.7 (Mistral) | 42.6 | +19.1 |
| Forward synthesis (EM%) | 63.3 (Mistral) | 1.6 | +61.7 |
| Retrosynthesis (EM%) | 32.9 (Mistral) | 0.0 | +32.9 |

LlaSMolMistral consistently outperforms all other LLMs and the other LlaSMol variants. It also surpasses task-specific SoTA models on PP-ClinTox (93.1 vs. 92.4) and PP-SIDER (70.7 vs. 70.0), though it has not yet matched SoTA on most other tasks.

Ablation Study

The ablation study examines three variants:

Without canonicalization: Performance drops on most tasks, with substantial decreases on forward synthesis (63.3 to 53.7 EM%) and retrosynthesis (32.9 to 23.8 EM%), confirming that canonicalized SMILES reduce learning difficulty.

Using SELFIES instead of SMILES: While SELFIES achieves slightly higher validity (100% vs. 99.7% on some tasks), it results in worse performance overall. SELFIES strings are typically longer than SMILES, making them harder for models to process accurately. This finding contradicts claims from prior work (Fang et al., 2023) that SELFIES should be preferred.

Training on Mol-Instructions instead of SMolInstruct: Using the same base model (Mistral) and identical training settings, the Mol-Instructions-trained model performs drastically worse, achieving near-zero accuracy on name conversion and property prediction tasks, and much lower performance on shared tasks (MC, MG, FS, RS).

Additional Analysis

Multi-task training generally outperforms single-task training, with particularly large improvements on PP-ESOL (RMSE 20.616 to 1.150) and molecule generation (FTS 33.1% to 61.7%). Increasing the number of trainable LoRA parameters from 6.8M (0.09%) to 173.0M (2.33%) leads to consistent performance improvements across most tasks, suggesting further gains are possible with more extensive fine-tuning.

Key Findings and Limitations

The paper establishes several findings:

LLMs can perform chemistry tasks effectively when provided with sufficient high-quality instruction tuning data. This refutes the notion that LLMs are fundamentally limited for chemistry.

The choice of base model matters considerably. Mistral 7B outperforms Llama 2, Code Llama, and Galactica under identical training settings, even though Galactica was pretrained on scientific text that includes chemistry data. This suggests that a strong general-purpose base model transfers well to chemistry.

Canonical SMILES outperform both non-canonical SMILES and SELFIES for LLM-based chemistry, a practical recommendation for future work.

The instruction tuning dataset has a large effect on the outcome. With the same base model (Mistral) and identical training settings, training on SMolInstruct vastly outperforms training on Mol-Instructions.

The authors acknowledge several limitations. The evaluation metrics for molecule captioning and generation (METEOR, FTS) measure text similarity rather than chemical correctness. The paper does not evaluate generalization to tasks beyond the 14 training tasks. LlaSMol models do not yet outperform task-specific SoTA models on most tasks, though the gap has narrowed substantially with only 0.58% of parameters fine-tuned.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Training | SMolInstruct | 3.29M samples | 14 tasks, canonical SMILES, publicly available on HuggingFace |
| Evaluation | SMolInstruct test split | 33,061 samples | Careful splitting to prevent leakage across tasks |
| NC tasks | PubChem | ~300K molecules | IUPAC names, SMILES, molecular formulas |
| PP tasks | MoleculeNet | ~78K samples | 6 datasets (ESOL, Lipo, BBBP, ClinTox, HIV, SIDER) |
| MC/MG tasks | ChEBI-20 + Mol-Instructions | ~60K samples | Quality-filtered molecular descriptions |
| FS/RS tasks | USPTO-full | ~1.9M samples | Cleaned, with corrected reactant/reagent labels |

Algorithms

- Fine-tuning: LoRA with rank=16, alpha=16, applied to all attention and FFN linear layers

- Optimizer: 8-bit AdamW, learning rate 1e-4, cosine scheduler

- Training: 3 epochs, max input length 512 tokens

- Inference: Beam search with beam size = `num_return_sequences` + 3
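The beam-size rule can be expressed as a small helper that builds decoding settings. This is a sketch, not the authors' inference script: `generation_kwargs` is a hypothetical function, the dictionary keys follow the argument names of HuggingFace's `generate` method, and the `max_new_tokens` default is an assumed value that the source does not give.

```python
def generation_kwargs(num_return_sequences, max_new_tokens=512):
    """Build beam-search settings with beam size = num_return_sequences + 3."""
    if num_return_sequences < 1:
        raise ValueError("num_return_sequences must be at least 1")
    return {
        "num_beams": num_return_sequences + 3,
        "num_return_sequences": num_return_sequences,
        "do_sample": False,  # plain beam search, no sampling
        "max_new_tokens": max_new_tokens,  # assumed value, not from the paper
    }


kwargs = generation_kwargs(num_return_sequences=5)
```

Keeping the beam a few candidates wider than the number of returned sequences guarantees that the search always has enough finished hypotheses to return.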

Models

| Model | Base | Parameters | LoRA Parameters |
|---|---|---|---|
| LlaSMolGalactica | Galactica 6.7B | 6.7B | 41.9M (0.58%) |
| LlaSMolLlama2 | Llama 2 7B | 7B | 41.9M (0.58%) |
| LlaSMolCodeLlama | Code Llama 7B | 7B | 41.9M (0.58%) |
| LlaSMolMistral | Mistral 7B | 7B | 41.9M (0.58%) |

All models and the dataset are publicly released on HuggingFace.

Evaluation

| Metric | Task(s) | Notes |
|---|---|---|
| Exact Match (EM) | NC, MG, FS, RS | Molecular identity comparison via RDKit |
| Fingerprint Tanimoto Similarity (FTS) | MG, FS, RS | Morgan fingerprints |
| METEOR | MC | Text similarity metric |
| RMSE | PP-ESOL, PP-Lipo | Regression tasks |
| Accuracy | PP-BBBP, PP-ClinTox, PP-HIV, PP-SIDER | Binary classification |
| Validity | NC-I2S, MG, FS, RS | Ratio of valid SMILES outputs |
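Three of these metrics have simple closed forms, sketched below in plain Python. The function names are introduced here for illustration. The Tanimoto function operates on sets of "on" bit indices as a stand-in for Morgan fingerprints, which would come from a cheminformatics toolkit in practice.

```python
import math


def rmse(predictions, targets):
    """Root mean squared error for regression tasks such as PP-ESOL."""
    squared = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return math.sqrt(sum(squared) / len(squared))


def accuracy(predictions, targets):
    """Fraction of matching labels for binary classification tasks."""
    return sum(p == t for p, t in zip(predictions, targets)) / len(targets)


def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two sets of 'on' fingerprint bits."""
    union = bits_a | bits_b
    return len(bits_a & bits_b) / len(union) if union else 1.0


esol_error = rmse([1.0, 3.0], [1.0, 1.0])
bbbp_accuracy = accuracy([1, 0, 1, 1], [1, 0, 0, 1])
similarity = tanimoto({1, 2, 3}, {2, 3, 4})
```

Exact match and validity are omitted here because both depend on parsing SMILES, which the summary attributes to RDKit.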

Hardware

The paper does not specify exact GPU hardware or training times. Training uses the HuggingFace Transformers library with LoRA, and inference is run on Ohio Supercomputer Center resources.

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| LlaSMol Code | Code | MIT | Training, evaluation, and inference scripts |
| SMolInstruct | Dataset | CC-BY-4.0 | 3.3M samples across 14 chemistry tasks |
| LlaSMol-Mistral-7B | Model | CC-BY-4.0 | Best-performing model (LoRA adapters) |
| LlaSMol-Galactica-6.7B | Model | CC-BY-4.0 | LoRA adapters for Galactica |
| LlaSMol-Llama2-7B | Model | CC-BY-4.0 | LoRA adapters for Llama 2 |
| LlaSMol-CodeLlama-7B | Model | CC-BY-4.0 | LoRA adapters for Code Llama |

Paper Information

Citation: Yu, B., Baker, F. N., Chen, Z., Ning, X., & Sun, H. (2024). LlaSMol: Advancing large language models for chemistry with a large-scale, comprehensive, high-quality instruction tuning dataset. arXiv preprint arXiv:2402.09391.

@article{yu2024llamsmol,

title={LlaSMol: Advancing Large Language Models for Chemistry with a Large-Scale, Comprehensive, High-Quality Instruction Tuning Dataset},

author={Yu, Botao and Baker, Frazier N. and Chen, Ziqi and Ning, Xia and Sun, Huan},

journal={arXiv preprint arXiv:2402.09391},

year={2024}

}