GPT-3 as a General-Purpose Chemistry Predictor

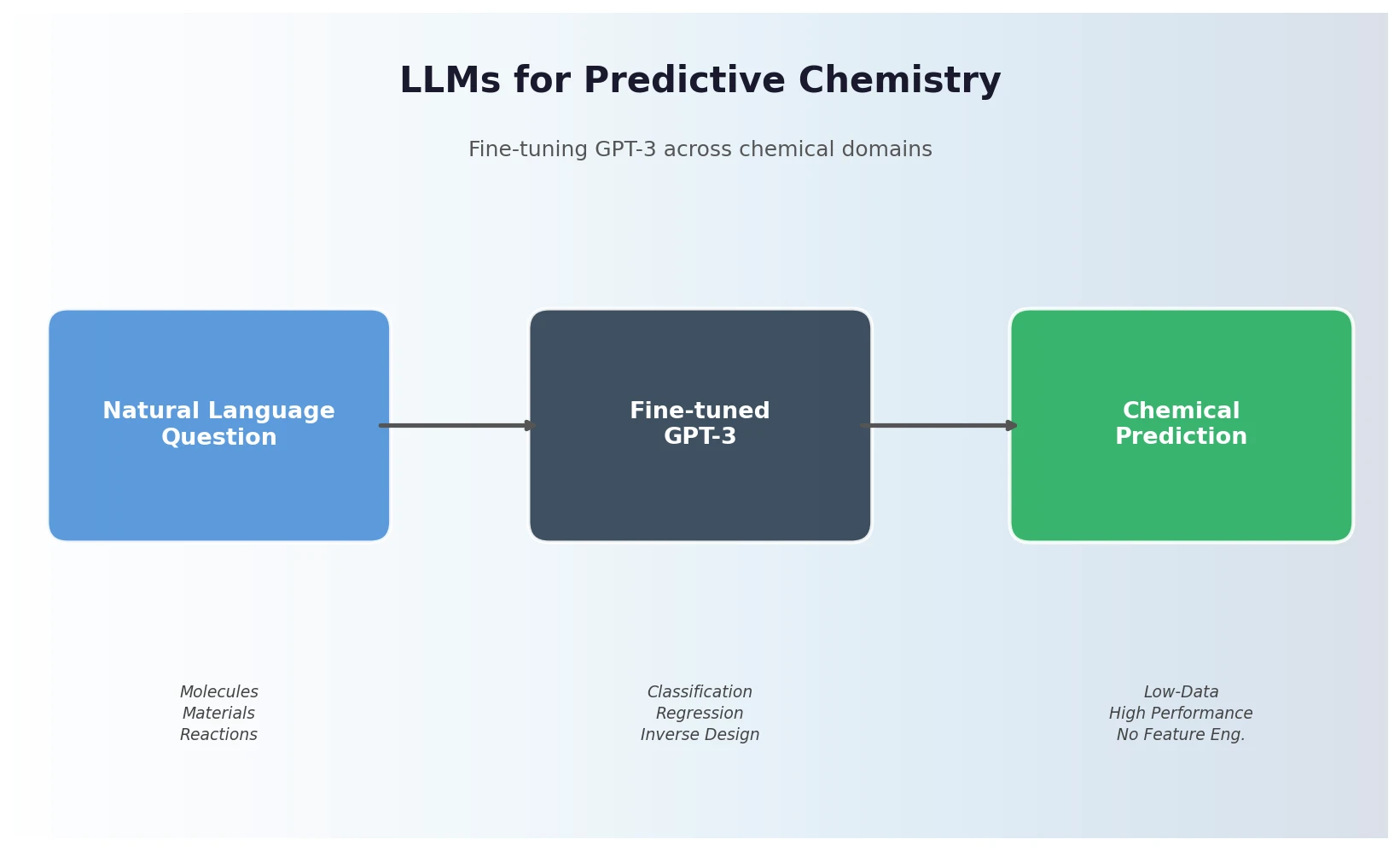

This is an empirical paper that systematically benchmarks fine-tuned GPT-3 against dedicated machine learning models across 15 chemistry and materials science prediction tasks. The primary contribution is demonstrating that a general-purpose large language model, with no chemistry-specific architecture or featurization, can match or outperform specialized ML approaches, particularly when training data is limited. The paper also demonstrates inverse molecular design through simple prompt inversion.

Why General-Purpose LLMs for Chemistry

Machine learning in chemistry typically requires domain-specific feature engineering: molecular fingerprints, graph neural network architectures, or hand-crafted descriptors tailored to each application. Developing these approaches demands specialized expertise and significant effort for each new problem. The small datasets common in experimental chemistry further complicate matters, as many sophisticated ML approaches require large training sets to learn meaningful representations.

Large language models like GPT-3, trained on vast internet text corpora, had shown surprising capability at tasks they were not explicitly trained for. The key question motivating this work was whether these general-purpose models could also answer scientific questions for which we lack answers, given that most chemistry problems can be represented in text form. For example: “If I change the metal in my metal-organic framework, will it be stable in water?”

Prior chemical language models (e.g., Transformer-CNN, Regression Transformer, SELFormer) were pre-trained on chemistry-specific corpora. In contrast, this work investigates models trained primarily on general internet text, examining whether the implicit chemical knowledge encoded during pre-training, combined with task-specific fine-tuning, can substitute for explicit chemical featurization.

Language-Interfaced Fine-Tuning for Chemistry

The core innovation is “language-interfaced fine-tuning” (LIFT): reformulating chemistry prediction tasks as natural language question-answering. Training examples take the form of question-completion pairs, where questions describe the chemical system in text and completions provide the target property. For example:

- Classification: “What is the phase of Co1Cu1Fe1Ni1V1?” with completion “0” (multi-phase)

- Regression: Property values are rounded to a fixed precision, converting continuous prediction into a text generation problem

- Inverse design: Questions and completions are simply swapped, asking “What is a molecule with property X?” and expecting a SMILES string as completion
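
The three formulations above can be sketched as a small record builder. This is a minimal illustration, not the authors' code: the function names, the inverse-design question template, and the JSONL layout with `prompt` and `completion` keys are assumptions made here, and the wavelength value in the usage example is invented purely for illustration. Only the HEA question and its completion "0" come from the text above.

```python
import json

def forward_record(representation, label, prop="phase"):
    """Property prediction: the question names the system, the completion is the label."""
    # Template mirrors the HEA example in the text; other templates are assumptions.
    return {"prompt": f"What is the {prop} of {representation}?",
            "completion": str(label)}

def inverse_record(representation, label, prop):
    """Inverse design: question and completion swap roles (hypothetical template)."""
    return {"prompt": f"What is a molecule with {prop} {label}?",
            "completion": representation}

def to_jsonl(records):
    """Serialize records one JSON object per line (assumed training-file layout)."""
    return "\n".join(json.dumps(r) for r in records)

if __name__ == "__main__":
    hea = forward_record("Co1Cu1Fe1Ni1V1", 0)
    # Azobenzene SMILES with a made-up target value, for illustration only.
    inv = inverse_record("c1ccc(cc1)N=Nc1ccccc1", "350 nm", "transition wavelength")
    print(to_jsonl([hea, inv]))
```

Keeping the forward and inverse builders symmetric makes the point of the method visible: the same labeled data yields both a predictor and a generator, and only the direction of the text pair changes.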

The fine-tuning uses OpenAI’s API with the smallest ada variant of GPT-3, with uniform hyperparameters across all tasks (8 epochs, learning rate multiplier of 0.02). No optimization of prompt structure, tokenization, or training schedule was performed, making the approach deliberately simple.

For regression, since language models generate discrete tokens rather than continuous values, the authors round target values to a fixed precision (e.g., 1% for Henry coefficients). This converts regression into a form of classification over numeric strings, with the assumption that GPT-3 can interpolate between these discretized values.
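
A minimal sketch of this discretization step follows. It assumes rounding to a fixed number of decimal places, which is only one possible reading of "fixed precision" (the paper's per-task precision choices are not reproduced here), and the helper names are hypothetical.

```python
def encode_target(value, decimals=2):
    """Round a continuous target and render it as a fixed-precision string."""
    return f"{value:.{decimals}f}"

def decode_completion(text):
    """Parse a generated completion back into a float; return None if it is not numeric."""
    try:
        return float(text.strip())
    except ValueError:
        return None

def max_quantization_error(decimals=2):
    """Worst-case rounding error introduced by encode_target."""
    return 0.5 * 10 ** (-decimals)

if __name__ == "__main__":
    encoded = encode_target(-3.14159)
    print(encoded, decode_completion(encoded), max_quantization_error())
```

The decoder returns `None` rather than raising because a generative model is free to emit non-numeric text, and such completions have to be counted as failures instead of crashing the evaluation.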

The approach also extends to open-source models. The authors demonstrate that GPT-J-6B can be fine-tuned using parameter-efficient techniques (LoRA, 8-bit quantization) on consumer hardware, and provide the chemlift Python package for this purpose.

Benchmarks Across Molecules, Materials, and Reactions

Datasets and Tasks

The evaluation spans three chemical domains with 15 total benchmarks:

Molecules:

- Photoswitch transition wavelength prediction (2022)

- Free energy of solvation (FreeSolv, 2014)

- Aqueous solubility (ESOL, 2004)

- Lipophilicity (ChEMBL, 2012)

- HOMO-LUMO gap (QMugs, 2022)

- Organic photovoltaic power conversion efficiency (2018)

Materials:

- Coarse-grained surfactant adsorption free energy (2021)

- CO2 and CH4 Henry coefficients in MOFs (2020)

- MOF heat capacity (2022)

- High-entropy alloy phase prediction (2020)

- Bulk metallic glass formation ability (2006)

- Metallic behavior prediction (2018)

Reactions:

- C-N cross-coupling yield (Buchwald-Hartwig, 2018)

- C-C cross-coupling yield (Suzuki, 2022)

Baselines

The baselines include both traditional ML and deep learning approaches:

- Non-DL: XGBoost with molecular descriptors/fragprints, Gaussian Process Regression (GPR), random forests, n-Gram models, Automatminer, differential reaction fingerprints (DRFP)

- Deep learning: MolCLR, ModNet, CrabNet, TabPFN

Data Efficiency Analysis

To compare data efficiency, the authors fit power law curves to the learning curves of all models and report a “data efficiency factor”: the ratio between the number of training points the best baseline needs and the number GPT-3 needs to reach the same performance in the low-data regime.

| Domain | Benchmark | Data efficiency vs. best non-DL baseline | Data efficiency vs. best DL baseline |
|---|---|---|---|
| Molecules | Photoswitch wavelength | 1.1x (n-Gram) | 1.2x (TabPFN) |
| Molecules | Solvation free energy | 3.1x (GPR) | 1.3x (TabPFN) |
| Molecules | Solubility | 1.0x (XGBoost) | 0.002x (MolCLR) |
| Molecules | Lipophilicity | 3.43x (GPR) | 0.97x (TabPFN) |
| Molecules | HOMO-LUMO gap | 4.3x (XGBoost) | 0.62x (TabPFN) |
| Materials | HEA phase | 24x (RF) | 9.0x (CrabNet) |
| Materials | CO2 Henry coeff. | 0.40x (XGBoost) | 12x (TabPFN) |
| Reactions | C-N cross-coupling | 2.9x (DRFP) | - |

Values >1 indicate GPT-3 is more data-efficient. For the HEA phase prediction task, GPT-3 achieved comparable accuracy to a random forest model trained on 1,126 data points using only about 50 training examples.
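
One way to compute such a factor is sketched below. It assumes each learning curve follows a plain power law, error(n) = a * n^(-b), fitted by least squares in log-log space; the authors' exact parametric form and fitting procedure may differ, and all function names are hypothetical. The numbers in the usage example are synthetic, not taken from the paper.

```python
import math

def fit_power_law(sizes, errors):
    """Least-squares fit of error = a * n**(-b) in log-log space; returns (a, b)."""
    xs = [math.log(n) for n in sizes]
    ys = [math.log(e) for e in errors]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope

def size_needed(a, b, target_error):
    """Training-set size at which the fitted curve reaches target_error."""
    return (a / target_error) ** (1.0 / b)

def data_efficiency_factor(model_fit, baseline_fit, n_model):
    """Baseline points needed to match the model's error at n_model, divided by n_model."""
    a_model, b_model = model_fit
    target = a_model * n_model ** (-b_model)
    return size_needed(*baseline_fit, target) / n_model

if __name__ == "__main__":
    sizes = [10, 100, 1000]
    model = fit_power_law(sizes, [n ** -0.5 for n in sizes])         # synthetic curve
    baseline = fit_power_law(sizes, [2 * n ** -0.5 for n in sizes])  # synthetic curve
    print(data_efficiency_factor(model, baseline, n_model=50))
```

With these synthetic curves the baseline's error is twice the model's at every size and both decay as n^(-0.5), so the baseline needs four times the data and the factor is 4. A factor below 1 would mean the baseline is the more data-efficient model.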

Representation Sensitivity

An important finding is that GPT-3 performs well regardless of molecular representation format. The authors tested IUPAC names, SMILES, and SELFIES, finding good results across all representations. IUPAC names often produced the best performance, which is notable because it makes the approach accessible to non-specialists who can simply use chemical names rather than learning specialized encodings.

Inverse Design

For inverse design, the authors fine-tuned GPT-3 with reversed question-completion pairs. On photoswitches:

- Generated molecules include both training set members and novel structures (some not in PubChem)

- Transition wavelengths matched target values within about 10% mean absolute percentage error (validated using the GPR model from Griffiths et al.)

- A temperature parameter controls the diversity-validity tradeoff: low temperatures produce training set copies, high temperatures produce diverse but potentially invalid structures

- Across all temperatures, generated molecules showed low synthetic accessibility (SA) scores, which indicates that they should be comparatively easy to synthesize
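
The diversity-validity tradeoff follows from how a sampling temperature reshapes next-token probabilities. The sketch below shows the standard temperature-scaled softmax on made-up logits; it illustrates the general mechanism and is not a description of the OpenAI API's internals.

```python
import math

def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by a positive temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [logit / temperature for logit in logits]
    peak = max(scaled)  # subtract the maximum for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

if __name__ == "__main__":
    logits = [2.0, 1.0, 0.0]  # illustrative values only
    for t in (0.1, 1.0, 2.0):
        print(t, [round(p, 3) for p in softmax_with_temperature(logits, t)])
```

At low temperature nearly all probability mass sits on the most likely token, so sampling reproduces memorized training strings. At high temperature the distribution flattens, which raises diversity but also the chance of emitting a token that breaks SMILES syntax.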

The authors also demonstrated iterative inverse design for HOMO-LUMO gap optimization: starting from QMugs data, they iteratively fine-tuned GPT-3 to generate molecules with progressively larger bandgaps (>5 eV), successfully shifting the distribution over four generations. This worked even when extrapolating beyond the training distribution (e.g., training only on molecules with gaps <3.5 eV, then generating molecules with gaps >4.0 eV).

Coarse-Grained Polymer Design

A striking test involved coarse-grained dispersant polymers with four monomer types and chain lengths of 16-48 units. GPT-3 had no prior knowledge of these abstract representations, yet it outperformed dedicated models for adsorption free energy prediction and successfully performed inverse design, generating monomer sequences with a mean percentage error of about 22% for the desired property.

Key Findings and Limitations

Key Findings

Low-data advantage: Fine-tuned GPT-3 consistently shows the largest advantages over conventional ML in low-data regimes (tens to hundreds of data points), which is precisely where experimental chemistry datasets typically fall.

Representation agnostic: The model works with IUPAC names, SMILES, SELFIES, and even invented abstract representations, removing the need for chemistry-specific tokenization.

No feature engineering: The approach requires no domain-specific descriptors, fingerprints, or architectural modifications, making it accessible to researchers without ML expertise.

Bidirectional design: Inverse design is achieved by simply reversing the question format, with no architectural changes or separate generative model needed.

Extrapolation capability: The model can generate molecules with properties outside the training distribution, as demonstrated by the HOMO-LUMO gap extrapolation experiments.

Limitations

- In the high-data regime, conventional ML models with chemistry-specific features often catch up to or surpass GPT-3, as the inductive biases encoded in GPT-3 become less necessary with sufficient data.

- Regression is inherently limited by the discretization of continuous values into tokens. This requires more data than classification and introduces quantization error.

- The approach relies on the OpenAI API, introducing cost and reproducibility concerns (model versions may change). The authors partially address this by providing open-source alternatives via chemlift.

- The authors acknowledge that identified correlations may not represent causal relationships. GPT-3 finding predictive patterns does not guarantee that the patterns are chemically meaningful.

- No optimization of prompts, tokenization, or hyperparameters was performed, suggesting room for improvement but also making it difficult to assess the ceiling of this approach.

Reproducibility Details

Data

All datasets are publicly available and were obtained from published benchmarks.

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Classification | HEA phase (Pei et al.) | 1,252 alloys | Single-phase vs. multi-phase |
| Regression | FreeSolv | 643 molecules | Hydration free energies |
| Regression | ESOL | 1,128 molecules | Aqueous solubility |
| Regression | QMugs | 665,000 molecules | HOMO-LUMO gaps via GFN2-xTB |
| Classification | Lipophilicity (ChEMBL) | Varies | LogP classification |
| Classification | OPV PCE | Varies | Organic photovoltaic efficiency |
| Regression | MOF Henry coefficients | Varies | CO2/CH4 adsorption |
| Inverse design | Photoswitches (Griffiths et al.) | 392 molecules | Transition wavelengths |

Algorithms

- Fine-tuning via OpenAI API: 8 epochs, learning rate multiplier 0.02

- GPT-3 ada variant (smallest model) used for all main results

- In-context learning also tested with larger GPT-3 models and GPT-4

- Open-source alternative: GPT-J-6B with LoRA + 8-bit quantization

- Learning curves fit to power laws $-a \exp(-bx + c)$ for data efficiency comparison

- Validity checked using RDKit via GuacaMol’s is\_valid method

Models

- GPT-3 ada (OpenAI API, proprietary)

- GPT-J-6B (open-source, fine-tunable on consumer hardware)

Evaluation

| Metric | Task | Notes |
|---|---|---|
| Accuracy | HEA phase | Classification |
| $F_1$ macro | All classification tasks | Class-balanced |
| Cohen’s $\kappa$ | Classification | Used for learning curve thresholds |
| MAE / MAPE | Regression, inverse design | Property prediction accuracy |
| Validity rate | Inverse design | Fraction of parseable SMILES |
| Frechet ChemNet distance | Inverse design | Distribution similarity |
| SA score | Inverse design | Synthetic accessibility |
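
For reference, three of the metrics in this table can be written in a few lines of plain Python. This is a sketch using the textbook definitions, not the authors' evaluation code; the function names are chosen here and the labels in the usage example are made up.

```python
from collections import Counter

def macro_f1(y_true, y_pred):
    """Unweighted mean of per-class F1 scores."""
    scores = []
    for label in sorted(set(y_true) | set(y_pred)):
        tp = sum(t == label and p == label for t, p in zip(y_true, y_pred))
        fp = sum(t != label and p == label for t, p in zip(y_true, y_pred))
        fn = sum(t == label and p != label for t, p in zip(y_true, y_pred))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)
    return sum(scores) / len(scores)

def cohen_kappa(y_true, y_pred):
    """Agreement corrected for chance; undefined when chance agreement equals 1."""
    n = len(y_true)
    observed = sum(t == p for t, p in zip(y_true, y_pred)) / n
    true_counts, pred_counts = Counter(y_true), Counter(y_pred)
    expected = sum(true_counts[c] * pred_counts[c] for c in true_counts) / n ** 2
    return (observed - expected) / (1 - expected)

def mape(y_true, y_pred):
    """Mean absolute percentage error in percent; targets must be nonzero."""
    return 100 * sum(abs((t - p) / t) for t, p in zip(y_true, y_pred)) / len(y_true)

if __name__ == "__main__":
    truth, guess = [0, 0, 1, 1], [0, 1, 1, 1]
    print(macro_f1(truth, guess), cohen_kappa(truth, guess))
    print(mape([100.0, 200.0], [110.0, 180.0]))
```

Cohen's kappa is a useful threshold for learning curves because it is zero for a classifier that only matches the class frequencies, which plain accuracy does not reveal on imbalanced data.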

Hardware

- Fine-tuning via OpenAI API (cloud compute, not user-specified)

- Open-source experiments: consumer GPU hardware with 8-bit quantization

- Quantum chemistry validation: GFN2-xTB for HOMO-LUMO calculations

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| gptchem | Code | MIT | All experiments with OpenAI API |
| chemlift | Code | MIT | Open-source LLM fine-tuning support |
| Zenodo (gptchem) | Code | MIT | Archived release |
| Zenodo (chemlift) | Code | MIT | Archived release |

Paper Information

Citation: Jablonka, K. M., Schwaller, P., Ortega-Guerrero, A., & Smit, B. (2024). Leveraging large language models for predictive chemistry. Nature Machine Intelligence, 6(2), 161-169. https://doi.org/10.1038/s42256-023-00788-1

@article{jablonka2024leveraging,
  title={Leveraging large language models for predictive chemistry},
  author={Jablonka, Kevin Maik and Schwaller, Philippe and Ortega-Guerrero, Andres and Smit, Berend},
  journal={Nature Machine Intelligence},
  volume={6},
  number={2},
  pages={161--169},
  year={2024},
  publisher={Nature Publishing Group},
  doi={10.1038/s42256-023-00788-1}
}