An Interactive LLM for Molecule Optimization

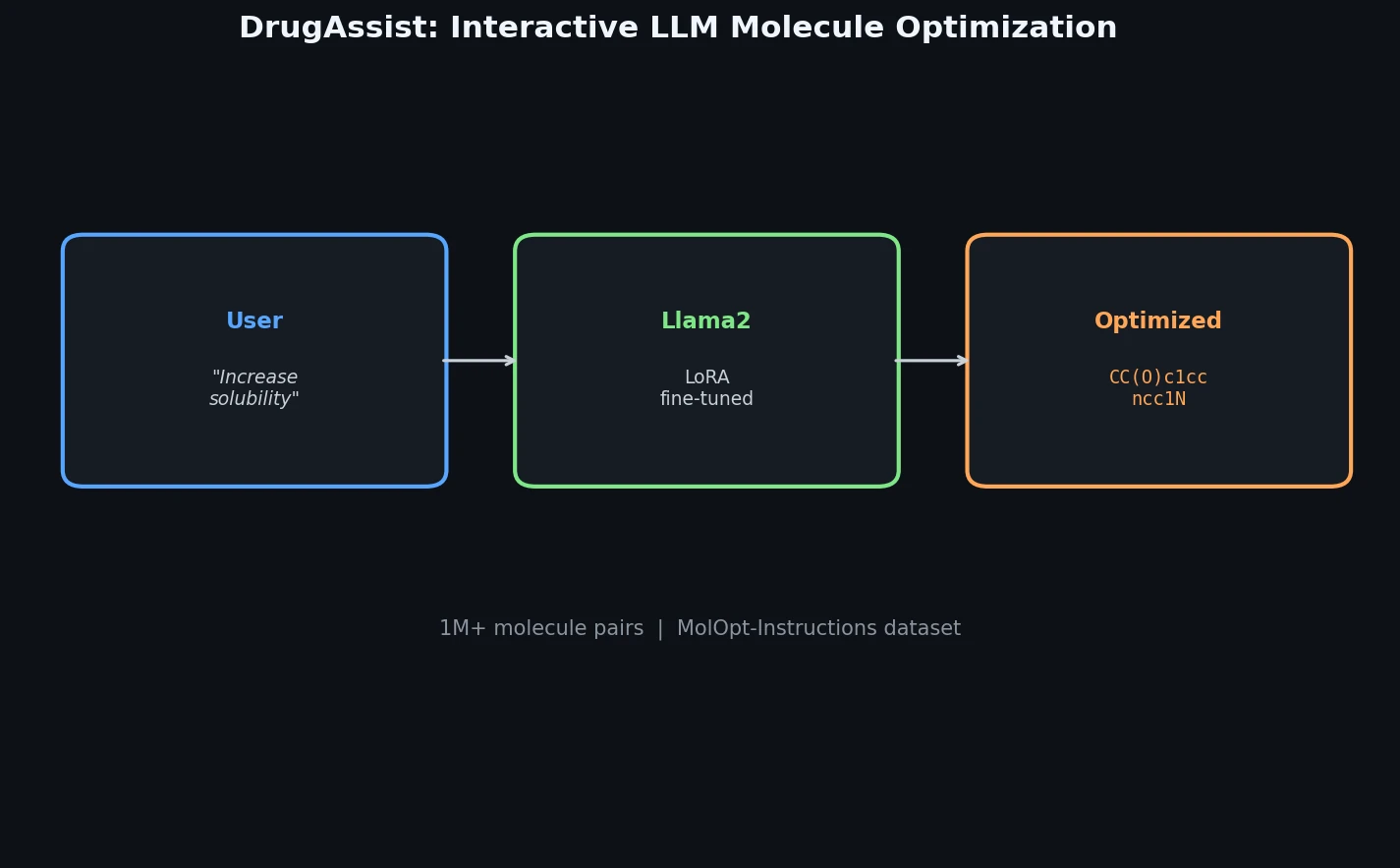

DrugAssist is a Method paper that proposes an interactive molecule optimization model built by fine-tuning Llama2-7B-Chat with LoRA on a newly constructed instruction dataset. The primary contribution is twofold: (1) the MolOpt-Instructions dataset containing over one million molecule pairs with six molecular properties and three optimization task categories, and (2) a dialogue-based molecule optimization system that allows domain experts to iteratively refine molecular modifications through multi-turn natural language conversations.

Why Interactive Molecule Optimization Matters

Molecule optimization is a core step in the drug discovery pipeline, where lead compounds must be modified to improve specific pharmacological properties while maintaining structural similarity. Existing approaches fall into sequence-based methods (treating SMILES optimization as machine translation) and graph-based methods (graph-to-graph translation), but they share a critical limitation: they are non-interactive. These models learn patterns from chemical structure data without incorporating expert feedback.

The drug discovery process is inherently iterative and requires integrating domain expertise. Medicinal chemists typically refine candidates through repeated cycles of suggestion, evaluation, and adjustment. Prior LLM-based approaches like ChatDrug relied on prompt engineering with general-purpose models (GPT-3.5-turbo) rather than fine-tuning, limiting their optimization accuracy. Additionally, most existing molecule optimization benchmarks focus on single-property optimization with vague objectives (e.g., “maximize QED”), while real-world drug design requires optimizing property values within specific ranges across multiple properties simultaneously.

Instruction-Based Fine-Tuning with MolOpt-Instructions

The core innovation has two components: the MolOpt-Instructions dataset construction pipeline and the multi-task instruction tuning strategy.

Dataset Construction

MolOpt-Instructions is built from one million molecules randomly sampled from the ZINC database. The construction workflow uses mmpdb (an open-source Matched Molecular Pair platform) to generate structurally similar molecule pairs through Matched Molecular Pair Analysis (MMPA). Pairs are kept only if they satisfy two criteria: Tanimoto similarity greater than 0.65 and logP difference greater than 2.5. Six properties are annotated for each molecule: Solubility, BBBP, and hERG inhibition are predicted with Tencent’s iDrug platform, while QED, hydrogen bond donor count, and hydrogen bond acceptor count are computed with RDKit. The final dataset contains 1,029,949 unique pairs covering 1,595,839 unique molecules, with mean similarity of 0.69 and mean logP difference of 2.82.
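The two pair-level criteria can be sketched as a small predicate. This is an illustrative sketch, not the authors' pipeline: it assumes fingerprints are already available as sets of on-bit indices and logP values are precomputed, and it assumes the logP criterion is applied to the absolute difference. The names `tanimoto` and `keep_pair` are hypothetical.

```python
from typing import FrozenSet


def tanimoto(fp_a: FrozenSet[int], fp_b: FrozenSet[int]) -> float:
    """Tanimoto coefficient |A & B| / |A | B| on sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def keep_pair(
    fp_a: FrozenSet[int],
    fp_b: FrozenSet[int],
    logp_a: float,
    logp_b: float,
    min_similarity: float = 0.65,  # threshold stated in the text
    min_logp_diff: float = 2.5,    # threshold stated in the text
) -> bool:
    """Keep a pair only if it is similar enough AND differs enough in logP.

    Assumption: the logP criterion uses the absolute difference.
    """
    similar = tanimoto(fp_a, fp_b) > min_similarity
    different = abs(logp_a - logp_b) > min_logp_diff
    return similar and different


# Toy fingerprints: 9 shared bits out of 11 in the union.
fp_x = frozenset(range(10))
fp_y = frozenset(range(9)) | {10}
kept = keep_pair(fp_x, fp_y, logp_a=1.0, logp_b=4.0)
```

The two conditions pull in opposite directions: the similarity floor keeps the edit local, while the logP floor ensures the edit changes a property meaningfully.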

Three categories of optimization tasks are defined:

- Loose: Increase or decrease a given property value (no threshold)

- Strict: Increase or decrease by at least a specified threshold

- Range: Optimize the property value to fall within a given interval
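The three categories differ only in how success is judged, which a short predicate makes concrete. This is a sketch under assumptions: the function name `meets_objective` and its parameters are hypothetical, and the range interval is assumed to be inclusive at both ends.

```python
from typing import Optional, Tuple


def meets_objective(
    category: str,
    source_value: float,
    new_value: float,
    direction: str = "increase",
    threshold: Optional[float] = None,
    interval: Optional[Tuple[float, float]] = None,
) -> bool:
    """Check one property against a loose, strict, or range objective."""
    if category == "range":
        if interval is None:
            raise ValueError("range tasks need an interval")
        low, high = interval
        return low <= new_value <= high  # assumed inclusive bounds

    if direction not in ("increase", "decrease"):
        raise ValueError(f"unknown direction: {direction}")
    # Signed change in the requested direction.
    delta = new_value - source_value
    if direction == "decrease":
        delta = -delta

    if category == "loose":
        return delta > 0
    if category == "strict":
        if threshold is None:
            raise ValueError("strict tasks need a threshold")
        return delta >= threshold
    raise ValueError(f"unknown category: {category}")
```

For example, a change from 0.50 to 0.55 satisfies a loose increase but not a strict increase with threshold 0.1.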

Instruction templates are generated with ChatGPT assistance and manually refined. To ensure balance, source and target molecules are swapped for some pairs to maintain a roughly 1:1 ratio of property increases to decreases.
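One way to reach a roughly 1:1 ratio by swapping is a greedy pass that flips a pair whenever keeping it would widen the imbalance. The source does not describe the actual procedure, so the sketch below is only one possible approach; `balance_directions` and the `Pair` layout are hypothetical.

```python
from typing import List, Tuple

# (source SMILES, target SMILES, target value minus source value)
Pair = Tuple[str, str, float]


def balance_directions(pairs: List[Pair]) -> List[Pair]:
    """Swap source/target greedily so increases and decreases stay balanced."""
    balanced: List[Pair] = []
    increases = decreases = 0
    for source, target, delta in pairs:
        if delta == 0:
            continue  # no direction to balance
        wants_increase = increases <= decreases
        if (delta > 0) != wants_increase:
            # Swapping the roles of the two molecules negates the change.
            source, target, delta = target, source, -delta
        balanced.append((source, target, delta))
        if delta > 0:
            increases += 1
        else:
            decreases += 1
    return balanced


toy_pairs = [("A", "B", 1.0), ("C", "D", 2.0), ("E", "F", 0.5), ("G", "H", 3.0)]
toy_balanced = balance_directions(toy_pairs)
```

This greedy rule keeps the two counts within one of each other at every step, at the cost of swapping more pairs than strictly necessary.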

Murcko scaffold analysis confirms chemical diversity: the average molecules per scaffold is 2.95, and over 93.7% of scaffolds contain no more than five molecules.
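Both diversity statistics follow from a count of molecules per scaffold. The sketch below assumes one scaffold string per molecule has already been computed (for instance with RDKit's `MurckoScaffold` module); the function name `scaffold_stats` is hypothetical.

```python
from collections import Counter
from typing import Iterable, Tuple


def scaffold_stats(scaffolds: Iterable[str], max_size: int = 5) -> Tuple[float, float]:
    """Return (mean molecules per scaffold, fraction of scaffolds with <= max_size molecules).

    `scaffolds` holds one scaffold identifier per molecule.
    """
    counts = Counter(scaffolds)
    if not counts:
        raise ValueError("no scaffolds given")
    n_scaffolds = len(counts)
    mean_size = sum(counts.values()) / n_scaffolds
    small_fraction = sum(1 for n in counts.values() if n <= max_size) / n_scaffolds
    return mean_size, small_fraction


# Toy input: three scaffolds with 6, 2, and 1 molecules.
toy_scaffolds = ["s1"] * 6 + ["s2"] * 2 + ["s3"]
toy_mean, toy_small = scaffold_stats(toy_scaffolds)
```

A low mean together with a high small-scaffold fraction indicates that no single chemotype dominates the dataset.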

Multi-Task Instruction Tuning

DrugAssist is obtained by fine-tuning Llama2-7B-Chat with LoRA (rank 64, alpha 128). To prevent catastrophic forgetting of general language capabilities, the training data combines MolOpt-Instructions with the Stanford Alpaca dataset (52k instruction-following examples, replicated 5x to balance the mixture). The training objective minimizes the negative log-likelihood over the response tokens:

$$L(R; \boldsymbol{\theta}) = -\sum_{u_i \in R} \log \Phi(u_i \mid u_{<i}, I)$$

where $I$ is the instruction, $R$ is the response with tokens $u_i$, and $\Phi$ is the model’s conditional token probability under parameters $\boldsymbol{\theta}$.
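The objective sums over response tokens only, so instruction tokens contribute nothing to the loss. A minimal pure-Python sketch of that masking, assuming the per-token probabilities assigned by the model are already available (the function name `response_nll` is hypothetical):

```python
import math
from typing import Sequence


def response_nll(token_probs: Sequence[float], is_response: Sequence[bool]) -> float:
    """Sum of -log p(token) over positions flagged as response tokens."""
    if len(token_probs) != len(is_response):
        raise ValueError("probabilities and mask must have the same length")
    return -sum(math.log(p) for p, keep in zip(token_probs, is_response) if keep)


# Two instruction tokens (masked out) followed by two response tokens.
toy_probs = [0.9, 0.8, 0.5, 0.25]
toy_mask = [False, False, True, True]
toy_loss = response_nll(toy_probs, toy_mask)
```

Here the loss is $-(\log 0.5 + \log 0.25) = \log 8$, regardless of the probabilities of the instruction tokens.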

Training runs for 10 epochs with batch size 512, using AdamW ($\beta = (0.9, 0.999)$), learning rate 1e-4, 3% warm-up steps with cosine decay, and no weight decay. The data is split 90/5/5 for train/validation/test.
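The learning-rate schedule can be sketched as a function of the step index. The text gives only the peak rate, the 3% warm-up, and cosine decay; the linear warm-up shape and the decay to zero are assumptions, and `learning_rate` is a hypothetical name.

```python
import math


def learning_rate(
    step: int,
    total_steps: int,
    peak_lr: float = 1e-4,          # stated in the text
    warmup_fraction: float = 0.03,  # stated in the text
    min_lr: float = 0.0,            # assumption: decay to zero
) -> float:
    """Linear warm-up to peak_lr, then cosine decay to min_lr."""
    warmup_steps = max(1, round(total_steps * warmup_fraction))
    if step < warmup_steps:
        # Assumption: warm-up is linear in the step index.
        return peak_lr * (step + 1) / warmup_steps
    decay_steps = max(1, total_steps - warmup_steps)
    progress = min((step - warmup_steps) / decay_steps, 1.0)
    return min_lr + 0.5 * (peak_lr - min_lr) * (1.0 + math.cos(math.pi * progress))
```

With 1,000 total steps, the rate climbs over the first 30 steps, peaks at 1e-4, and falls to half of that at the midpoint of the decay phase.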

Experimental Setup and Multi-Property Optimization Results

Comparison with Traditional Approaches

DrugAssist is compared against Mol-Seq2Seq and Mol-Transformer (He et al., 2021) on simultaneous Solubility and BBBP optimization with range constraints. The evaluation prompt asks the model to generate an optimized molecule with solubility within a given range and BBBP category changed from one level to another.

| Model | Solubility | BBBP | Both | Valid Rate | Similarity |
|---|---|---|---|---|---|
| Mol-Seq2Seq | 0.46 | 0.55 | 0.35 | 0.76 | 0.61 |
| Mol-Transformer | 0.70 | 0.78 | 0.59 | 0.96 | 0.70 |
| DrugAssist | 0.74 | 0.80 | 0.62 | 0.98 | 0.69 |

DrugAssist achieves the highest success rate on each property individually (0.74 for Solubility, 0.80 for BBBP) and on both jointly (0.62). It also has the highest valid rate (0.98), with structural similarity (0.69) comparable to Mol-Transformer (0.70).

Comparison with LLMs

DrugAssist is compared against Llama2-7B-Chat, GPT-3.5-turbo (via ChatDrug), and BioMedGPT-LM-7B on 16 tasks covering all three optimization categories. These comparisons use multi-turn dialogues following the ChatDrug protocol: if the model’s output fails to meet requirements, a database-retrieved molecule meeting the criteria and similar to the model’s output is provided as a hint for iterative refinement.
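The hint-based protocol amounts to a generate, check, retrieve loop. The sketch below shows only the control flow; the three callables stand in for the LLM, the property check, and the database lookup, and all names as well as the `max_turns` default are hypothetical rather than taken from ChatDrug or DrugAssist.

```python
from typing import Callable, List, Optional


def optimize_with_hints(
    source: str,
    generate: Callable[[str, List[str]], str],
    meets_requirements: Callable[[str, str], bool],
    retrieve_hint: Callable[[str], Optional[str]],
    max_turns: int = 3,  # assumption: the turn budget is not given in the text
) -> Optional[str]:
    """Return the first candidate that meets the requirements, else None."""
    hints: List[str] = []
    for _ in range(max_turns):
        candidate = generate(source, hints)
        if meets_requirements(source, candidate):
            return candidate
        # Look up a compliant molecule similar to the failed candidate.
        hint = retrieve_hint(candidate)
        if hint is None:
            break
        hints.append(hint)
    return None


# Stub components: the fake model only succeeds once it has seen a hint.
def fake_generate(source: str, hints: List[str]) -> str:
    return "GOOD" if hints else "BAD"


def fake_check(source: str, candidate: str) -> bool:
    return candidate == "GOOD"


def fake_retrieve(candidate: str) -> Optional[str]:
    return "HINT"


result = optimize_with_hints("SRC", fake_generate, fake_check, fake_retrieve)
```

Because the hint is retrieved relative to the model's own failed output, each turn nudges the model from where it already is rather than restarting from the source molecule.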

Selected results on single-property tasks (each cell gives the success rate under loose / strict criteria):

| Task | Llama2-7B-Chat | GPT-3.5-turbo | BioMedGPT-LM | DrugAssist |
|---|---|---|---|---|
| QED+ | 0.17 / 0.16 | 0.15 / 0.15 | 0.15 / 0.09 | 0.76 / 0.63 |
| Acceptor+ | 0.08 / 0.08 | 0.04 / 0.06 | 0.18 / 0.13 | 0.71 / 0.67 |
| Donor+ | 0.15 / 0.08 | 0.10 / 0.04 | 0.17 / 0.09 | 0.72 / 0.76 |
| Solubility+ | 0.36 / 0.20 | 0.16 / 0.05 | 0.18 / 0.09 | 0.80 / 0.41 |
| BBBP+ | 0.19 / 0.14 | 0.10 / 0.10 | 0.16 / 0.07 | 0.82 / 0.61 |
| hERG- | 0.39 / 0.31 | 0.13 / 0.15 | 0.13 / 0.12 | 0.71 / 0.67 |

Multi-property tasks:

| Task | Llama2-7B-Chat | GPT-3.5-turbo | BioMedGPT-LM | DrugAssist |
|---|---|---|---|---|
| Sol+ & Acc+ | 0.15 / 0.04 | 0.09 / 0.02 | 0.10 / 0.07 | 0.50 / 0.27 |
| QED+ & BBBP+ | 0.14 / 0.09 | 0.09 / 0.06 | 0.16 / 0.11 | 0.65 / 0.41 |

DrugAssist outperforms all baselines across every task. BioMedGPT-LM frequently misunderstands the task, generating guidance text rather than molecules. GPT-3.5-turbo achieves high validity but often outputs the input molecule unchanged.

Transferability, Iterative Refinement, and Limitations

Key Findings

Zero-shot transferability: Although DrugAssist is trained only on single-property optimization data, it handles multi-property optimization requests at inference time. In a case study, the model increased both BBBP and QED by at least 0.1 while maintaining structural similarity, without any multi-property training examples.

Few-shot generalization: DrugAssist optimizes properties not seen during training (e.g., logP) when provided with a few in-context examples of successful optimizations, a capability that traditional sequence-based or graph-based models cannot achieve without retraining.
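Few-shot use amounts to placing a handful of solved examples ahead of the query. The sketch below builds such a prompt; the wording of the template is invented for illustration and is not the prompt used in the paper, and `build_few_shot_prompt` is a hypothetical name.

```python
from typing import List, Tuple


def build_few_shot_prompt(
    property_name: str,
    direction: str,
    examples: List[Tuple[str, str]],
    query_smiles: str,
) -> str:
    """Assemble an in-context prompt from (input, optimized) SMILES pairs."""
    lines = [f"Here are examples of molecules modified to {direction} {property_name}:"]
    for source, optimized in examples:
        lines.append(f"Input: {source} -> Output: {optimized}")
    lines.append(
        f"Now modify this molecule to {direction} {property_name} "
        f"while keeping it structurally similar: {query_smiles}"
    )
    return "\n".join(lines)


# Illustrative pair only: lengthening an alkyl chain generally raises logP.
prompt = build_few_shot_prompt("logP", "increase", [("CCO", "CCCCO")], "CCN")
```

No weights change in this setting; the examples only condition the model's next response.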

Iterative optimization: When an initial optimization fails to meet requirements, DrugAssist can incorporate feedback (a database-retrieved hint molecule) and modify different functional groups in a second attempt to produce a compliant molecule.

Limitations

The authors acknowledge that DrugAssist has a lower success rate on the hardest setting reported here, solubility optimization under strict criteria (0.41 success rate, versus 0.80 under loose criteria). Solubility, BBBP, and hERG inhibition values come from iDrug predictions, so the measured optimization quality is bounded by the accuracy of those predictors. The LLM comparisons use only 500 test molecules, a relatively small evaluation set, and the paper reports no statistical significance tests or confidence intervals for any results.

Future Directions

The authors plan to improve multimodal data handling to reduce hallucination problems and to further enhance DrugAssist’s interactive capabilities for better understanding of user needs and feedback.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Training | MolOpt-Instructions | 1,029,949 molecule pairs | Sourced from ZINC via mmpdb; 6 properties |
| Training (auxiliary) | Stanford Alpaca | 52k instructions (5x replicated) | Mitigates catastrophic forgetting |
| Evaluation (traditional) | From He et al. (2021) | Not specified | Multi-property optimization test |
| Evaluation (LLM) | ZINC subset | 500 molecules | Randomly selected |

Algorithms

- Base model: Llama2-7B-Chat

- Fine-tuning: LoRA with rank 64, alpha 128

- Optimizer: AdamW, $\beta = (0.9, 0.999)$, lr = 1e-4, no weight decay

- Schedule: 3% warm-up, cosine decay

- Epochs: 10

- Batch size: 512

- Property calculation: iDrug (Solubility, BBBP, hERG); RDKit (H-bond donors/acceptors, QED)

- Molecular pairs: mmpdb for Matched Molecular Pair Analysis

Models

- Fine-tuned Llama2-7B-Chat with LoRA adapters

- No pre-trained weights released (code and data available)

Evaluation

| Metric | Description |
|---|---|
| Success rate | Fraction of molecules meeting optimization criteria |
| Valid rate | Fraction of generated SMILES that parse as valid molecules |
| Similarity | Tanimoto similarity between input and optimized molecules |
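The three metrics can be aggregated from per-molecule records as sketched below. Validity, success, and similarity are taken as precomputed inputs; the choice to divide by all test molecules (so that invalid outputs count as failures) is an assumption, as are the names `Result` and `summarize`.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Result:
    valid: bool                   # generated SMILES parsed as a molecule
    success: bool                 # optimization criteria were met
    similarity: Optional[float]   # Tanimoto to the input; None if invalid


def summarize(results: List[Result]) -> Dict[str, Optional[float]]:
    """Aggregate valid rate, success rate, and mean similarity."""
    if not results:
        raise ValueError("no results to summarize")
    n = len(results)
    valid = [r for r in results if r.valid]
    sims = [r.similarity for r in valid if r.similarity is not None]
    return {
        "valid_rate": len(valid) / n,
        # Assumption: invalid outputs count as failures.
        "success_rate": sum(1 for r in results if r.valid and r.success) / n,
        "mean_similarity": sum(sims) / len(sims) if sims else None,
    }


toy_results = [
    Result(True, True, 0.7),
    Result(True, False, 0.5),
    Result(False, False, None),
    Result(True, True, 0.6),
]
summary = summarize(toy_results)
```

Averaging similarity over valid outputs only avoids penalizing the similarity score twice for outputs that already count against the valid rate.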

Hardware

- 8 NVIDIA Tesla A100-SXM4-40GB GPUs

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| DrugAssist Code | Code | Not specified | Training and inference code |
| MolOpt-Instructions | Dataset | Not specified | 1M+ molecule pairs, 6 properties |

Paper Information

Citation: Ye, G., Cai, X., Lai, H., Wang, X., Huang, J., Wang, L., Liu, W., & Zeng, X. (2024). DrugAssist: A Large Language Model for Molecule Optimization. Briefings in Bioinformatics, 26(1), bbae693.

@article{ye2024drugassist,

title={DrugAssist: A Large Language Model for Molecule Optimization},

author={Ye, Geyan and Cai, Xibao and Lai, Houtim and Wang, Xing and Huang, Junhong and Wang, Longyue and Liu, Wei and Zeng, Xiangxiang},

journal={Briefings in Bioinformatics},

volume={26},

number={1},

pages={bbae693},

year={2024},

doi={10.1093/bib/bbae693}

}