A Resource for Chemistry-Specific Language Modeling

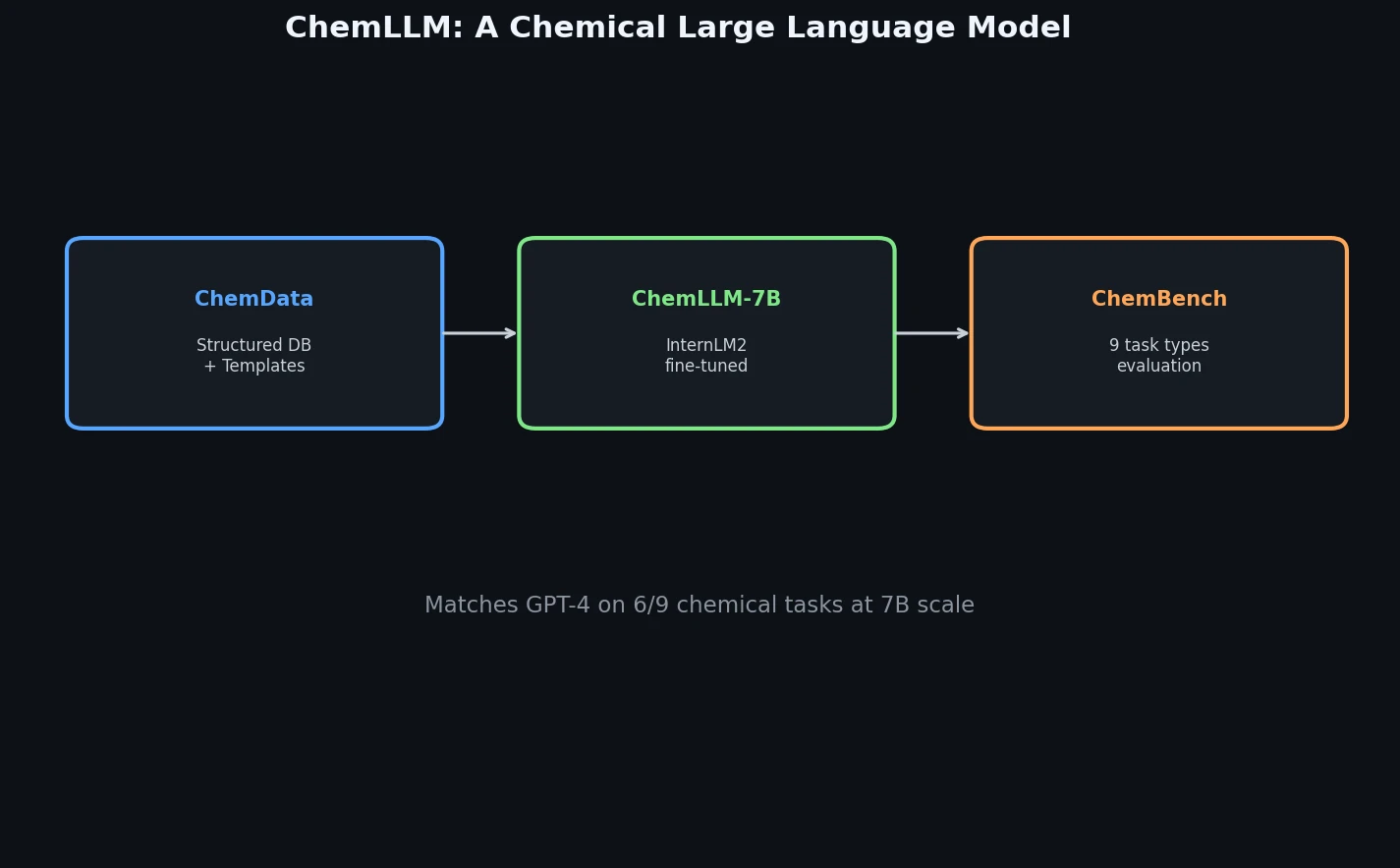

ChemLLM is a Resource paper that delivers three interconnected artifacts: ChemData (a 7M-sample instruction-tuning dataset for chemistry), ChemBench (a 4,100-question multiple-choice benchmark spanning nine chemistry tasks), and ChemLLM itself (a 7B-parameter language model fine-tuned from InternLM2-Base-7B). Together, these components form what the authors present as the first comprehensive framework for building and evaluating LLMs dedicated to the chemical domain. The primary contribution is not a novel architecture but the data curation pipeline, evaluation benchmark, and training methodology that converts structured chemical knowledge into dialogue-formatted instruction data.

Bridging Structured Chemical Databases and Conversational LLMs

While general-purpose LLMs like GPT-4 have shown promise on chemistry tasks, they are not specifically designed for the chemical domain. Several challenges motivate ChemLLM:

Structured data incompatibility: Most chemical information resides in structured databases (PubChem, ChEMBL, ChEBI, ZINC, USPTO) that are not naturally suited for training conversational language models. Using this data directly can degrade natural language processing capabilities.

Molecular notation understanding: Molecules are represented in specialized notations like SMILES, which differ from natural language and require explicit alignment during training.

Task diversity: Chemical tasks span name conversion, property prediction, molecular captioning, retrosynthesis, product prediction, yield prediction, and more. A uniform training pipeline must handle this diversity without task-specific adaptation.

Evaluation gaps: Existing chemical benchmarks (e.g., MoleculeNet) are designed for specialist models, not LLMs. Text-based evaluation metrics like BLEU and ROUGE are sensitive to output style rather than factual correctness, making them unreliable for scientific accuracy assessment.

Prior work focused on developing specialist models for individual downstream tasks while neglecting instruction-following and dialogue capabilities that are essential for broader reasoning and generalization.

Template-Based Instruction Construction from Structured Data

The core innovation is a systematic approach for converting structured chemical data into instruction-tuning format through two techniques:

Seed Template Prompt Technique

For each task type, the authors design a foundational seed template and use GPT-4 to generate variations that differ in expression but maintain semantic consistency. For each structured data entry, one template is randomly selected to create a single-turn dialogue sample. For example, converting IUPAC-to-SMILES entries:

- “Convert the IUPAC name [name] to its corresponding SMILES representation.”

- “What’s the SMILES notation for the chemical known as [name]?”

- “Show me the SMILES sequence for [name], please.”
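The template-sampling step can be sketched in a few lines. This is a minimal illustration, not the authors' pipeline: the function name `to_dialogue`, the `{"role", "content"}` message schema, and the record field names `iupac` and `smiles` are assumptions made for the example.

```python
import random

# Seed template plus paraphrases for the IUPAC-to-SMILES task,
# copied from the examples listed above.
TEMPLATES = [
    "Convert the IUPAC name {name} to its corresponding SMILES representation.",
    "What's the SMILES notation for the chemical known as {name}?",
    "Show me the SMILES sequence for {name}, please.",
]


def to_dialogue(entry, rng):
    """Turn one structured record into a single-turn dialogue sample.

    The message schema and field names are hypothetical.
    """
    template = rng.choice(TEMPLATES)  # one template drawn at random per record
    return [
        {"role": "user", "content": template.format(name=entry["iupac"])},
        {"role": "assistant", "content": entry["smiles"]},
    ]


rng = random.Random(0)  # seeded so the sampled template is reproducible
record = {"iupac": "ethanol", "smiles": "CCO"}
sample = to_dialogue(record, rng)
print(sample)
```

Because the answer always comes from the database record and only the question wording varies, the paraphrases add linguistic diversity without changing the chemical content.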

Play as Playwrights Technique

To generate richer, multi-turn dialogues, the authors prompt GPT-4 with a chain-of-thought (CoT) style “script” construction method. GPT-4 is guided to create multi-turn exchanges that simulate expert discussions, smoothly transitioning between question and answer stages. An additional “answer masking” variant has the model inquire about supplementary chemical information before providing a final answer, simulating realistic expert reasoning.

Training Objective

The model is fine-tuned using LoRA with an autoregressive cross-entropy loss:

$$L_{CE} = -\sum_{c=1}^{M} y_{o,c} \log(p_{o,c})$$

where $M$ is the vocabulary size, $y_{o,c}$ is a binary indicator of whether the target token at position $o$ is vocabulary entry $c$, and $p_{o,c}$ is the model's predicted probability of that entry.
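As a small numeric illustration of this loss, the sketch below computes the per-token cross-entropy from raw logits. Because the indicator is one-hot, the sum over the vocabulary reduces to the negative log-probability of the target token. Averaging over token positions in `sequence_loss` is an assumption for the example; the source gives only the per-token form.

```python
import math


def softmax(logits):
    """Convert logits to probabilities, shifting by the max for stability."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def token_cross_entropy(logits, target_index):
    """Cross-entropy for one position: -log p of the target token."""
    probs = softmax(logits)
    return -math.log(probs[target_index])


def sequence_loss(all_logits, targets):
    """Mean per-token loss over a sequence (averaging is an assumption)."""
    losses = [token_cross_entropy(l, t) for l, t in zip(all_logits, targets)]
    return sum(losses) / len(losses)


# A model that is uniform over a 4-token vocabulary has loss ln(4).
uniform_loss = token_cross_entropy([0.0, 0.0, 0.0, 0.0], target_index=2)
print(uniform_loss)
```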

Two-Stage Training Pipeline and ChemBench Evaluation

Training Setup

ChemLLM uses a two-stage instruction tuning approach built on InternLM2-Base-7B:

Stage 1: Fine-tune on Multi-Corpus (1.7M Q&A pairs from Hugging Face) to enhance general linguistic capabilities, producing InternLM2-Chat-7B.

Stage 2: Fine-tune on a mixture of ChemData (7M entries) and Multi-Corpus, balancing domain-specific chemical expertise with general language ability.

Training details include:

- LoRA with rank 8, scale factor 16.0, dropout 0.1

- AdamW optimizer with initial learning rate $5.0 \times 10^{-5}$

- NEFTune noise injection (alpha = 5) to prevent overfitting

- Flash Attention-2 and KV Cache for efficiency

- ZeRO Stage-2 for parameter offloading

- Per-card batch size of 8 (total batch size 128)

- 1.06 epochs, 85,255 steps

- Training loss reduced from 1.4998 to 0.7158
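For readers unfamiliar with NEFTune, the sketch below shows the noise-injection step in plain Python. It follows the published NEFTune formulation, where uniform noise in [-1, 1] is scaled by alpha / sqrt(L * d) for sequence length L and embedding dimension d. The function name `neftune` and the nested-list representation are assumptions for illustration; a real training run would apply this to embedding tensors, and only during training.

```python
import math
import random


def neftune(embeddings, alpha=5.0, rng=None):
    """Add NEFTune-style uniform noise to a (seq_len x dim) embedding matrix."""
    rng = rng or random.Random()
    seq_len = len(embeddings)
    dim = len(embeddings[0])
    # Noise magnitude shrinks as the sequence or embedding dimension grows.
    scale = alpha / math.sqrt(seq_len * dim)
    return [
        [x + scale * rng.uniform(-1.0, 1.0) for x in row]
        for row in embeddings
    ]


# Toy example: 4 token positions, 8-dimensional embeddings, alpha = 5.
clean = [[0.0] * 8 for _ in range(4)]
noisy = neftune(clean, alpha=5.0, rng=random.Random(0))
```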

ChemData Composition

ChemData spans three principal task categories with 7M instruction-tuning Q&A pairs:

| Category | Tasks |
|---|---|
| Molecules | Name Conversion, Caption2Mol, Mol2Caption, Molecular Property Prediction |
| Reactions | Retrosynthesis, Product Prediction, Yield Prediction, Temperature Prediction, Solvent Prediction |
| Domain-specific | General chemical knowledge for broader chemical space understanding |

Data sources include PubChem, ChEMBL, ChEBI, ZINC, USPTO, ORDerly, ChemRxiv, LibreTexts Chemistry, Wikipedia, and Wikidata.

ChemBench Design

ChemBench contains 4,100 multiple-choice questions across the same nine tasks as ChemData. The choice of multiple-choice format is deliberate: it minimizes the influence of output style and focuses evaluation on factual correctness, unlike BLEU/ROUGE-based evaluation. Wrong answers are generated by sampling nearby values (for prediction tasks) or using GPT-4 to create plausible distractors. Deduplication ensures no overlap between ChemData training entries and ChemBench questions.
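The "sampling nearby values" strategy for prediction tasks might look like the following sketch. Everything specific here is an assumption: the function name `make_numeric_question`, the four-option layout, the 20% relative spread, and the rounding to one decimal place are illustrative choices, not details taken from the paper.

```python
import random


def make_numeric_question(prompt, correct, rng, n_options=4, spread=0.2):
    """Build one multiple-choice item whose distractors lie near the true value."""
    answer = round(correct, 1)
    # Perturbation window; the floor of 1.0 keeps it non-empty when correct is 0.
    width = spread * max(abs(correct), 1.0)
    distractors = set()
    while len(distractors) < n_options - 1:
        candidate = round(correct + rng.uniform(-width, width), 1)
        if candidate != answer:  # never duplicate the correct value
            distractors.add(candidate)
    options = sorted(distractors | {answer})
    rng.shuffle(options)  # randomize the position of the correct answer
    labels = "ABCDEFGH"[:n_options]
    return {
        "question": prompt,
        "options": dict(zip(labels, options)),
        "answer": labels[options.index(answer)],
    }


rng = random.Random(42)
item = make_numeric_question(
    "What is the expected yield (%) of this reaction?", 78.5, rng
)
print(item)
```

Keeping distractors close to the true value makes the question harder than picking among arbitrary numbers, which matters when the goal is to separate chemically trained models from models that guess.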

ChemBench has been contributed to the OpenCompass evaluation platform.

Baselines

All evaluations use 5-shot prompting. Baselines include:

| Model | Type | Parameters |
|---|---|---|
| LLaMA-2 | Open-source | 7B |
| Mistral | Open-source | 7B |
| ChatGLM3 | Open-source | 6B |
| Qwen | Open-source | 7B |
| InternLM2-Chat-7B | Open-source (Stage 1 only) | 7B |
| GPT-3.5 | Closed-source | N/A |
| GPT-4 | Closed-source | N/A |

ChemLLM Matches GPT-4 on Chemical Tasks and Outperforms 7B Peers

Chemical Evaluation (ChemBench)

ChemLLM significantly outperforms general LLMs of similar scale and surpasses GPT-3.5 across all nine tasks. Compared to GPT-4, ChemLLM achieves higher scores on six of nine tasks, with the remaining three ranking just below GPT-4. LLaMA-2 scores near random chance (~25 per task), highlighting the difficulty of these tasks for models without chemical training.

Compared to InternLM2-Chat-7B (the Stage 1 model), ChemLLM shows substantial improvement, confirming the effectiveness of the Stage 2 chemical fine-tuning.

General Evaluation

| Benchmark | ChemLLM | Best 7B Baseline | GPT-4 |
|---|---|---|---|
| MMLU | 65.6 | < 65.6 | Higher |
| C-Eval | 67.2 | < 67.2 | Higher |
| GSM8K | 67.2 | < 67.2 | Higher |
| C-MHChem | 76.4 | < 76.4 | < 76.4 |

ChemLLM outperforms all competing 7B models on MMLU, C-Eval, and GSM8K. On C-MHChem (Chinese middle and high school chemistry), ChemLLM scores 76.4, surpassing GPT-4. The authors note that chemical data fine-tuning may enhance reasoning capabilities due to the logical reasoning required in chemical problem-solving. ChemLLM also comprehensively surpasses InternLM2-Chat-7B on all four general benchmarks, indicating that chemical data does not harm general capabilities.

Qualitative Capabilities

The paper demonstrates qualitative performance on chemistry-related NLP tasks including:

- Chemical literature translation (English to Chinese and vice versa)

- Chemical poetry creation

- Information extraction from chemical text

- Text summarization of chemical research

- Reading comprehension on chemistry topics

- Named entity recognition for chemical entities

- Ethics and safety reasoning in chemical contexts

Limitations

The paper reports per-task ChemBench results only as radar charts, so exact scores for each of the nine tasks and each baseline are not available, which makes precise comparison difficult. The evaluation is limited to 5-shot prompting, with no exploration of zero-shot or chain-of-thought variants. The paper also does not discuss failure modes or systematic weaknesses of ChemLLM on particular task types.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Stage 1 Training | Multi-Corpus | 1.7M Q&A | Collected from Hugging Face |
| Stage 2 Training | ChemData + Multi-Corpus | 7M + 1.7M | Chemical + general mixture |
| Chemical Evaluation | ChemBench | 4,100 MCQ | 9 tasks, contributed to OpenCompass |
| General Evaluation | MMLU, C-Eval, GSM8K, C-MHChem | Varies | Standard benchmarks |

Data sources for ChemData: PubChem, ChEMBL, ChEBI, ZINC, USPTO, ORDerly, ChemRxiv, LibreTexts Chemistry, Wikipedia, Wikidata.

Algorithms

- Two-stage instruction tuning (general then chemical)

- LoRA fine-tuning (rank 8, scale 16.0, dropout 0.1)

- Template-based instruction construction with GPT-4 for diversity

- Play as Playwrights CoT prompting for multi-turn dialogue generation

- NEFTune noise injection (alpha 5)

- DeepSpeed ZeRO++ for distributed training
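To make the LoRA settings concrete, here is a dependency-free sketch of a LoRA-adapted linear layer. It assumes that the reported "scale 16.0" corresponds to the usual LoRA alpha, so the low-rank update is multiplied by alpha / rank = 2. The class name `LoRALinear` is hypothetical, the initialization (small Gaussian `A`, zero `B`) follows the standard LoRA recipe, and dropout is omitted for brevity.

```python
import random


def matvec(matrix, vector):
    """Multiply a nested-list matrix by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


class LoRALinear:
    """y = W x + (alpha / rank) * B (A x), with W frozen and A, B trainable."""

    def __init__(self, weight, rank=8, alpha=16.0, rng=None):
        rng = rng or random.Random(0)
        d_out, d_in = len(weight), len(weight[0])
        self.weight = weight  # frozen pretrained weight, shape (d_out, d_in)
        self.scale = alpha / rank
        # A starts small and random, B starts at zero, so the adapted layer
        # initially reproduces the pretrained layer exactly.
        self.A = [[rng.gauss(0.0, 0.01) for _ in range(d_in)] for _ in range(rank)]
        self.B = [[0.0] * rank for _ in range(d_out)]

    def forward(self, x):
        base = matvec(self.weight, x)
        update = matvec(self.B, matvec(self.A, x))
        return [b + self.scale * u for b, u in zip(base, update)]

    def trainable_parameters(self):
        return len(self.A) * len(self.A[0]) + len(self.B) * len(self.B[0])


# Toy 16x16 identity weight standing in for a pretrained projection matrix.
weight = [[1.0 if i == j else 0.0 for j in range(16)] for i in range(16)]
layer = LoRALinear(weight, rank=8, alpha=16.0)
x = [float(i) for i in range(16)]
print(layer.forward(x) == x, layer.trainable_parameters())
```

For a d_out x d_in matrix, the adapter trains rank * (d_in + d_out) parameters instead of d_in * d_out, which is where the memory savings come from at realistic layer sizes.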

Models

| Model | Base | Parameters | Availability |
|---|---|---|---|
| ChemLLM-7B-Chat | InternLM2-Base-7B | 7B | Hugging Face |
| ChemLLM-7B-Chat-1.5-DPO | InternLM2 | 7B | Hugging Face |
| ChemLLM-20B-Chat-DPO | InternLM | 20B | Hugging Face |

Evaluation

5-shot evaluation across all benchmarks. Multiple-choice format for ChemBench to minimize output style bias.
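A 5-shot multiple-choice evaluation can be sketched as follows. The prompt layout, the helper names (`format_item`, `build_few_shot_prompt`, `accuracy`), and the toy questions are all assumptions for illustration; they are not the actual ChemBench or OpenCompass templates.

```python
def format_item(item, with_answer):
    """Render one multiple-choice item; leave the answer blank for the query."""
    lines = [f"Question: {item['question']}"]
    lines += [f"{label}. {text}" for label, text in item["options"].items()]
    lines.append(f"Answer: {item['answer']}" if with_answer else "Answer:")
    return "\n".join(lines)


def build_few_shot_prompt(shots, query):
    """Concatenate solved examples followed by the unsolved query."""
    blocks = [format_item(s, with_answer=True) for s in shots]
    blocks.append(format_item(query, with_answer=False))
    return "\n\n".join(blocks)


def accuracy(predictions, items):
    """Percentage of items whose first predicted letter matches the key."""
    correct = sum(
        p.strip().upper()[:1] == it["answer"] for p, it in zip(predictions, items)
    )
    return 100.0 * correct / len(items)


# Toy items (hypothetical): every question shares the same four options.
OPTIONS = {"A": "O", "B": "CCO", "C": "C", "D": "N"}


def toy_item(name, answer):
    return {
        "question": f"Which SMILES string represents {name}?",
        "options": OPTIONS,
        "answer": answer,
    }


shots = [
    toy_item("water", "A"),
    toy_item("ethanol", "B"),
    toy_item("methane", "C"),
    toy_item("ammonia", "D"),
    toy_item("ethyl alcohol", "B"),
]
query = toy_item("oxidane", "A")
prompt = build_few_shot_prompt(shots, query)
print(prompt)
```

Scoring only the first letter of the model's reply is what makes the metric insensitive to output style: a verbose and a terse answer with the same choice receive the same credit.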

Hardware

- 2 machines, each with 8 NVIDIA A100 SXM GPUs

- 2 AMD EPYC 7742 64-core CPUs per machine (256 threads per machine)

- SLURM cluster management

- BF16 mixed precision training

- Flash Attention-2 + KV Cache
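As a quick consistency check, the reported total batch size follows from the hardware listed here and the per-card batch size of 8. The sketch assumes no gradient accumulation (an accumulation factor of 1), which the source does not state explicitly.

```python
machines = 2
gpus_per_machine = 8
per_card_batch = 8
grad_accum_steps = 1  # assumption: not stated in the source

# Samples consumed per optimizer step across all GPUs.
total_batch = machines * gpus_per_machine * per_card_batch * grad_accum_steps

# Samples processed over the whole run at the reported step count.
steps = 85_255
samples_processed = total_batch * steps

print(total_batch, samples_processed)
```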

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| ChemLLM-7B-Chat | Model | Apache-2.0 | Original 7B chat model |
| ChemLLM-7B-Chat-1.5-DPO | Model | Other | Updated v1.5 with DPO |
| ChemLLM-20B-Chat-DPO | Model | Apache-2.0 | 20B parameter variant |
| AI4Chem HuggingFace | Collection | Various | All models, datasets, and code |

Paper Information

Citation: Zhang, D., Liu, W., Tan, Q., Chen, J., Yan, H., Yan, Y., Li, J., Huang, W., Yue, X., Ouyang, W., Zhou, D., Zhang, S., Su, M., Zhong, H.-S., & Li, Y. (2024). ChemLLM: A Chemical Large Language Model. arXiv preprint arXiv:2402.06852.

@article{zhang2024chemllm,
  title={ChemLLM: A Chemical Large Language Model},
  author={Zhang, Di and Liu, Wei and Tan, Qian and Chen, Jingdan and Yan, Hang and Yan, Yuliang and Li, Jiatong and Huang, Weiran and Yue, Xiangyu and Ouyang, Wanli and Zhou, Dongzhan and Zhang, Shufei and Su, Mao and Zhong, Han-Sen and Li, Yuqiang},
  journal={arXiv preprint arXiv:2402.06852},
  year={2024}
}