An LLM-Powered Chemistry Agent

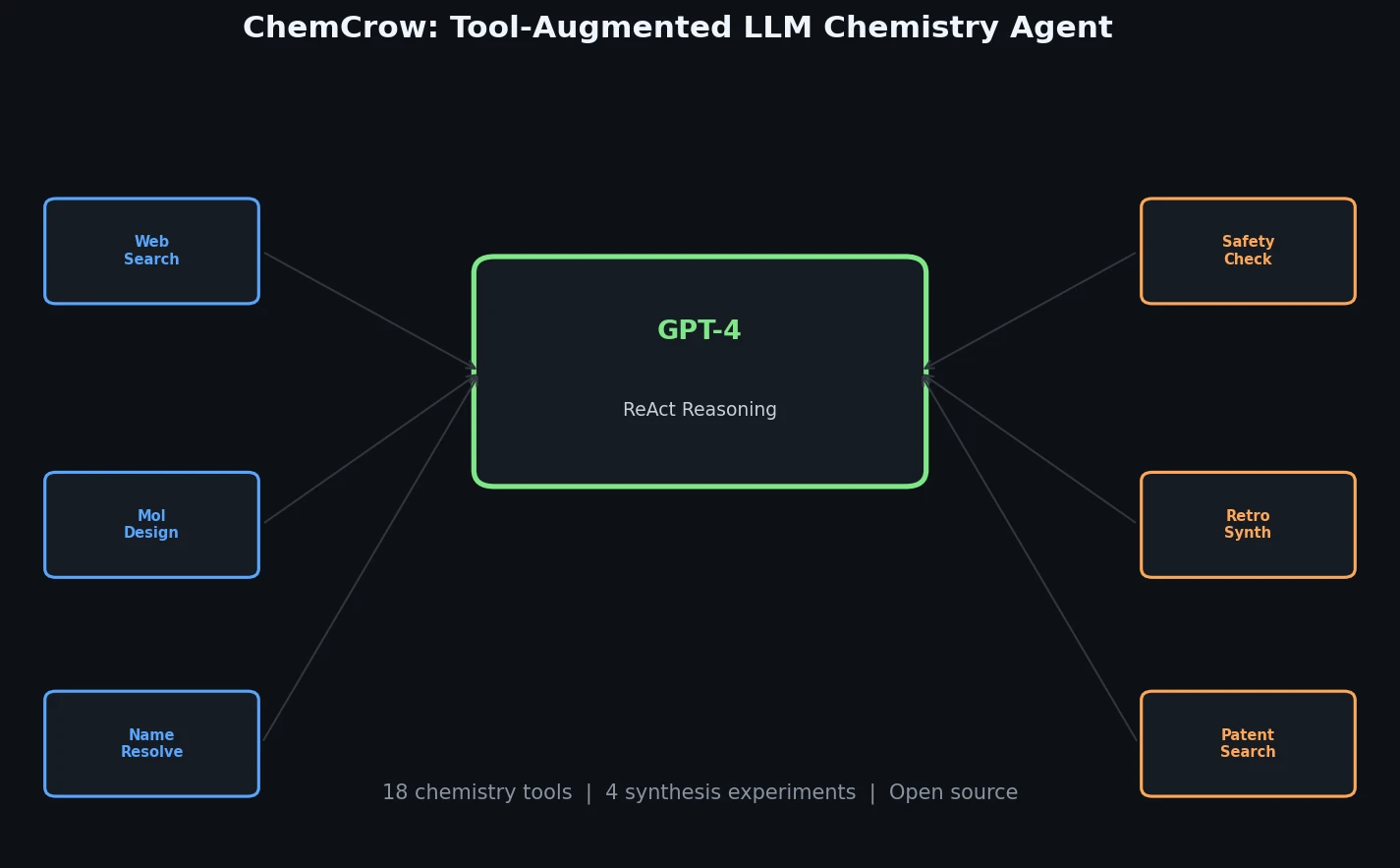

This is a Method paper that introduces ChemCrow, an LLM chemistry agent that augments GPT-4 with 18 expert-designed tools to accomplish tasks across organic synthesis, drug discovery, and materials design. Rather than relying on the LLM’s internal knowledge (which is often inaccurate for chemistry), ChemCrow uses the LLM as a reasoning engine that iteratively calls specialized tools to gather information, plan actions, and execute experiments. The system successfully planned and executed real-world chemical syntheses on a robotic platform, demonstrating one of the first chemistry-related LLM agent interactions with the physical world.

Bridging LLM Reasoning and Chemical Expertise

Large language models have transformed many domains, but they struggle with chemistry-specific problems. GPT-4 cannot reliably perform basic operations like multiplying large numbers, converting IUPAC names to molecular structures, or predicting reaction outcomes. These limitations stem from the models’ token-prediction design, which does not encode chemical reasoning or factual chemical knowledge reliably.

Meanwhile, the chemistry community has developed numerous specialized computational tools for reaction prediction, retrosynthesis planning, molecular property prediction, and de novo molecular generation. These tools exist in isolated environments with steep learning curves, making them difficult for experimental chemists to integrate and use together. The gap between LLM reasoning capabilities and specialized chemistry tools presents an opportunity: augmenting LLMs with these tools could compensate for the models’ chemical knowledge deficiencies while providing a natural language interface to specialized computational chemistry capabilities.

Tool-Augmented Reasoning via ReAct

ChemCrow builds on the ReAct (Reasoning and Acting) framework, in which the LLM follows an iterative loop of four steps: Thought, Action, Action Input, and Observation. At each iteration, the model reasons about the current state of the task, selects an appropriate tool, provides its input, pauses while the tool executes, and then incorporates the returned observation before deciding on the next step. The loop continues until the model reaches a final answer.
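The loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not ChemCrow's implementation: `scripted_llm` is a hypothetical stand-in for the GPT-4 call, the `TOOLS` registry holds a single hard-coded stub, and the text format of each step is assumed for the example.

```python
import re
from typing import Callable, Dict

# Hypothetical tool registry; the single stub below is hard-coded for illustration.
TOOLS: Dict[str, Callable[[str], str]] = {
    "SMILES2Weight": lambda smiles: "44.05" if smiles == "CC=O" else "unknown",
}


def scripted_llm(transcript: str) -> str:
    """Stand-in for the LLM: picks the next step from what the transcript contains."""
    if "Observation:" not in transcript:
        return (
            "Thought: I need the molecular weight.\n"
            "Action: SMILES2Weight\n"
            "Action Input: CC=O"
        )
    return "Thought: I have the value.\nFinal Answer: 44.05 g/mol"


# Matches an Action line immediately followed by an Action Input line.
STEP = re.compile(r"Action:\s*(.+)\nAction Input:\s*(.+)")


def react_loop(question: str,
               llm: Callable[[str], str] = scripted_llm,
               max_steps: int = 5) -> str:
    """Run Thought / Action / Action Input / Observation until a final answer."""
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)
        transcript += step + "\n"
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = STEP.search(step)
        if match is None:
            transcript += "Observation: could not parse an action\n"
            continue
        name, tool_input = match.group(1).strip(), match.group(2).strip()
        tool = TOOLS.get(name)
        observation = tool(tool_input) if tool else f"unknown tool {name}"
        # The observation is appended so the next LLM call can reason over it.
        transcript += f"Observation: {observation}\n"
    return "No answer within step budget"
```

The step budget (`max_steps`) is a design choice of this sketch: it keeps a model that never emits a final answer from looping indefinitely.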

The system integrates 18 tools organized into four categories:

General tools include web search (via SerpAPI), literature search (using paper-qa with OpenAI embeddings and FAISS), a Python REPL for arbitrary code execution, and a human interaction interface.

Molecule tools cover Name2SMILES (converting molecule names to SMILES via Chem-Space, PubChem, and OPSIN), SMILES2Price (checking purchasability via molbloom and ZINC20), Name2CAS (CAS number lookup via PubChem), molecular Similarity (Tanimoto similarity with ECFP2 fingerprints), ModifyMol (local chemical space exploration via SynSpace), PatentCheck (bloom filter patent lookup via molbloom), FuncGroups (functional group identification via SMARTS patterns), and SMILES2Weight (molecular weight calculation via RDKit).
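Two of the molecule tools above (SMILES2Price and PatentCheck) rely on bloom-filter lookups through molbloom. The sketch below illustrates the general idea of a bloom filter in plain Python. It is not molbloom's API or implementation: the class, its parameters, and the example catalog are all assumptions made for illustration.

```python
import hashlib
from typing import Iterator


class BloomFilter:
    """Toy bloom filter: never reports a false negative, may report false positives."""

    def __init__(self, num_bits: int = 1024, num_hashes: int = 3) -> None:
        self.num_bits = num_bits
        self.num_hashes = num_hashes
        self.bits = bytearray(num_bits)  # one byte per bit, for readability

    def _positions(self, item: str) -> Iterator[int]:
        # Derive num_hashes positions by salting a single hash function.
        for seed in range(self.num_hashes):
            digest = hashlib.sha256(f"{seed}:{item}".encode("utf-8")).digest()
            yield int.from_bytes(digest[:8], "big") % self.num_bits

    def add(self, item: str) -> None:
        for pos in self._positions(item):
            self.bits[pos] = 1

    def __contains__(self, item: str) -> bool:
        # True means "possibly present"; False means "definitely absent".
        return all(self.bits[pos] for pos in self._positions(item))


# Hypothetical catalog of purchasable molecules, keyed by SMILES string.
catalog = BloomFilter()
for smiles in ["CCO", "CC(=O)O", "c1ccccc1"]:
    catalog.add(smiles)
```

Because membership is tested on the exact string, a lookup of this kind only works if every SMILES is written in the same canonical form before it is added or queried; the sketch assumes that has already been done.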

Safety tools include ControlledChemicalCheck (screening against chemical weapons lists from OPCW and the Australia Group), ExplosiveCheck (GHS explosive classification via PubChem), and SafetySummary (comprehensive safety overview from PubChem data).

Chemical reaction tools include NameRXN (reaction classification via NextMove Software), ReactionPredict (product prediction via IBM’s RXN4Chemistry API using the Molecular Transformer), ReactionPlanner (multi-step synthesis planning via RXN4Chemistry), and ReactionExecute (direct synthesis execution on IBM’s RoboRXN robotic platform).

A key design feature is that safety checks are automatically invoked before synthesis execution. If a molecule is flagged as a controlled chemical or precursor, execution stops immediately.
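The gating behaviour described above can be sketched as a wrapper that refuses to call the execution step when the check fails. All names here (`SafetyVerdict`, `check_controlled`, `guarded_execute`) and the placeholder list entries are hypothetical; the real tools screen against the OPCW and Australia Group lists.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class SafetyVerdict:
    allowed: bool
    reason: str


# Placeholder identifiers, invented for illustration only.
HYPOTHETICAL_CONTROLLED = frozenset({"CONTROLLED-A", "CONTROLLED-B"})


def check_controlled(molecule_id: str,
                     controlled: Iterable[str] = HYPOTHETICAL_CONTROLLED) -> SafetyVerdict:
    """Return a verdict saying whether the molecule matches the controlled list."""
    if molecule_id in set(controlled):
        return SafetyVerdict(False, f"{molecule_id} is on the controlled list")
    return SafetyVerdict(True, "no match on the controlled list")


def guarded_execute(molecule_id: str, execute: Callable[[str], str]) -> str:
    """Run the safety check first; call `execute` only if the check passes."""
    verdict = check_controlled(molecule_id)
    if not verdict.allowed:
        return f"STOPPED: {verdict.reason}"
    return execute(molecule_id)
```

Putting the check inside the wrapper, rather than leaving it to the caller, means the execution function cannot be reached without passing through the screen.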

Experimental Validation and Evaluation

Autonomous Synthesis

ChemCrow autonomously planned and executed four real-world syntheses on the IBM RoboRXN cloud-connected robotic platform:

- DEET (insect repellent), from the prompt “Plan and execute the synthesis of an insect repellent”

- Three thiourea organocatalysts (Schreiner’s, Ricci’s, and Takemoto’s catalysts), from a prompt asking to find and synthesize a thiourea organocatalyst that accelerates the Diels-Alder reaction

All four syntheses yielded the anticipated compounds. ChemCrow demonstrated the ability to autonomously adapt synthesis procedures when the RoboRXN platform flagged issues (such as insufficient solvent or invalid purification actions), iteratively modifying the procedure until it was valid.
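The adapt-and-retry behaviour can be pictured as a validate-and-repair loop. The sketch below is purely illustrative: `validate` stands in for the platform's checks, `repair` stands in for the LLM's edit of the procedure, and the solvent threshold and increment are invented numbers, not RoboRXN values.

```python
from typing import Dict, List

Procedure = Dict[str, float]  # hypothetical: parameter name -> value


def validate(procedure: Procedure) -> List[str]:
    """Stand-in for platform validation; the 5 mL threshold is invented."""
    issues = []
    if procedure.get("solvent_ml", 0.0) < 5.0:
        issues.append("insufficient solvent")
    return issues


def repair(procedure: Procedure, issue: str) -> Procedure:
    """Stand-in for the LLM's fix: one fixed rule per known issue."""
    fixed = dict(procedure)  # copy so the caller's procedure is not mutated
    if issue == "insufficient solvent":
        fixed["solvent_ml"] = fixed.get("solvent_ml", 0.0) + 2.0
    return fixed


def refine_until_valid(procedure: Procedure, max_rounds: int = 10) -> Procedure:
    """Alternate validation and repair until no issues remain."""
    for _ in range(max_rounds):
        issues = validate(procedure)
        if not issues:
            return procedure
        for issue in issues:
            procedure = repair(procedure, issue)
    raise RuntimeError("procedure still invalid after max_rounds")
```

Starting from `{"solvent_ml": 1.0}`, the loop adds solvent twice and returns once validation passes; the round limit guards against a repair rule that never converges.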

Novel Chromophore Discovery

In a human-AI collaboration scenario, ChemCrow was instructed to train a machine learning model to screen candidate chromophores. The system loaded and cleaned data from a chromophore database, trained and evaluated a random forest model, and suggested a molecule for a target absorption maximum of 369 nm. The proposed molecule was subsequently synthesized and characterized. Its measured absorption maximum was 336 nm, 33 nm below the target, but the result confirmed the discovery of a new chromophore.

Expert vs. LLM Evaluation

The evaluation used 14 use cases spanning synthesis planning, molecular design, and chemical logic. Both ChemCrow and standalone GPT-4 (without tools) were evaluated by:

- Expert human evaluators (n=4): Assessed correctness of chemistry, quality of reasoning, and degree of task completion

- EvaluatorGPT: An LLM evaluator prompted to assess responses

Key findings from the evaluation:

| Evaluator | Preferred System | Basis for Preference |
|---|---|---|
| Human experts | ChemCrow | Better chemical accuracy and task completeness, especially on complex tasks |
| EvaluatorGPT | GPT-4 | Favored fluent, complete-sounding responses despite factual errors |

Human experts preferred ChemCrow across most tasks, with the exception of very simple tasks where GPT-4 could answer from memorized training data (e.g., synthesis of well-known molecules like paracetamol). GPT-4 without tools consistently produced hallucinations that appeared convincing but were factually incorrect upon expert inspection.

An important finding is that LLM-based evaluation (EvaluatorGPT) cannot replace expert human assessment for scientific tasks. The LLM evaluator lacks the domain knowledge needed to distinguish fluent but incorrect answers from accurate ones, rendering it unsuitable for benchmarking factuality in chemistry.

Key Findings and Limitations

ChemCrow demonstrates that augmenting LLMs with expert-designed tools transforms them from “hyperconfident, typically wrong information sources” into reasoning engines that can gather and act on accurate chemical information. The system lowers the barrier for non-experts to access computational chemistry tools through natural language while serving as an assistant to expert chemists.

Several limitations are acknowledged:

- Tool dependency: ChemCrow’s performance is bounded by the quality and coverage of its tools. Improved synthesis engines would directly improve synthesis planning capabilities.

- Reasoning failures: Tools provide no benefit if the LLM reasons incorrectly about when and how to use them, or if it passes them malformed inputs.

- Reproducibility: The API-based approach to closed-source LLMs (GPT-4) limits reproducibility of individual results. The authors note that open-source models could address this, potentially at the cost of reasoning quality.

- Evaluation scope: The 14 evaluation tasks, while diverse, represent a limited test set. Standardized benchmarks for LLM-based chemistry tools did not exist at the time of publication.

- Safety considerations: While safety tools prevent execution of controlled chemical syntheses, risks remain from inaccurate reasoning or tool outputs leading to suboptimal conclusions.

The authors emphasize that ChemCrow’s modular design allows easy extension with new tools, and that future integration of image-processing tools, additional language-based tools, and other capabilities could substantially enhance the system.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Chromophore screening | DB for chromophore (Joung et al.) | Not specified | Used for training random forest model |
| Evaluation | 14 expert-designed tasks | 14 tasks | Spanning synthesis, molecular design, and chemical logic |
| Chemical safety | OPCW Schedules 1-3, Australia Group lists | Not specified | Used for controlled chemical screening |

Algorithms

- LLM: GPT-4 with temperature 0.1

- Framework: LangChain for tool integration

- Reasoning: ReAct (Reasoning + Acting) framework with chain-of-thought prompting

- Synthesis planning: IBM RXN4Chemistry API (Molecular Transformer-based)

- Molecule similarity: Tanimoto similarity with ECFP2 fingerprints via RDKit

- Chemical space exploration: SynSpace with 50 robust medicinal chemistry reactions
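The molecule similarity entry in the list above uses the Tanimoto coefficient, which for two fingerprints viewed as sets of "on" features is the size of their intersection divided by the size of their union. The sketch below computes it on plain Python sets so that it runs without RDKit; the feature IDs are made up, and in practice the sets would come from a fingerprinting library rather than being written by hand.

```python
from typing import AbstractSet, Hashable


def tanimoto(a: AbstractSet[Hashable], b: AbstractSet[Hashable]) -> float:
    """Tanimoto coefficient |a & b| / |a | b| for two feature sets."""
    if not a and not b:
        # Convention chosen for this sketch: two empty fingerprints score 0.0.
        return 0.0
    return len(a & b) / len(a | b)


# Made-up feature IDs standing in for the "on" bits of two fingerprints.
fp_a = {1, 2, 3, 4}
fp_b = {3, 4, 5, 6}

similarity = tanimoto(fp_a, fp_b)  # 2 shared features out of 6 distinct ones
```

The score ranges from 0 (no shared features) to 1 (identical feature sets), which is what makes it convenient as a similarity measure between molecules.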

Models

- GPT-4 (OpenAI, closed-source) for reasoning

- Random forest for chromophore screening (trained on the fly)

- Molecular Transformer via RXN4Chemistry API for reaction prediction and retrosynthesis

Evaluation

- Human evaluation: 4 expert chemists rated responses on chemistry correctness, reasoning quality, and task completion

- LLM evaluation: EvaluatorGPT assessed responses (found unreliable for factuality)

- Experimental validation: 4 syntheses on RoboRXN platform, 1 novel chromophore characterization

Hardware

Hardware requirements are not specified in the paper. The system relies primarily on API calls to GPT-4 and RXN4Chemistry, so local compute requirements are minimal.

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| chemcrow-public | Code | MIT | Open-source implementation with 12 of 18 tools |
| chemcrow-runs | Data | Not specified | All experiment outputs and evaluation data |
| Zenodo release (code) | Code | MIT | Archived release v0.3.24 |
| Zenodo release (runs) | Data | Not specified | Archived experiment runs |

Paper Information

Citation: Bran, A. M., Cox, S., Schilter, O., Baldassari, C., White, A. D., & Schwaller, P. (2024). Augmenting large language models with chemistry tools. Nature Machine Intelligence, 6(5), 525-535.

@article{bran2024augmenting,
  title={Augmenting large language models with chemistry tools},
  author={Bran, Andres M. and Cox, Sam and Schilter, Oliver and Baldassari, Carlo and White, Andrew D. and Schwaller, Philippe},
  journal={Nature Machine Intelligence},
  volume={6},
  number={5},
  pages={525--535},
  year={2024},
  publisher={Nature Publishing Group},
  doi={10.1038/s42256-024-00832-8}
}