A Framework for Conversational Drug Editing with LLMs

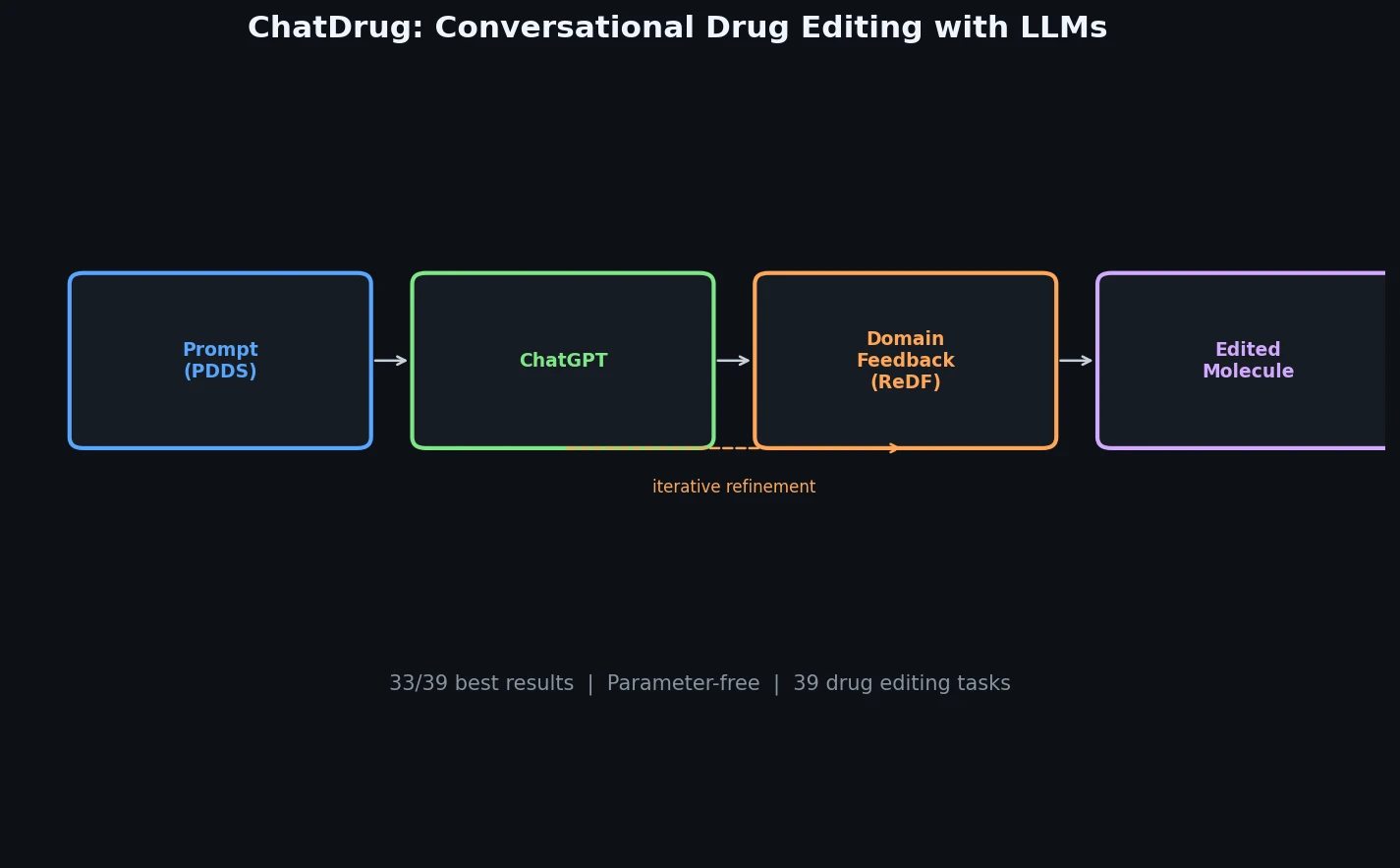

ChatDrug is a Method paper that introduces a parameter-free framework for drug editing using conversational large language models (specifically ChatGPT/GPT-3.5). The primary contribution is a three-module pipeline that combines prompt engineering, retrieval-augmented domain feedback, and iterative conversation to perform text-guided editing of small molecules, peptides, and proteins. The paper also establishes a benchmark of 39 drug editing tasks spanning these three drug types.

Bridging Conversational AI and Drug Discovery

Drug editing (also called lead optimization or protein design) is a critical step in the drug discovery pipeline where molecular substructures are modified to achieve desired properties. Traditional approaches rely on domain experts for manual editing, which can be subjective and biased. Recent multi-modal approaches like MoleculeSTM and ProteinDT have started exploring text-guided drug editing, but they are domain-specific (limited to one drug type) and lack conversational capabilities for iterative refinement.

The authors identify three properties of conversational LLMs that make them suitable for drug discovery: (1) pretraining on comprehensive knowledge bases covering drug-related concepts, (2) strong few-shot adaptation and generalization abilities, and (3) interactive communication enabling iterative feedback incorporation. However, directly applying LLMs to drug editing yields suboptimal results because the models do not fully utilize prior domain knowledge. ChatDrug addresses this gap through structured retrieval and feedback mechanisms.

Three-Module Pipeline: PDDS, ReDF, and Conversation

ChatDrug consists of three modules that operate sequentially without any parameter learning.

PDDS Module (Prompt Design for Domain-Specific)

The PDDS module constructs domain-specific prompts for ChatGPT. Given an input drug $\pmb{x}_{\text{in}}$ and a text prompt $\pmb{x}_t$ describing the desired property change, the goal is:

$$ \pmb{x}_{\text{out}} = \text{ChatDrug}(\pmb{x}_{\text{in}}, \pmb{x}_t) $$

The prompts are designed around high-level property descriptions (e.g., “more soluble in water”) rather than exact substructure replacements. The authors argue that ChatDrug is better suited for “fuzzy searching” (property-based editing with non-deterministic answers) rather than “exact searching” (precise substructure replacement that experts can do directly).

ReDF Module (Retrieval and Domain Feedback)

The ReDF module retrieves structurally similar examples from a domain-specific database and injects them into the conversation as demonstrations. For an input drug $\pmb{x}_{\text{in}}$, a candidate drug $\tilde{\pmb{x}}$ that failed the desired property change, and a retrieval database, ReDF returns:

$$ \pmb{x}_R = \text{ReDF}(\pmb{x}_{\text{in}}, \tilde{\pmb{x}}; \pmb{x}_t) = \underset{\pmb{x}’_R \in \text{RetrievalDB}}{\arg\max} \langle \tilde{\pmb{x}}, \pmb{x}’_R \rangle \wedge D(\pmb{x}_{\text{in}}, \pmb{x}’_R; \pmb{x}_t) $$

where $D(\cdot, \cdot; \cdot) \in {\text{True}, \text{False}}$ is a domain feedback function checking whether the retrieved drug satisfies the desired property change, and $\langle \tilde{\pmb{x}}, \pmb{x}’_R \rangle$ is a similarity function (Tanimoto similarity for small molecules, Levenshtein distance for peptides and proteins).

The retrieved example $\pmb{x}_R$ is injected into the prompt as: “Your provided sequence [$\tilde{\pmb{x}}$] is not correct. We find a sequence [$\pmb{x}_R$] which is correct and similar to the molecule you provided. Can you give me a new molecule?”

Conversation Module

The conversation module enables iterative refinement over $C$ rounds. At each round $c$, if the edited drug $\pmb{x}_c$ does not satisfy the evaluation condition, ChatDrug retrieves a new example via ReDF using $\tilde{\pmb{x}} = \pmb{x}_c$ and continues the conversation. This aligns with the iterative nature of real drug discovery workflows.

Experiments Across 39 Drug Editing Tasks

Task Design

The benchmark includes 39 tasks across three drug types:

- Small molecules (28 tasks): 16 single-objective (tasks 101-108, each with loose and strict thresholds) and 12 multi-objective tasks (tasks 201-206, each with two thresholds). Properties include solubility (LogP), drug-likeness (QED), permeability (tPSA), hydrogen bond acceptors/donors.

- Peptides (9 tasks): 6 single-objective and 3 multi-objective tasks for editing peptide-MHC binding affinity across different HLA allele types.

- Proteins (2 tasks): Editing protein sequences to increase alpha-helix or beta-strand secondary structures.

Baselines

For small molecules, baselines include Random, PCA, High-Variance, and GS-Mutate (all based on MegaMolBART), plus MoleculeSTM with SMILES and Graph representations. For peptides and proteins, random mutation baselines with 1-3 mutated positions are used.

Main Results

ChatDrug achieves the best performance on 33 out of 39 tasks. Key results for small molecule editing (hit ratio):

| Task | Property | ChatDrug (loose) | Best Baseline (loose) |

|---|---|---|---|

| 101 | More soluble | 94.13 | 67.86 (MoleculeSTM-Graph) |

| 102 | Less soluble | 96.86 | 64.79 (MoleculeSTM-Graph) |

| 106 | Lower permeability | 77.35 | 34.13 (MoleculeSTM-SMILES) |

| 107 | More HBA | 95.35 | 54.01 (MoleculeSTM-SMILES) |

| 108 | More HBD | 96.54 | 60.97 (MoleculeSTM-Graph) |

ChatDrug underperforms on tasks 104 (less like a drug) and 105 (higher permeability) and most multi-objective tasks involving permeability (205), where MoleculeSTM variants perform better.

For peptide editing, ChatDrug achieves 41-69% hit ratios compared to 0.4-14.4% for random mutation baselines. For protein editing, ChatDrug reaches 34.79% and 51.38% hit ratios on helix and strand tasks respectively, compared to 26.90% and 21.44% for the best random mutation baseline.

Ablation Studies

Conversation rounds: Performance increases with more rounds, converging around $C = 2$. For example, on task 101 (loose threshold), zero-shot achieves 78.26%, $C = 1$ reaches 89.56%, and $C = 2$ reaches 93.37%.

ReDF threshold: Using a stricter threshold in the domain feedback function $D$ (matching the evaluation threshold) yields substantially higher performance than using a loose threshold. For example, on task 107 with strict evaluation, the strict-threshold ReDF achieves 72.60% vs. 14.96% for the loose-threshold ReDF.

Similarity analysis: Retrieved molecules $\pmb{x}_R$ tend to have lower similarity to input molecules than the intermediate outputs $\pmb{x}_1$, yet they have higher hit ratios. This suggests the ReDF module explores the chemical space effectively, and the conversation module balances similarity preservation with property optimization.

Knowledge extraction: ChatDrug can articulate domain-specific reasoning for its edits (e.g., summarizing rules for increasing water solubility by introducing polar functional groups), though the extracted knowledge shows some redundancy.

Limitations and Future Directions

ChatDrug demonstrates that conversational LLMs can serve as useful tools for drug editing, achieving strong results across diverse drug types with a parameter-free approach. The framework exhibits open vocabulary and compositional properties, allowing it to handle novel drug concepts and multi-objective tasks through natural language.

The authors acknowledge two main limitations. First, ChatDrug struggles with understanding complex 3D drug geometries, which would require deeper geometric modeling. Second, the framework requires multiple conversation rounds to achieve strong performance, adding computational cost through repeated API calls. The authors suggest that knowledge summarization capabilities of LLMs could help reduce this cost.

The evaluation relies entirely on computational oracles (RDKit for small molecules, MHCflurry2.0 for peptides, ProteinCLAP for proteins) rather than wet-lab validation. The hit ratio metric also excludes invalid outputs from the denominator, so the effective success rate on all attempted edits may be lower than reported.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |

|---|---|---|---|

| Small molecule inputs | ZINC | 200 molecules | Sampled SMILES strings |

| Small molecule retrieval DB | ZINC | 10K molecules | For ReDF similarity search |

| Peptide inputs | Peptide-MHC binding dataset | 500 peptides per task | From 30 common MHC alleles |

| Peptide retrieval DB | Experimental binding data | Varies by allele | Target allele experimental data |

| Protein inputs | TAPE test set | Varies | Secondary structure prediction test data |

| Protein retrieval DB | TAPE training set | Varies | Secondary structure prediction training data |

Algorithms

- GPT-3.5-turbo via OpenAI ChatCompletion API, temperature=0, frequency_penalty=0.2

- System prompt: “You are an expert in the field of molecular chemistry.”

- $C = 2$ conversation rounds for main results

- 5 random seeds (0-4) for small molecule main results, seed 0 for ablations

Models

- ChatGPT (GPT-3.5-turbo): used as-is, no fine-tuning

- MHCflurry 2.0: pseudo-oracle for peptide binding affinity evaluation

- ProteinCLAP-EBM-NCE from ProteinDT: protein secondary structure prediction

- ESMFold: protein folding for visualization

- RDKit: molecular property calculations for small molecules

Evaluation

| Metric | Description | Notes |

|---|---|---|

| Hit Ratio | Fraction of valid edits satisfying property requirements | Invalid sequences excluded from denominator |

Hardware

All experiments conducted on a single NVIDIA RTX A6000 GPU (used only for peptide and protein evaluation). Total OpenAI API cost was less than $100.

| Artifact | Type | License | Notes |

|---|---|---|---|

| ChatDrug GitHub | Code | Not specified | Official implementation |

Paper Information

Citation: Liu, S., Wang, J., Yang, Y., Wang, C., Liu, L., Guo, H., & Xiao, C. (2024). Conversational Drug Editing Using Retrieval and Domain Feedback. ICLR 2024.

@inproceedings{liu2024chatdrug,

title={Conversational Drug Editing Using Retrieval and Domain Feedback},

author={Liu, Shengchao and Wang, Jiongxiao and Yang, Yijin and Wang, Chengpeng and Liu, Ling and Guo, Hongyu and Xiao, Chaowei},

booktitle={International Conference on Learning Representations},

year={2024}

}