Key Contribution

GEOM addresses the gap between 2D molecular graphs and properties that depend on flexible 3D structure by providing 450k+ molecules with 37M+ conformations. This extensive sampling links conformer ensembles to experimental properties and supplies the data needed to benchmark conformer generation methods and train 3D-aware property predictors.

Overview

The Geometric Ensemble Of Molecules (GEOM) dataset provides energy-annotated molecular conformations generated through systematic computational methods. The dataset includes molecules from drug discovery campaigns (AICures), quantum chemistry benchmarks (QM9), and molecular property prediction benchmarks (MoleculeNet), with conformations sampled using CREST/GFN2-xTB and a subset refined with high-quality DFT calculations.

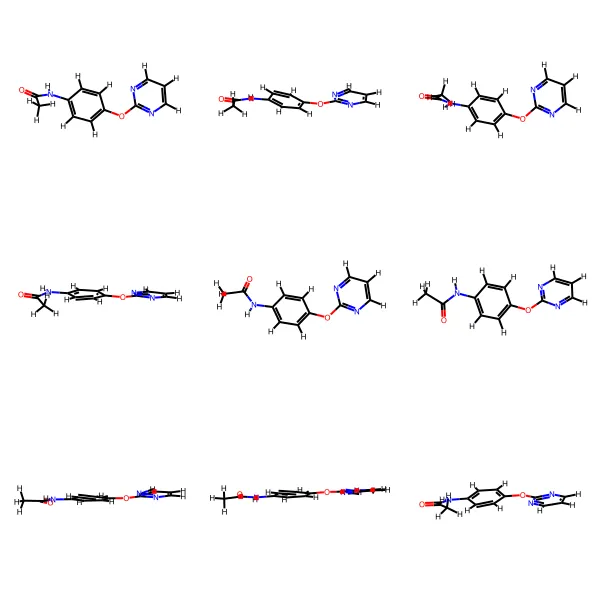

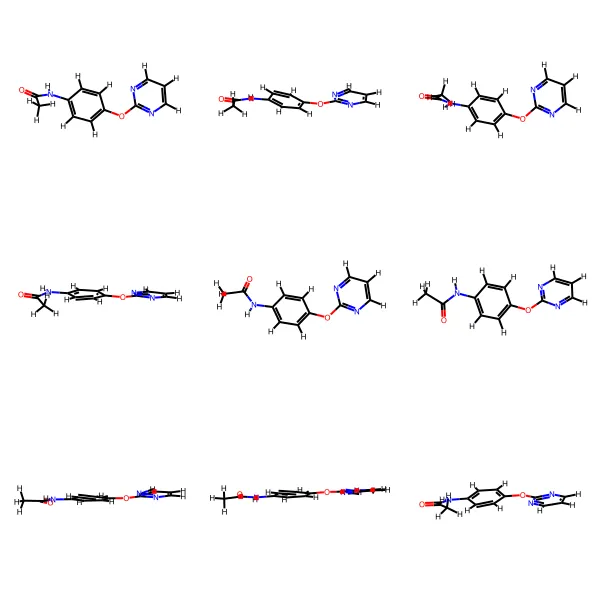

Dataset Examples

Dataset Subsets

| Subset | Count | Description |
|---|---|---|
| Drug-like (AICures) | 304,466 molecules | Drug-like molecules from AICures COVID-19 challenge (avg 44 atoms) |
| QM9 | 133,258 molecules | Small molecules from QM9 (up to 9 heavy atoms) |
| MoleculeNet | 16,865 molecules | Molecules from MoleculeNet benchmarks for physical chemistry, biophysics, and physiology (includes BACE) |
| BACE (High-quality DFT) | 1,511 molecules | BACE subset of MoleculeNet with high-quality DFT energies (r2scan-3c) and experimental inhibition data |

Benchmarks

Gibbs Free Energy Prediction

Predict ensemble Gibbs free energy (G) from molecular structure

| Rank | Model | Description | MAE (kcal/mol) |
|---|---|---|---|
| 🥇 1 | SchNetFeatures | 3D SchNet + graph features (trained on highest-probability conformer) | 0.203 |
| 🥈 2 | ChemProp | Message Passing Neural Network (graph model) | 0.225 |
| 🥉 3 | FFNN | Feed-forward network on Morgan fingerprints | 0.274 |
| 4 | KRR | Kernel Ridge Regression on Morgan fingerprints | 0.289 |
| 5 | Random Forest | Random Forest on Morgan fingerprints | 0.406 |

Average Energy Prediction

Predict ensemble average energy (E) from molecular structure

| Rank | Model | Description | MAE (kcal/mol) |
|---|---|---|---|
| 🥇 1 | ChemProp | Message Passing Neural Network (graph model) | 0.11 |
| 🥈 2 | SchNetFeatures | 3D SchNet + graph features (trained on highest-probability conformer) | 0.113 |
| 🥉 3 | FFNN | Feed-forward network on Morgan fingerprints | 0.119 |
| 4 | KRR | Kernel Ridge Regression on Morgan fingerprints | 0.131 |
| 5 | Random Forest | Random Forest on Morgan fingerprints | 0.166 |

Conformer Count Prediction

Predict ln(number of unique conformers) from molecular structure

| Rank | Model | Description | MAE |
|---|---|---|---|
| 🥇 1 | SchNetFeatures | 3D SchNet + graph features (trained on highest-probability conformer) | 0.363 |
| 🥈 2 | ChemProp | Message Passing Neural Network (graph model) | 0.38 |
| 🥉 3 | FFNN | Feed-forward network on Morgan fingerprints | 0.455 |
| 4 | KRR | Kernel Ridge Regression on Morgan fingerprints | 0.484 |
| 5 | Random Forest | Random Forest on Morgan fingerprints | 0.763 |

Related Datasets

| Dataset | Description |
|---|---|
| QM9 | 134k small molecules with up to 9 heavy atoms and DFT properties |
| PCQM4Mv2 | Millions of computationally generated molecules for HOMO-LUMO gap prediction |
| PubChemQC | DFT structures and energy properties for millions of PubChem molecules |

Strengths

- Scale: 37M+ conformations across 450k+ molecules, providing massive coverage of drug-like and small molecule chemical space.

- Energy Annotations: All conformations include semi-empirical energies (GFN2-xTB); the BACE subset includes high-quality DFT energies.

- Quality Tiers: Three levels of computational rigor allow researchers to trade off dataset size for simulation accuracy.

- Benchmark Ready: Includes validated splits and architectural baselines (e.g., ChemProp, SchNet) for property prediction tasks.

- Task Diversity: Combines molecules sourced from drug discovery (AICures), quantum chemistry (QM9), and physical chemistry, biophysics, and physiology benchmarks (MoleculeNet).

Limitations

- Computational Constraints: DFT refinement is limited to the BACE subset (1,511 molecules with single-point energies, of which 534 have full CENSO optimization and free energies) because of the high computational cost of DFT free energy calculations, including Hessian estimation.

- Semi-Empirical Accuracy Gap: The $p^{\text{CREST}}$ statistical weights rely on GFN2-xTB energies, which show a $\sim$2 kcal/mol MAE against DFT. This error is several times larger than the thermal energy at room temperature ($k_BT \approx 0.59$ kcal/mol), so it can strongly skew the Boltzmann distribution, and the weights in the standard (non-DFT) subsets should be treated as approximate.

- Solvation Assumptions: Most subsets rely on vacuum calculations. Only the BACE subset uses implicit water solvation (ALPB in the CREST/GFN2-xTB stage, C-PCM in the DFT stage).

- Coverage Lapses: Extremely flexible molecules (e.g., within the SIDER dataset) frequently failed the conformer generation pipeline due to runaway topologies.
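To make the accuracy-gap limitation concrete, consider the population ratio of two conformers $a$ and $b$, which depends exponentially on their energy difference. The numbers below are an illustrative back-of-the-envelope estimate, assuming the full $\sim$2 kcal/mol error lands on a single energy gap; real errors vary by molecule and partially cancel.

$$ \frac{p_a}{p_b} = \exp\left(-\frac{E_a - E_b}{k_B T}\right), \qquad \exp\left(\frac{2\ \text{kcal/mol}}{0.59\ \text{kcal/mol}}\right) \approx 30 $$

Under that assumption, a 2 kcal/mol error in one energy gap changes the corresponding population ratio by roughly a factor of 30.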

Technical Notes

Data Generation Pipeline

Initial conformer sampling (RDKit):

- `EmbedMultipleConfs` with `numConfs=50`, `pruneRmsThresh=0.01` Å

- MMFF force field optimization

- GFN2-xTB optimization of seed conformer
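The RDKit seeding step can be sketched as follows. This is a minimal illustration, not the authors' pipeline script: the example SMILES string, the random seed, the helper name `seed_conformers`, and the choice of the lowest-MMFF-energy conformer as the seed are assumptions. Only `numConfs=50` and `pruneRmsThresh=0.01` come from the settings listed above. The subsequent GFN2-xTB optimization runs in xTB and is not shown.

```python
from rdkit import Chem
from rdkit.Chem import AllChem


def seed_conformers(smiles, num_confs=50, prune_rms=0.01, seed=0):
    """Embed conformers with RDKit and relax each one with MMFF."""
    # Explicit hydrogens are needed for sensible 3D embedding.
    mol = Chem.AddHs(Chem.MolFromSmiles(smiles))
    conf_ids = list(
        AllChem.EmbedMultipleConfs(
            mol, numConfs=num_confs, pruneRmsThresh=prune_rms, randomSeed=seed
        )
    )
    # One (not_converged_flag, energy) pair is returned per conformer.
    results = AllChem.MMFFOptimizeMoleculeConfs(mol)
    energies = [energy for _, energy in results]
    return mol, conf_ids, energies


# Hypothetical example molecule (1-butanol).
mol, conf_ids, energies = seed_conformers("CCCCO")
# Assumption: the lowest-MMFF-energy conformer is used as the seed.
best = min(range(len(energies)), key=energies.__getitem__)
print(f"{len(conf_ids)} conformers; seed conformer id {conf_ids[best]}")
```

With such a tight pruning threshold (0.01 Å), few embedded conformers are discarded as duplicates, so the result is usually close to the requested 50 for a flexible molecule.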

Conformational exploration (CREST):

- Metadynamics in NVT ensemble driven by a pushing bias potential: $$ V_{\text{bias}} = \sum_i k_i \exp(-\alpha_i \Delta_i^2) $$ where $\Delta_i$ is the root-mean-square displacement (RMSD) against the $i$-th reference structure.

- 12 independent MTD runs per molecule with different settings for $k_i$ and $\alpha_i$.

- 6.0 kcal/mol safety window for conformer retention.

- Solvent: ALPB for water (BACE); vacuum for others.
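The pushing bias in the metadynamics step can be evaluated with a few lines of code. The sketch below only implements the formula for $V_{\text{bias}}$ given above; the function name and the numerical values of $k_i$, $\alpha_i$, and the RMSDs are hypothetical and are not CREST defaults.

```python
import math


def bias_potential(rmsds, k, alpha):
    """V_bias = sum_i k_i * exp(-alpha_i * Delta_i**2).

    rmsds[i] is the RMSD of the current structure to reference structure i.
    """
    return sum(
        k_i * math.exp(-a_i * d_i ** 2) for d_i, k_i, a_i in zip(rmsds, k, alpha)
    )


# Hypothetical settings: two reference structures with equal strength and width.
k = [0.05, 0.05]    # pushing strengths (arbitrary energy units)
alpha = [1.0, 1.0]  # Gaussian width parameters (1/Å^2)

near = bias_potential([0.1, 0.2], k, alpha)  # close to both references
far = bias_potential([2.0, 2.5], k, alpha)   # far from both references
print(near > far)
```

The penalty is largest near previously visited structures and decays with RMSD, which is what pushes the simulation toward unexplored conformations.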

Energy calculation & Weighting:

Standard (GFN2-xTB): Semi-empirical tight-binding method ($\approx$ 2 kcal/mol MAE vs DFT). Conformers are assigned a statistical probability based on energy $E_i$ and rotamer degeneracy $d_i$: $$ p^{\text{CREST}}_i = \frac{d_i \exp(-E_i / k_B T)}{\sum_j d_j \exp(-E_j / k_B T)} $$

High-Quality DFT (CENSO): Refines structures using the `r2scan-3c` functional and computes conformation-dependent free energies ($G_i$) explicitly, which removes the need for rotamer degeneracy approximations: $$ \begin{aligned} p^{\text{CENSO}}_i &= \frac{\exp(-G_i / k_B T)}{\sum_j \exp(-G_j / k_B T)} \\ G_i &= E_{\text{gas}}^{(i)} + \delta G_{\text{solv}}^{(i)}(T) + G_{\text{trv}}^{(i)}(T) \end{aligned} $$

Quality Levels

| Level | Method | Subset | Accuracy |
|---|---|---|---|
| Standard | CREST/GFN2-xTB | All subsets | ~2 kcal/mol MAE vs DFT |
| DFT Single-Point | r2scan-3c/mTZVPP on CREST geometries | BACE (1,511 molecules) | Sub-kcal/mol |
| DFT Optimized | CENSO full optimization + free energies | BACE (534 molecules) | ~0.3 kcal/mol vs CCSD(T) |

Benchmark Setup

Task: Predict ensemble summary statistics directly from the 2D molecular structure. The target properties include:

- Conformational Free Energy ($G$): $G = -TS$, where $S = -R \sum_i p_i \log p_i$.

- Average Energy ($\langle E \rangle$): $\langle E \rangle = \sum_i p_i E_i$.

- Unique Conformers: Natural log of the conformer count retained within the energy window.
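The three targets above can be computed from one conformer ensemble as sketched below. This is an illustration under stated assumptions: the function name and input values are hypothetical, $\log$ is taken as the natural logarithm, $R$ is in kcal/(mol·K), and $T = 298.15$ K.

```python
import math

R_KCAL = 0.0019872041  # gas constant in kcal/(mol*K)


def ensemble_statistics(probs, energies, temperature=298.15):
    """Return G = -T*S, <E> = sum_i p_i E_i, and ln(conformer count)."""
    # S = -R * sum_i p_i ln p_i; zero-probability terms contribute nothing.
    entropy = -R_KCAL * sum(p * math.log(p) for p in probs if p > 0)
    return {
        "G": -temperature * entropy,
        "avg_E": sum(p * e for p, e in zip(probs, energies)),
        "ln_count": math.log(len(probs)),
    }


# Hypothetical ensemble: three conformers with weights and relative energies.
stats = ensemble_statistics([0.5, 0.3, 0.2], [0.0, 0.4, 0.9])
print(stats)
```

Because $S \ge 0$, the conformational free energy defined this way is never positive, and it is zero for a molecule with a single conformer.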

Data: 100,000 species randomly sampled from AICures subset, split 60/20/20 (train/validation/test).
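A random 60/20/20 split of this kind can be sketched as follows. The seed and the helper name are hypothetical, and this is not the authors' split: reproducing the published numbers requires the split files distributed with the benchmark code.

```python
import random


def split_indices(n, fractions=(0.6, 0.2, 0.2), seed=0):
    """Shuffle indices 0..n-1 and cut them into train/validation/test."""
    idx = list(range(n))
    random.Random(seed).shuffle(idx)  # seeded for repeatability
    n_train = round(fractions[0] * n)
    n_val = round(fractions[1] * n)
    # The test set takes whatever remains, so the three parts cover all indices.
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]


train_idx, val_idx, test_idx = split_indices(100_000)
print(len(train_idx), len(val_idx), len(test_idx))
```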

Hyperparameters: Optimized using Hyperopt package for each model/task combination.

Models:

- SchNetFeatures: 3D SchNet architecture + graph features, trained on highest-probability conformer

- ChemProp: Message Passing Neural Network on molecular graphs

- FFNN: Feed-forward network on Morgan fingerprints

- KRR: Kernel Ridge Regression on Morgan fingerprints

- Random Forest: Random Forest on Morgan fingerprints

Hardware & Computational Cost

CREST/GFN2-xTB Generation

Total compute: ~15.7 million core hours

AICures subset:

- 13M core hours on Knights Landing (32-core nodes)

- 1.2M core hours on Cascade Lake/Sky Lake (13-core nodes)

- Average wall time: 2.8 hours/molecule (KNL) or 0.63 hours/molecule (Sky Lake)

MoleculeNet subset: 1.5M core hours

DFT Calculations (BACE only)

Software: CENSO 1.1.2 + ORCA 5.0.1 (r2scan-3c/mTZVPP functional)

Solvent: C-PCM implicit solvation (water)

Hardware: ~54 cores per job

Compute cost:

- 781,000 CPU hours for CENSO optimizations

- 1.1M CPU hours for single-point energy calculations

Reproducibility Details

- Data Availability: All generated conformations, energies, and thermodynamic properties are publicly hosted on Harvard Dataverse. The data is provided in language-agnostic MessagePack format and Python-specific RDKit `.pkl` formats.

- Code & Analysis: The primary GitHub repository (learningmatter-mit/geom) provides tutorials for data extraction, RDKit processing, and conformational visualization.

- Model Training & Baselines: The machine learning benchmarks (SchNet, ChemProp) and corresponding training scripts used to evaluate the dataset can be reproduced using the authors’ NeuralForceField repository.

- Hardware & Compute: Extreme compute was required (15.7M core hours for CREST sampling alone), heavily utilizing Knights Landing (KNL) and Cascade Lake architectures. See Hardware & Computational Cost section above for full details.

- Software Versions: Precise reproduction of conformational properties requires specific versions to mitigate numerical variances: CREST v2.9, xTB v6.2.3/v6.4.1, CENSO v1.1.2, ORCA v5.0.1/v5.0.2, and RDKit v2020.09.1.

- Open-Access Paper: The full methodology is accessible via the arXiv preprint.

Citation

@article{Axelrod_2022,

title={GEOM, energy-annotated molecular conformations for property prediction and molecular generation},

volume={9},

ISSN={2052-4463},

url={http://dx.doi.org/10.1038/s41597-022-01288-4},

DOI={10.1038/s41597-022-01288-4},

number={1},

journal={Scientific Data},

publisher={Springer Science and Business Media LLC},

author={Axelrod, Simon and Gómez-Bombarelli, Rafael},

year={2022},

month={apr}

}