This section collects work that surveys, reviews, or systematizes the chemical language model (CLM) landscape rather than proposing new architectures.

| Year | Paper | Venue | Focus |

|---|---|---|---|

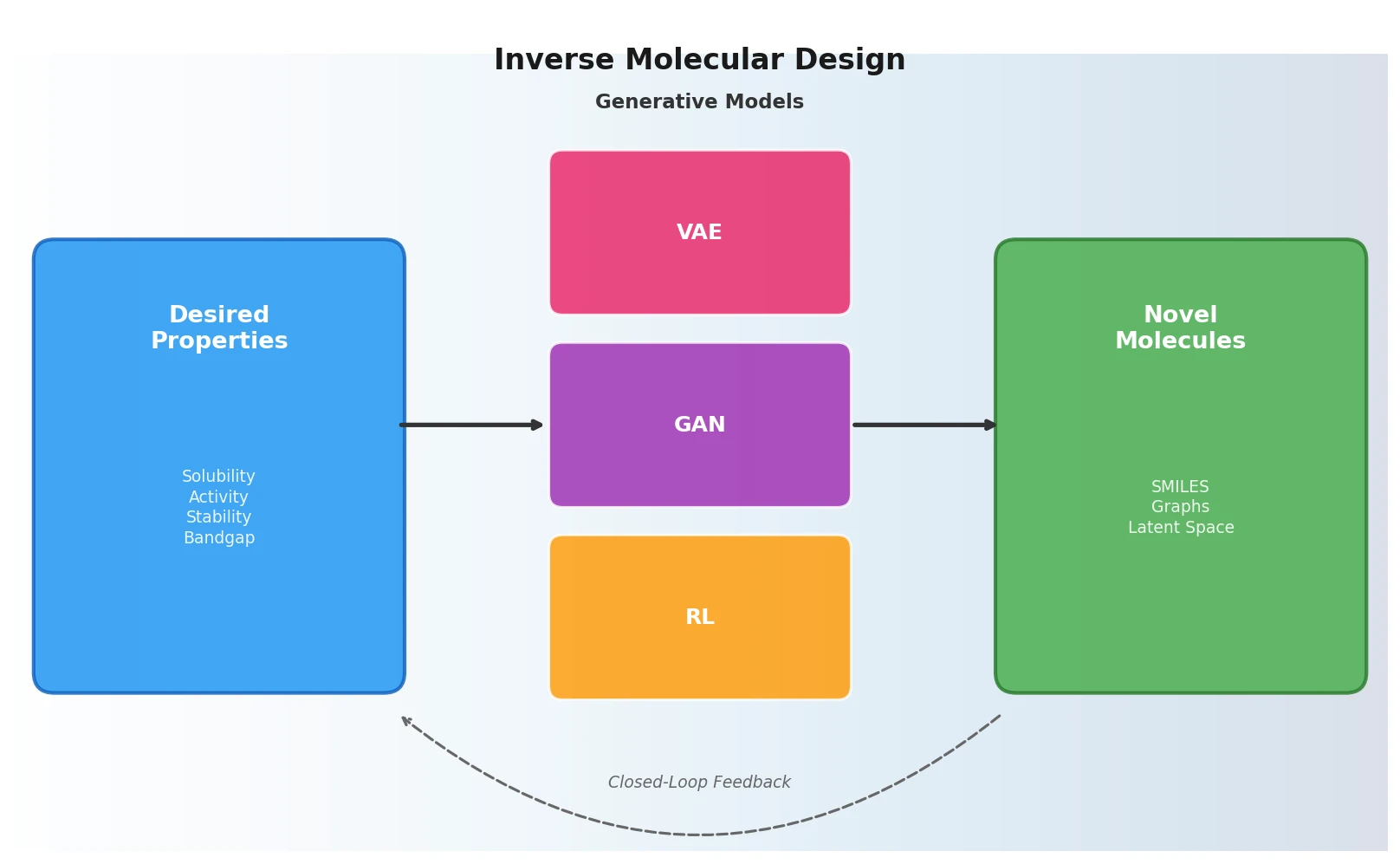

| 2018 | Sánchez-Lengeling & Aspuru-Guzik | Science | VAE/GAN/RL framework for inverse molecular design |

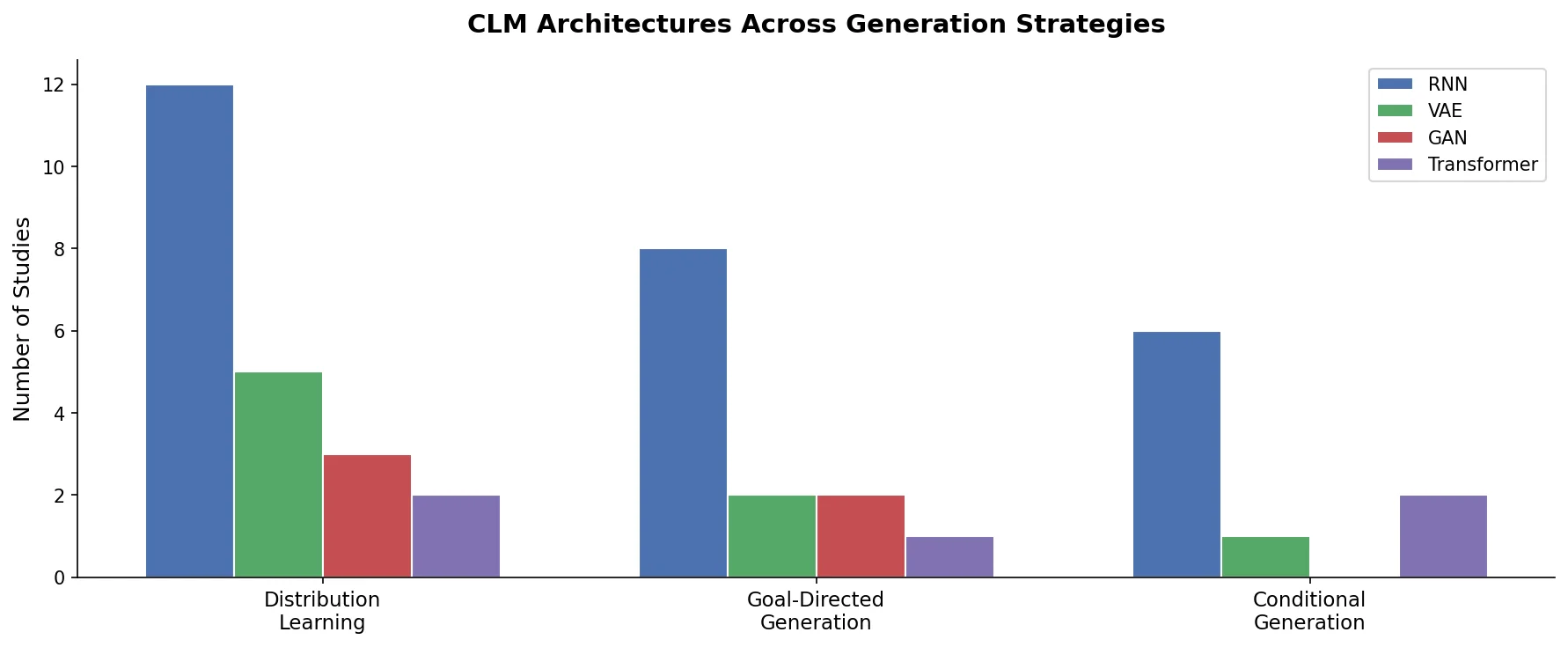

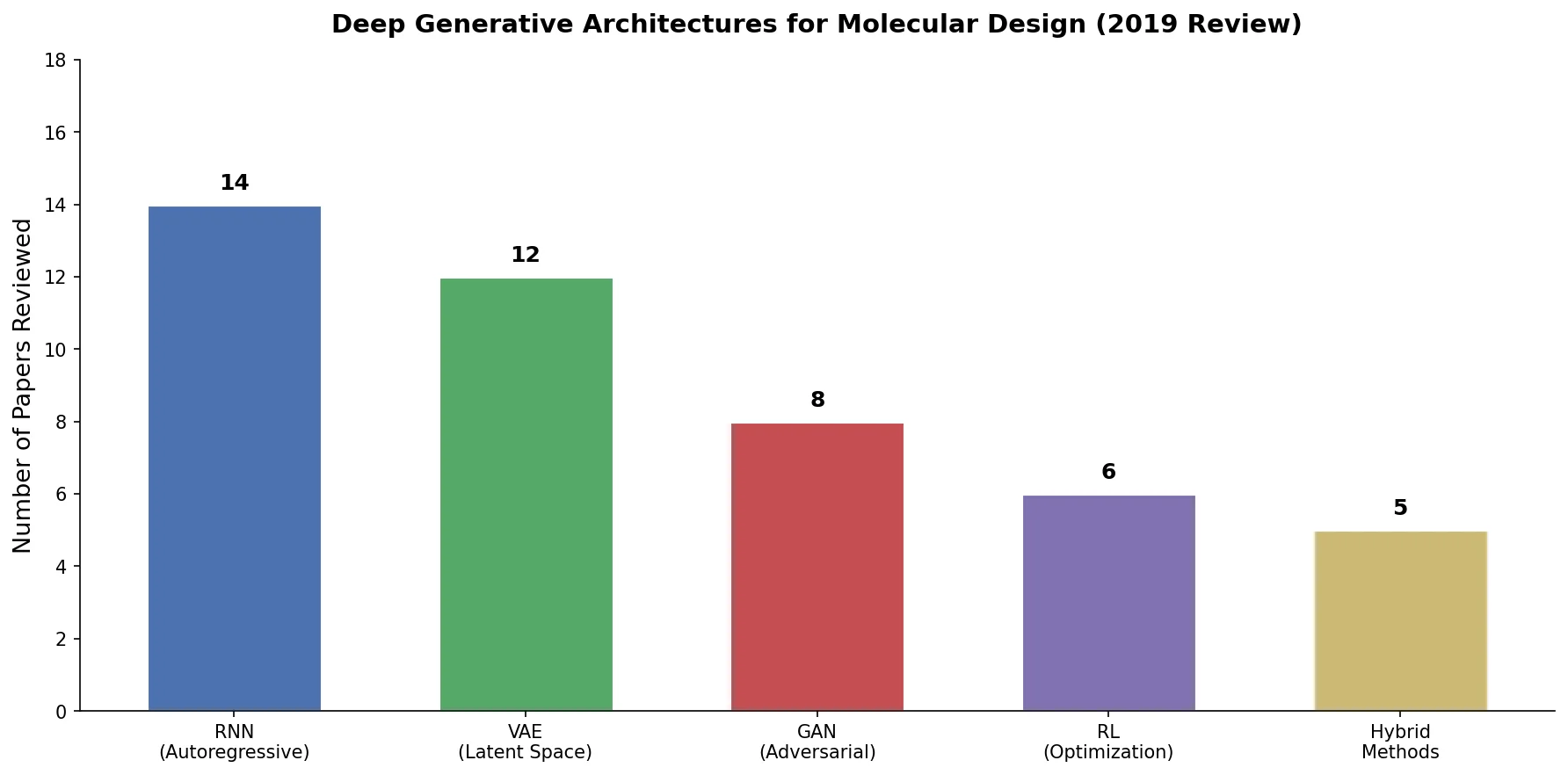

| 2019 | Elton et al. | Mol. Sys. Des. Eng. | 45 papers across RNN, VAE, GAN, RL architectures |

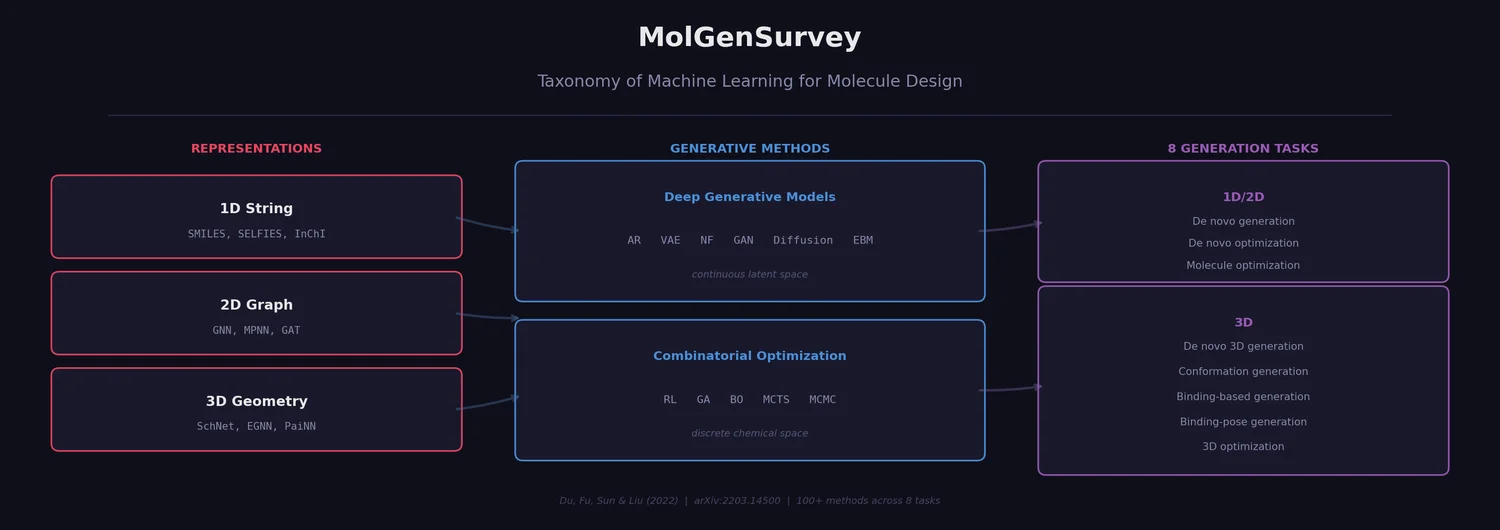

| 2022 | Du et al. (MolGenSurvey) | arXiv | 100+ methods across 1D/2D/3D representations |

| 2023 | Grisoni | Curr. Opin. Struct. Biol. | CLM generation strategies and experimental validations |

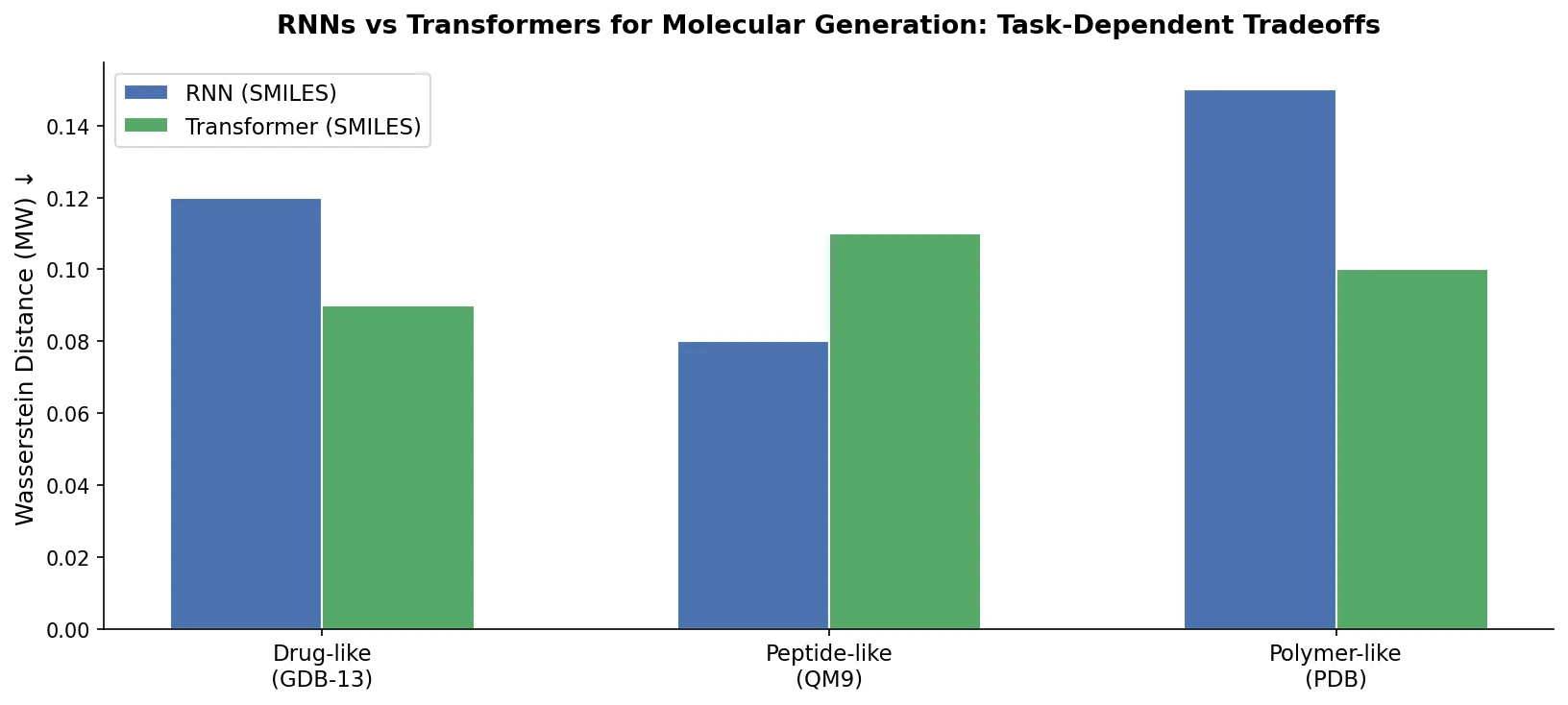

| 2023 | Chen et al. | Brief. Funct. Genom. | RNNs vs transformers empirical comparison |

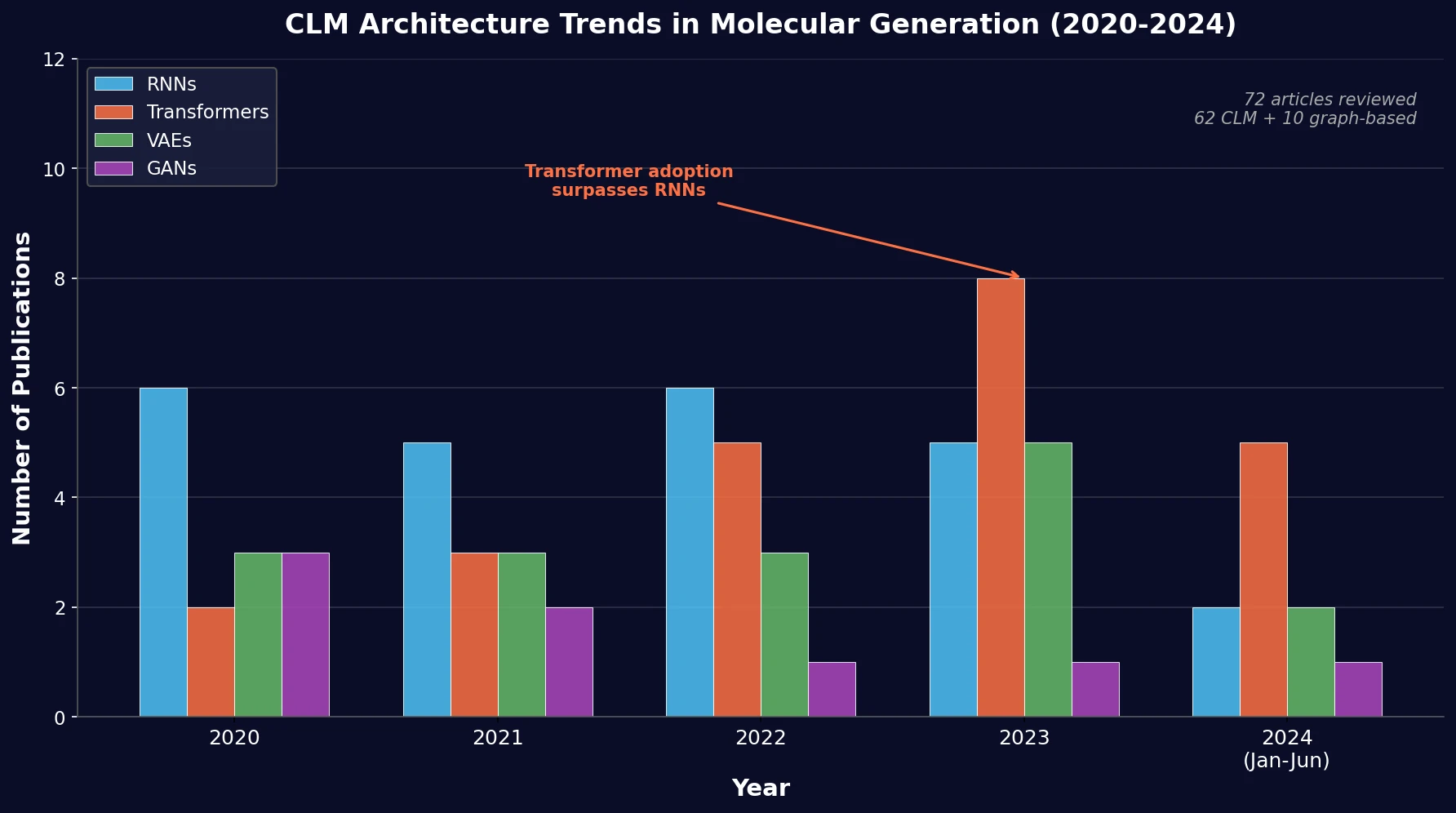

| 2024 | Flores-Hernandez & Martínez-Ledesma | J. Cheminform. | PRISMA review of 72 CLM papers via MOSES/GuacaMol |

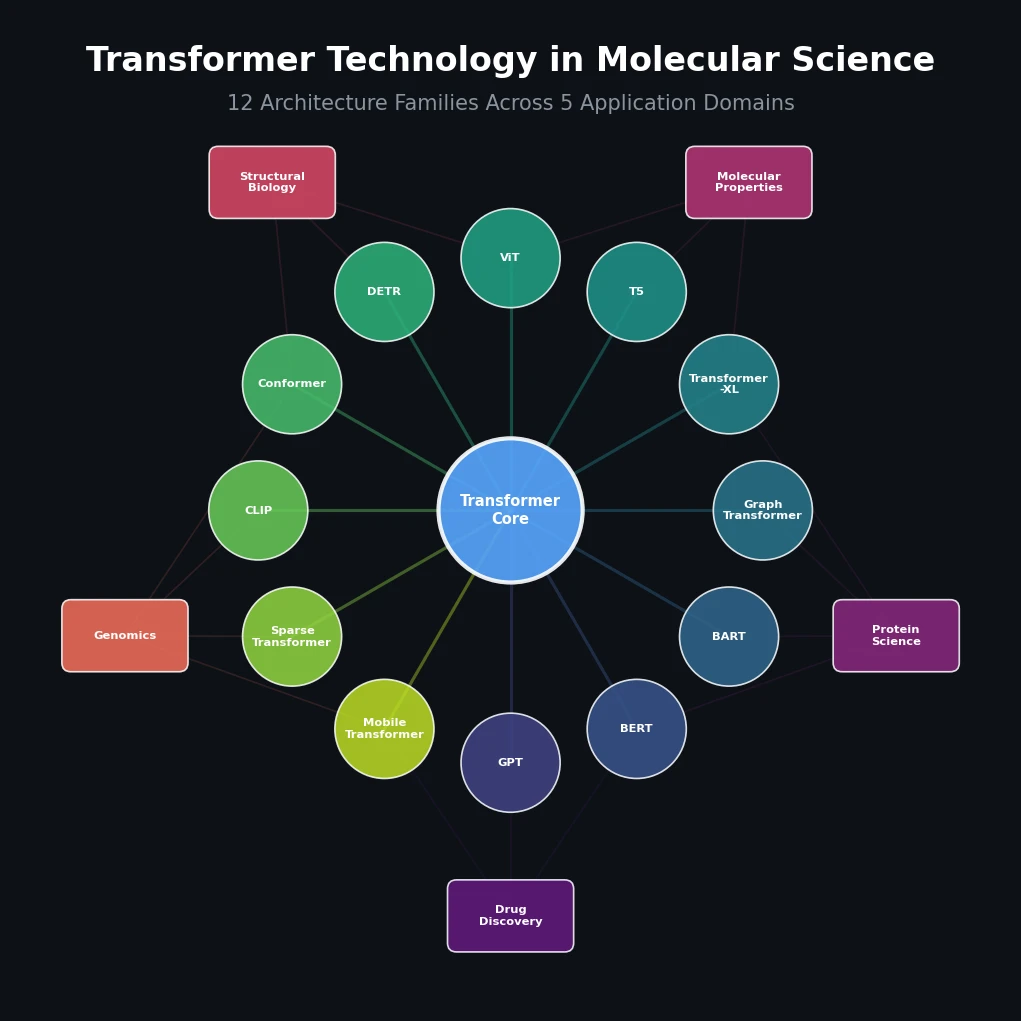

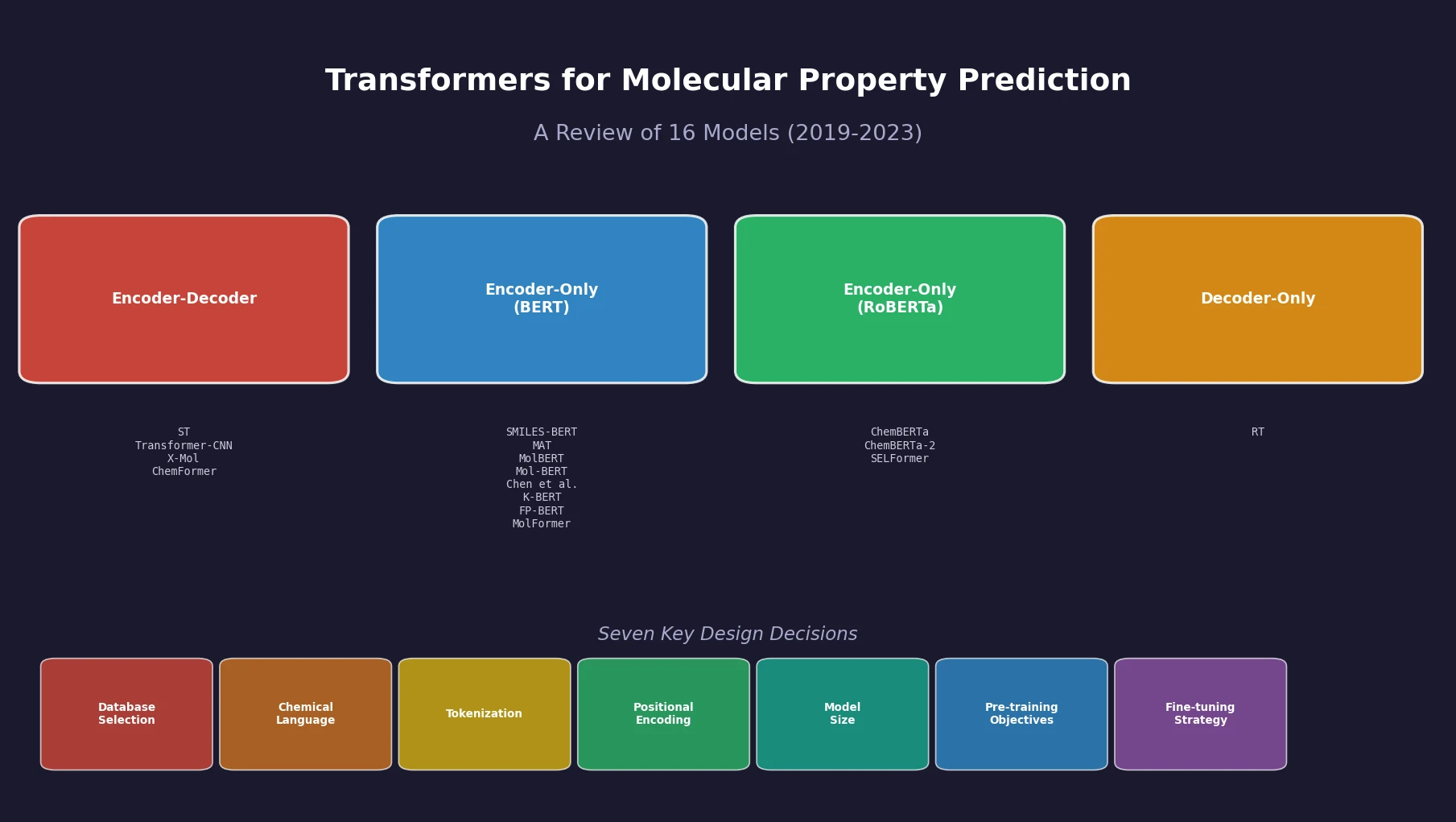

| 2024 | Sultan et al. | J. Chem. Inf. Model. | 16 transformer models, seven design decisions |

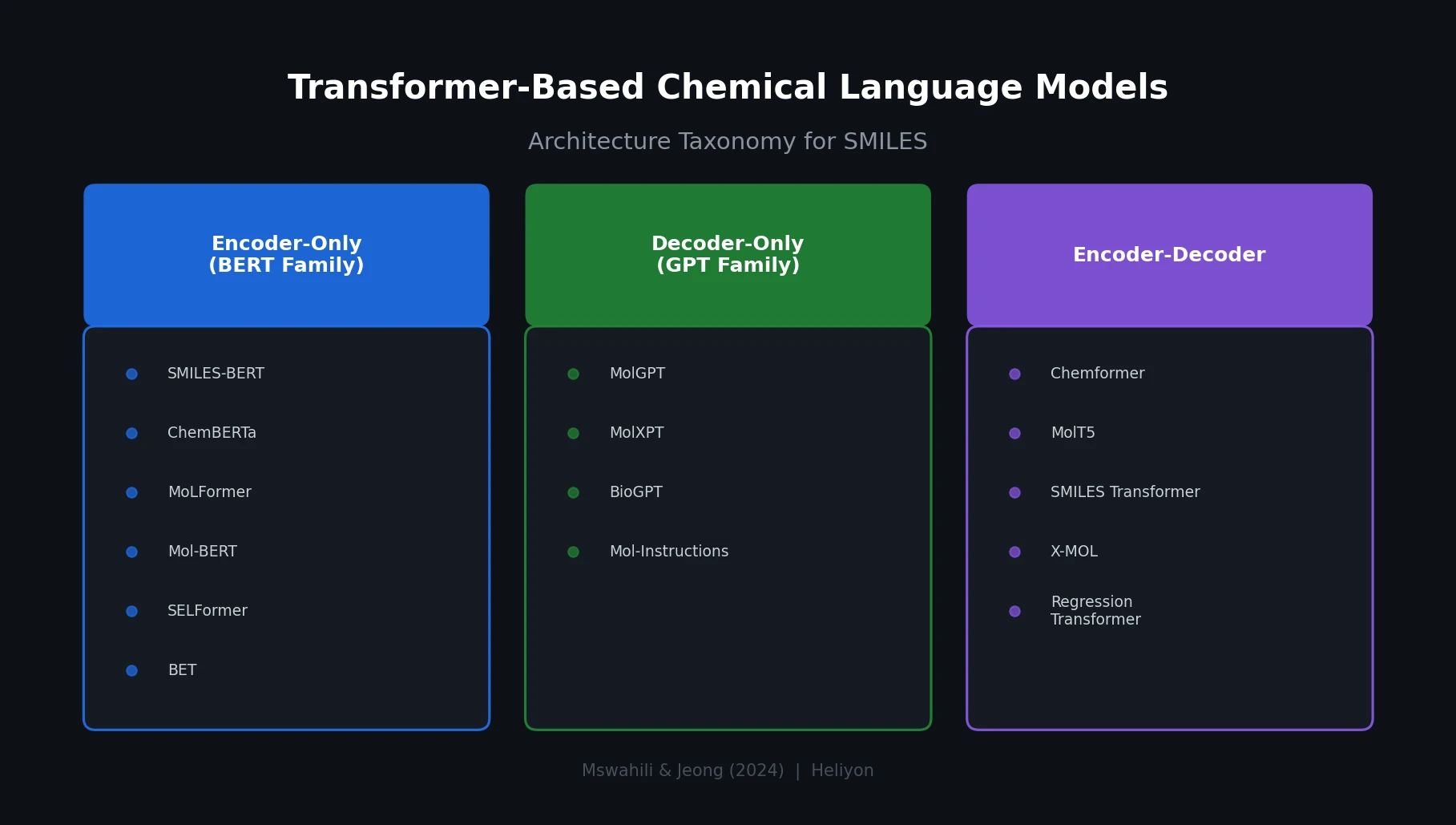

| 2024 | Mswahili & Jeong | Heliyon | ~30 CLMs by encoder/decoder architecture |

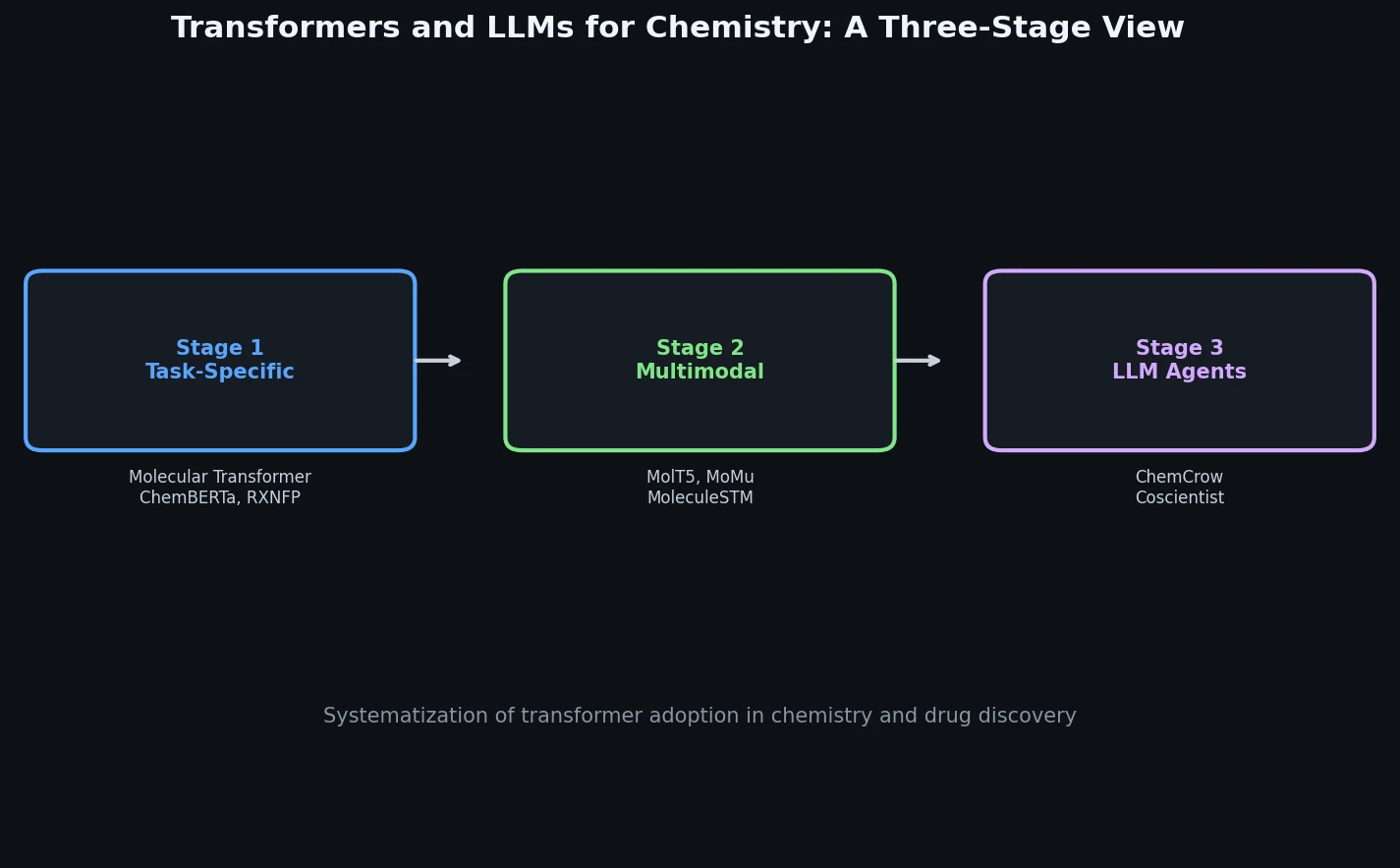

| 2024 | Bran & Schwaller | Drug Dev. Informatics | Task-specific to multimodal to LLM agents |

| 2024 | Atz et al. | Transformers across molecular science | |

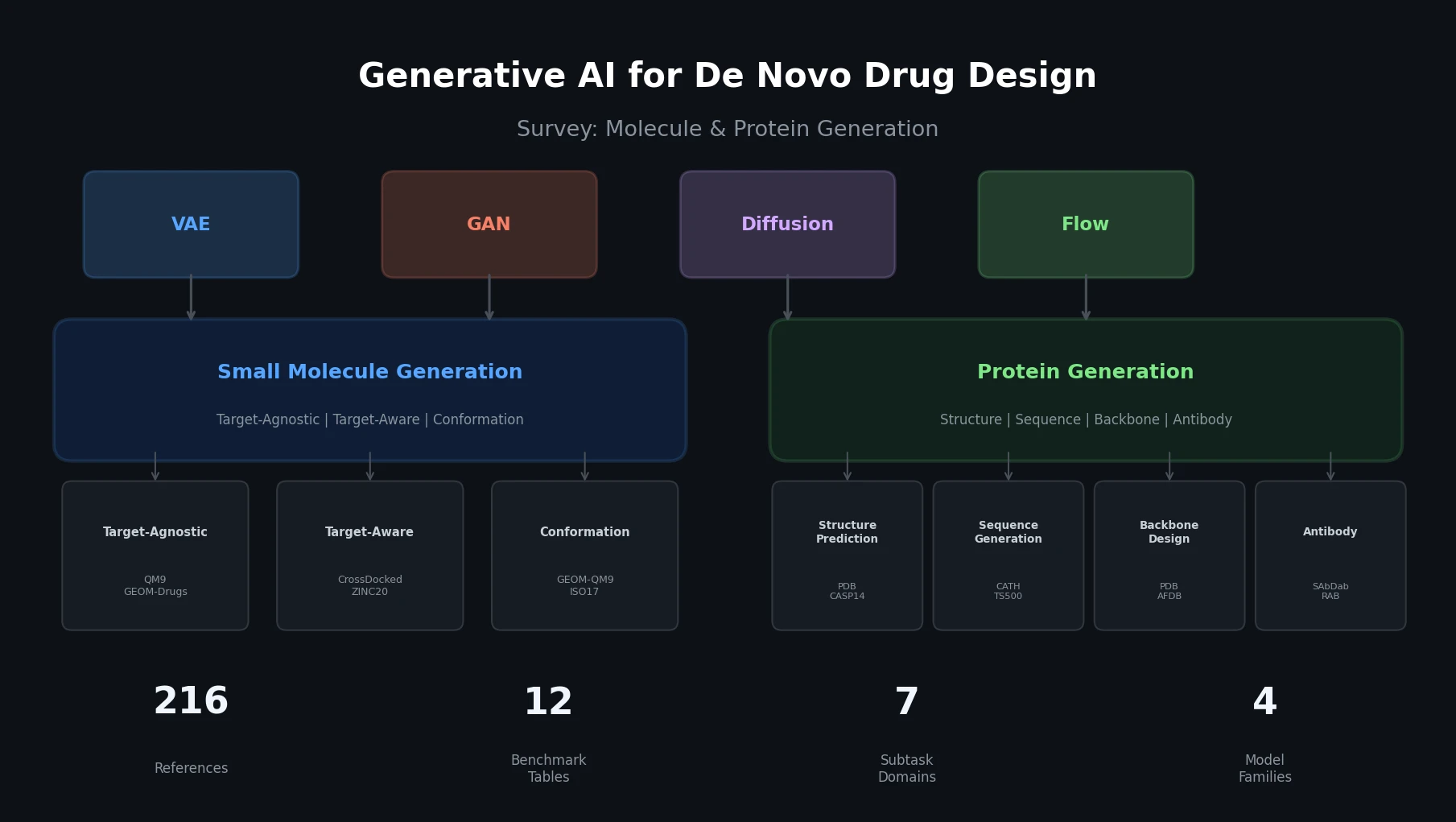

| 2024 | Tang et al. | Brief. Bioinform. | Molecule and protein generation, 12 benchmark tables |

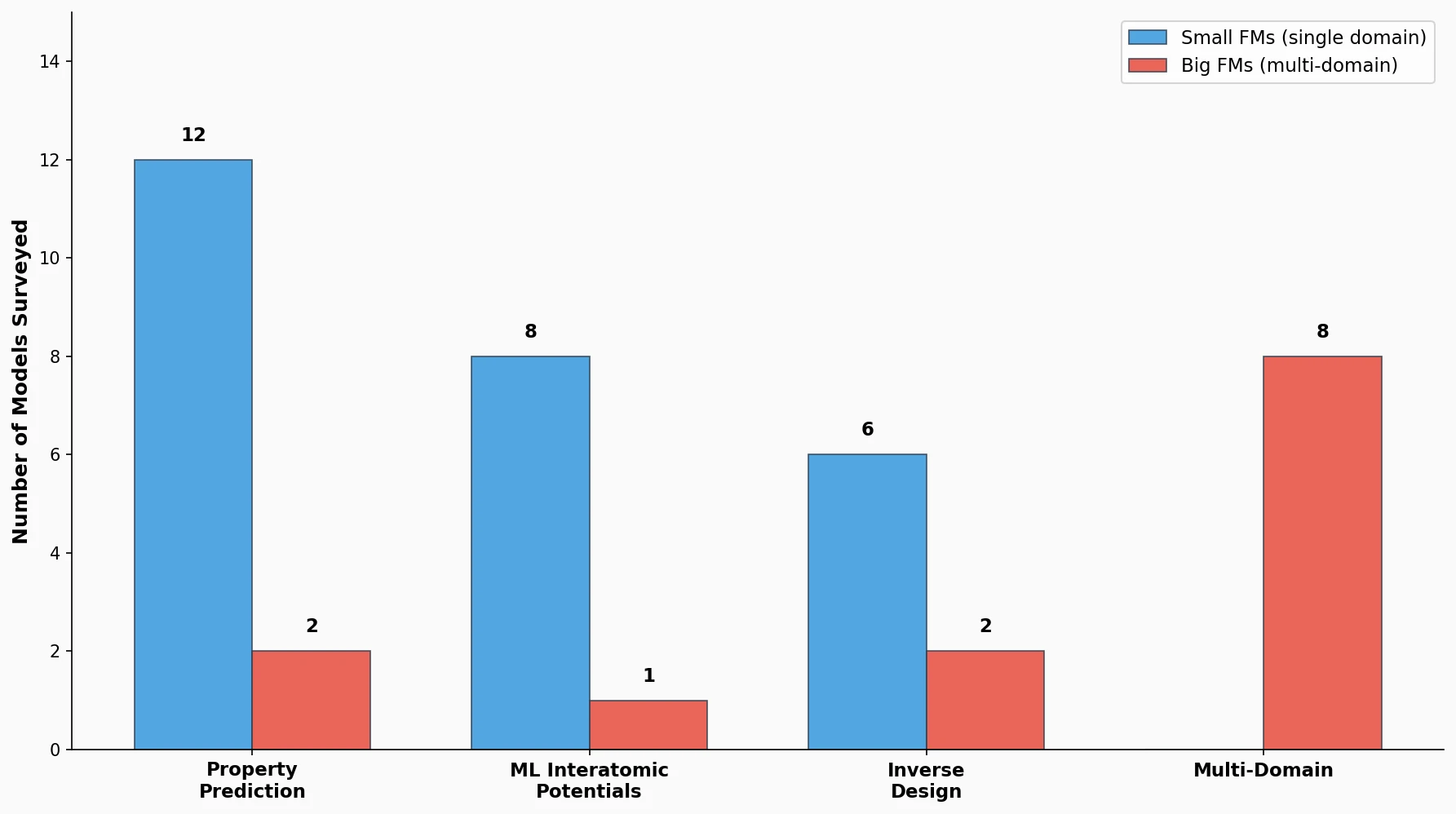

| 2025 | Choi et al. | JACS Au | Small vs big foundation models for chemistry |