A Two-Stage Pre-trained Transformer for Chemical Reactions

This method paper proposes ReactionT5, a T5-based pre-trained model for chemical reaction tasks, specifically product prediction and yield prediction. The primary contribution is a two-stage pretraining pipeline: first on a compound library (ZINC, 23M molecules) to learn molecular representations, then on a large-scale reaction database (the Open Reaction Database, 1.5M reactions) to learn reaction-level patterns. The key result is that the pre-trained model can be fine-tuned with very limited target-domain data (as few as 30 reactions) and still perform competitively against models trained on full datasets.

Bridging the Gap Between Single-Molecule and Multi-Molecule Pretraining

While transformer-based models pre-trained on compound libraries (e.g., SMILES-BERT, MolGPT) have seen substantial development, most focus on single-molecule inputs and outputs. Pretraining for multi-molecule contexts, such as chemical reactions involving reactants, reagents, catalysts, and products, remains underexplored. T5Chem supports multi-task reaction prediction but focuses on building a single multi-task model rather than investigating the effectiveness of pre-trained models for fine-tuning on limited in-house data.

The authors identify two key gaps:

- Most pre-trained chemical models do not account for reaction-level interactions between multiple molecules.

- In practical settings, target-domain reaction data is often scarce, making transfer learning from large public datasets essential.

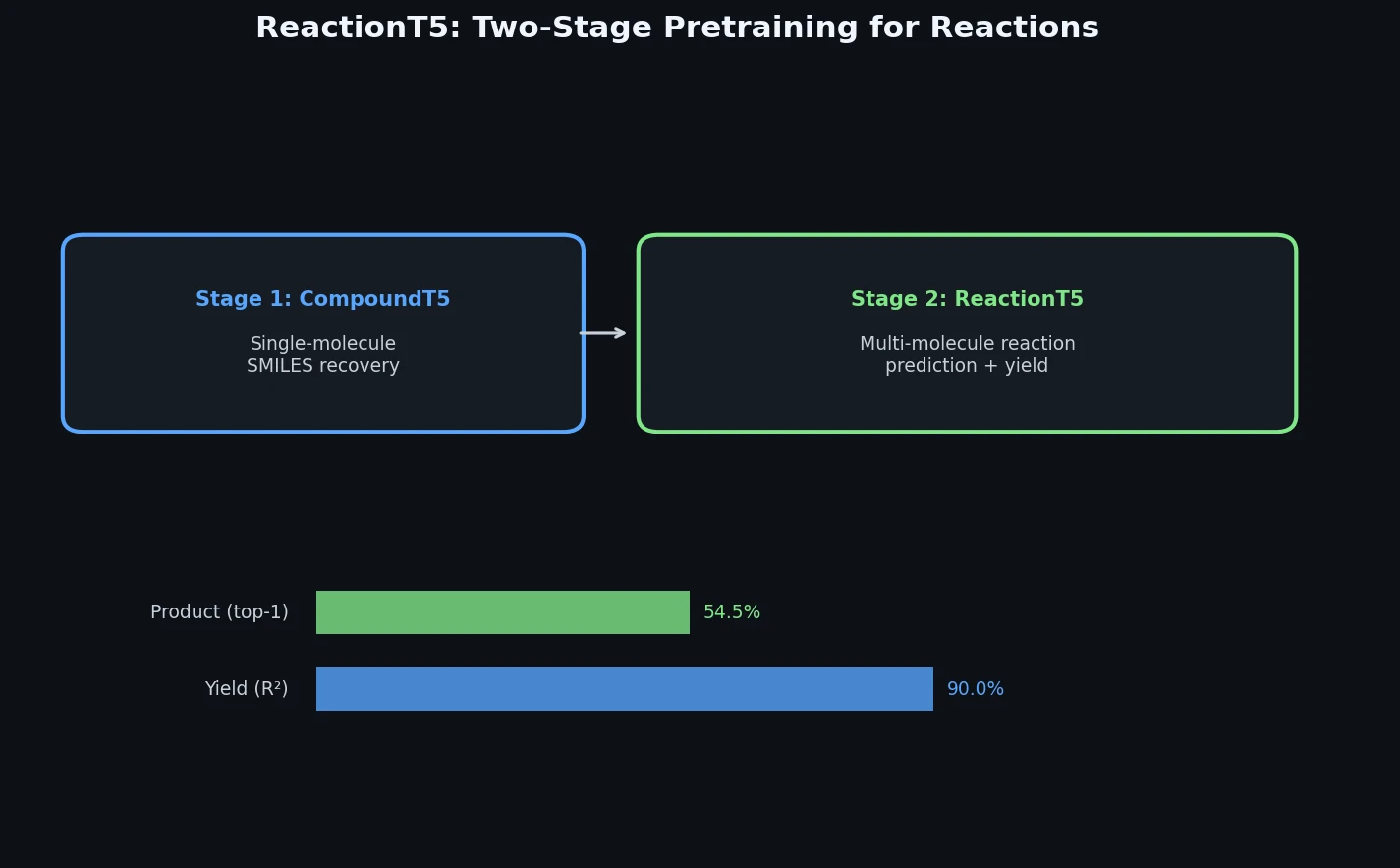

Two-Stage Pretraining with Compound Restoration

The core innovation is a two-stage pretraining procedure built on the T5 (text-to-text transfer transformer) architecture:

Stage 1: Compound Pretraining (CompoundT5). An initialized T5 model is trained on 23M SMILES from the ZINC database using span-masked language modeling. The model learns to predict masked subsequences of SMILES tokens. A SentencePiece unigram tokenizer is trained on this compound library, allowing more compact representations than character-level or atom-level tokenizers. After this stage, new tokens are added to the tokenizer to cover metal atoms and other characters present in the reaction database but absent from ZINC.
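The span-masking objective can be illustrated with a minimal sketch. It uses character-level tokens and fixed-length spans for readability, whereas the paper uses a SentencePiece unigram tokenizer; the function name `span_corrupt`, the `<extra_id_N>` sentinel naming (borrowed from the common T5 convention), and the sampling scheme are assumptions for illustration, not the authors' implementation.

```python
import random

def span_corrupt(tokens, mask_rate=0.15, span_len=3, seed=0):
    """Simplified T5-style span corruption (illustrative sketch only)."""
    rng = random.Random(seed)
    n_to_mask = max(1, round(len(tokens) * mask_rate))
    masked = set()
    attempts = 0
    # Pick span starts until about mask_rate of the tokens are covered.
    while len(masked) < n_to_mask and attempts < 1000:
        attempts += 1
        start = rng.randrange(len(tokens))
        length = min(span_len, n_to_mask - len(masked))
        masked.update(range(start, min(start + length, len(tokens))))

    inputs, targets = [], []
    sentinel = 0
    i = 0
    while i < len(tokens):
        if i in masked:
            # One sentinel replaces the whole contiguous masked run.
            tag = f"<extra_id_{sentinel}>"
            inputs.append(tag)
            targets.append(tag)
            while i < len(tokens) and i in masked:
                targets.append(tokens[i])
                i += 1
            sentinel += 1
        else:
            inputs.append(tokens[i])
            i += 1
    return inputs, targets

# Aspirin SMILES, split into characters for illustration.
tokens = list("CC(=O)Oc1ccccc1C(=O)O")
corrupted, target = span_corrupt(tokens)
print("".join(corrupted))
print("".join(target))
```

The model sees the corrupted sequence as input and is trained to emit the target sequence, so each sentinel in the output is followed by the tokens it replaced.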

Stage 2: Reaction Pretraining (ReactionT5). CompoundT5 is further pretrained on 1.5M reactions from the Open Reaction Database (ORD) on both product prediction and yield prediction tasks. Reactions are formulated as text-to-text tasks using special tokens:

- `REACTANT:`, `REAGENT:`, and `PRODUCT:` tokens delimit the role of each molecule in the reaction string.

- For product prediction, the model takes reactants and reagents as input and generates product SMILES.

- For yield prediction, the model takes the full reaction (including products) and outputs a numerical yield value.
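The text-to-text formulation can be sketched as a small string builder. The role tokens come from the description above; the helper name `format_reaction`, the use of `.` to join molecules sharing a role, and the absence of separators between role blocks are assumptions made for illustration.

```python
def format_reaction(reactants, reagents, products=None):
    """Build a role-tagged reaction string (hypothetical layout)."""
    parts = [
        "REACTANT:" + ".".join(reactants),
        "REAGENT:" + ".".join(reagents),
    ]
    # Products are part of the input only for yield prediction.
    if products is not None:
        parts.append("PRODUCT:" + ".".join(products))
    return "".join(parts)

# Fischer esterification: ethanol + acetic acid, sulfuric acid as reagent.
reactants = ["CCO", "CC(=O)O"]
reagents = ["OS(=O)(=O)O"]

product_input = format_reaction(reactants, reagents)
yield_input = format_reaction(reactants, reagents, products=["CCOC(C)=O"])
print(product_input)
print(yield_input)
```

For product prediction the target would be the product SMILES; for yield prediction the target is the numeric yield.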

Compound Restoration. A notable methodological detail is the handling of uncategorized compounds in the ORD. About 31.8% of ORD reactions contain compounds with unknown roles. Simply discarding these reactions introduces severe product bias (only 447 unique products remain vs. 439,898 with uncategorized data included). The authors develop RestorationT5, a binary classifier built from CompoundT5, that assigns uncategorized compounds to either reactant or reagent roles. This classifier uses a sigmoid output layer and achieves an F1 score of 0.1564 at a threshold of 0.97, outperforming a random forest baseline (F1 = 0.1136). The restored dataset (“ORD(restored)”) is then used for reaction pretraining.
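As a sanity check on the reported classifier metrics, F1 is the harmonic mean of precision and recall. The sketch below applies that definition to the precision (0.0878) and recall (0.7212) listed for RestorationT5 under Limitations; the `assign_role` helper and its reading of the sigmoid output as a reactant probability are assumptions, since the summary does not say which class the output represents.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)

def assign_role(prob_reactant, threshold=0.97):
    """Hypothetical thresholding of a sigmoid output into a role label."""
    return "reactant" if prob_reactant >= threshold else "reagent"

f1 = f1_score(0.0878, 0.7212)
print(f"F1 = {f1:.4f}")  # close to the reported 0.1564 (inputs are rounded)
print(assign_role(0.99), assign_role(0.50))
```

The low F1 follows directly from the low precision: a harmonic mean is dominated by the smaller of its two inputs.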

For yield prediction, the loss function is mean squared error:

$$L = \frac{1}{N} \sum_{i=1}^{N} (y_i - \hat{y}_i)^2$$

where $y_i$ is the true yield (normalized to [0, 1]) and $\hat{y}_i$ is the predicted yield.
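A minimal sketch of the loss in plain Python, combined with the yield normalization listed under Reproducibility Details (clip to [0, 100], then scale to [0, 1]). The function names are illustrative, not taken from the authors' code.

```python
def normalize_yield(yield_percent):
    """Clip a percentage yield to [0, 100] and scale it to [0, 1]."""
    return min(max(yield_percent, 0.0), 100.0) / 100.0

def mse(y_true, y_pred):
    """Mean squared error over paired true and predicted yields."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("inputs must be non-empty and of equal length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

# Raw yields, including out-of-range values that get clipped.
y_true = [normalize_yield(y) for y in (85.0, 120.0, -5.0)]  # 0.85, 1.0, 0.0
y_pred = [0.80, 0.90, 0.10]
print(mse(y_true, y_pred))
```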

Experimental Setup: Product and Yield Prediction Benchmarks

Product Prediction

The USPTO dataset (479K reactions) is used for evaluation, with standard train/val/test splits (409K/30K/40K). Reactions overlapping with the ORD (18%) are removed during evaluation. Beam search with beam size 10 is used for decoding, and minimum/maximum output length constraints are set based on the training data distribution. Top-k accuracy (k = 1, 2, 3, 5) and invalidity rate are reported.
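The two reported metrics can be sketched as follows. This assumes predictions and targets are already canonicalized SMILES strings so that exact string matching is meaningful, and that the invalidity rate is computed over all generated candidates; the summary does not specify either detail. The `balanced_parens` check is a toy stand-in for a real SMILES parser (in practice one would typically test whether RDKit can parse the string).

```python
def top_k_accuracy(beams, targets, k):
    """Percentage of reactions whose target is among the first k candidates."""
    hits = sum(target in candidates[:k]
               for candidates, target in zip(beams, targets))
    return 100.0 * hits / len(targets)

def invalidity_rate(beams, is_valid):
    """Percentage of generated candidates rejected by the validity check."""
    candidates = [s for beam in beams for s in beam]
    invalid = sum(not is_valid(s) for s in candidates)
    return 100.0 * invalid / len(candidates)

def balanced_parens(smiles):
    """Toy validity check: only verifies that parentheses are balanced."""
    depth = 0
    for ch in smiles:
        if ch == "(":
            depth += 1
        elif ch == ")":
            depth -= 1
            if depth < 0:
                return False
    return depth == 0

# Two reactions, two beam candidates each (ranked best first).
beams = [["CCO", "CC"], ["C(C", "CCC"]]
targets = ["CCO", "CCC"]
print(top_k_accuracy(beams, targets, k=1))
print(top_k_accuracy(beams, targets, k=2))
print(invalidity_rate(beams, balanced_parens))
```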

Baselines include Seq-to-seq, WLDN (graph neural network), Molecular Transformer, and T5Chem.

| Model | Train | Top-1 | Top-2 | Top-3 | Top-5 | Invalidity |
|---|---|---|---|---|---|---|
| Seq-to-seq | USPTO | 80.3 | 84.7 | 86.2 | 87.5 | - |
| WLDN | USPTO | 85.6 | 90.5 | 92.8 | 93.4 | - |
| Molecular Transformer | USPTO | 88.8 | 92.6 | - | 94.4 | - |
| T5Chem | USPTO | 90.4 | 94.2 | - | 96.4 | - |
| CompoundT5 | USPTO | 88.0 | 92.4 | 93.9 | 95.0 | 7.5 |
| ReactionT5 (restored ORD) | USPTO200 | 85.5 | 91.7 | 93.5 | 94.9 | 12.0 |

A critical finding: ReactionT5 pre-trained on ORD achieves 0% accuracy on USPTO without fine-tuning due to domain mismatch (ORD includes byproducts; USPTO lists only the main product). Fine-tuning on just 200 USPTO reactions with the restored ORD model produces competitive results.

The few-shot fine-tuning analysis shows rapid performance scaling:

| Samples | Top-1 | Top-2 | Top-3 | Top-5 | Invalidity |
|---|---|---|---|---|---|
| 10 | 9.0 | 12.5 | 15.3 | 19.1 | 12.4 |
| 30 | 80.5 | 87.3 | 89.8 | 92.0 | 17.2 |
| 50 | 83.7 | 89.9 | 92.2 | 94.0 | 14.8 |
| 100 | 85.1 | 91.0 | 92.8 | 94.4 | 14.0 |
| 200 | 85.5 | 91.7 | 93.5 | 94.9 | 12.0 |

Yield Prediction

The Buchwald-Hartwig C-N cross-coupling dataset (3,955 reactions) is used with random 7:3 splits (repeated 10 times) plus four out-of-sample test sets (Tests 1-4) designed so that similar reactions do not appear in both train and test.
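The repeated random split protocol can be sketched with the standard library. The generator name, the seed handling, and rounding the training size down are assumptions; the summary only states the 7:3 ratio and the 10 repetitions.

```python
import random

def repeated_splits(n_items, train_frac=0.7, repeats=10, seed=0):
    """Yield (train_indices, test_indices) for repeated random splits."""
    rng = random.Random(seed)
    n_train = int(n_items * train_frac)  # rounds down (assumption)
    for _ in range(repeats):
        indices = list(range(n_items))
        rng.shuffle(indices)
        yield indices[:n_train], indices[n_train:]

splits = list(repeated_splits(3955))
train_idx, test_idx = splits[0]
print(len(splits), len(train_idx), len(test_idx))
```

Reporting the mean score over the repeats reduces the influence of any single lucky or unlucky split, which matters for a dataset of only a few thousand reactions.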

| Model | Random 7:3 | Test 1 | Test 2 | Test 3 | Test 4 | Avg. Tests 1-4 |
|---|---|---|---|---|---|---|
| DFT | 0.92 | 0.80 | 0.77 | 0.64 | 0.54 | 0.69 |
| MFF | 0.927 | 0.851 | 0.713 | 0.635 | 0.184 | 0.596 |
| Yield-BERT | 0.951 | 0.838 | 0.836 | 0.738 | 0.538 | 0.738 |
| T5Chem | 0.970 | 0.811 | 0.907 | 0.789 | 0.627 | 0.785 |
| CompoundT5 | 0.971 | 0.855 | 0.852 | 0.712 | 0.547 | 0.741 |
| ReactionT5 | 0.966 | 0.914 | 0.940 | 0.819 | 0.896 | 0.892 |
| ReactionT5 (zero-shot) | 0.904 | 0.919 | 0.927 | 0.847 | 0.909 | 0.900 |

ReactionT5 achieves the highest average $R^2$ across Tests 1-4 (0.892), with the zero-shot variant performing even better (0.900). The improvement is most dramatic on Test 4, the hardest split, where ReactionT5 achieves $R^2 = 0.896$ versus T5Chem’s 0.627 and Yield-BERT’s 0.538.
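For reference, the sketch below computes $R^2$ as the coefficient of determination, $1 - SS_{res}/SS_{tot}$, and re-derives the Tests 1-4 averages from the per-test values in the table. Whether the paper uses this definition or the squared Pearson correlation is not stated in this summary, so treat the definition as an assumption.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

# Toy example with normalized yields.
print(r_squared([0.1, 0.5, 0.9], [0.2, 0.5, 0.8]))

# Per-test R^2 values copied from the table above.
fine_tuned = [0.914, 0.940, 0.819, 0.896]
zero_shot = [0.919, 0.927, 0.847, 0.909]
avg_fine_tuned = sum(fine_tuned) / len(fine_tuned)
avg_zero_shot = sum(zero_shot) / len(zero_shot)
print(avg_fine_tuned, avg_zero_shot)  # roughly 0.892 and 0.900
```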

In a low-data regime (30% train / 70% test), ReactionT5 ($R^2 = 0.927$) substantially outperforms a random forest baseline ($R^2 = 0.853$), and even zero-shot ReactionT5 ($R^2 = 0.898$) exceeds the random forest.

Key Findings and Limitations

Key Findings

- Two-stage pretraining is effective: Compound pretraining followed by reaction pretraining produces models with strong generalization, particularly on out-of-distribution test sets.

- Few-shot transfer works: With as few as 30 fine-tuning reactions, ReactionT5 achieves over 80% Top-1 accuracy on product prediction, competitive with models trained on the full USPTO dataset.

- Compound restoration matters: Restoring uncategorized compounds in the ORD is essential for product prediction. Without restoration, fine-tuning on 200 USPTO reactions yields 0% accuracy; with restoration, the same fine-tuning yields 85.5% Top-1.

- Zero-shot yield prediction is surprisingly effective: Without any fine-tuning on the Buchwald-Hartwig data, ReactionT5 achieves an average $R^2 = 0.900$ on the out-of-sample yield tests, higher than every fine-tuned baseline in the comparison.

Limitations

- Product prediction shows a high invalidity rate (12.0% for the best ReactionT5 variant) compared to CompoundT5 (7.5%), suggesting the reaction pretraining may introduce some noise.

- The 0% accuracy without fine-tuning on product prediction reveals a significant domain gap between ORD and USPTO annotation conventions (byproducts vs. main products).

- The RestorationT5 classifier has low precision (0.0878) despite high recall (0.7212), meaning many compounds are incorrectly assigned roles. The paper does not investigate how this impacts downstream performance.

- The paper does not report training times, computational costs, or model sizes, making resource requirements unclear.

- Only two downstream tasks (product prediction on USPTO, yield prediction on Buchwald-Hartwig) are evaluated.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Compound pretraining | ZINC | 22,992,522 compounds | SMILES canonicalized with RDKit |
| Reaction pretraining | ORD (restored) | 1,505,916 reactions | Atom mapping removed, compounds canonicalized |
| Product prediction eval | USPTO | 479,035 reactions | 409K/30K/40K train/val/test split |
| Yield prediction eval | Buchwald-Hartwig C-N | 3,955 reactions | Random 7:3 split (10 repeats) + 4 OOS tests |

Algorithms

- Base architecture: T5 (text-to-text transfer transformer)

- Tokenizer: SentencePiece unigram, trained on ZINC, extended with special reaction tokens

- Compound pretraining: Span-masked language modeling (15% masking rate, average span length 3)

- Beam search: size 10 for product prediction

- Output length constraints: min/max from training data distribution

- Yield normalization: clipped to [0, 100], then scaled to [0, 1]

Models

- CompoundT5: T5 pretrained on ZINC

- RestorationT5: CompoundT5 fine-tuned for binary classification (reactant vs. reagent)

- ReactionT5: CompoundT5 further pretrained on ORD (restored) for product and yield prediction

- Pre-trained weights available on Hugging Face

Evaluation

| Metric | Task | Best Value | Notes |
|---|---|---|---|
| Top-1 accuracy | Product prediction | 85.5% | ReactionT5 with 200 fine-tuning reactions |
| Top-5 accuracy | Product prediction | 94.9% | ReactionT5 with 200 fine-tuning reactions |
| $R^2$ | Yield prediction (random) | 0.966 | ReactionT5 fine-tuned |
| $R^2$ | Yield prediction (OOS avg.) | 0.900 | ReactionT5 zero-shot |

Hardware

Not specified in the paper. Training times and GPU requirements are not reported.

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| ReactionT5v2 (GitHub) | Code | MIT | Official implementation |
| ReactionT5 models (Hugging Face) | Model | MIT | Pre-trained weights |

Paper Information

Citation: Sagawa, T. & Kojima, R. (2023). ReactionT5: a large-scale pre-trained model towards application of limited reaction data. arXiv preprint arXiv:2311.06708.

@article{sagawa2023reactiont5,
  title={ReactionT5: a large-scale pre-trained model towards application of limited reaction data},
  author={Sagawa, Tatsuya and Kojima, Ryosuke},
  journal={arXiv preprint arXiv:2311.06708},
  year={2023},
  doi={10.48550/arxiv.2311.06708}
}