A Multimodal Foundation Model for Structure-Property Comprehension

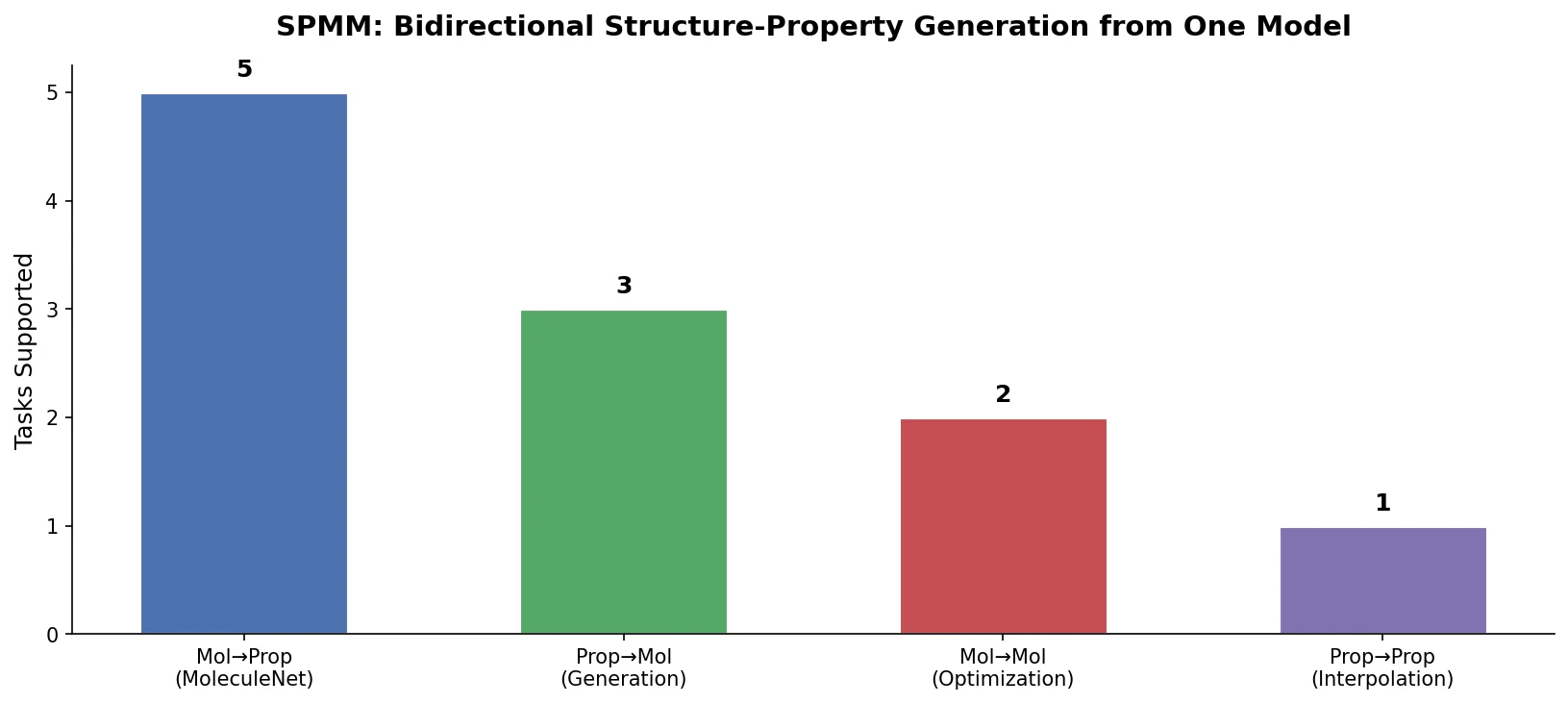

This is a Method paper that introduces the Structure-Property Multi-Modal foundation model (SPMM), a transformer-based architecture that treats SMILES strings and molecular property vectors (PVs) as two separate modalities and learns to align them in a shared embedding space. The primary contribution is enabling bidirectional generation through a single pre-trained model: given a property vector, SPMM can generate molecules (inverse-QSAR), and given a SMILES string, it can predict all 53 properties simultaneously. The model also transfers to unimodal downstream tasks including MoleculeNet benchmarks and reaction prediction.

Bridging the Gap Between Molecular Structure and Properties

Existing chemical pre-trained models typically learn representations from a single modality (SMILES, graphs, or fingerprints) and fine-tune for specific downstream tasks. While some approaches have attempted multimodal learning by combining SMILES with graph representations or InChI strings, these modalities encode nearly identical structural information, limiting the potential for emergent cross-modal knowledge.

The key gap SPMM addresses is the lack of multimodal pre-training that incorporates genuinely complementary modalities. Prior conditional molecule generation methods could typically control only a small number of properties simultaneously and required retraining when target properties changed. The authors draw on successes in vision-language pre-training (VLP), where aligning image and text modalities has enabled rich bidirectional understanding, and apply similar ideas to molecular structure and property domains.

Treating Property Vectors as a Language

The core innovation in SPMM is treating a collection of 53 RDKit-computed molecular properties as a “language” where each property value is analogous to a word token. This design allows the model to attend to individual properties independently rather than treating the entire property vector as a single fixed-length condition.

Dual-Stream Architecture

SPMM follows the dual-stream VLP architecture. The model has three components:

- SMILES Encoder: 6 BERT-base layers that encode tokenized SMILES (using a 300-subword BPE vocabulary) via self-attention

- PV Encoder: 6 BERT-base layers that encode the 53 normalized property values (each passed through a linear layer) with learnable positional embeddings

- Fusion Encoder: 6 BERT-base layers with cross-attention that combines both modalities, using one modality’s features as queries and the other as keys/values
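The cross-attention step in the fusion encoder can be illustrated with a minimal single-head sketch in plain Python. It is not the SPMM implementation: learned query/key/value projections, multiple heads, residual connections, and layer normalization are all omitted, and the toy feature vectors are made up for illustration.

```python
import math

def softmax(xs):
    # Numerically stable softmax over a list of floats.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def cross_attention(queries, keys, values):
    """Single-head scaled dot-product cross-attention.

    queries come from one modality; keys and values come from the other.
    Returns one output vector per query.
    """
    d = len(queries[0])
    dim_v = len(values[0])
    out = []
    for q in queries:
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d) for k in keys]
        weights = softmax(scores)
        out.append([sum(w * v[j] for w, v in zip(weights, values)) for j in range(dim_v)])
    return out

# Toy example (hypothetical features): 3 SMILES tokens attend to 2 property tokens.
smiles_feats = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
pv_feats = [[1.0, 0.0], [0.0, 1.0]]
fused = cross_attention(smiles_feats, pv_feats, pv_feats)
```

Swapping the arguments (`cross_attention(pv_feats, smiles_feats, smiles_feats)`) gives the other direction, where property tokens query the SMILES features.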

Pre-training Objectives

The model is pre-trained with four complementary losses:

Contrastive Learning aligns SMILES and PV features in a shared embedding space. For [CLS] token outputs $\mathbf{S}_{cls}$ and $\mathbf{P}_{cls}$:

$$ \text{sim}(\mathbf{S}, \mathbf{P}) = \left(h_{S}(\mathbf{S}_{cls})\right)^{\top} h_{P}(\mathbf{P}_{cls}) $$

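Here $h_{S}$ and $h_{P}$ are the projection heads applied to the two [CLS] outputs. The similarities are converted to softmax probabilities over $N$ candidates using a learnable temperature $\tau$. For the SMILES-to-PV direction (the $p2s$, $s2s$, and $p2p$ terms are defined analogously):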

$$ s_{s2p} = \frac{\exp(\text{sim}(\mathbf{S}, \mathbf{P}) / \tau)}{\sum_{n=1}^{N} \exp(\text{sim}(\mathbf{S}, \mathbf{P}_{n}) / \tau)} $$

The contrastive loss uses cross-entropy with one-hot labels (1 for same-molecule pairs):

$$ L_{\text{contrastive}} = \frac{1}{2}\left(H(y_{s2p}, s_{s2p}) + H(y_{p2s}, s_{p2s}) + H(y_{s2s}, s_{s2s}) + H(y_{p2p}, s_{p2p})\right) $$
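As a rough illustration of the contrastive objective above, the following sketch computes the four cross-entropy terms on already-projected [CLS] vectors. Several things here are assumptions: the temperature is fixed at 0.07 rather than learned, negatives come only from the current batch (the paper also keeps a queue of recent instances), and the function names are hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def softmax_row(scores, tau):
    # Temperature-scaled, numerically stable softmax.
    scaled = [s / tau for s in scores]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    z = sum(exps)
    return [e / z for e in exps]

def directional_loss(anchors, candidates, tau):
    """Mean cross-entropy where anchor i should match candidate i (one-hot label)."""
    total = 0.0
    for i, a in enumerate(anchors):
        probs = softmax_row([dot(a, c) for c in candidates], tau)
        total += -math.log(probs[i])
    return total / len(anchors)

def contrastive_loss(s_proj, p_proj, tau=0.07):
    # tau is fixed here for simplicity; in SPMM it is a learnable parameter.
    terms = [
        directional_loss(s_proj, p_proj, tau),  # SMILES -> PV
        directional_loss(p_proj, s_proj, tau),  # PV -> SMILES
        directional_loss(s_proj, s_proj, tau),  # SMILES -> SMILES
        directional_loss(p_proj, p_proj, tau),  # PV -> PV
    ]
    return 0.5 * sum(terms)
```

With toy inputs, matched SMILES/PV pairs yield a much lower loss than shuffled pairs, which is the behavior the objective is meant to encourage.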

Next Word Prediction (NWP) trains autoregressive SMILES generation conditioned on the PV:

$$ L_{NWP} = \sum_{i=1}^{n} H\left(y_{i}^{NWP}, p^{NWP}(s_{i} \mid s_{0:i-1}, \mathbf{P})\right) $$

Next Property Prediction (NPP) applies the same autoregressive concept to property values, using mean-square-error loss:

$$ L_{NPP} = \sum_{i=1}^{n} \left(p_{i} - \hat{p}_{i}(p_{0:i-1}, \mathbf{S})\right)^{2} $$
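The two autoregressive objectives above can be sketched in a few lines. This is a simplified illustration, not the authors' code: `token_probs` stands in for the model's per-position output distributions, and `predict_next` is a hypothetical callable that plays the role of $\hat{p}_{i}$, receiving the true preceding properties (teacher forcing) and the SMILES features.

```python
import math

def nwp_loss(token_probs, target_ids):
    """Sum of cross-entropies against one-hot targets.

    token_probs[i] is the predicted distribution over the vocabulary at
    position i, assumed to be already conditioned on earlier tokens and the PV.
    """
    return sum(-math.log(probs[t]) for probs, t in zip(token_probs, target_ids))

def npp_loss(predict_next, true_props, smiles_feats):
    """Sum of squared errors, predicting each property from the true prefix."""
    loss = 0.0
    for i, target in enumerate(true_props):
        pred = predict_next(true_props[:i], smiles_feats)
        loss += (target - pred) ** 2
    return loss

def prefix_mean_predictor(prefix, smiles_feats):
    # Hypothetical toy predictor: mean of previous properties, 0.0 at the start.
    return sum(prefix) / len(prefix) if prefix else 0.0
```

For example, `npp_loss(prefix_mean_predictor, [1.0, 1.0, 2.0], None)` accumulates squared errors of 1, 0, and 1 over the three positions.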

SMILES-PV Matching (SPM) is a binary classification loss predicting whether a SMILES-PV pair originated from the same molecule, trained with hard-negative mining.
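The summary does not spell out how hard negatives are mined. One common approach in vision-language pre-training, assumed here purely for illustration, is to sample a non-matching partner with probability proportional to its contrastive similarity, so that confusable pairs are chosen more often. The function names and the toy similarity matrix are hypothetical.

```python
import math
import random

def sample_hard_negative(sim_row, positive_idx, rng):
    """Pick a negative index, favoring candidates with high similarity.

    The true partner gets weight 0 so it can never be drawn as a negative.
    """
    weights = [0.0 if j == positive_idx else math.exp(s) for j, s in enumerate(sim_row)]
    return rng.choices(range(len(sim_row)), weights=weights, k=1)[0]

def build_spm_pairs(sim, rng):
    """Return (smiles_idx, pv_idx, label) triples for the matching classifier.

    Each SMILES contributes its true PV (label 1) and one mined negative (label 0).
    Requires a batch of at least two molecules.
    """
    pairs = []
    for i, row in enumerate(sim):
        pairs.append((i, i, 1))
        pairs.append((i, sample_hard_negative(row, i, rng), 0))
    return pairs

# Toy similarity matrix: sim[i][j] = similarity of SMILES i and PV j.
toy_sim = [[5.0, 4.0, -10.0], [0.0, 3.0, 1.0], [2.0, -1.0, 4.0]]
toy_pairs = build_spm_pairs(toy_sim, random.Random(0))
```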

The overall pre-training loss combines all four:

$$ L = \widetilde{L}_{\text{contrastive}} + \widetilde{L}_{NWP} + L_{NPP} + L_{SPM} $$

where tildes indicate the use of momentum teacher distillation to soften one-hot labels, acknowledging that multiple valid SMILES-PV pairings may exist.
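A minimal sketch of momentum distillation follows, using the values listed under Algorithms ($\lambda = 0.995$, $\alpha$ warmed up to 0.4). The exact mixing form, a convex combination of the one-hot label and the momentum teacher's prediction, is an assumption borrowed from common VLP practice rather than something this summary confirms, and parameters are represented as flat lists of floats for simplicity.

```python
def ema_update(teacher, student, lam=0.995):
    """Momentum teacher update: teacher <- lam * teacher + (1 - lam) * student."""
    return [lam * t + (1.0 - lam) * s for t, s in zip(teacher, student)]

def soft_targets(one_hot, teacher_probs, alpha):
    """Blend the hard label with the teacher's distribution (assumed mixing form)."""
    return [(1.0 - alpha) * y + alpha * q for y, q in zip(one_hot, teacher_probs)]

def alpha_schedule(step, steps_in_first_epoch, alpha_max=0.4):
    """Linear warm-up of alpha from 0 to alpha_max over the first epoch."""
    return alpha_max * min(1.0, step / steps_in_first_epoch)
```

With `alpha = 0.4`, a one-hot label `[1, 0, 0]` and teacher output `[0.6, 0.3, 0.1]` blend to `[0.84, 0.12, 0.04]`, which still sums to 1 but leaves some mass on alternative pairings.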

Random Property Masking

During pre-training, 50% of property values are randomly replaced with a special [UNK] token. This serves three purposes: preventing overfitting to specific properties, augmenting data, and enabling flexible inference where users can specify any subset of the 53 properties as generation conditions. The model can handle all $2^{53}$ possible property combinations at inference time despite never seeing most of them during training.
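The masking scheme and its use at inference time can be sketched as follows. Two assumptions are made: each property is masked independently with probability 0.5 (the summary only says that 50% are replaced), and `None` stands in for the [UNK] token. The property indices in the usage note are hypothetical.

```python
import random

UNK = None           # stand-in for the special [UNK] token
NUM_PROPERTIES = 53  # number of RDKit properties in the PV

def mask_properties(pv, rng, mask_prob=0.5):
    """Training-time masking: replace each value with UNK with probability mask_prob."""
    return [UNK if rng.random() < mask_prob else v for v in pv]

def build_condition(specified):
    """Inference-time condition: only the given {index: normalized value} slots
    are filled; every other property is left as UNK."""
    pv = [UNK] * NUM_PROPERTIES
    for idx, value in specified.items():
        pv[idx] = value
    return pv
```

For instance, `build_condition({0: 0.3, 10: -1.2})` controls two properties and leaves the remaining 51 unspecified; `build_condition({})` corresponds to unconditional generation.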

Experiments Across Bidirectional and Unimodal Tasks

PV-to-SMILES Generation (Conditional Molecule Design)

The authors evaluate SPMM on multiple generation scenarios using 1000 unseen PubChem PVs:

| Sampling | Input PV | Validity | Uniqueness | Novelty | Norm. RMSE |
|---|---|---|---|---|---|
| Deterministic | 1000 unseen PVs | 0.995 | 0.999 | 0.961 | 0.216 |
| Stochastic | Full PV (molecule 1) | 0.974 | 0.905 | 0.998 | 0.185 |
| Stochastic | Molar mass = 150 | 0.974 | 0.945 | 0.872 | 0.192 |
| Stochastic | 4 properties controlled | 0.998 | 0.981 | 0.952 | 0.257 |
| Stochastic | No control (all [UNK]) | 0.971 | 0.991 | 0.950 | - |

The normalized RMSE of 0.216 across 53 properties indicates that generated molecules closely match the input property conditions. The model can also perform unconditional generation (all properties masked) where outputs follow the pre-training distribution. The authors report that SPMM outperforms benchmark models including MolGAN, GraphVAE, and scaffold-based graph generative models in both conditional and unconditional settings (Supplementary Table 1).
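For orientation, the generation metrics in the table can be computed roughly as below. Exact definitions vary between papers, so this is a sketch under stated assumptions: SMILES are already canonicalized and filtered for validity (validity itself needs a chemistry parser such as RDKit and is not shown), novelty is measured over unique generated molecules, and property values are already normalized.

```python
import math

def uniqueness(valid_smiles):
    """Fraction of valid generated molecules that are distinct."""
    return len(set(valid_smiles)) / len(valid_smiles)

def novelty(valid_smiles, training_set):
    """Fraction of distinct generated molecules not present in the training set."""
    unique = set(valid_smiles)
    return len(unique - set(training_set)) / len(unique)

def normalized_rmse(target_pvs, generated_pvs):
    """RMSE pooled over all molecules and properties, on normalized values."""
    sq = [
        (t - g) ** 2
        for target, generated in zip(target_pvs, generated_pvs)
        for t, g in zip(target, generated)
    ]
    return math.sqrt(sum(sq) / len(sq))
```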

SMILES-to-PV Generation (Multi-Property Prediction)

When given 1000 unseen ZINC15 molecules, SPMM predicts all 53 properties autoregressively with a mean $r^{2}$ of 0.924 across all properties.
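A mean $r^{2}$ across properties can be computed as in the sketch below. It assumes $r^{2}$ means the coefficient of determination, evaluated per property over the test molecules and then averaged; if the paper instead uses the squared Pearson correlation, the numbers would differ slightly.

```python
def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot

def mean_r_squared(true_by_property, pred_by_property):
    """Average r^2 over properties; each entry holds one property's values
    across all test molecules."""
    scores = [r_squared(t, p) for t, p in zip(true_by_property, pred_by_property)]
    return sum(scores) / len(scores)
```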

MoleculeNet Benchmarks

Using only the SMILES encoder (6 BERT layers), SPMM achieves best or competitive performance on 9 MoleculeNet tasks:

| Dataset | Metric | SPMM | Best Baseline | Baseline Model |
|---|---|---|---|---|
| ESOL | RMSE | 0.817 | 0.798 | ChemRL-GEM |
| LIPO | RMSE | 0.681 | 0.660 | ChemRL-GEM |
| FreeSolv | RMSE | 1.868 | 1.877 | ChemRL-GEM |
| BACE (reg) | RMSE | 1.041 | 1.047 | MolFormer |
| Clearance | RMSE | 42.607 | 43.175 | MolFormer |
| BBBP | AUROC | 75.1% | 73.6% | MolFormer |
| BACE (cls) | AUROC | 84.4% | 86.3% | MolFormer |
| ClinTox | AUROC | 92.7% | 91.2% | MolFormer |
| SIDER | AUROC | 66.9% | 67.2% | ChemRL-GEM |

SPMM achieved best performance on 5 of 9 tasks, with notable gains on BBBP (75.1% vs. 73.6%) and ClinTox (92.7% vs. 91.2%). Without pre-training, all scores dropped substantially.
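The "5 of 9" count can be checked directly from the table, remembering that lower is better for RMSE and higher is better for AUROC. The snippet below simply re-encodes the table values; the helper name is illustrative.

```python
# (dataset, metric, SPMM score, best baseline score), copied from the table above.
RESULTS = [
    ("ESOL", "RMSE", 0.817, 0.798),
    ("LIPO", "RMSE", 0.681, 0.660),
    ("FreeSolv", "RMSE", 1.868, 1.877),
    ("BACE (reg)", "RMSE", 1.041, 1.047),
    ("Clearance", "RMSE", 42.607, 43.175),
    ("BBBP", "AUROC", 75.1, 73.6),
    ("BACE (cls)", "AUROC", 84.4, 86.3),
    ("ClinTox", "AUROC", 92.7, 91.2),
    ("SIDER", "AUROC", 66.9, 67.2),
]

LOWER_IS_BETTER = {"RMSE"}

def spmm_wins(results):
    """Return the datasets where SPMM beats the best baseline."""
    wins = []
    for name, metric, spmm, baseline in results:
        better = spmm < baseline if metric in LOWER_IS_BETTER else spmm > baseline
        if better:
            wins.append(name)
    return wins
```

`spmm_wins(RESULTS)` returns FreeSolv, BACE (reg), Clearance, BBBP, and ClinTox, which matches the five wins stated above.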

DILI Classification

On Drug-Induced Liver Injury prediction, SPMM achieved 92.6% AUROC, outperforming the 5-ensemble model of Ai et al. (90.4% AUROC) while using a single model.

Reaction Prediction

On USPTO-480k forward reaction prediction, SPMM achieved 91.5% top-1 accuracy, the highest among all models tested (including Chemformer at 91.3%). On USPTO-50k retro-reaction prediction, SPMM reached 53.4% top-1 accuracy, second only to Chemformer (54.3%) among string-based models.

Bidirectional Generation From a Single Pre-trained Model

SPMM demonstrates that multimodal pre-training with genuinely complementary modalities (structure and properties, rather than structurally redundant representations) enables a single foundation model to handle both generation directions and downstream unimodal tasks. Key findings include:

- Flexible conditional generation: The [UNK] masking strategy allows controlling any subset of 53 properties at inference time without retraining, a capability not demonstrated by prior methods.

- Interpretable cross-attention: Attention visualizations show that the model learns chemically meaningful structure-property relationships (e.g., hydrogen bonding properties attend to oxygen and nitrogen atoms; ring count properties attend to ring tokens).

- Competitive unimodal transfer: Despite using only 6 BERT layers and 50M pre-training molecules (fewer than ChemBERTa-2's 77M or Chemformer's 100M), the SMILES encoder alone achieves the best results on 5 of 9 MoleculeNet tasks and the highest forward reaction prediction accuracy among tested models.

Limitations

The authors acknowledge several limitations:

- SMILES representation constraints: Implicit connectivity information in SMILES means small structural changes can cause drastic string changes. Graph representations could be a complementary alternative.

- Stereochemistry blindness: All 53 RDKit properties used are invariant to stereochemistry, meaning different stereoisomers produce identical PVs. The contrastive loss then forces their SMILES encoder outputs to converge, which the authors identify as the primary factor limiting MoleculeNet performance on stereo-sensitive tasks.

- No wet-lab validation: Generated molecules and predicted properties are not experimentally verified.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Pre-training | PubChem | 50M molecules | SMILES + 53 RDKit properties |
| Property prediction | MoleculeNet (9 tasks) | 642-4200 per task | Scaffold split via DeepChem (8:1:1) |
| DILI classification | Ai et al. dataset | Not specified | Following published preparation |
| Forward reaction | USPTO-480k | 479,035 pairs | Reactant-product pairs |
| Retro reaction | USPTO-50k | 50,037 pairs | Product-reactant pairs, no reaction types used |
| SMILES-to-PV test | ZINC15 | 1000 molecules | Not in pre-training set |

Algorithms

- Tokenization: BPE with 300-subword dictionary

- Property masking: 50% random replacement with [UNK] during pre-training

- Momentum distillation: EMA parameter $\lambda = 0.995$, soft-label mixing $\alpha$ linearly warmed from 0 to 0.4 over first epoch

- Contrastive queue: Size $k = 24{,}576$ for storing recent SMILES and PV instances

- Beam search: $k = 2$ for PV-to-SMILES generation

- SMILES augmentation: Random non-canonical augmentation with probability 0.5 for reaction tasks
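The beam search setting listed above ($k = 2$) can be illustrated with a generic beam search over a toy next-token model. This is a textbook sketch, not the SPMM decoder: `toy_model` is a hypothetical hand-written probability table, scores are plain summed log-probabilities without length normalization, and the `<eos>` token name is an assumption.

```python
import math

def beam_search(next_token_probs, beam_width=2, max_len=10, eos="<eos>"):
    """Keep the beam_width best partial sequences at every step.

    next_token_probs(prefix) returns a dict {token: probability}.
    Returns the highest-scoring finished sequence as a list of tokens.
    """
    beams = [([], 0.0)]  # (tokens, summed log-probability)
    finished = []
    for _ in range(max_len):
        candidates = []
        for seq, score in beams:
            for tok, p in next_token_probs(tuple(seq)).items():
                if p > 0.0:
                    candidates.append((seq + [tok], score + math.log(p)))
        candidates.sort(key=lambda c: c[1], reverse=True)
        beams = []
        for seq, score in candidates[:beam_width]:
            (finished if seq[-1] == eos else beams).append((seq, score))
        if not beams:
            break
    pool = finished or beams
    return max(pool, key=lambda c: c[1])[0]

def toy_model(prefix):
    # Hypothetical distribution in which the greedy first choice is not optimal.
    table = {
        (): {"C": 0.6, "N": 0.4},
        ("C",): {"C": 0.5, "O": 0.5},
        ("N",): {"O": 0.9, "C": 0.1},
    }
    return table.get(prefix, {"<eos>": 1.0})
```

With `beam_width=1` (greedy decoding) the search commits to `C` and ends with probability 0.30, while `beam_width=2` keeps `N` alive and finds `N O <eos>` with probability 0.36.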

Models

- Architecture: 6 BERT-base encoder layers each for SMILES encoder, PV encoder, and fusion encoder (18 total layers)

- Vocabulary: 300 BPE subwords for SMILES; 53 property tokens for PV

- Pre-trained weights: Available via GitHub

Evaluation

| Task | Metric | Value | Notes |
|---|---|---|---|
| PV-to-SMILES (deterministic) | Validity | 99.5% | 1000 unseen PubChem PVs |
| PV-to-SMILES (deterministic) | Normalized RMSE | 0.216 | Across 53 properties |
| SMILES-to-PV | Mean $r^{2}$ | 0.924 | 1000 ZINC15 molecules |
| Forward reaction (USPTO-480k) | Top-1 accuracy | 91.5% | Best among all tested models |
| Retro reaction (USPTO-50k) | Top-1 accuracy | 53.4% | Second-best string-based |
| DILI classification | AUROC | 92.6% | Single model vs. 5-ensemble |

Hardware

- Pre-training: 8 NVIDIA A100 GPUs, approximately 52,000 batch iterations, roughly 12 hours

- Batch size: 96

- Optimizer: AdamW with weight decay 0.02

- Learning rate: Warmed up to $10^{-4}$, cosine decay to $10^{-5}$
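The learning-rate schedule described above can be sketched as a warm-up followed by cosine decay. The peak ($10^{-4}$) and floor ($10^{-5}$) come from the list; the linear warm-up shape and the number of warm-up steps are not given in this summary and are assumptions here.

```python
import math

def learning_rate(step, total_steps, warmup_steps, peak=1e-4, floor=1e-5):
    """Linear warm-up to `peak`, then cosine decay down to `floor`."""
    if step < warmup_steps:
        # Assumed linear ramp; reaches `peak` on the last warm-up step.
        return peak * (step + 1) / warmup_steps
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    return floor + 0.5 * (peak - floor) * (1.0 + math.cos(math.pi * progress))
```

For example, with roughly 52,000 iterations and a hypothetical 1,000 warm-up steps, `learning_rate(52000, 52000, 1000)` lands on the floor value.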

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| SPMM Source Code | Code | Apache-2.0 | Official implementation with experimental scripts |
| SPMM Zenodo Archive | Code | Apache-2.0 | Archived version for reproducibility |
| PubChem | Dataset | Public domain | 50M molecules for pre-training |
| MoleculeNet | Dataset | Varies | Benchmark datasets via DeepChem |

Paper Information

Citation: Chang, J., & Ye, J. C. (2024). Bidirectional generation of structure and properties through a single molecular foundation model. Nature Communications, 15, 2323. https://doi.org/10.1038/s41467-024-46440-3

@article{chang2024bidirectional,
  title={Bidirectional generation of structure and properties through a single molecular foundation model},
  author={Chang, Jinho and Ye, Jong Chul},
  journal={Nature Communications},
  volume={15},
  pages={2323},
  year={2024},
  doi={10.1038/s41467-024-46440-3}
}