Bridging Molecular Graphs and Natural Language Through Contrastive Learning

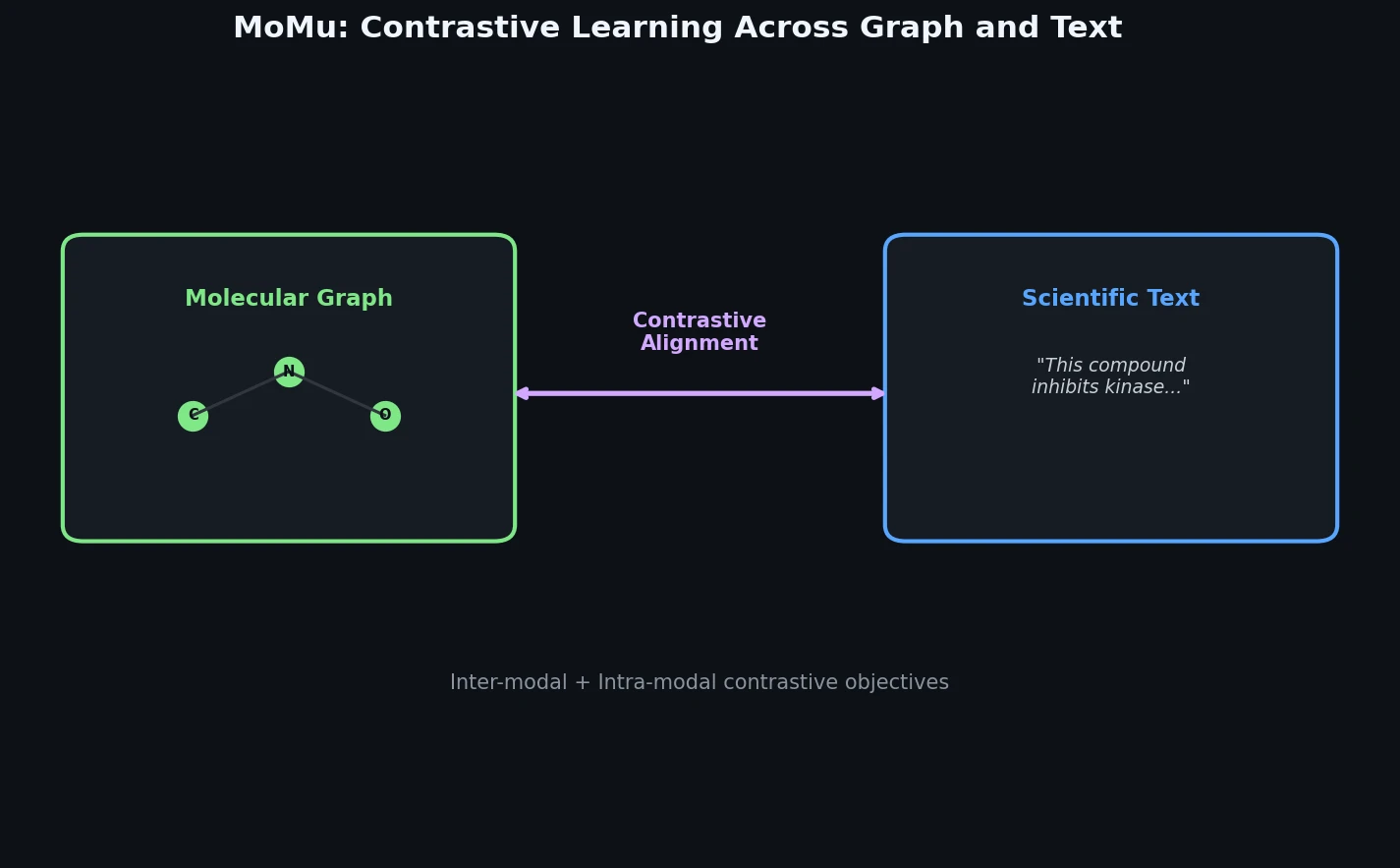

MoMu (Molecular Multimodal foundation model) is introduced in a method paper that proposes a multimodal pre-training approach for associating molecular graphs with natural language descriptions. The primary contribution is a dual-encoder architecture, pairing a Graph Isomorphism Network (GIN) for molecular graphs with a BERT-based text encoder, trained jointly through contrastive learning on weakly correlated graph-text pairs collected from scientific literature. The pre-trained model supports four downstream capabilities: cross-modal retrieval (graph-to-text and text-to-graph), molecule captioning, zero-shot text-to-graph molecule generation, and molecular property prediction.

Why Single-Modality Models Are Insufficient for Molecular Understanding

Existing AI models for molecular tasks generally operate on a single modality and learn a single cognitive ability. Language-based models process SMILES strings or natural language texts and handle tasks like property prediction from strings, literature comprehension, or SMILES-based generation. Graph-based models use molecular graph representations and handle graph-level property prediction or graph generation. Neither category connects structural information from molecular graphs with the rich semantic knowledge encoded in scientific texts.

Prior work by Zeng et al. (KV-PLM) jointly modeled molecule-related texts and SMILES strings, but SMILES representations have inherent drawbacks: they are one-dimensional and may lose structural information, they do not directly expose structural similarities between molecules, and a single molecule can have multiple valid SMILES representations. Molecular graphs, by contrast, are more intuitive and better reveal functional structures. Human experts learn molecular knowledge by associating graphical representations with textual descriptions, yet no prior model bridged these two modalities directly.

The key challenge is the scarcity of paired molecular graph-text data compared to general image-text datasets. Additionally, learning specialized molecular knowledge requires foundational cognitive abilities in both the graph and text domains, making training from scratch infeasible with limited data.

Contrastive Pre-Training with Inter-Modal and Intra-Modal Objectives

MoMu consists of two encoders initialized from pre-trained unimodal models: a GIN graph encoder initialized from GraphCL self-supervised weights, and a BERT text encoder initialized from either Sci-BERT (yielding MoMu-S) or KV-PLM (yielding MoMu-K).

Data Collection

The authors collect 15,613 molecular graph-document pairs by:

- Gathering names, synonyms, and SMILES for the top 50K compounds in PubChem

- Converting SMILES to molecular graphs using the OGB `smiles2graph` function

- Retrieving related text from the S2ORC corpus (136M+ papers) by querying with molecule names, filtering to Medicine, Biology, Chemistry, and Computer Science fields

- Restricting retrieval to abstract, introduction, and conclusion sections to avoid experimental data artifacts
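The retrieval and filtering steps above can be sketched as follows. This is a minimal illustration rather than the authors' pipeline: the record layout (`fields`, `paragraphs`), the function name `retrieve_paragraphs`, and the case-insensitive substring match are all assumptions made for the example.

```python
# Field and section filters named in the list above.
ALLOWED_FIELDS = {"Medicine", "Biology", "Chemistry", "Computer Science"}
ALLOWED_SECTIONS = {"abstract", "introduction", "conclusion"}


def retrieve_paragraphs(names, papers):
    """Collect paragraphs that mention any molecule name or synonym.

    `papers` is a hypothetical list of dicts with a "fields" list and a
    "paragraphs" list of (section, text) tuples; S2ORC's real schema differs.
    """
    needles = [name.lower() for name in names]
    hits = []
    for paper in papers:
        # Skip papers outside the four allowed fields of study.
        if not ALLOWED_FIELDS & set(paper["fields"]):
            continue
        for section, text in paper["paragraphs"]:
            # Keep only abstract/introduction/conclusion paragraphs.
            if section in ALLOWED_SECTIONS and any(n in text.lower() for n in needles):
                hits.append(text)
    return hits


# Toy corpus (invented for illustration).
papers = [
    {"fields": ["Chemistry"],
     "paragraphs": [("abstract", "Aspirin inhibits cyclooxygenase."),
                    ("experiments", "Aspirin was dosed at 10 mg/kg.")]},
    {"fields": ["Physics"],
     "paragraphs": [("abstract", "Aspirin crystals under pressure.")]},
]
hits = retrieve_paragraphs(["aspirin", "acetylsalicylic acid"], papers)
```

In this toy corpus only the Chemistry abstract survives: the experimental paragraph is excluded by the section filter and the Physics paper by the field filter. Plain name matching of this kind also explains the noisy supervision discussed later, since a paragraph can mention a molecule without describing it.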

Contrastive Training Objective

For each graph-text pair in a mini-batch of $N$ pairs, MoMu applies two graph augmentations (node dropping and subgraph extraction) to create two augmented graphs, and randomly samples two sentences from the document. This produces $2N$ graph representations $\{z_1^G, \tilde{z}_1^G, \ldots, z_N^G, \tilde{z}_N^G\}$ and $2N$ text representations $\{z_1^T, \tilde{z}_1^T, \ldots, z_N^T, \tilde{z}_N^T\}$.

The cross-modal contrastive loss for a pair $(z_i^G, z_i^T)$ is:

$$ \ell_i^{(z_i^G, z_i^T)} = -\log \frac{\exp(\text{sim}(z_i^G, z_i^T) / \tau)}{\sum_{j=1}^{N} \exp(\text{sim}(z_i^G, z_j^T) / \tau)} $$

where $\tau$ is the temperature parameter and $\text{sim}(\cdot, \cdot)$ projects both representations into a shared 256-dimensional space before computing cosine similarity. The total cross-modal loss includes four contrastive terms for each pair: $(z_i^G, z_i^T)$, $(\tilde{z}_i^G, z_i^T)$, $(z_i^G, \tilde{z}_i^T)$, and $(\tilde{z}_i^G, \tilde{z}_i^T)$.
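To make the loss concrete, here is a minimal pure-Python sketch of the per-pair cross-modal term. It assumes the representations have already been projected into the shared space, so `cosine` stands in for $\text{sim}(\cdot, \cdot)$; the toy vectors and helper names are illustrative, and $\tau = 0.1$ is the value listed under Reproducibility Details.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def cross_modal_loss(graph_reps, text_reps, i, tau=0.1):
    """InfoNCE loss of the i-th graph against all N texts in the batch."""
    logits = [cosine(graph_reps[i], t) / tau for t in text_reps]
    # Log-sum-exp with max subtraction for numerical stability.
    m = max(logits)
    log_denominator = m + math.log(sum(math.exp(x - m) for x in logits))
    return -(logits[i] - log_denominator)


# Toy batch of N = 3 already-projected representations (hypothetical values).
graphs = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
texts_aligned = [[0.9, 0.1], [0.1, 0.9], [-0.9, 0.1]]
# Same texts, but paired with the wrong graphs.
texts_shuffled = [texts_aligned[1], texts_aligned[2], texts_aligned[0]]

aligned = sum(cross_modal_loss(graphs, texts_aligned, i) for i in range(3)) / 3
shuffled = sum(cross_modal_loss(graphs, texts_shuffled, i) for i in range(3)) / 3
```

The mean loss is lower when each graph is paired with its own text than when the pairing is shuffled, which is the signal the encoders are trained on. The same function applies unchanged to the other three graph-text pairings by swapping in the augmented representations.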

An intra-modal graph contrastive loss further strengthens the graph encoder:

$$ \ell_i^{(z_i^G, \tilde{z}_i^G)} = -\log \frac{\exp(\text{sim}(z_i^G, \tilde{z}_i^G) / \tau)}{\sum_{j=1}^{N} \exp(\text{sim}(z_i^G, \tilde{z}_j^G) / \tau)} $$

Zero-Shot Text-to-Graph Generation

MoMu enables a zero-shot generation pipeline by combining the pre-trained MoMu encoders with MoFlow, a flow-based molecular generator. Given an input text description $x^T$, the method:

- Samples a latent variable $q$ from MoFlow’s Gaussian prior $P(q)$

- Generates a molecular graph through MoFlow’s reverse flows: $\hat{E} = f_g^{-1}(q_e)$ and $\hat{V} = f_c^{-1}(q_v \mid GN(\hat{E}))$

- Feeds $\hat{V}$ (using soft atom type probabilities instead of hard assignments) into MoMu’s graph encoder

- Optimizes $q$ to maximize the cosine similarity between the resulting graph and text representations:

$$ \ell_q = -\text{sim}(z^G, z^T) / \tau $$

All MoMu and MoFlow parameters are frozen; only $q$ is updated via Adam for up to 500 iterations. The final molecule is obtained by applying argmax to the optimized probability matrices $\hat{V}$ and $\hat{E}$.
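The optimization loop can be illustrated with a toy stand-in. In the sketch below, `toy_graph_embedding` is a hypothetical frozen function that replaces the whole MoFlow-decode-then-encode path, gradients are taken by finite differences instead of backpropagation, and the learning rate is an assumed value that the summary above does not state. Only the structure (frozen models, Adam on $q$, 500 iterations, loss $-\text{sim}/\tau$) follows the description.

```python
import math
import random


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def toy_graph_embedding(q):
    """Hypothetical frozen stand-in for MoFlow decoding plus MoMu's graph encoder."""
    return [math.tanh(q[0] + 0.5 * q[1]), math.tanh(q[1] - 0.3 * q[2]), math.tanh(q[2])]


def loss(q, z_text, tau=0.1):
    """Negative temperature-scaled similarity, matching the form of l_q above."""
    return -cosine(toy_graph_embedding(q), z_text) / tau


def numeric_grad(f, q, eps=1e-5):
    """Central finite differences; a real implementation would backpropagate."""
    grad = []
    for k in range(len(q)):
        up = q[:k] + [q[k] + eps] + q[k + 1:]
        down = q[:k] + [q[k] - eps] + q[k + 1:]
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad


def optimize_latent(q, z_text, steps=500, lr=0.05, b1=0.9, b2=0.999, eps=1e-8):
    """Adam updates applied to q only; nothing else has trainable state."""
    q = list(q)
    m = [0.0] * len(q)
    v = [0.0] * len(q)
    for t in range(1, steps + 1):
        g = numeric_grad(lambda x: loss(x, z_text), q)
        for k in range(len(q)):
            m[k] = b1 * m[k] + (1 - b1) * g[k]
            v[k] = b2 * v[k] + (1 - b2) * g[k] ** 2
            m_hat = m[k] / (1 - b1 ** t)  # bias-corrected first moment
            v_hat = v[k] / (1 - b2 ** t)  # bias-corrected second moment
            q[k] -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return q


rng = random.Random(0)
q0 = [rng.gauss(0.0, 1.0) for _ in range(3)]  # sample from a Gaussian prior
z_text = [0.2, -0.7, 0.6]                     # hypothetical text representation
q_star = optimize_latent(q0, z_text)
sim_before = cosine(toy_graph_embedding(q0), z_text)
sim_after = cosine(toy_graph_embedding(q_star), z_text)
```

Because only `q` changes, the quality of the result is bounded by what the frozen generator can express, which is the generator constraint noted in the Limitations section.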

Evaluation Across Four Downstream Tasks

Cross-Modal Retrieval

MoMu is evaluated on the PCdes dataset (15K SMILES-description pairs from PubChem, split 10,500/1,500/3,000 for train/val/test). Retrieval is performed in mini-batches of 64 pairs, reporting top-1 accuracy and Recall@20.
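As a rough illustration of the two metrics, the sketch below scores one batch from a query-by-candidate similarity matrix in which the correct match for query `i` is candidate `i`. The function name, the tie handling, and the toy matrix are assumptions; how the paper aggregates over batches is not covered here.

```python
def retrieval_metrics(sim, k=20):
    """Return (top-1 accuracy, Recall@k) for one batch.

    sim[i][j] is the similarity between query i and candidate j.
    """
    n = len(sim)
    top1 = 0
    recall_k = 0
    for i, row in enumerate(sim):
        # Rank = number of candidates scoring strictly higher than the true match.
        rank = sum(1 for s in row if s > row[i])
        top1 += rank == 0
        recall_k += rank < k
    return top1 / n, recall_k / n


# Toy 4x4 batch (hypothetical scores), evaluated with k=2 instead of 20.
sim = [
    [0.9, 0.1, 0.2, 0.3],  # true match ranked first
    [0.8, 0.7, 0.1, 0.2],  # true match ranked second
    [0.5, 0.6, 0.4, 0.1],  # true match ranked third
    [0.1, 0.2, 0.3, 0.9],  # true match ranked first
]
acc, recall_at_2 = retrieval_metrics(sim, k=2)
```

With a batch of 64 candidates, `k=20` counts a query as a hit whenever its true match lands in the top 20 of those 64.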

Graph-to-Text Retrieval (PCdes, fine-tuned):

| Method | Sentence Acc | Sentence R@20 | Paragraph Acc | Paragraph R@20 |
|---|---|---|---|---|
| Sci-BERT | 50.38 | 62.11 | 62.57 | 60.67 |
| KV-PLM | 53.79 | 66.63 | 64.81 | 63.87 |
| KV-PLM* | 55.92 | 68.59 | 77.92 | 75.93 |
| MoMu-S | 58.64 | 80.59 | 80.62 | 79.11 |
| MoMu-K | 58.74 | 81.29 | 81.09 | 80.15 |

Text-to-Graph Retrieval (PCdes, fine-tuned):

| Method | Sentence Acc | Sentence R@20 | Paragraph Acc | Paragraph R@20 |
|---|---|---|---|---|
| Sci-BERT | 50.12 | 68.02 | 61.75 | 60.77 |
| KV-PLM | 54.22 | 71.80 | 64.95 | 64.27 |
| KV-PLM* | 55.61 | 74.77 | 77.03 | 75.47 |
| MoMu-S | 55.44 | 76.92 | 80.22 | 79.02 |
| MoMu-K | 54.94 | 78.29 | 81.45 | 80.62 |

In zero-shot retrieval (on a separate test set of 5,562 pairs not seen during pre-training), MoMu achieves approximately 39-46% accuracy compared to below 2% for Sci-BERT and KV-PLM, demonstrating strong generalization.

Molecule Captioning

For captioning on the ChEBI-20 dataset, MoMu’s graph features are passed through a learned MLP mapping module and appended to MolT5’s encoder inputs. Incorporating graph features improves BLEU, METEOR, and Text2Mol scores, though ROUGE-L drops slightly. The graph structural information leads to more accurate captions for complex molecular structures.

Molecular Property Prediction

The pre-trained graph encoder from MoMu is fine-tuned on eight MoleculeNet datasets using scaffold splitting and ROC-AUC evaluation (10 runs).

| Dataset | No Pre-Train | GraphCL | MoMu-S | MoMu-K |
|---|---|---|---|---|
| BBBP | 65.8 | 69.7 | 70.5 | 70.1 |
| Tox21 | 74.0 | 73.9 | 75.6 | 75.6 |
| ToxCast | 63.4 | 62.4 | 63.4 | 63.0 |
| SIDER | 57.3 | 60.5 | 60.5 | 60.4 |
| ClinTox | 58.0 | 76.0 | 79.9 | 77.4 |
| MUV | 71.8 | 69.8 | 70.5 | 71.1 |
| HIV | 75.3 | 78.5 | 75.9 | 76.2 |
| BACE | 70.1 | 75.4 | 76.7 | 77.1 |
| Average | 66.96 | 70.78 | 71.63 | 71.36 |

MoMu-S achieves the best average ROC-AUC (71.63%) across all eight datasets, outperforming GraphCL (70.78%), the self-supervised method used to initialize MoMu’s graph encoder. Each MoMu variant outperforms GraphCL on six of the eight datasets. Notably, MoMu-S and MoMu-K perform comparably, suggesting that KV-PLM’s SMILES-based knowledge does not transfer well to graph-based representations.
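The averages and the six-of-eight count can be re-derived from the table values. The snippet below only re-does that arithmetic on the numbers reported above; the variable names are illustrative.

```python
methods = ("No Pre-Train", "GraphCL", "MoMu-S", "MoMu-K")

# ROC-AUC values copied from the table, in the column order of `methods`.
roc_auc = {
    "BBBP":    (65.8, 69.7, 70.5, 70.1),
    "Tox21":   (74.0, 73.9, 75.6, 75.6),
    "ToxCast": (63.4, 62.4, 63.4, 63.0),
    "SIDER":   (57.3, 60.5, 60.5, 60.4),
    "ClinTox": (58.0, 76.0, 79.9, 77.4),
    "MUV":     (71.8, 69.8, 70.5, 71.1),
    "HIV":     (75.3, 78.5, 75.9, 76.2),
    "BACE":    (70.1, 75.4, 76.7, 77.1),
}

# Per-method mean over the eight datasets.
means = {
    m: sum(row[i] for row in roc_auc.values()) / len(roc_auc)
    for i, m in enumerate(methods)
}

# Number of datasets on which each MoMu variant is strictly ahead of GraphCL.
graphcl = methods.index("GraphCL")
wins = {
    m: sum(row[i] > row[graphcl] for row in roc_auc.values())
    for i, m in enumerate(methods)
    if m.startswith("MoMu")
}
```

The computed means agree with the Average row to within rounding, and each MoMu variant is strictly ahead of GraphCL on six datasets. MoMu-S ties GraphCL on SIDER and trails only on HIV, while MoMu-K trails on SIDER and HIV.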

Zero-Shot Text-to-Graph Generation

The method generates molecules from three types of text descriptions:

- High-level vague descriptions (e.g., “The molecule is beautiful”): MoMu generates diverse, interpretable molecules where “beautiful” tends to produce locally symmetric and stretched graphs, “versatile” produces molecules with varied elements and functional groups, and “strange” produces cluttered, irregular structures.

- Functional descriptions (e.g., “fluorescent molecules”, “high water solubility and barrier permeability with low toxicity”): MoMu successfully generates molecules with appropriate functional groups and properties. For the solubility/permeability/toxicity query, MoMu generates molecules that satisfy all three evaluable properties.

- Structural descriptions (e.g., “molecules containing nucleophilic groups”): MoMu generates diverse molecules with appropriate functional groups (amino, hydroxyl, carbonyl, halogen atoms).

Promising Multimodal Transfer with Clear Data Limitations

MoMu demonstrates that contrastive pre-training on weakly-correlated graph-text data can bridge molecular graphs and natural language in a shared representation space. The key findings are:

- Cross-modal alignment works with limited data: With only 15K graph-text pairs (far fewer than the millions used in vision-language models like CLIP), MoMu achieves meaningful cross-modal retrieval and enables zero-shot generation.

- Multimodal supervision improves graph representations: The graph encoder supervised by text descriptions outperforms self-supervised methods (GraphCL, AttrMasking, ContextPred) on average across molecular property prediction benchmarks.

- SMILES knowledge does not transfer to graphs: MoMu-S and MoMu-K perform comparably across all tasks, suggesting that structural information learned from one-dimensional SMILES strings does not readily generalize to graph neural networks.

Limitations

The authors acknowledge several important limitations:

- Data scarcity: 15K graph-text pairs is substantially smaller than general image-text datasets, potentially leaving the common space insufficiently aligned.

- Noisy supervision: Retrieved texts may mention a molecule by name without describing its properties or structure, leading to spurious correlations.

- Generator constraints: The zero-shot generation method is limited by MoFlow’s capacity (maximum 38 atoms, 9 element types from ZINC250K training).

- Property coverage: Generation quality degrades for molecular properties that appear infrequently or not at all in the training texts.

Future Directions

The authors propose four avenues: (1) collecting larger-scale multimodal molecular data including 3D conformations, (2) using strongly-correlated paired data with more advanced generators, (3) developing interpretable tools for the learned cross-modal space, and (4) wet-lab validation of generated molecules.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |
|---|---|---|---|
| Pre-training | Collected graph-text pairs (PubChem + S2ORC) | 15,613 pairs | ~37M paragraphs total; top 50K PubChem compounds |
| Cross-modal retrieval | PCdes | 15K pairs (10.5K/1.5K/3K split) | SMILES-description pairs from PubChem |
| Molecule captioning | ChEBI-20 | ~33K pairs | Used with MolT5 |
| Text-to-graph generation | ZINC250K (MoFlow) | 250K molecules | Pre-trained generator, max 38 atoms |
| Property prediction | MoleculeNet (8 datasets) | Varies | BBBP, Tox21, ToxCast, SIDER, ClinTox, MUV, HIV, BACE |

Algorithms

- Graph augmentations: Node dropping (10% ratio) and subgraph extraction (80% of original size via random walk)

- Contrastive learning: InfoNCE loss with temperature $\tau = 0.1$, following the DeCLIP paradigm with both inter-modal and intra-modal objectives

- Zero-shot generation: Adam optimizer on latent variable $q$ for up to 500 iterations; formal charges prohibited in output
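The two graph augmentations listed above can be sketched on a plain node-and-edge-list graph. This is a simplified illustration under stated assumptions, not GraphCL's or MoMu's actual code: the function names, the step cap on the random walk, and the induced-subgraph edge handling are choices made for the example.

```python
import random


def drop_nodes(nodes, edges, ratio=0.1, rng=random):
    """Remove a random `ratio` of nodes together with their incident edges."""
    n_drop = int(len(nodes) * ratio)
    dropped = set(rng.sample(sorted(nodes), n_drop))
    kept = [v for v in nodes if v not in dropped]
    kept_edges = [(u, v) for u, v in edges if u not in dropped and v not in dropped]
    return kept, kept_edges


def random_walk_subgraph(nodes, edges, ratio=0.8, rng=random):
    """Keep the subgraph induced by nodes visited on a random walk."""
    target = max(1, int(len(nodes) * ratio))
    neighbors = {v: [] for v in nodes}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    current = rng.choice(sorted(nodes))
    visited = {current}
    for _ in range(100 * len(nodes)):  # step cap so the walk always terminates
        if len(visited) >= target or not neighbors[current]:
            break
        current = rng.choice(neighbors[current])
        visited.add(current)
    kept = [v for v in nodes if v in visited]
    kept_edges = [(u, v) for u, v in edges if u in visited and v in visited]
    return kept, kept_edges


# Toy 10-atom ring (hypothetical molecule skeleton, atom types omitted).
nodes = list(range(10))
edges = [(i, (i + 1) % 10) for i in range(10)]
rng = random.Random(0)
dropped_nodes, dropped_edges = drop_nodes(nodes, edges, ratio=0.1, rng=rng)
sub_nodes, sub_edges = random_walk_subgraph(nodes, edges, ratio=0.8, rng=rng)
```

On the ring, node dropping leaves 9 atoms and 8 bonds, while the random-walk subgraph is a connected arc of 8 atoms. The first perturbs the graph uniformly, and the second preserves a contiguous local structure.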

Models

- Graph encoder: GIN with 5 layers, 300-dimensional hidden size, initialized from GraphCL checkpoint

- Text encoder: BERT-base (768 hidden size), initialized from Sci-BERT or KV-PLM

- Projection heads: Two MLPs projecting graph (300-dim) and text (768-dim) features to 256-dimensional shared space

- Optimizer: AdamW, learning rate 0.0001, weight decay 1e-5, 300 epochs, batch size 256
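For quick reference, the settings listed in the Algorithms and Models sections can be gathered into a single configuration object. The class and field names below are hypothetical and do not come from the MoMu codebase; only the values are taken from the lists above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MoMuPretrainConfig:
    """Hypothetical container for the reported pre-training settings."""
    gin_layers: int = 5
    graph_hidden_dim: int = 300   # GIN output size
    text_hidden_dim: int = 768    # BERT-base hidden size
    shared_dim: int = 256         # output size of both projection heads
    optimizer: str = "AdamW"
    learning_rate: float = 1e-4
    weight_decay: float = 1e-5
    epochs: int = 300
    batch_size: int = 256
    temperature: float = 0.1      # InfoNCE tau
    node_drop_ratio: float = 0.1
    subgraph_ratio: float = 0.8


config = MoMuPretrainConfig()

# Two augmented views per graph and two sampled sentences per document
# give 2N representations per modality in each step.
views_per_modality = 2 * config.batch_size
```

With the reported batch size, each step contrasts 512 graph representations against 512 text representations.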

Evaluation

| Task | Metric | Best Result | Notes |
|---|---|---|---|
| G-T Retrieval (PCdes) | Accuracy / R@20 | 81.09 / 80.15 (paragraph) | MoMu-K, fine-tuned |
| T-G Retrieval (PCdes) | Accuracy / R@20 | 81.45 / 80.62 (paragraph) | MoMu-K, fine-tuned |
| Zero-shot G-T Retrieval | Accuracy | ~46% | vs. ~1.4% for baselines |
| Property Prediction | ROC-AUC (avg) | 71.63% | MoMu-S, 8 MoleculeNet datasets |
| Molecule Captioning | Text2Mol | Improved over MolT5 | MoMu + MolT5-large |

Hardware

- Pre-training: 8x NVIDIA Tesla V100 PCIe 32GB GPUs

- Framework: PyTorch

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| MoMu code | Code | Not specified | Pre-training and downstream task code |
| GraphTextRetrieval | Code | Not specified | Data collection and cross-modal retrieval code |
| Pre-training dataset | Dataset | Not specified | Hosted on Baidu Pan (Chinese cloud storage) |

Paper Information

Citation: Su, B., Du, D., Yang, Z., Zhou, Y., Li, J., Rao, A., Sun, H., Lu, Z., & Wen, J.-R. (2022). A Molecular Multimodal Foundation Model Associating Molecule Graphs with Natural Language. arXiv preprint arXiv:2209.05481.

@article{su2022momu,

title={A Molecular Multimodal Foundation Model Associating Molecule Graphs with Natural Language},

author={Su, Bing and Du, Dazhao and Yang, Zhao and Zhou, Yujie and Li, Jiangmeng and Rao, Anyi and Sun, Hao and Lu, Zhiwu and Wen, Ji-Rong},

journal={arXiv preprint arXiv:2209.05481},

year={2022}

}