This group covers models that use reinforcement learning to steer molecular generators toward desired property profiles. The common thread is reward-shaped optimization of a base generative policy, whether RNN, Transformer, GAN, or graph-based.

| Paper | Year | Base Model | Key Idea |

|---|---|---|---|

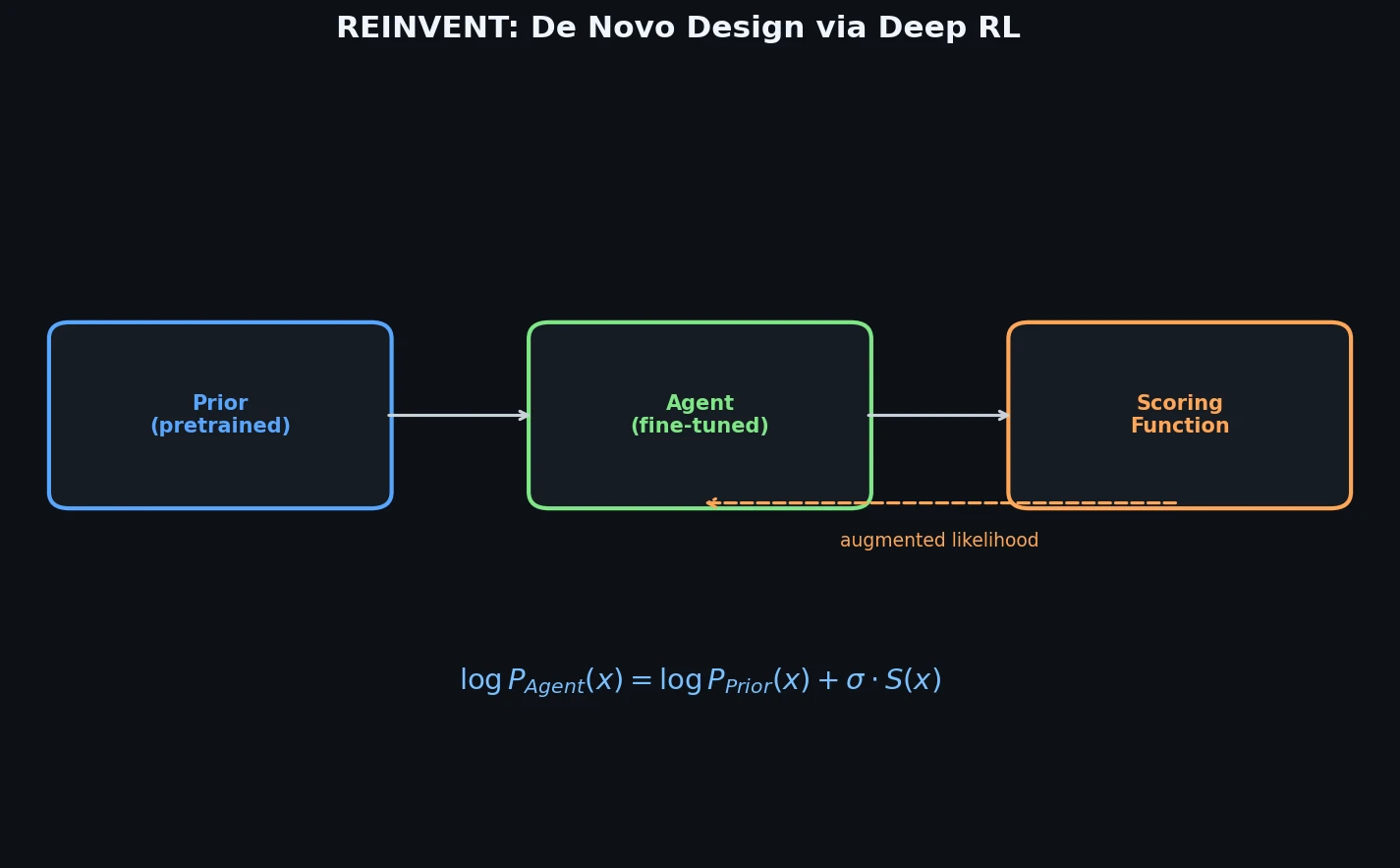

| REINVENT | 2017 | RNN | Augmented episodic likelihood for goal-directed SMILES generation |

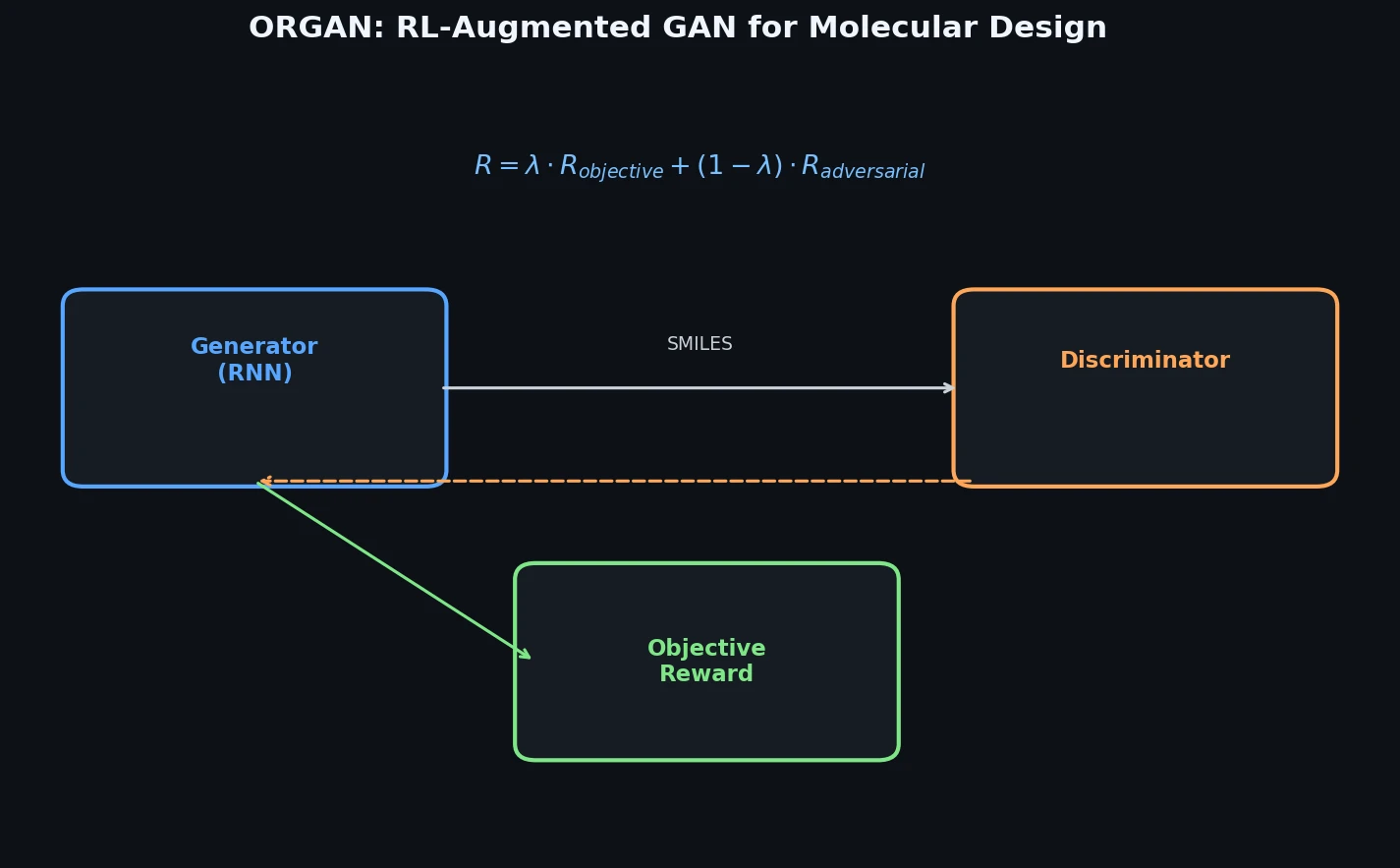

| ORGAN | 2017 | SeqGAN | RL reward functions for tunable drug-likeness, solubility, and synthesizability |

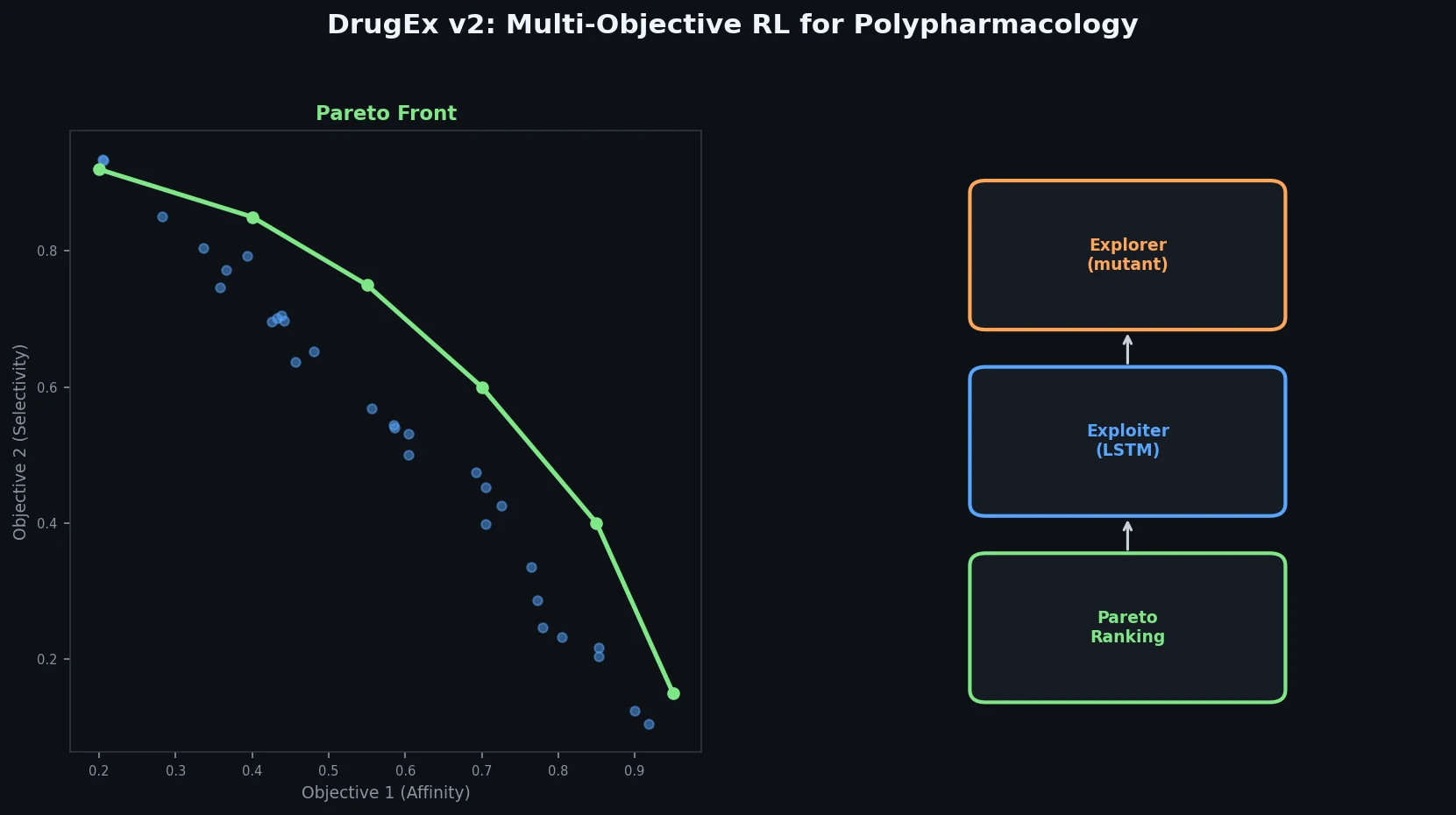

| DrugEx v2 | 2019 | RNN | Pareto ranking and evolutionary exploration for multi-objective design |

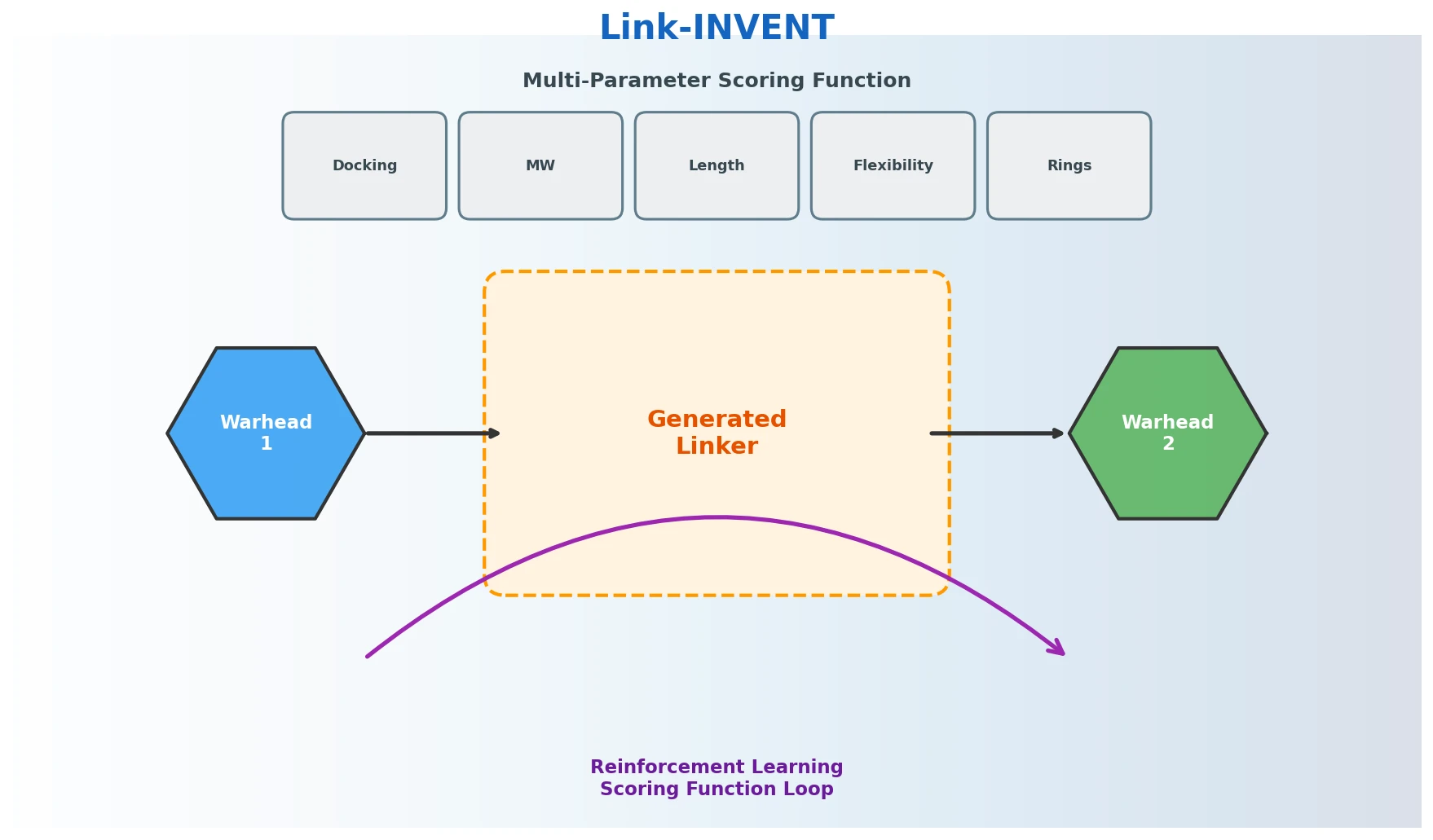

| Link-INVENT | 2019 | RNN | Molecular linker design with flexible multi-parameter RL scoring |

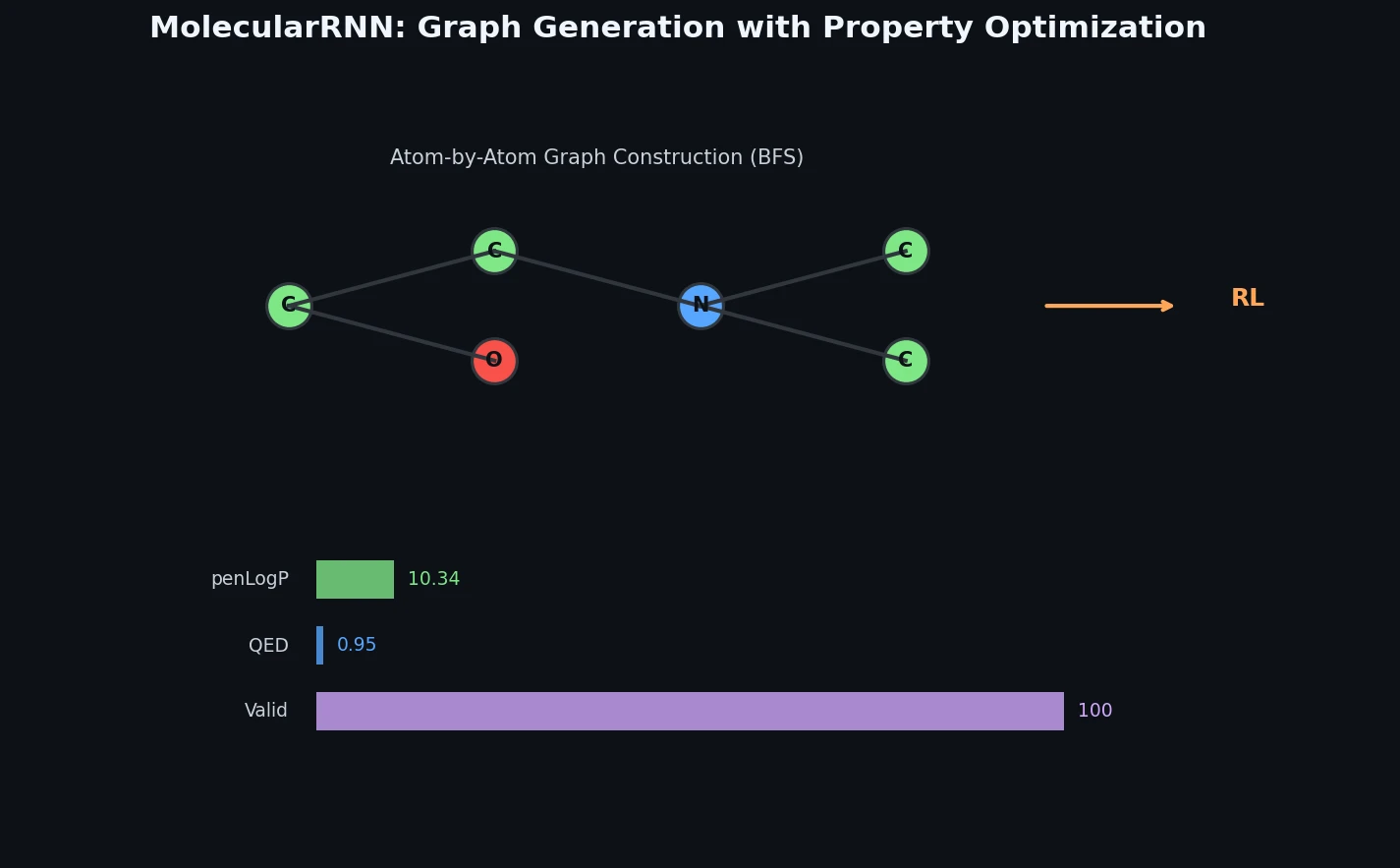

| MolecularRNN | 2019 | Graph RNN | Atom-by-atom generation with valency rejection sampling and policy gradients |

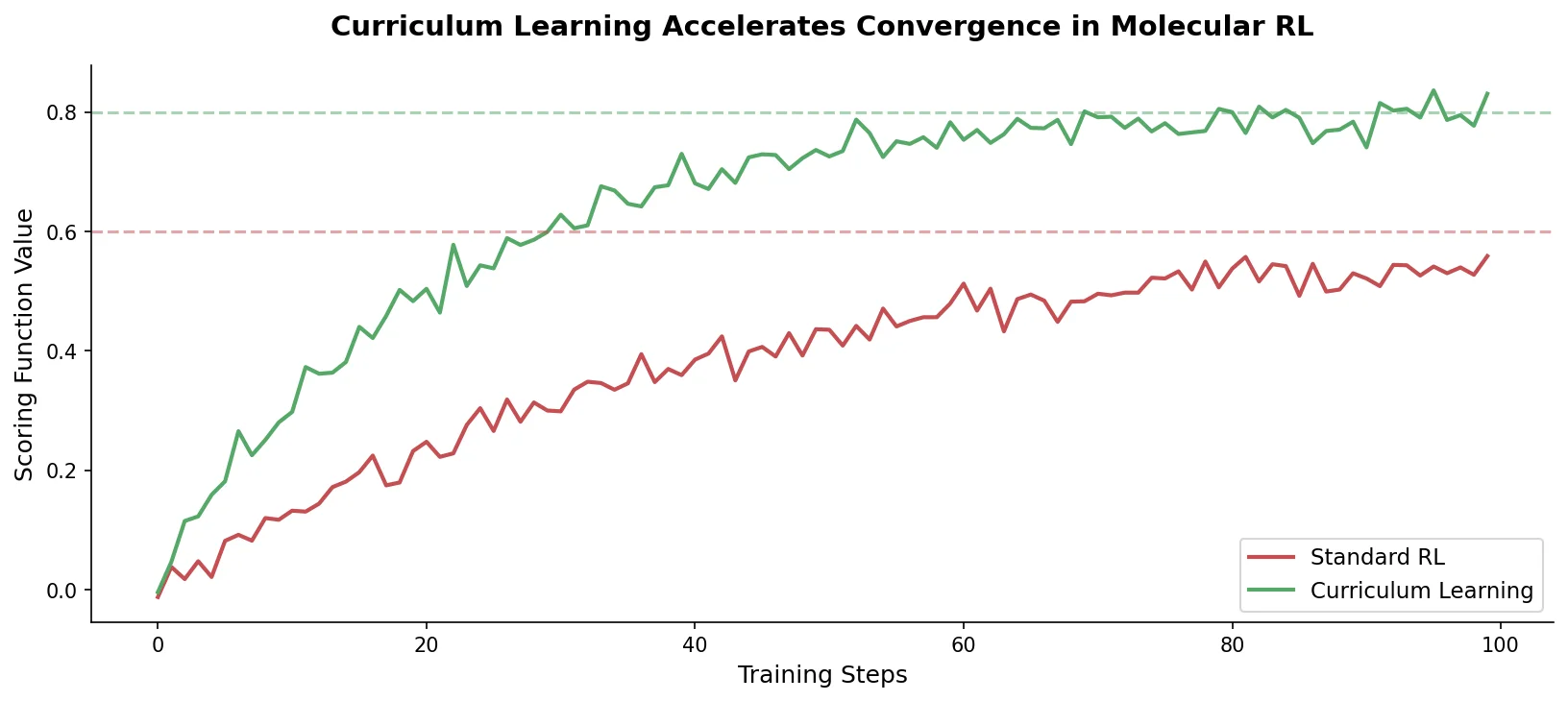

| Curriculum Learning | 2019 | REINVENT | Sequential task decomposition accelerating convergence on complex objectives |

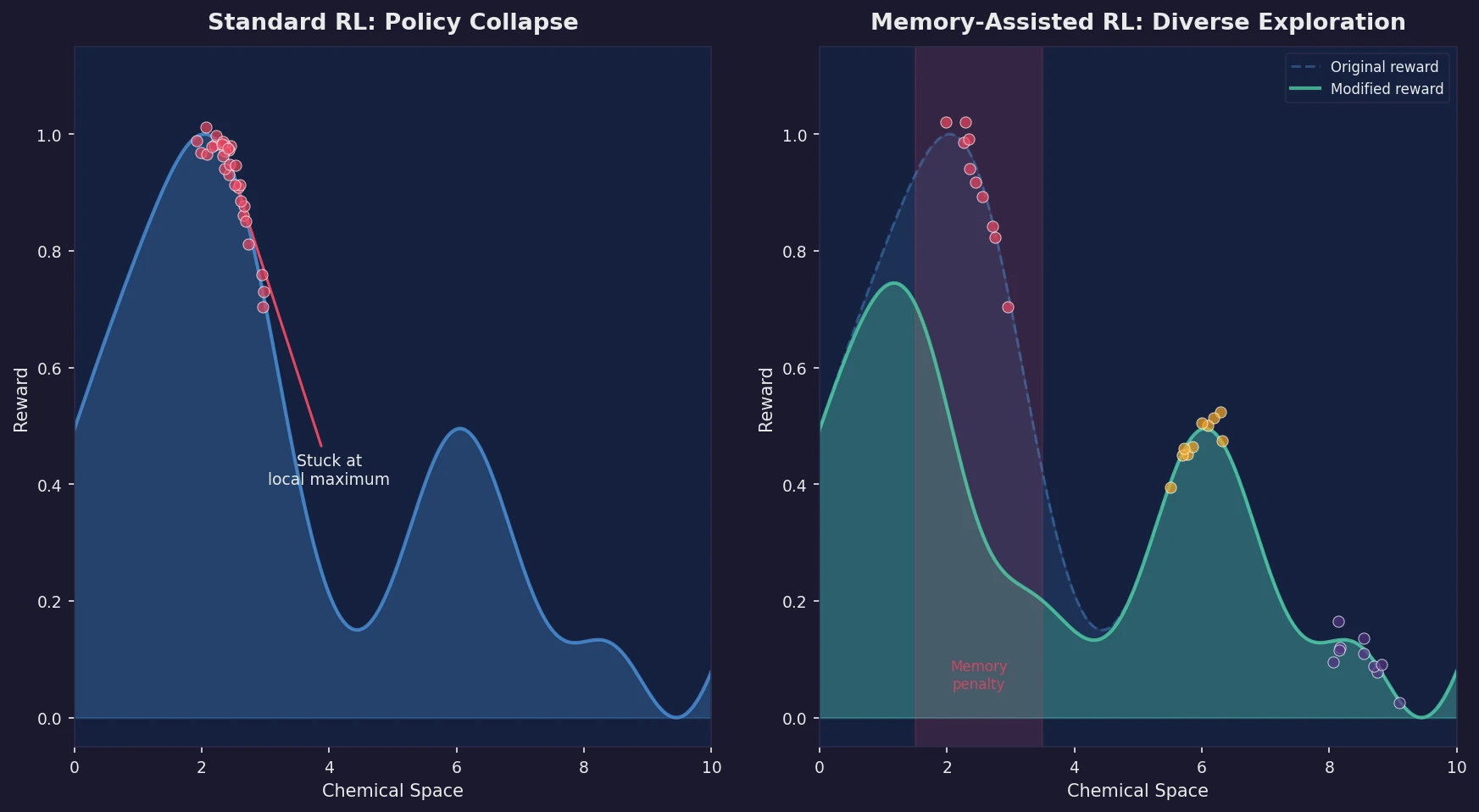

| Memory-Assisted RL | 2020 | REINVENT | Scaffold memory penalizes repeated solutions, increasing diversity fourfold |

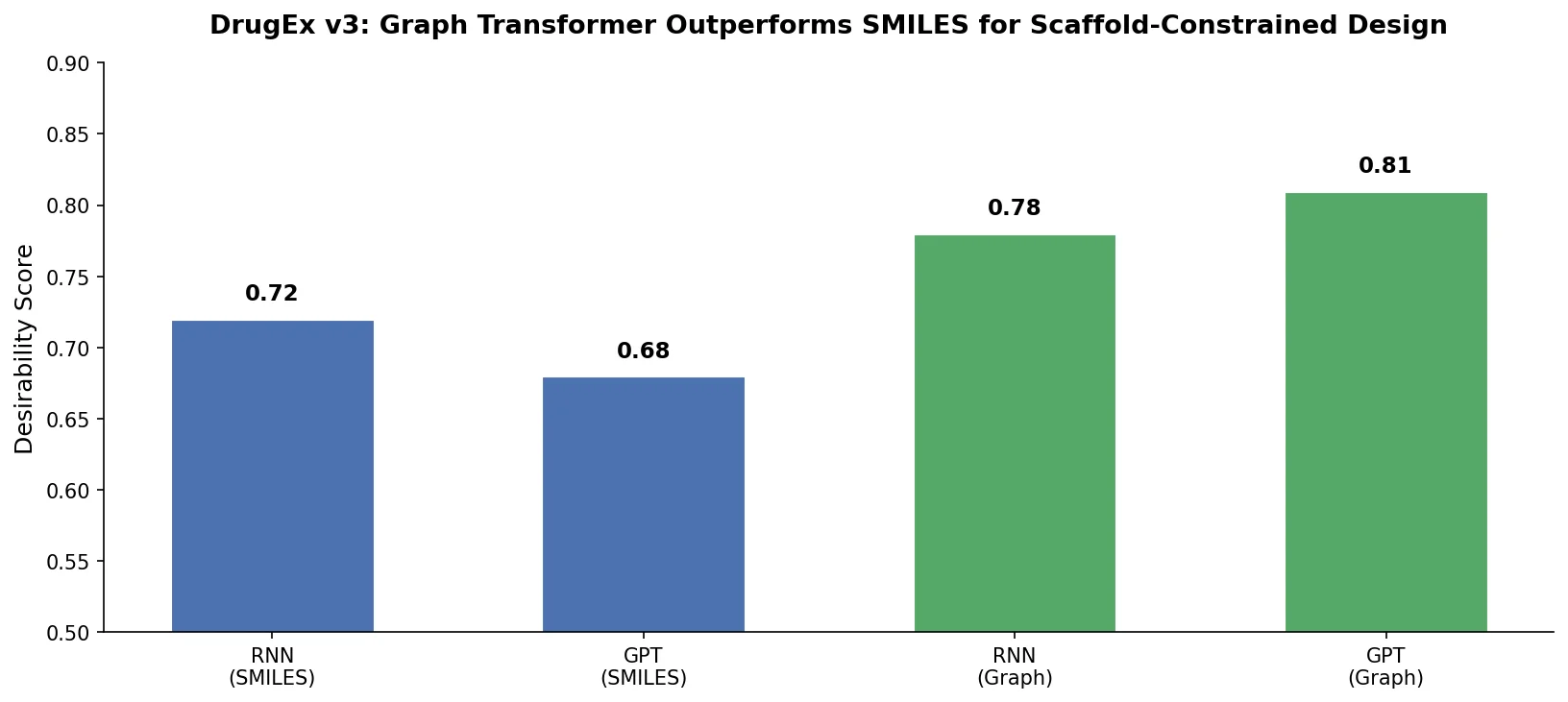

| DrugEx v3 | 2021 | Graph Transformer | Scaffold-constrained generation with 100% validity via adjacency-based encoding |

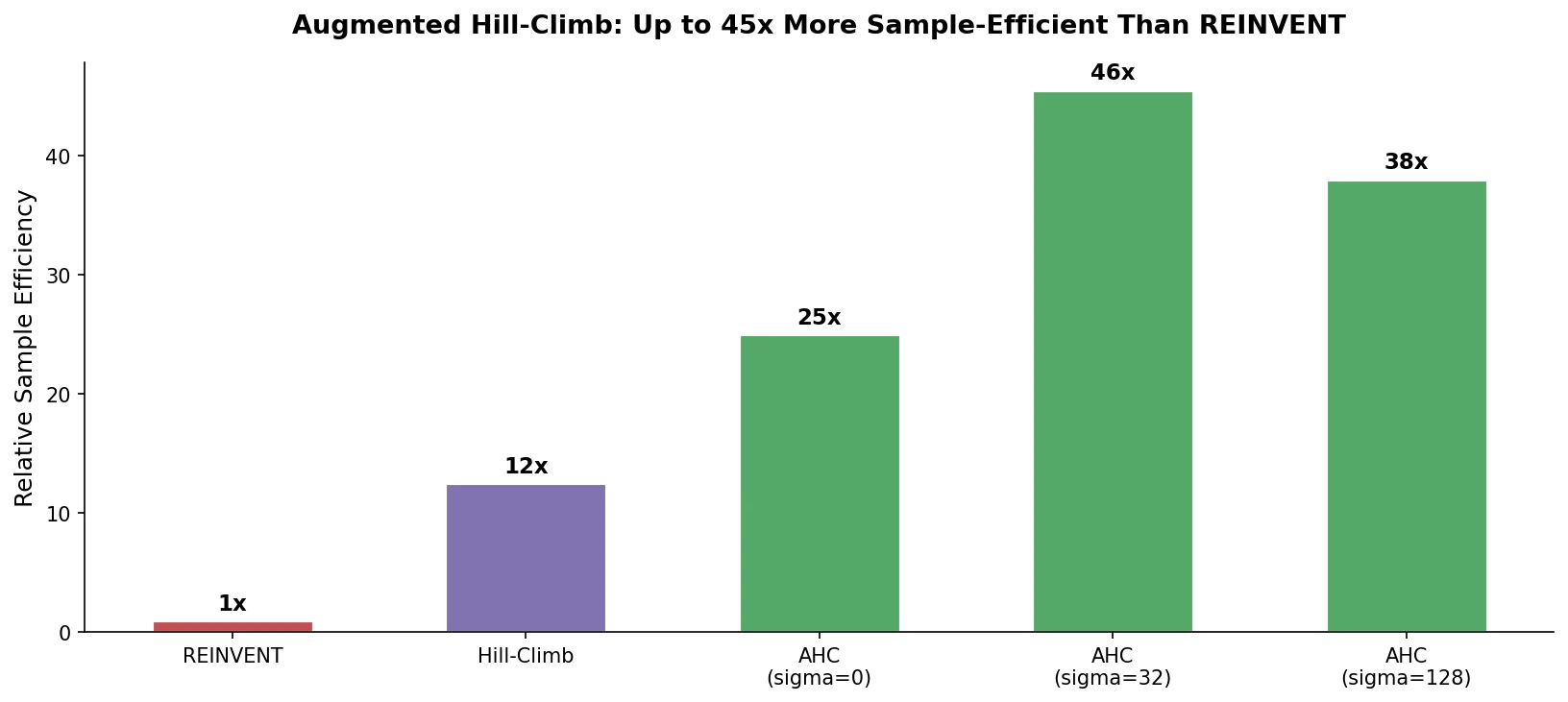

| Augmented Hill-Climb | 2022 | RNN | Hybrid RL strategy improving sample efficiency ~45x over REINVENT |

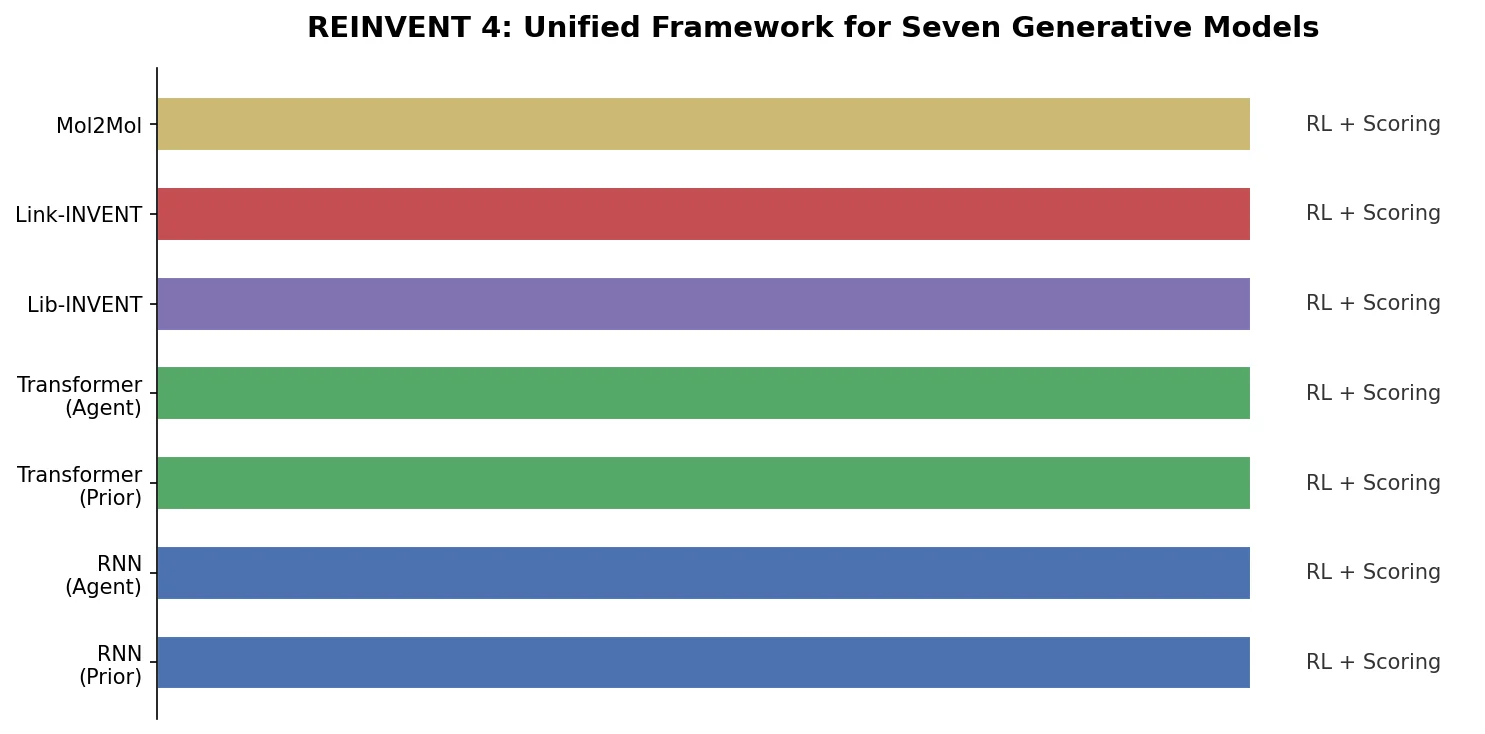

| REINVENT 4 | 2024 | RNN + Transformer | Open-source framework unifying RL, transfer learning, and curriculum learning |
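
To make the "augmented episodic likelihood" idea in the REINVENT row concrete, here is a minimal sketch of the per-sequence loss as it is usually described: the agent's log-likelihood for a sampled SMILES string is pulled toward the prior's log-likelihood plus a scaled score. The function name, the example numbers, and the default `sigma` are illustrative assumptions, not values taken from any particular codebase.

```python
def augmented_likelihood_loss(prior_logp, agent_logp, score, sigma=60.0):
    """Squared gap between the augmented and the agent log-likelihood.

    prior_logp: log-likelihood of the sequence under the fixed prior
    agent_logp: log-likelihood of the same sequence under the agent
    score:      scalar reward in [0, 1] from the scoring function
    sigma:      reward scaling factor (illustrative default, an assumption)
    """
    augmented_logp = prior_logp + sigma * score
    return (augmented_logp - agent_logp) ** 2


# Hypothetical log-likelihoods for one sampled molecule.
prior_logp = -30.0
agent_logp = -28.0

# With a zero score the agent is pulled back toward the prior.
low = augmented_likelihood_loss(prior_logp, agent_logp, score=0.0)

# With a higher score the target shifts upward, so the gap (and gradient) grows.
high = augmented_likelihood_loss(prior_logp, agent_logp, score=0.5)

print(low, high)
```

In a real training loop the two log-likelihoods would come from the prior and agent networks, and the loss would be averaged over a batch before backpropagating through the agent only.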