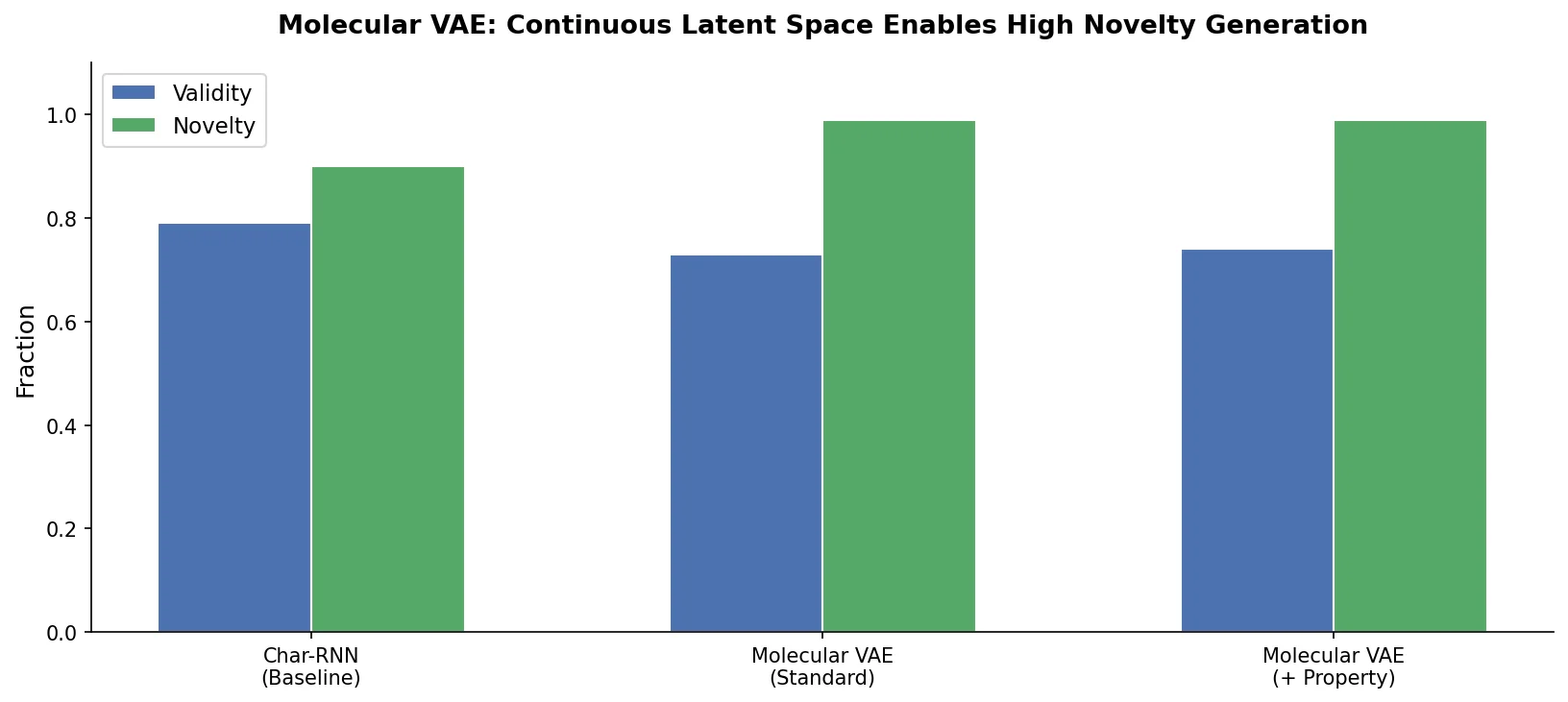

This group covers models that learn continuous latent representations of molecules and use that space for generation and optimization. The seminal SMILES VAE (Gómez-Bombarelli et al., 2018) established the paradigm: encode molecules into a smooth latent space, then search that space with gradient-based or Bayesian optimization.
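The "search the latent space" step can be illustrated with a minimal sketch. It is not taken from any of the papers below: the quadratic `surrogate_property`, the `target` vector, and the random starting point are all hypothetical stand-ins for a trained property predictor and an encoded molecule. The sketch only shows the gradient-ascent loop over a latent vector; a real pipeline would decode the optimized vector back into a molecule.

```python
import random


def surrogate_property(z, target):
    """Toy differentiable property (assumption): maximal, at 0, when z equals target."""
    return -sum((zi - ti) ** 2 for zi, ti in zip(z, target))


def surrogate_gradient(z, target):
    """Analytic gradient of surrogate_property with respect to z."""
    return [-2.0 * (zi - ti) for zi, ti in zip(z, target)]


def latent_gradient_ascent(z0, target, step_size=0.1, n_steps=100):
    """Move a latent point uphill on the surrogate property."""
    z = list(z0)
    for _ in range(n_steps):
        grad = surrogate_gradient(z, target)
        z = [zi + step_size * gi for zi, gi in zip(z, grad)]
    return z


rng = random.Random(0)
target = [0.5, -1.0, 2.0]  # hypothetical optimum in a 3-dimensional latent space
z_start = [rng.gauss(0.0, 1.0) for _ in target]  # stands in for an encoded molecule
z_opt = latent_gradient_ascent(z_start, target)

print("property before:", surrogate_property(z_start, target))
print("property after: ", surrogate_property(z_opt, target))
```

In practice the property surface is a learned network rather than a closed-form function, so the gradient would come from automatic differentiation, and Bayesian optimization would replace the loop when gradients are unreliable or the property is expensive to evaluate.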

| Paper | Year | Architecture | Key Idea |

|---|---|---|---|

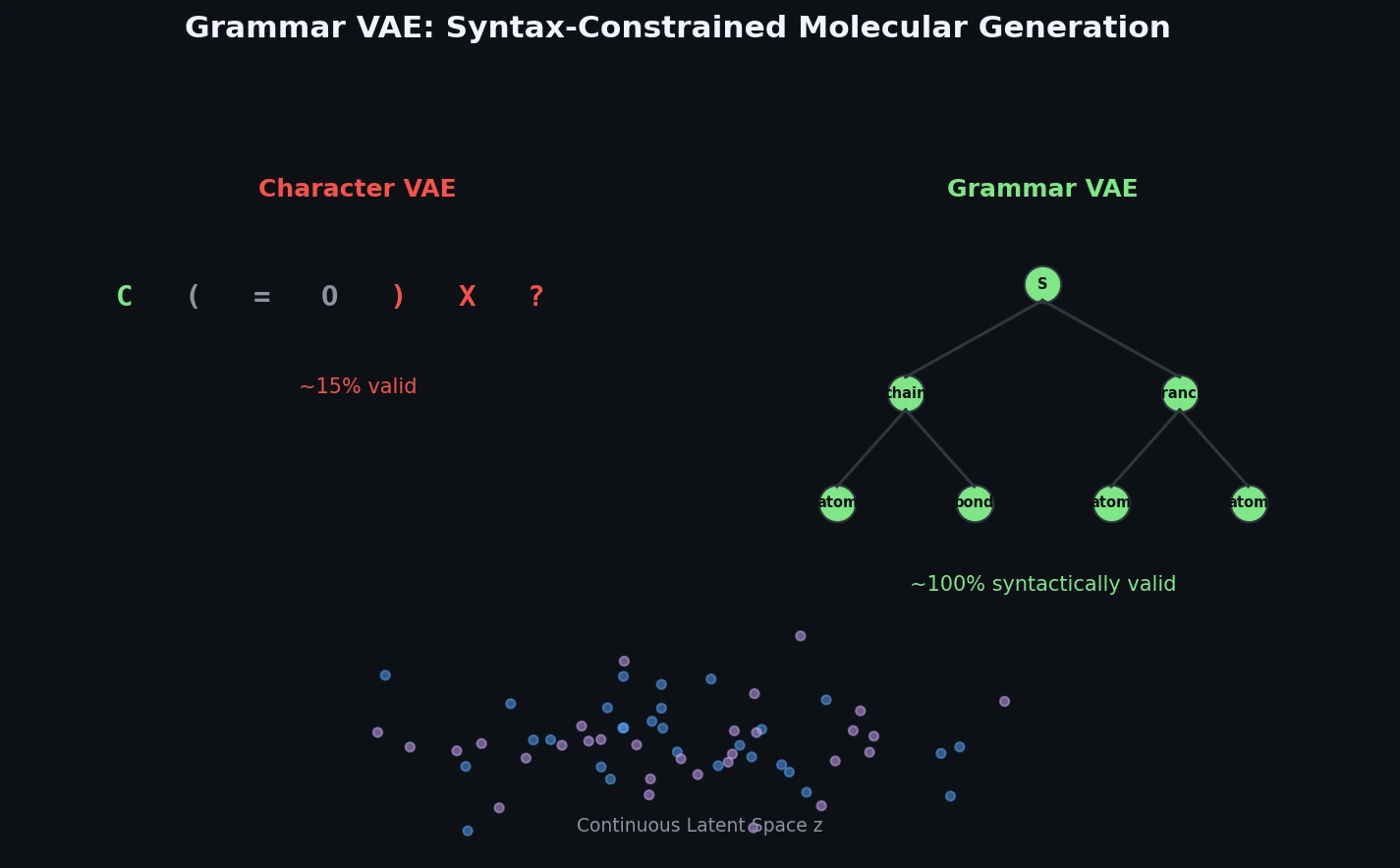

| Grammar VAE | 2017 | VAE + CFG | Context-free grammar decoder ensuring syntactically valid SMILES |

| Automatic Chemical Design | 2018 | VAE | Seminal SMILES VAE enabling Bayesian optimization in latent space |

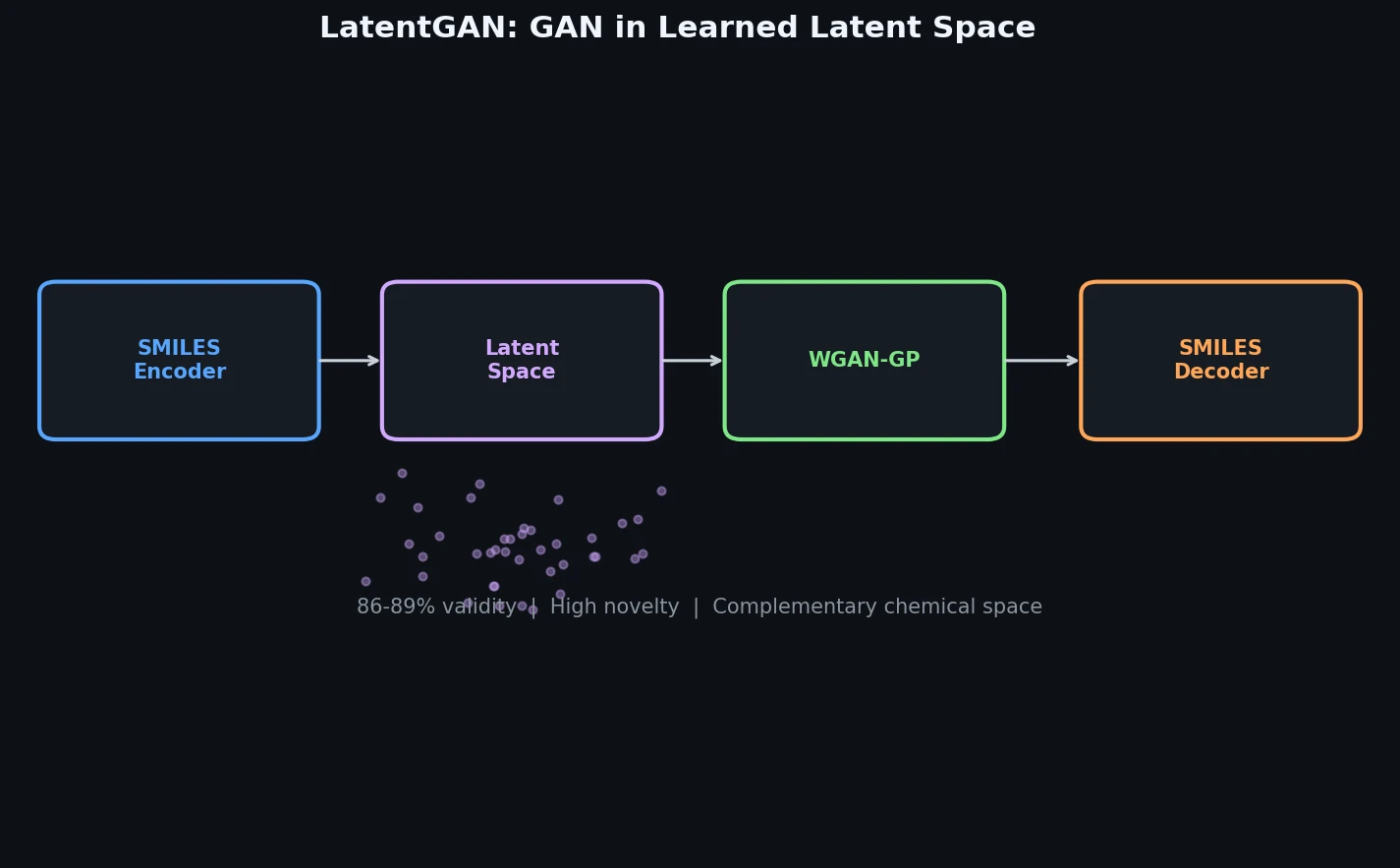

| LatentGAN | 2019 | WGAN + heteroencoder | Wasserstein GAN in heteroencoder latent space, bypassing SMILES syntax |

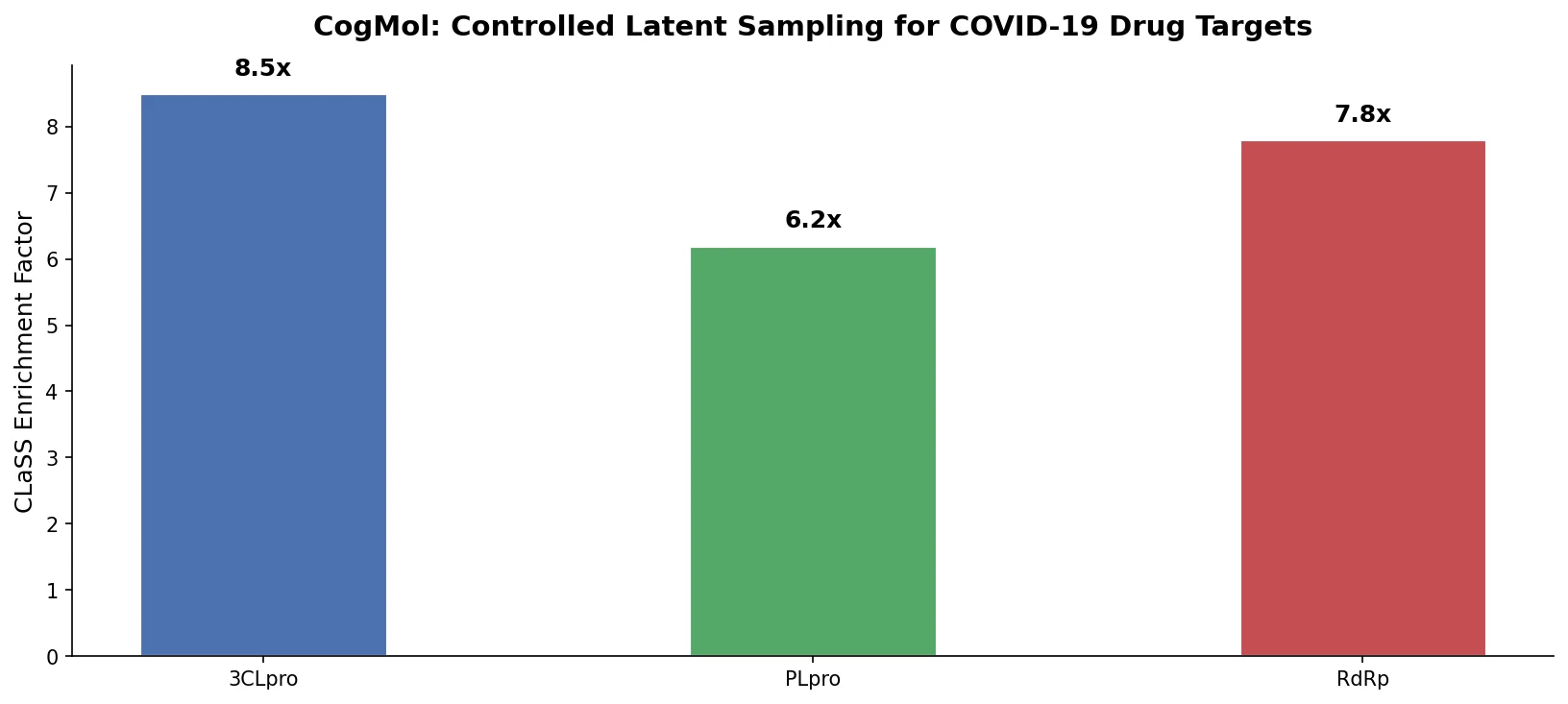

| CogMol | 2020 | VAE + CLaSS | Controlled latent sampling for target-specific design without retraining |

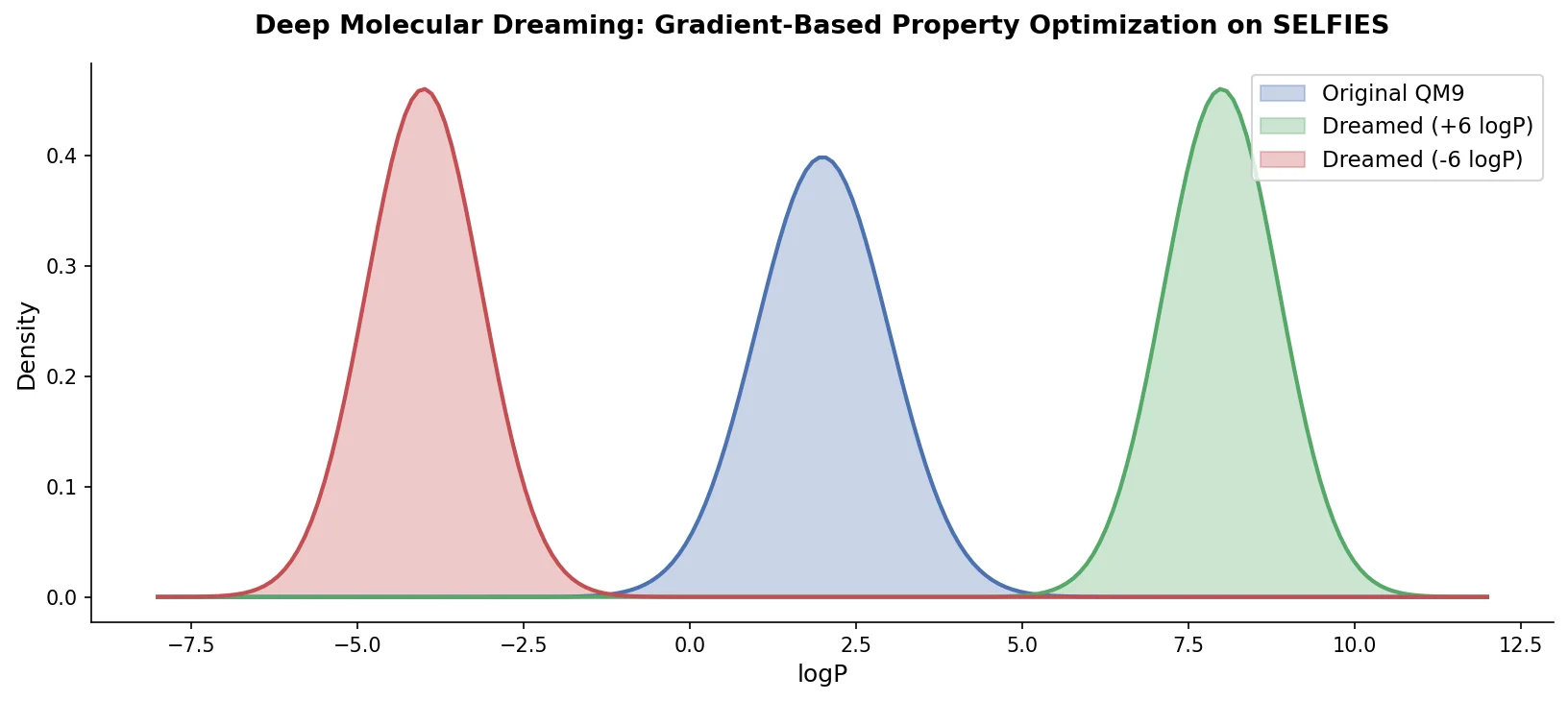

| PASITHEA | 2021 | Gradient dreaming | Deep dreaming on SELFIES via property network inversion |

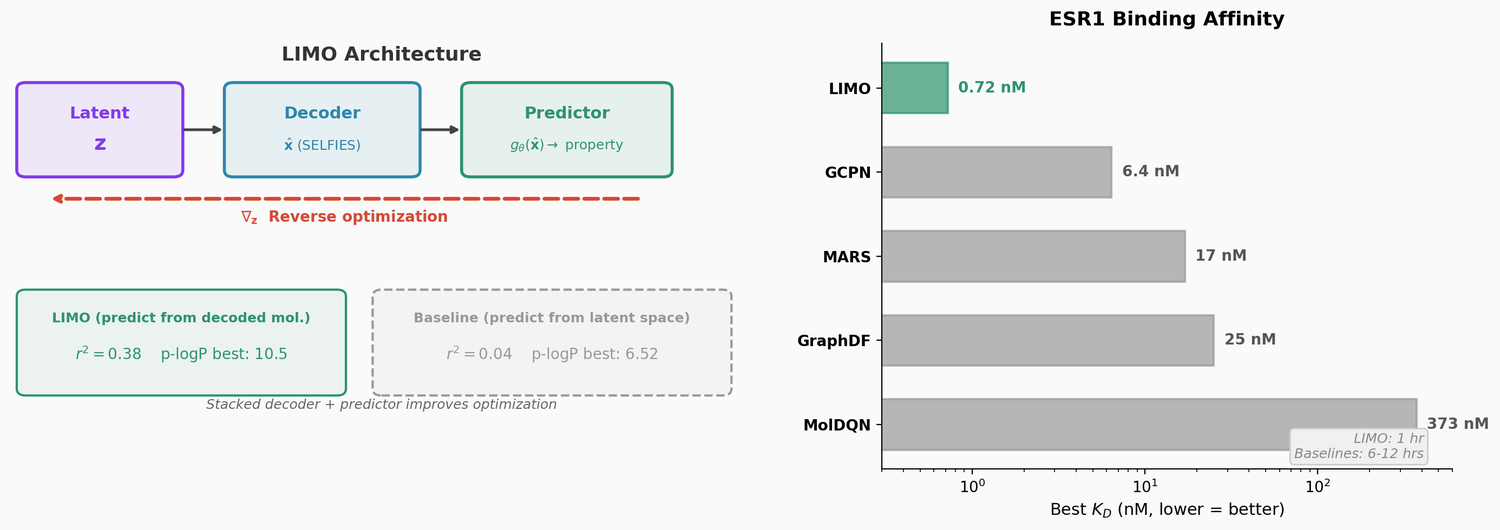

| LIMO | 2022 | VAE + property predictor | Stacked property predictor enabling gradient-based search for high-affinity molecules |