This group covers models that generate molecules autoregressively, emitting a chemical string representation (such as SMILES or SELFIES) one token at a time. The collection ranges from early RNN baselines to large-scale pre-trained transformers and alternative architectures such as state-space models.

| Paper | Year | Architecture | Key Idea |

|---|---|---|---|

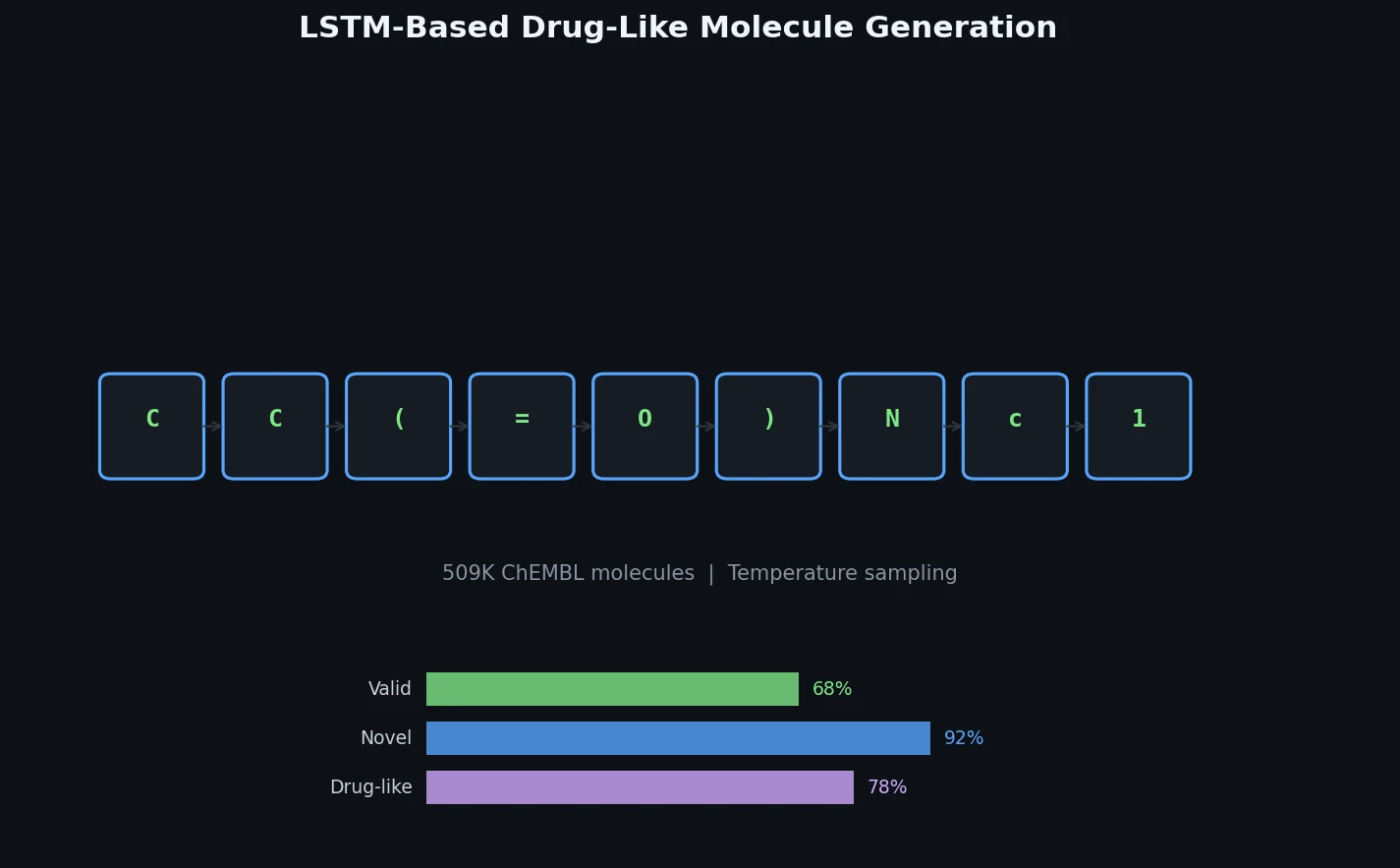

| LSTM Drug-Like Generation | 2017 | LSTM | Character-level SMILES generation trained on 509K ChEMBL molecules |

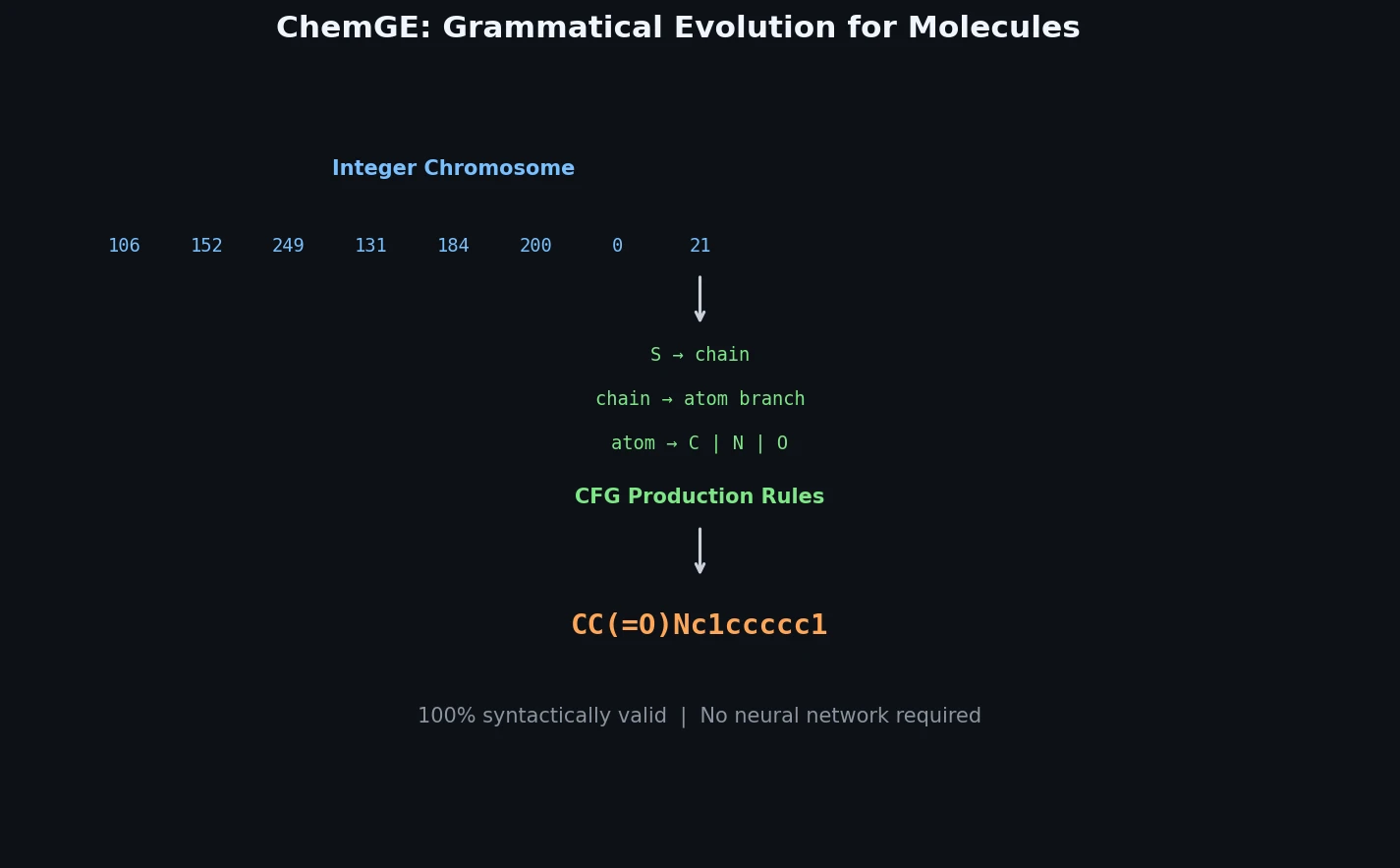

| ChemGE | 2018 | Grammatical evolution | Population-based search over SMILES grammar productions |

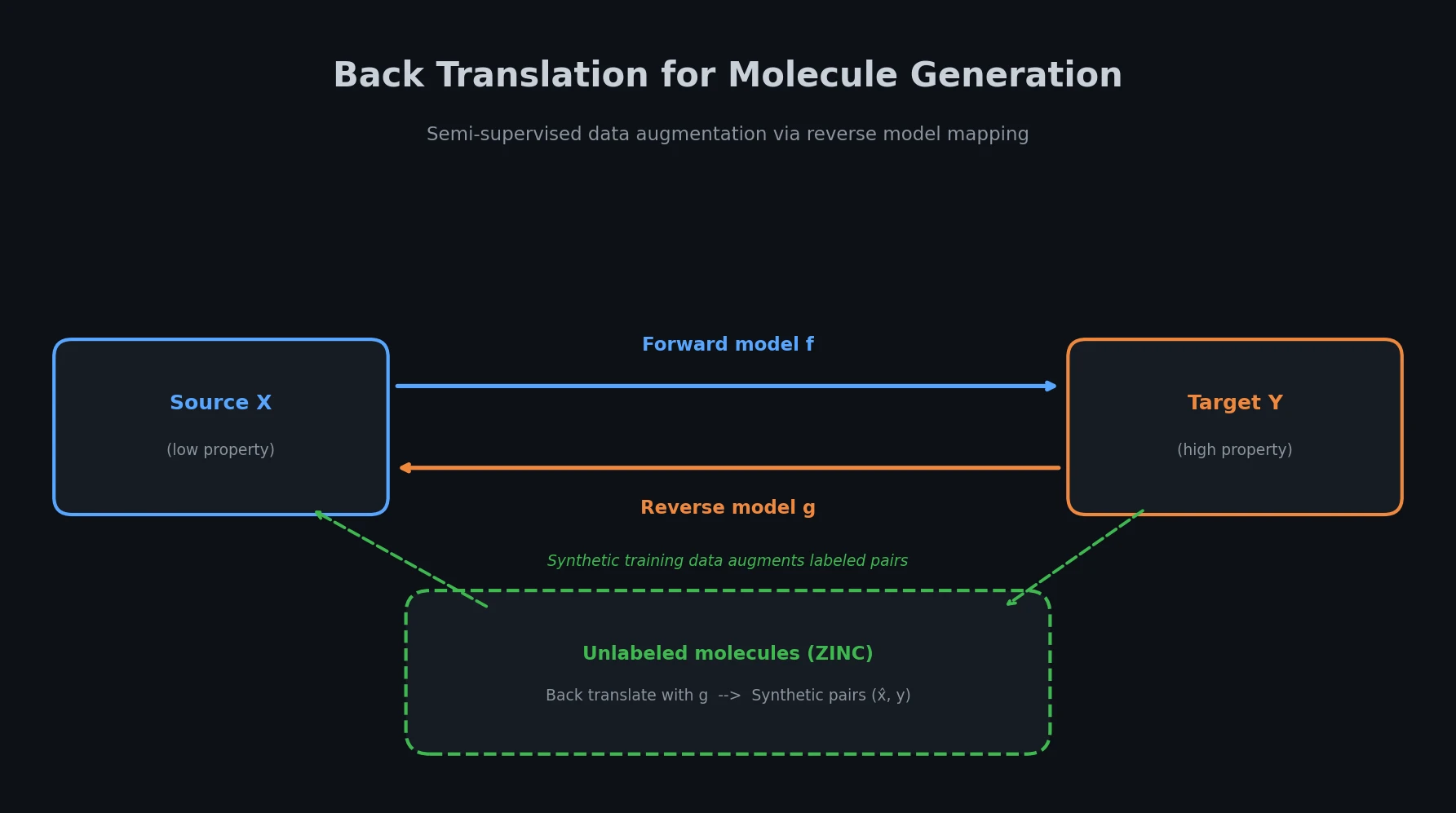

| Back-Translation | 2021 | Transformer | Semi-supervised generation using NLP back-translation with unlabeled ZINC data |

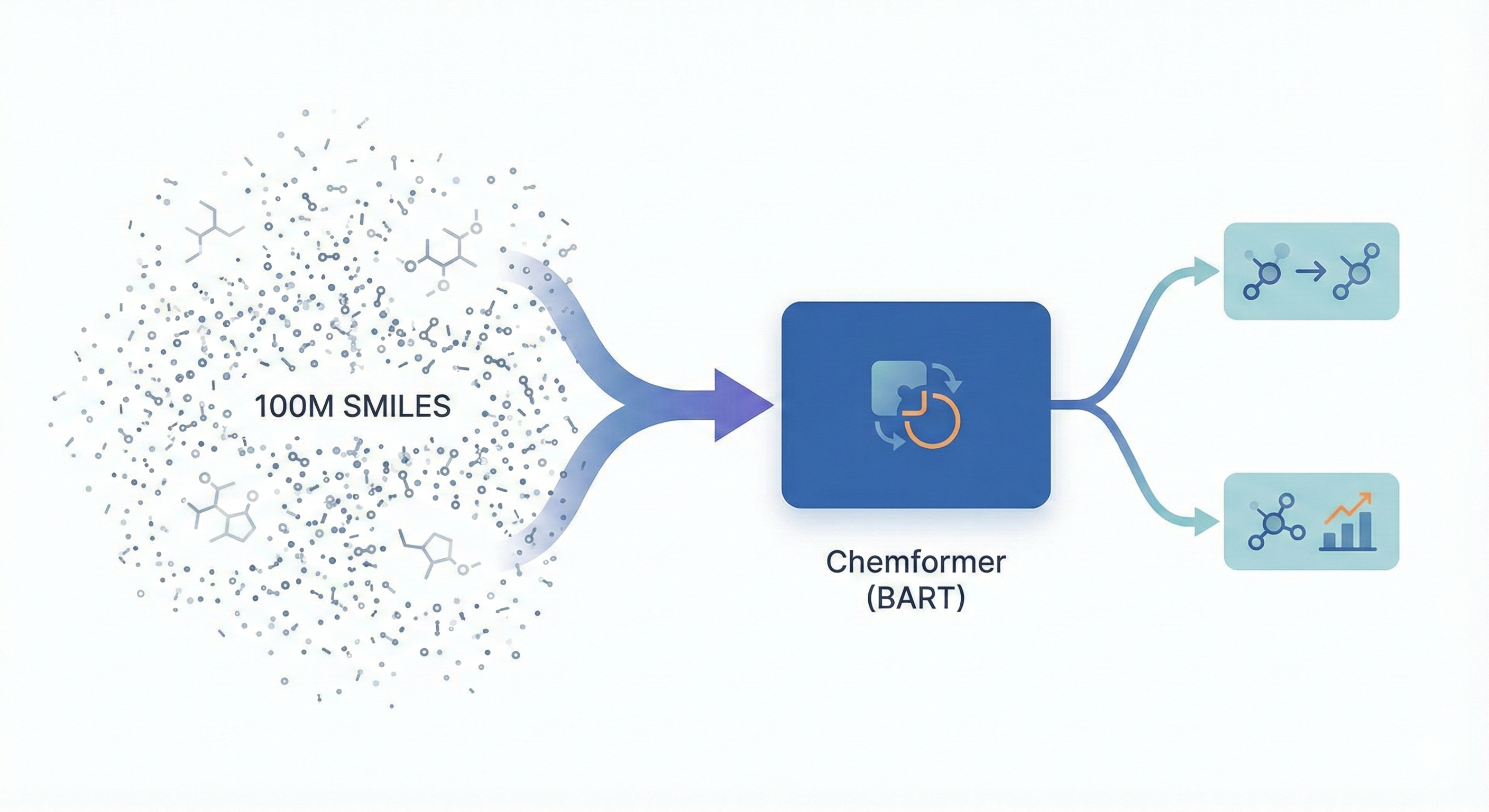

| Chemformer | 2022 | BART | Denoising pre-training on 100M molecules for generation and property prediction |

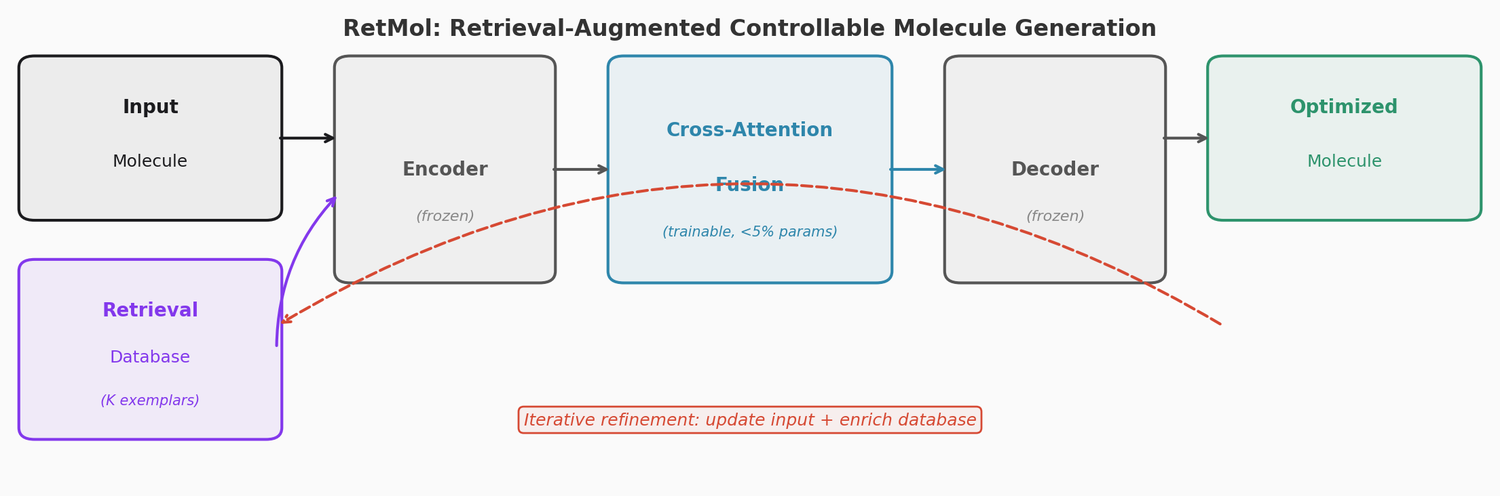

| RetMol | 2023 | Transformer + retrieval | Few-shot property steering via retrieval-augmented generation |

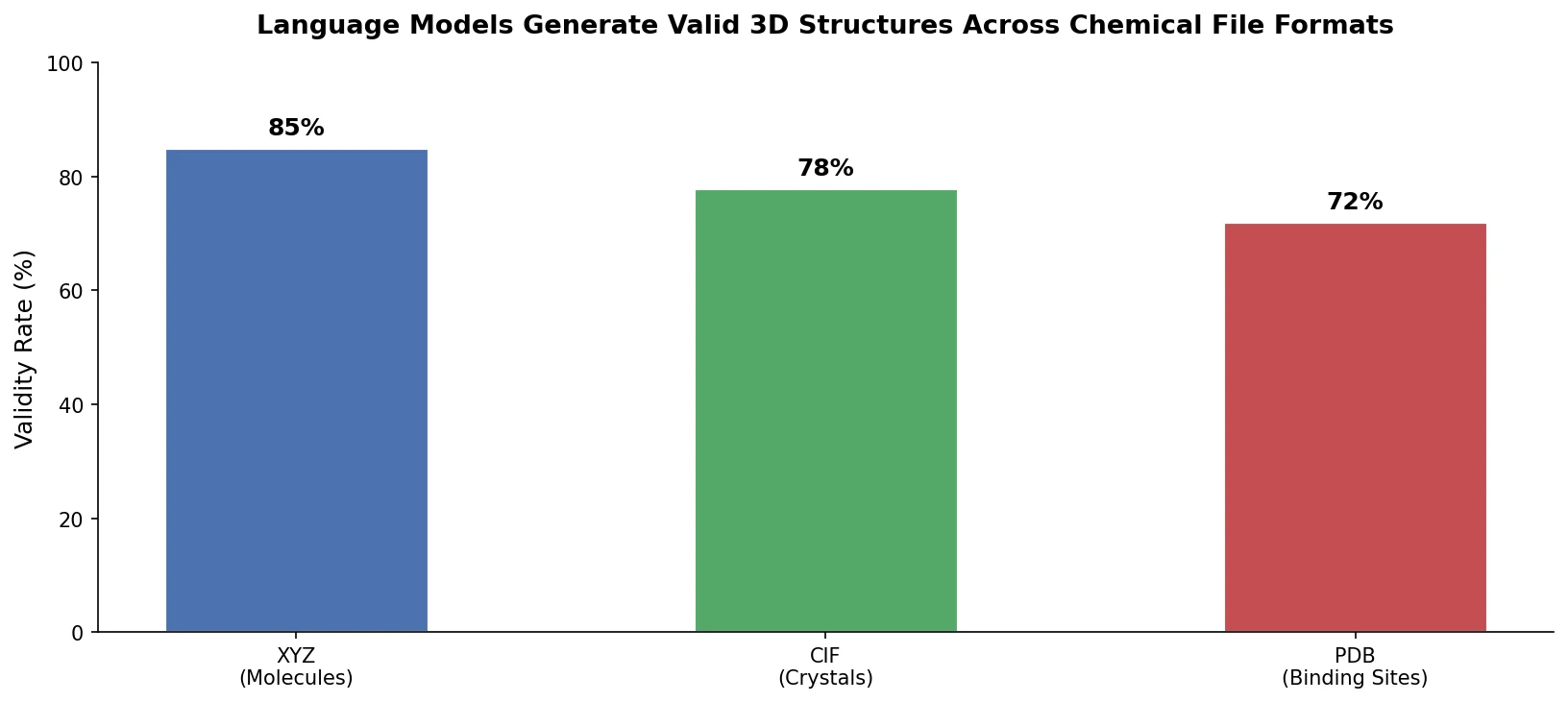

| 3D CLMs | 2023 | Transformer | Generating 3D molecules, crystals, and proteins from XYZ/CIF/PDB strings |

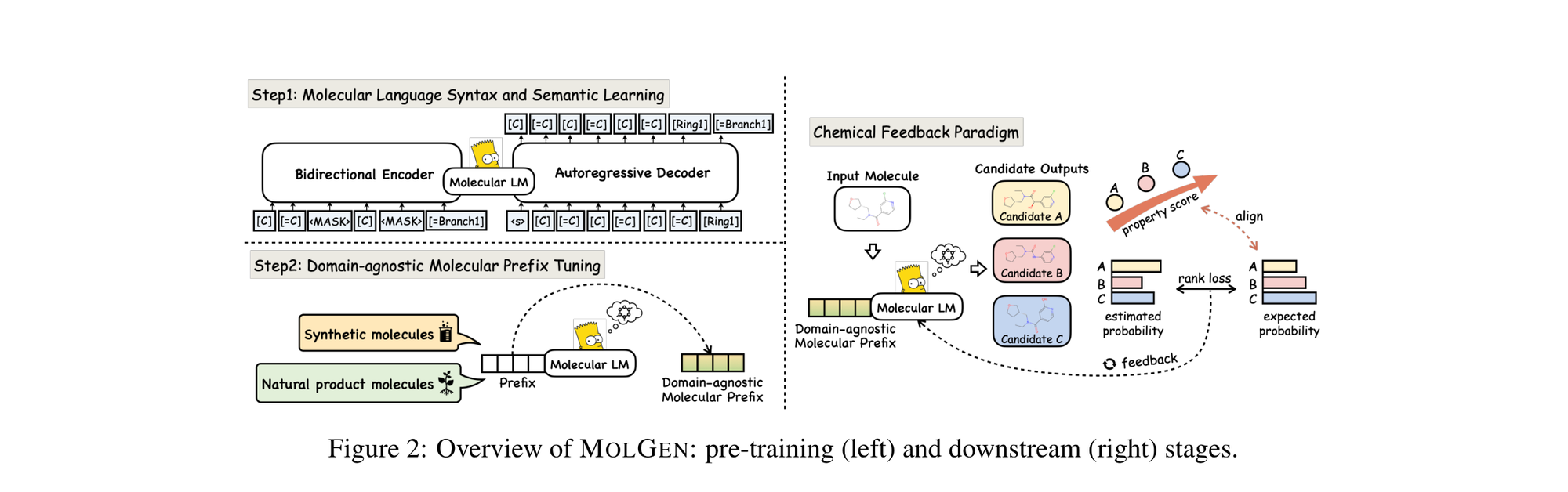

| MolGen | 2024 | Transformer | SELFIES pre-training with chemical feedback alignment |

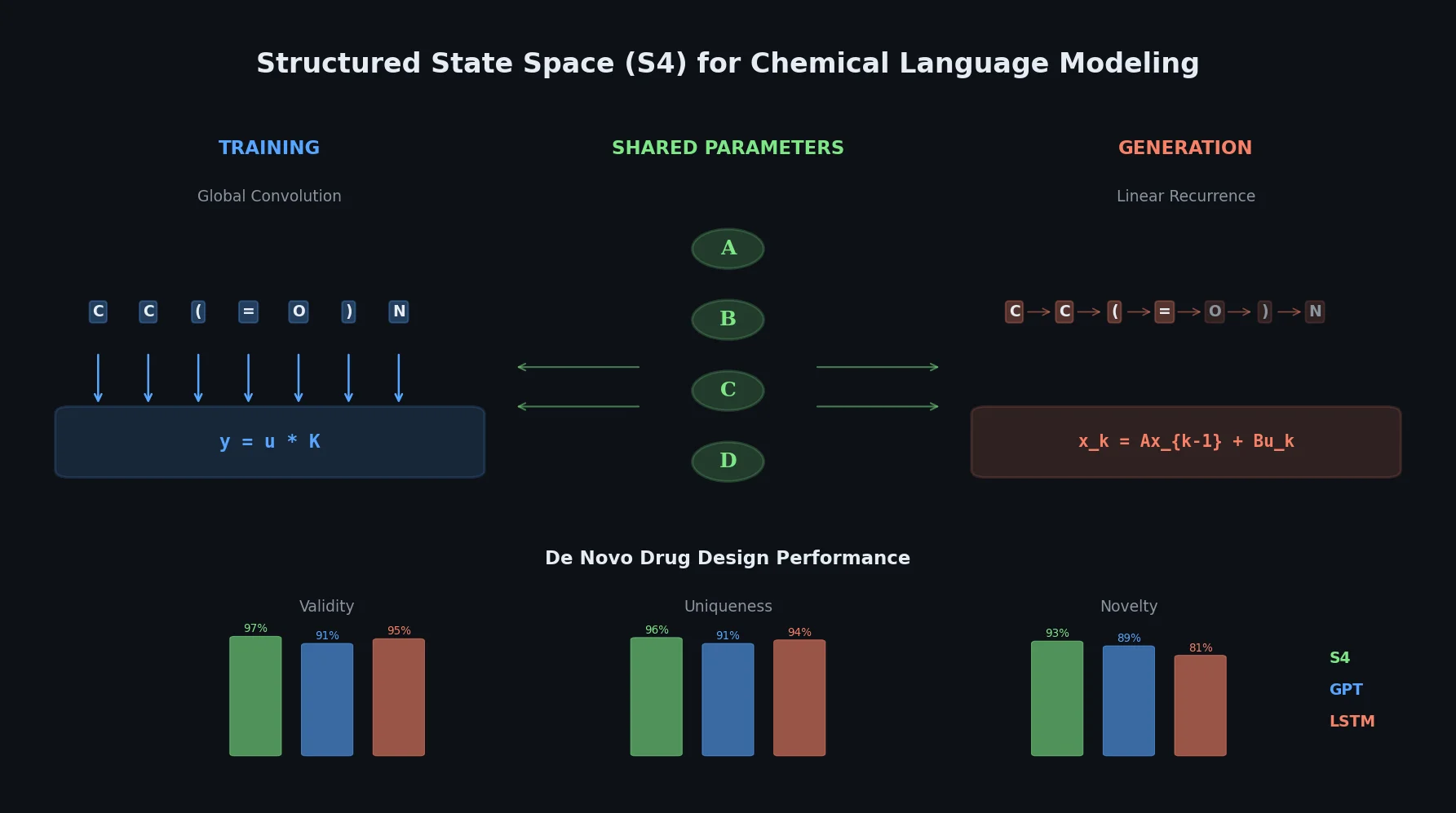

| S4 | 2024 | State-space model | Structured state spaces outperforming LSTMs and GPTs on bioactivity learning |

| GP-MoLFormer | 2025 | Transformer | 1.1B SMILES pre-training with pair-tuning for property optimization |
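
To make the token-by-token generation loop shared by these models concrete, here is a minimal sketch in plain Python. It is not taken from any of the papers above: a bigram count table stands in for the learned next-token distribution that an LSTM, transformer, or state-space model would provide, and the five training strings, the `^`/`$` boundary markers, and the function names are all illustrative assumptions.

```python
import random
from collections import Counter, defaultdict

# Hypothetical start/end markers; chosen because they are not SMILES symbols.
BOS, EOS = "^", "$"

def fit_bigram(smiles_list):
    """Count next-character frequencies.

    A toy stand-in for a learned p(x_t | x_<t): it conditions only on the
    previous character, whereas the models in the table condition on the
    full prefix. Character-level splitting would also break two-letter
    atoms such as Cl or Br; the toy data below avoids them.
    """
    counts = defaultdict(Counter)
    for s in smiles_list:
        seq = [BOS] + list(s) + [EOS]
        for prev, nxt in zip(seq, seq[1:]):
            counts[prev][nxt] += 1
    return counts

def sample(counts, rng, max_len=40):
    """Generate one string autoregressively: sample a token, append, repeat."""
    out, prev = [], BOS
    for _ in range(max_len):
        tokens, weights = zip(*counts[prev].items())
        nxt = rng.choices(tokens, weights=weights, k=1)[0]
        if nxt == EOS:  # the model decided the string is complete
            break
        out.append(nxt)
        prev = nxt
    return "".join(out)

# Tiny illustrative training set (assumption, not data from any paper).
train = ["CCO", "CCN", "CCC", "CC(=O)O", "c1ccccc1"]
model = fit_bigram(train)
rng = random.Random(0)
samples = [sample(model, rng) for _ in range(5)]
```

Strings sampled this way are not guaranteed to be valid SMILES (parentheses and ring-closure digits can be left unbalanced), which is one reason several entries in the table use larger-context models or SELFIES instead.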