Method and Resource Contributions

This is primarily a Method paper with significant Resource contributions.

- Methodological Basis: The paper introduces a training pipeline (“mix-sourced distillation”) and domain-specific reinforcement learning to improve reasoning capabilities in chemical LLMs. It validates the approach through ablation studies across training stages.

- Resource Contribution: The authors constructed ChemFG, a 101-billion-token corpus annotated with “atomized” knowledge about functional groups and reaction centers.

Bridging the Chemical Reasoning Gap

Current chemical LLMs struggle to reason logically for two main reasons:

- Shallow Domain Understanding: Models generally learn molecule-level properties directly, bypassing the intermediate “atomized” characteristics (e.g., functional groups) that ultimately dictate chemical behavior.

- Specialized Reasoning Logic: Chemical logic differs fundamentally from math or code. Distilling reasoning from general teacher models like DeepSeek-R1 frequently fails because the teachers lack the domain intuition required to generate valid chemical rationales.

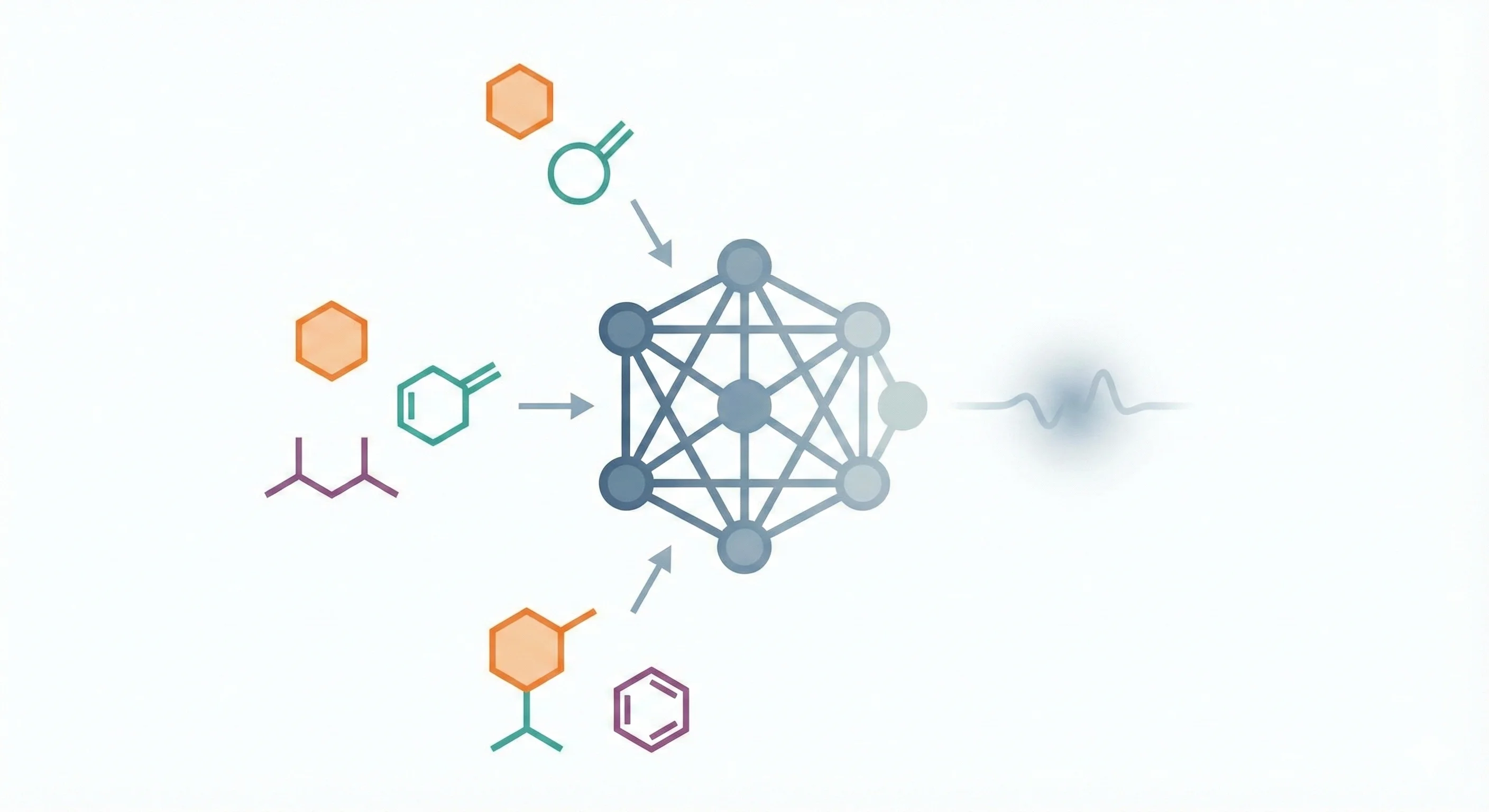

Atomized Knowledge and Mix-Sourced Distillation

The authors introduce three components to close the reasoning gap:

- Atomized Knowledge Enhancement (ChemFG): The authors built a toolkit that uses SMARTS patterns to identify functional groups and track how they change during reactions. One limitation is that the approach relies heavily on 2D cheminformatics abstractions and may miss 3D stereochemical interactions.

- Mix-Sourced Distillation: General models (DeepSeek-R1/o3-mini) are fed “pseudo-reasoning” prompts that include ground truth answers and functional group data. While this forces the teacher to generate high-quality rationales for the student to learn, it introduces a layer of hindsight bias into the generated reasoning chains. During inference, the student model lacks both the pre-calculated functional group metadata and the ground truth, forcing it to bridge an artificially steep generalization gap.

- Chemical Reinforcement Learning: The intermediate model undergoes domain-specific reinforcement learning. The RL details are described in the paper’s Appendix D, with the authors citing the open-source DAPO (Decoupled Clip and Dynamic Sampling Policy Optimization) framework. The optimization relies on rule-based rewards (format adherence and canonicalized SMILES accuracy) across a variety of chemical tasks.
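The asymmetry between what the teacher sees and what the student sees can be made concrete with a small sketch. The prompt wording, function names, and the esterification example below are illustrative assumptions, not the templates used in the paper.

```python
def build_pseudo_reasoning_prompt(
    question: str, ground_truth: str, functional_groups: list[str]
) -> str:
    """Teacher-side prompt: question plus functional-group hints plus the answer.

    The wording is a hypothetical template, not the paper's actual prompt.
    """
    fg_text = ", ".join(functional_groups) if functional_groups else "none identified"
    return (
        "You are given a chemistry question together with its correct answer.\n"
        f"Question: {question}\n"
        f"Functional groups involved: {fg_text}\n"
        f"Correct answer: {ground_truth}\n"
        "Write the step-by-step reasoning that leads to this answer."
    )


def build_student_prompt(question: str) -> str:
    """Inference-side prompt: the student sees only the question."""
    return f"Question: {question}"


question = "What is the product of CCO + CC(=O)O under acid catalysis?"
teacher_prompt = build_pseudo_reasoning_prompt(
    question,
    ground_truth="CCOC(C)=O",  # ethyl acetate
    functional_groups=["hydroxyl", "carboxylic acid"],
)
student_prompt = build_student_prompt(question)
```

The teacher prompt contains both the answer and the functional-group hints, while the student prompt contains neither, which is the source of the hindsight bias discussed above.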

Benchmark Evaluation and Ablation Studies

The model was evaluated on comprehensive chemical benchmarks: SciKnowEval (19 tasks) and ChemEval (36 tasks).

- Baselines: Compared against similarly sized open models (Qwen2.5-14B-Instruct, Qwen3-14B), domain models (ChemLLM, MolInst), and frontier models (GPT-4o, DeepSeek-R1).

- Ablation: Evaluated across training stages (Base → ChemDFM-I → ChemDFM-R) to measure the specific impact of the instruction tuning versus the reasoning stages.

- Qualitative Analysis: The paper includes case studies demonstrating the model’s step-by-step chemical reasoning and its potential for human-AI collaboration (Sections 4.2 and 4.3).

Performance Outcomes and Numerical Limitations

- Performance vs. Baselines: ChemDFM-R outperforms similarly sized open models and domain models on molecule-centric and reaction-centric tasks, and surpasses the much larger DeepSeek-R1 on ChemEval (0.78 vs. 0.58 overall). It shows competitive results relative to o4-mini, though o4-mini leads on SciKnowEval (0.74 vs. 0.70).

- Reasoning Interactivity: The model generates readable rationales that allow users to catch structural errors or identify reaction mechanisms accurately. Section 4.3 of the paper demonstrates human-AI collaboration scenarios.

- Quantitative Limitations: The model struggles with tasks involving numerical prediction and calculation (e.g., yield extraction, molecular property calculation). The paper notes that all molecule-centric and reaction-centric tasks where ChemDFM-R falls short of Qwen2.5-14B-Instruct involve numerical reasoning.

Reproducibility Details

Data

The training data is constructed in three phases:

1. Domain Pre-training (ChemFG):

- Size: 101 billion tokens

- Composition:

- 12M literature documents (79B tokens)

- 30M molecules from PubChem/PubChemQC

- 7M reactions from USPTO-FULL

- Augmentation: SMILES augmentation (10x) using R-SMILES

- Atomized Features: Annotated with a custom “Functional Group Identification Toolkit” that identifies 241 functional group types and tracks changes in reaction centers. Note: The data and toolkit are only partially available. The toolkit (ChemFG-Tool) is open-sourced on GitHub, but the 101-billion-token ChemFG dataset itself has not been publicly released.

2. Instruction Tuning:

- Sources: Molecule-centric (PubChem, MoleculeNet), Reaction-centric (USPTO), and Knowledge-centric (Exams, Literature QA) tasks

- Mixing: Mixed with general instruction data in a 1:2 ratio

3. Distillation Dataset:

- Sources:

- ~70% ChemDFM-R instruction data

- ~22% constructed pseudo-reasoning (functional group descriptions)

- ~8% teacher rationales (from DeepSeek-R1/o3-mini)

- Mixing: Mixed with general data (including AM-Deepseek-R1-Distill-1.4M) in a 1:2 ratio

Algorithms

Functional Group Identification:

- Extends the thermo library’s SMARTS list

- For reactions, identifies “reacting functional groups” by finding reactants containing atoms involved in bond changes (reaction centers) that do not appear in the product
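One possible reading of the reaction rule above is sketched below: a reactant functional group counts as "reacting" if it overlaps the reaction center and no identical group survives in the product. The `GroupMatch` structure, the atom-map numbering, and the hand-written matches are assumptions for illustration. In practice the matches would come from SMARTS matching, which is not shown here, and this is not the ChemFG-Tool implementation.

```python
from typing import FrozenSet, List, NamedTuple, Set


class GroupMatch(NamedTuple):
    """A functional-group match (hypothetical structure)."""
    name: str
    atoms: FrozenSet[int]  # atom-map numbers covered by the match


def reacting_groups(
    reactant_groups: List[GroupMatch],
    product_groups: List[GroupMatch],
    reaction_center: Set[int],
) -> List[GroupMatch]:
    """Keep reactant groups that touch the reaction center and vanish in the product."""
    surviving = set(product_groups)
    return [
        g for g in reactant_groups
        if g.atoms & reaction_center and g not in surviving
    ]


# Esterification with atom maps: ethanol C1-C2-O3, acetic acid C4-C5(=O6)-O7.
reactant_matches = [
    GroupMatch("hydroxyl", frozenset({3})),
    GroupMatch("carboxylic acid", frozenset({5, 6, 7})),
    GroupMatch("methyl", frozenset({4})),
]
product_matches = [
    GroupMatch("ester", frozenset({3, 5, 6})),
    GroupMatch("methyl", frozenset({4})),
]
center = {3, 5, 7}  # atoms whose bonds change (O3-C5 formed, C5-O7 broken)

result = reacting_groups(reactant_matches, product_matches, center)
```

Here the hydroxyl and carboxylic acid groups are flagged as reacting, while the methyl group is ignored because it neither touches the reaction center nor disappears.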

Mix-Sourced Distillation:

- Teacher models (DeepSeek-R1, o3-mini) are prompted with Question + Ground Truth + Functional Group Info to generate high-quality “Thoughts”

- These rationales are distilled into the student model using a supervised fine-tuning loss across target tokens $y_t$: $$ \mathcal{L}_{\text{SFT}} = - \sum_{t=1}^T \log P_\theta(y_t \mid x, y_{<t}) $$
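To make the loss concrete, the sketch below evaluates the negative log-likelihood over target tokens only. The per-token probabilities are made-up values standing in for $P_\theta(y_t \mid x, y_{<t})$. In a real training loop they would come from the student model, and the prompt tokens $x$ would be masked out of the sum.

```python
import math
from typing import Sequence


def sft_loss(target_token_probs: Sequence[float]) -> float:
    """Sum of -log P(y_t | x, y_<t) over the target tokens y_1..y_T."""
    return -sum(math.log(p) for p in target_token_probs)


# Hypothetical probabilities the student assigns to three target tokens.
probs = [0.9, 0.8, 0.95]
loss = sft_loss(probs)
```

Because the log of a product is the sum of the logs, this loss equals the negative log of the joint probability of the whole target sequence, so a perfectly predicted sequence has zero loss.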

Reinforcement Learning:

- Algorithm: The paper cites DAPO (Decoupled Clip and Dynamic Sampling Policy Optimization) as the RL framework; full details are in Appendix D of the paper. Note: While the underlying DAPO framework is open-source, the specific chemistry-oriented RL pipeline and environment used for ChemDFM-R have not been publicly released.

- Hyperparameters (from paper appendix): Learning rate 5e-7, rollout batch size 512, training batch size 128

- Rewards: The reward system applies rule-based constraints focusing on physical form and chemical validity. The total reward $R(y, y^*)$ for a generated response $y$ given target $y^*$ combines a format adherence reward ($R_{\text{format}}$) and an accuracy reward ($R_{\text{acc}}$) evaluated on canonicalized SMILES: $$ R(y, y^*) = R_{\text{format}}(y) + R_{\text{acc}}(\text{canonicalize}(y), \text{canonicalize}(y^*)) $$
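A rule-based reward of the form $R_{\text{format}} + R_{\text{acc}}$ might look like the sketch below. The `<think>`/`<answer>` tag format, the reward magnitudes of 1.0, and the whitespace-only `placeholder_canonicalize` are all assumptions made for illustration, since the paper's actual reward code is not public.

```python
import re
from typing import Callable

# Hypothetical response format: reasoning in <think>, final SMILES in <answer>.
_RESPONSE_RE = re.compile(
    r"^<think>.*?</think>\s*<answer>(.*?)</answer>\s*$", re.DOTALL
)


def placeholder_canonicalize(smiles: str) -> str:
    """Stand-in only: strips whitespace. Not a real SMILES canonicalizer."""
    return smiles.strip()


def total_reward(
    response: str,
    target_smiles: str,
    canonicalize: Callable[[str], str] = placeholder_canonicalize,
) -> float:
    """Return R_format + R_acc with illustrative magnitudes of 1.0 each."""
    match = _RESPONSE_RE.match(response.strip())
    if match is None:
        return 0.0  # malformed output: no format reward, no answer to score
    r_format = 1.0
    predicted = canonicalize(match.group(1))
    r_acc = 1.0 if predicted == canonicalize(target_smiles) else 0.0
    return r_format + r_acc


good = total_reward("<think>ester forms</think><answer> CCOC(C)=O </answer>", "CCOC(C)=O")
wrong = total_reward("<think>guess</think><answer>CCO</answer>", "CCOC(C)=O")
malformed = total_reward("CCOC(C)=O", "CCOC(C)=O")
```

The `canonicalize` argument is injectable so that a real canonicalizer can replace the placeholder, for example RDKit's `Chem.MolToSmiles(Chem.MolFromSmiles(s))`. Without one, equivalent SMILES strings written in different atom orders would be scored as mismatches.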

Models

- Base Model: Qwen2.5-14B

- ChemDFM-I: Result of instruction tuning the domain-pretrained model for 2 epochs

- ChemDFM-R: Result of applying mix-sourced distillation (1 epoch) followed by RL on ChemDFM-I. Note: Model weights are publicly available on Hugging Face.

Hardware

Hardware and training time details are described in the paper’s appendices, which are not available in the extracted text. The details below are reported from the paper but could not be independently cross-verified against the main text:

- Compute: NVIDIA A800 Tensor Core GPUs

- Training Time: 30,840 GPU hours total (Domain Pretraining: 24,728 hours; Instruction Tuning: 3,785 hours; Distillation: 2,059 hours; Reinforcement Learning: 268 hours)
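As a quick consistency check on the reported figures, the per-stage GPU hours can be summed and converted to shares of the total. The dictionary below simply restates the numbers given above, and the variable names are illustrative.

```python
# GPU hours per training stage, as reported above.
gpu_hours = {
    "Domain Pretraining": 24_728,
    "Instruction Tuning": 3_785,
    "Distillation": 2_059,
    "Reinforcement Learning": 268,
}

total_hours = sum(gpu_hours.values())

# Percentage of the total budget spent in each stage, rounded to one decimal.
shares = {
    stage: round(100 * hours / total_hours, 1)
    for stage, hours in gpu_hours.items()
}

for stage, pct in shares.items():
    print(f"{stage}: {gpu_hours[stage]:,} h ({pct}%)")
print(f"Total: {total_hours:,} h")
```

The stage figures add up to the stated 30,840 hours. Domain pretraining accounts for roughly four fifths of the compute, while the RL stage takes under 1%.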

Evaluation

Benchmarks:

- SciKnowEval: 19 tasks (text-centric, molecule-centric, reaction-centric)

- ChemEval: 36 tasks, categorized similarly

Key Metrics: Accuracy, F1 Score, BLEU score (with PRS normalization for ChemEval)

| Model | SciKnowEval (all) | ChemEval* (all) | Notes |
|---|---|---|---|
| Qwen2.5-14B-Instruct | 0.61 | 0.57 | General-domain baseline |
| ChemDFM-I | 0.69 | 0.72 | After domain pretraining + instruction tuning |
| ChemDFM-R | 0.70 | 0.78 | After distillation + RL |
| DeepSeek-R1 | 0.62 | 0.58 | General-domain reasoning model |
| o4-mini | 0.74 | 0.69 | Frontier reasoning model |
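The stage-wise gains implied by the table can be read off with a few subtractions. The sketch below restates the table's overall scores. Note that Qwen2.5-14B-Instruct is used only as a general-domain reference point, since the table does not report scores for the untuned base checkpoint.

```python
# Overall scores copied from the table above.
scores = {
    "Qwen2.5-14B-Instruct": {"SciKnowEval": 0.61, "ChemEval": 0.57},
    "ChemDFM-I": {"SciKnowEval": 0.69, "ChemEval": 0.72},
    "ChemDFM-R": {"SciKnowEval": 0.70, "ChemEval": 0.78},
}


def delta(before: str, after: str, benchmark: str) -> float:
    """Score difference between two models, rounded to the table's precision."""
    return round(scores[after][benchmark] - scores[before][benchmark], 2)


# Contribution of the reasoning stages (distillation + RL) over ChemDFM-I.
reasoning_gain = {b: delta("ChemDFM-I", "ChemDFM-R", b) for b in ("SciKnowEval", "ChemEval")}

# Gap between the general-domain reference and ChemDFM-I.
domain_gain = {b: delta("Qwen2.5-14B-Instruct", "ChemDFM-I", b) for b in ("SciKnowEval", "ChemEval")}
```

On these numbers, the reasoning stages add 0.01 on SciKnowEval and 0.06 on ChemEval, so most of the improvement over the general-domain reference is already present in ChemDFM-I.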

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| ChemDFM-R-14B | Model | AGPL-3.0 | Final reasoning model weights on Hugging Face |
| ChemFG-Tool | Code | Apache-2.0 | Functional group identification toolkit (241 groups) |

Missing components: The 101B-token ChemFG pretraining dataset is not publicly released. The chemistry-oriented RL pipeline and training code are not open-sourced. The instruction tuning and distillation datasets are not available.

Paper Information

Citation: Zhao, Z., Chen, B., Wan, Z., Chen, L., Lin, X., Yu, S., Zhang, S., Ma, D., Zhu, Z., Zhang, D., Wang, H., Dai, Z., Wen, L., Chen, X., & Yu, K. (2025). ChemDFM-R: A Chemical Reasoning LLM Enhanced with Atomized Chemical Knowledge. arXiv preprint arXiv:2507.21990. https://doi.org/10.48550/arXiv.2507.21990

Publication: arXiv 2025

@misc{zhao2025chemdfmr,
  title={ChemDFM-R: A Chemical Reasoning LLM Enhanced with Atomized Chemical Knowledge},
  author={Zihan Zhao and Bo Chen and Ziping Wan and Lu Chen and Xuanze Lin and Shiyang Yu and Situo Zhang and Da Ma and Zichen Zhu and Danyang Zhang and Huayang Wang and Zhongyang Dai and Liyang Wen and Xin Chen and Kai Yu},
  year={2025},
  eprint={2507.21990},
  archivePrefix={arXiv},
  primaryClass={cs.CE},
  url={https://arxiv.org/abs/2507.21990}
}