Taxonomy and Paper Contributions

This is primarily a Method paper ($\Psi_{\text{Method}}$), with a significant Resource component ($\Psi_{\text{Resource}}$).

It is a methodological investigation because it systematically evaluates a specific architecture (Transformers/RoBERTa) against established state-of-the-art (SOTA) baselines such as directed Message Passing Neural Networks (D-MPNNs) to determine “how well does this work?” in the chemical domain. It ablates pretraining dataset size, tokenization, and input representation.

It is also a resource paper as it introduces “PubChem-77M,” a curated dataset of 77 million SMILES strings designed to facilitate large-scale self-supervised pretraining for the community.

Overcoming Data Scarcity in Property Prediction

The primary motivation is data scarcity in molecular property prediction. Graph Neural Networks (GNNs) achieve strong performance on property prediction tasks when provided with sufficient labeled data. Generating these labels requires costly and time-consuming laboratory testing, leading to severe data scarcity in specialized chemical domains.

Massive quantities of unlabeled chemical structure data exist in the form of SMILES strings. Inspired by the success of Transformers in NLP, where self-supervised pretraining on large corpora yields strong transfer learning, the authors aim to use these unlabeled datasets to learn effective molecular representations. The authors also point to the mature Transformer software ecosystem (HuggingFace), which they argue offers practical efficiency advantages over GNN tooling.

Pretraining Scaling Laws and Novelty

Previous works applied Transformers to SMILES strings. This paper advances the field by systematically evaluating scaling laws and architectural components for this domain. Specifically:

- Scaling Analysis: It explicitly tests how pretraining dataset size (100K to 10M) impacts downstream performance.

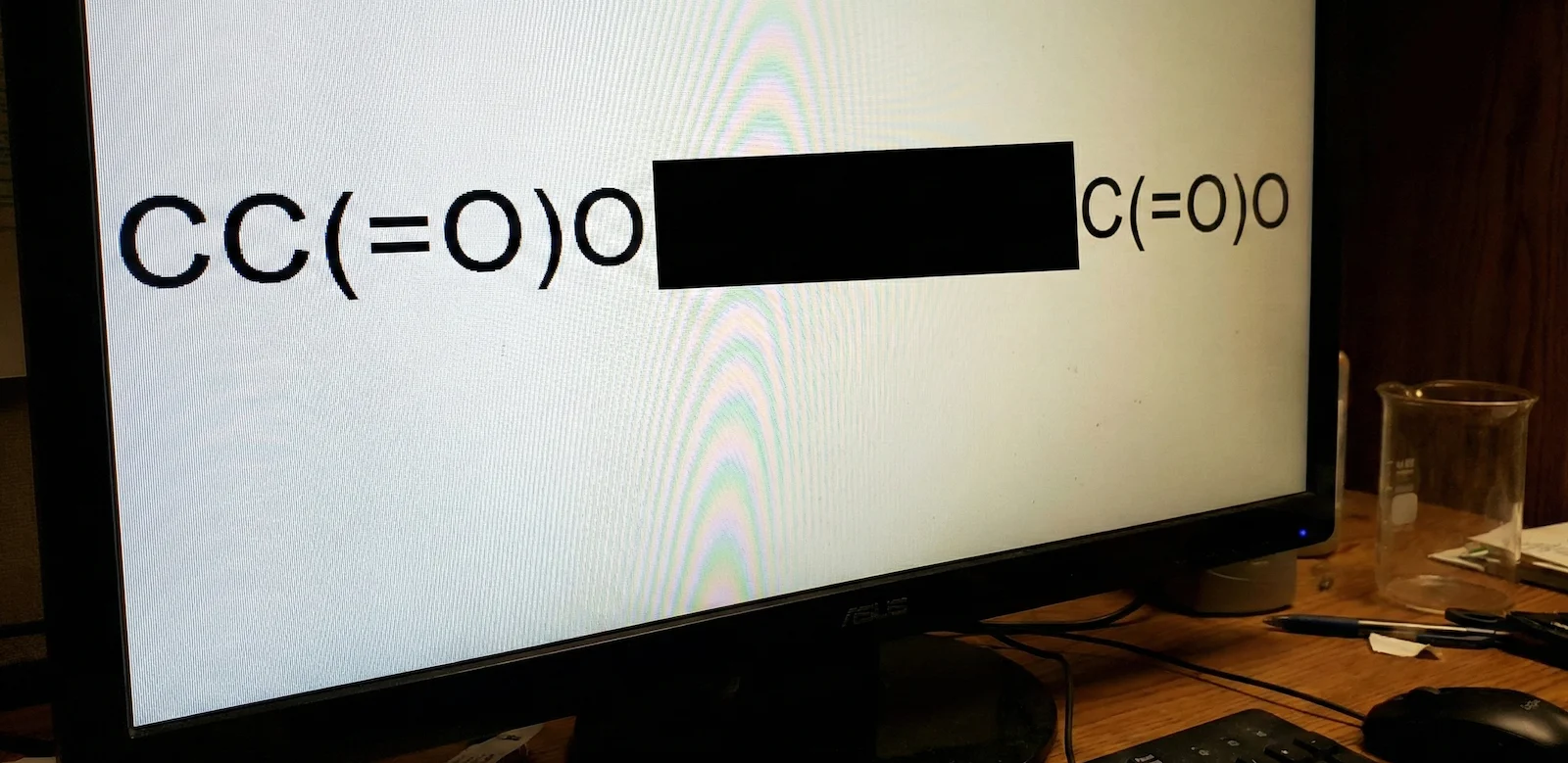

- Tokenizer Comparison: It compares standard NLP Byte-Pair Encoding (BPE) against a chemically-aware “SmilesTokenizer”.

- Representation Comparison: It evaluates whether the SELFIES string representation, designed so that every string decodes to a valid molecule, offers advantages over standard SMILES in a Transformer context.

Experimental Setup: Pretraining and Finetuning

The authors trained ChemBERTa (based on RoBERTa) using Masked Language Modeling (MLM) on subsets of the PubChem dataset. The training objective minimizes the cross-entropy loss over a corrupted input in which a subset of token positions, denoted by $\mathcal{M}$, is masked:

$$ \mathcal{L}_{\text{MLM}} = - \frac{1}{|\mathcal{M}|} \sum_{i \in \mathcal{M}} \log P(x_i \mid x_{\setminus \mathcal{M}}; \theta) $$

where $x_i$ is the original token at masked position $i$, $x_{\setminus \mathcal{M}}$ is the corrupted SMILES sequence (the input with the positions in $\mathcal{M}$ masked), and $\theta$ denotes the network parameters.
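
To make the objective concrete, here is a minimal pure-Python sketch of one MLM training example. The summary does not specify the corruption scheme, so this assumes the standard BERT/RoBERTa recipe (select about 15% of positions as $\mathcal{M}$; replace 80% of them with a mask token, 10% with a random token, and leave 10% unchanged), which is also the default behavior of HuggingFace's `DataCollatorForLanguageModeling`. `MASK_ID`, `VOCAB_SIZE`, `corrupt`, and `mlm_loss` are hypothetical names for illustration, not ChemBERTa code.

```python
import math
import random
from typing import List, Sequence, Tuple

MASK_ID = 4          # hypothetical id of the <mask> token
VOCAB_SIZE = 52_000  # hypothetical vocabulary size (matches the BPE vocab size)


def corrupt(tokens: Sequence[int], rng: random.Random,
            mask_prob: float = 0.15) -> Tuple[List[int], List[int]]:
    """Pick ~mask_prob of positions as the set M and corrupt them.

    Of the selected positions, 80% become <mask>, 10% a random token,
    and 10% keep the original token. Returns (corrupted_ids, M).
    """
    corrupted = list(tokens)
    masked: List[int] = []
    for i in range(len(tokens)):
        if rng.random() < mask_prob:
            masked.append(i)
            r = rng.random()
            if r < 0.8:
                corrupted[i] = MASK_ID
            elif r < 0.9:
                corrupted[i] = rng.randrange(VOCAB_SIZE)
            # else: leave the original token in place
    return corrupted, masked


def mlm_loss(logits: Sequence[Sequence[float]], targets: Sequence[int],
             masked: Sequence[int]) -> float:
    """L_MLM: mean of -log P(x_i | corrupted context) over positions i in M."""
    if not masked:
        return 0.0
    total = 0.0
    for i in masked:
        row = logits[i]
        m = max(row)
        log_z = m + math.log(sum(math.exp(v - m) for v in row))  # stable log-sum-exp
        total += log_z - row[targets[i]]  # equals -log softmax(row)[x_i]
    return total / len(masked)
```

A useful sanity check: with uniform logits over a vocabulary of size $V$, every masked position contributes $\log V$, so the loss is exactly $\log V$, roughly what an untrained model should report.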

- Pretraining: Models were pretrained on dataset sizes of 100K, 250K, 1M, and 10M compounds.

- Baselines: Performance was compared against D-MPNN (Graph Neural Network), Random Forest (RF), and SVM using 2048-bit Morgan Fingerprints.

- Downstream Tasks: Finetuning was performed separately on four comparatively small MoleculeNet classification tasks: BBBP (blood-brain barrier penetration), ClinTox (clinical toxicity), HIV, and Tox21 (p53 stress-response). This poses a transfer-learning challenge: a model pretrained on up to 10 million molecules must adapt to labeled datasets of roughly 1.5K to 41K examples.

- Ablations:

  - Tokenization: BPE vs. SmilesTokenizer on the 1M dataset, evaluated on Tox21.

  - Input: SMILES vs. SELFIES strings on the Tox21 task.

Results vs. Graph Neural Network Baselines

The main comparison between ChemBERTa (pretrained on 10M compounds) and Chemprop baselines on MoleculeNet tasks is summarized below (Table 1 from the paper):

| Model | BBBP ROC-AUC | BBBP PRC-AUC | ClinTox ROC-AUC | ClinTox PRC-AUC | HIV ROC-AUC | HIV PRC-AUC | Tox21 ROC-AUC | Tox21 PRC-AUC |
|---|---|---|---|---|---|---|---|---|
| ChemBERTa 10M | 0.643 | 0.620 | 0.733 | 0.975 | 0.622 | 0.119 | 0.728 | 0.207 |
| D-MPNN | 0.708 | 0.697 | 0.906 | 0.993 | 0.752 | 0.152 | 0.688 | 0.429 |
| RF | 0.681 | 0.692 | 0.693 | 0.968 | 0.780 | 0.383 | 0.724 | 0.335 |
| SVM | 0.702 | 0.724 | 0.833 | 0.986 | 0.763 | 0.364 | 0.708 | 0.345 |

- Scaling Improvements & Training Dynamics: Downstream performance improves as the pretraining set grows. Increasing pretraining data from 100K to 10M compounds improved ROC-AUC by 0.110 and PRC-AUC by 0.059 on average across BBBP, ClinTox, and Tox21 (HIV was omitted from the scaling study due to resource constraints). Notably, the authors halted pretraining on the 10M subset after just 3 epochs due to overfitting, which suggests that a simple 15% token-masking objective may not pose a sufficiently challenging pretraining task at this scale.

- Performance Limits vs. GNNs: ChemBERTa generally performs below the D-MPNN baseline, trailing it on 7 of the 8 reported metrics, and it also trails the fingerprint-based RF and SVM baselines on most metrics. The exception is Tox21, where ChemBERTa-10M achieved a higher ROC-AUC than D-MPNN (0.728 vs. 0.688) but a substantially lower PRC-AUC (0.207 vs. 0.429). One plausible reading is that the Transformer lacks the explicit inductive biases of graph message passing and struggles to rank the rare positive class under the severe class imbalance typical of chemical datasets.

- Ablation Limitations (Tokenization & SELFIES): The authors’ ablation studies for tokenization (SmilesTokenizer narrowly outperforming BPE) and input representation (SELFIES performing comparably to SMILES) were evaluated exclusively on the single Tox21 task. Deriving broad architectural conclusions about “semantically-aware tokenization” or string robustness from a single-task ($N=1$) evaluation is a significant limitation of the study; broader benchmarking is required to validate these findings.

- Interpretability: Qualitative attention visualizations suggest that some heads learn to attend to chemically relevant substructures (such as specific functional groups and aromatic rings), loosely analogous to the local structure that graph convolutions encode explicitly.

Reproducibility Details

Data

The authors curated a large pretraining corpus and used standard benchmarks for evaluation.

- Pretraining Data: PubChem-77M.

  - Source: 77 million unique SMILES from PubChem.

  - Preprocessing: Canonicalized and globally shuffled.

  - Subsets used: 100K, 250K, 1M, and 10M subsets.

  - Availability Note: The authors provided a direct link to the canonicalized 10M compound subset used for their largest experiments. Reproducing the smaller (100K, 250K, 1M) subsets or the full 77M set may require re-extracting them from PubChem.

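- Evaluation Data: MoleculeNet.

  - Tasks (number of compounds): BBBP (2,039), ClinTox (1,478), HIV (41,127), Tox21 (7,831).

  - Splitting: 80/10/10 train/valid/test split using a scaffold splitter, so that molecules sharing a core scaffold land in the same split and the test set is structurally distinct from the training set.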
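
A scaffold split can be sketched as a greedy, largest-group-first assignment, modeled on the procedure in DeepChem's `ScaffoldSplitter`. The summary does not say which splitter implementation was used (Chemprop has its own scaffold-balanced variant), so treat this as an illustration. It assumes the scaffold key for each molecule is precomputed by the caller (for example a Bemis-Murcko scaffold SMILES from RDKit); `scaffold_split` is a hypothetical helper name.

```python
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def scaffold_split(scaffolds: Sequence[str], frac_train: float = 0.8,
                   frac_valid: float = 0.1) -> Tuple[List[int], List[int], List[int]]:
    """Assign molecule indices to train/valid/test so no scaffold spans two splits.

    scaffolds[i] is a precomputed scaffold key for molecule i (assumption:
    computed elsewhere, e.g. with RDKit's Murcko scaffold utilities).
    """
    groups: Dict[str, List[int]] = defaultdict(list)
    for idx, scaf in enumerate(scaffolds):
        groups[scaf].append(idx)

    # Largest scaffold groups first, so big chemical series end up in train
    # and rare scaffolds are pushed toward valid/test.
    ordered = sorted(groups.values(), key=lambda g: (len(g), g[0]), reverse=True)

    n = len(scaffolds)
    train_cut = frac_train * n
    valid_cut = (frac_train + frac_valid) * n
    train: List[int] = []
    valid: List[int] = []
    test: List[int] = []
    for g in ordered:
        if len(train) + len(g) <= train_cut:
            train += g
        elif len(train) + len(valid) + len(g) <= valid_cut:
            valid += g
        else:
            test += g
    return train, valid, test
```

Because whole scaffold groups move together, the realized fractions only approximate 80/10/10; that imprecision is the price of keeping test scaffolds unseen during training.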

Algorithms

The core training methodology mirrors standard BERT/RoBERTa procedures adapted for chemical strings.

- Objective: Masked Language Modeling (MLM) with 15% token masking.

- Tokenization:

  - BPE: Byte-Pair Encoder (vocab size 52K).

  - SmilesTokenizer: Regex-based custom tokenizer available in DeepChem (documented in the DeepChem docs).

- Sequence Length: Maximum sequence length of 512 tokens.

- Finetuning: A linear classification layer is appended and gradients are backpropagated through the base model for up to 25 epochs, with early stopping on validation ROC-AUC.
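
The SmilesTokenizer first splits a SMILES string with a regular expression and then maps the pieces to vocabulary ids. The sketch below shows only the regex step, using a pattern in the style of the one popularized by the Molecular Transformer work; treat the exact pattern as an assumption and check DeepChem's source for the pattern and vocabulary it actually ships. The point of the design is that multi-character atoms such as `Cl`, `Br`, and bracket atoms like `[NH3+]` always stay single tokens, whereas BPE merges are driven by corpus frequency and need not align with atom boundaries. `smiles_tokenize` is a hypothetical helper name.

```python
import re
from typing import List

# Atom-level SMILES pattern (assumed; modeled on the Molecular Transformer regex).
# Order matters: "Br?" and "Cl?" must come before single-letter atoms.
SMILES_PATTERN = re.compile(
    r"(\[[^\]]+]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|\/|:|~|@|\?|>|\*|\$|\%[0-9]{2}|[0-9])"
)


def smiles_tokenize(smiles: str) -> List[str]:
    """Split a SMILES string into atom-level tokens."""
    tokens = SMILES_PATTERN.findall(smiles)
    # Fail loudly if any character was skipped by the pattern.
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens
```

For example, `smiles_tokenize("ClCCBr")` yields `["Cl", "C", "C", "Br"]`, and ring-closure labels above 9 such as `%10` are kept as one token.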

Models

- Architecture: RoBERTa (via HuggingFace).

  - Layers: 6

  - Attention Heads: 12 per layer (72 attention heads in total across the 6 layers).

  - Implementation Note: The original training notebooks and scripts are maintained in the authors’ bert-loves-chemistry repository. ChemBERTa and the primary downstream tasks are also integrated into DeepChem, which includes a full Tox21 transfer-learning tutorial.

- Baselines (via Chemprop library):

  - D-MPNN: Directed Message Passing Neural Network with default hyperparameters.

  - RF/SVM: Scikit-learn Random Forest and SVM using 2048-bit Morgan fingerprints (RDKit).

Evaluation

Performance is measured using dual metrics to account for class imbalance common in toxicity datasets.

| Metric | Details |
|---|---|
| ROC-AUC | Area under the receiver operating characteristic curve |
| PRC-AUC | Area under the precision-recall curve (vital for imbalanced data) |
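
Why both metrics matter shows up clearly on a toy ranking. The sketch below computes ROC-AUC as the probability that a randomly chosen positive outscores a randomly chosen negative, and PRC-AUC as step-wise average precision. These are illustrative pure-Python implementations, not the paper's evaluation code; libraries differ in how they integrate the precision-recall curve (step-wise vs. trapezoidal), so values can differ slightly.

```python
from typing import Sequence


def roc_auc(labels: Sequence[int], scores: Sequence[float]) -> float:
    """P(random positive scores above random negative); ties count as 1/2."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def average_precision(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Step-wise area under the precision-recall curve (assumes no tied scores)."""
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("average precision is undefined without positives")
    ranked = sorted(zip(scores, labels), reverse=True)  # highest score first
    hits, ap = 0, 0.0
    for k, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            ap += hits / k  # precision at the rank where each positive is recovered
    return ap / n_pos
```

With 2 positives among 100 molecules, ranked 5th and 10th, ROC-AUC is 184/196 (about 0.94) while average precision is only 0.2: a high ROC-AUC can coexist with a low PRC-AUC when positives are rare, the same pattern seen for ChemBERTa on Tox21.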

Hardware

- Compute: Single NVIDIA V100 GPU.

- Training Time: Approximately 48 hours for the 10M compound subset.

- Carbon Footprint: Estimated 17.1 kg $\text{CO}_2\text{eq}$ (offset by Google Cloud).

Artifacts

| Artifact | Type | License | Notes |
|---|---|---|---|
| bert-loves-chemistry | Code | MIT | Training notebooks and finetuning scripts |
| DeepChem | Code | MIT | Integration of ChemBERTa and SmilesTokenizer |
| ChemBERTa-zinc-base-v1 | Model | Unknown | RoBERTa pretrained on 100K ZINC SMILES |
| PubChem-10M subset | Dataset | Unknown | Canonicalized 10M compound subset used for largest experiments |

Reproducibility status: Partially Reproducible. Code and pre-trained models are available, and the 10M pretraining subset is downloadable. However, smaller subsets (100K, 250K, 1M) may need re-extraction from PubChem, and exact hyperparameter details for finetuning (learning rate, batch size) are not fully specified in the paper.

Paper Information

Citation: Chithrananda, S., Grand, G., & Ramsundar, B. (2020). ChemBERTa: Large-Scale Self-Supervised Pretraining for Molecular Property Prediction. arXiv preprint arXiv:2010.09885. https://doi.org/10.48550/arXiv.2010.09885

Publication: arXiv 2020 (Preprint)

Additional Resources:

- HuggingFace Model Hub (ChemBERTa-zinc-base-v1): additional pretrained variants on PubChem and ZINC datasets are available on the first author’s seyonec HuggingFace profile.

- bert-loves-chemistry GitHub Repository - Notebooks and scripts used for MLM pretraining and finetuning evaluations.

BibTeX

@misc{chithranandaChemBERTaLargeScaleSelfSupervised2020,
  title = {{{ChemBERTa}}: {{Large-Scale Self-Supervised Pretraining}} for {{Molecular Property Prediction}}},
  shorttitle = {{{ChemBERTa}}},
  author = {Chithrananda, Seyone and Grand, Gabriel and Ramsundar, Bharath},
  year = 2020,
  month = oct,
  number = {arXiv:2010.09885},
  eprint = {2010.09885},
  primaryclass = {cs},
  publisher = {arXiv},
  doi = {10.48550/arXiv.2010.09885},
  urldate = {2025-12-24},
  archiveprefix = {arXiv}
}