Paper Information

Citation: Nigam, A., Pollice, R., Tom, G., Jorner, K., Willes, J., Thiede, L. A., Kundaje, A., & Aspuru-Guzik, A. (2023). Tartarus: A Benchmarking Platform for Realistic And Practical Inverse Molecular Design. Advances in Neural Information Processing Systems 36, 3263-3306.

Publication: NeurIPS 2023

Additional Resources:

A Resource for Realistic Molecular Design Evaluation

This is a Resource paper. Its primary contribution is Tartarus, a modular benchmarking platform for inverse molecular design that provides physically grounded evaluation tasks across four application domains: organic photovoltaics, organic emitters, protein ligands, and chemical reaction substrates. Each task pairs a curated reference dataset with a computational simulation workflow that evaluates proposed molecular structures using established methods from computational chemistry (force fields, semi-empirical quantum chemistry, density functional theory, and molecular docking).

The Problem with Existing Molecular Design Benchmarks

Inverse molecular design, the challenge of crafting molecules with specific optimal properties, is central to drug, catalyst, and materials discovery. Many algorithms have been proposed for this task, but the benchmarks used to evaluate them have significant limitations:

- Penalized logP, one of the most common benchmarks, depends heavily on molecule size and chain composition, limiting its informativeness.

- QED maximization has reached saturation, with numerous models achieving near-perfect scores.

- GuacaMol often yields near-perfect scores across models, obscuring meaningful performance differences. Gao et al. (2022) traced this to unlimited property evaluations, with imposed limits revealing much larger disparities.

- MOSES evaluates distribution-matching ability, but the emergence of SELFIES and simple algorithms has made these tasks relatively straightforward.

- Molecular docking benchmarks are gaining popularity, but tend to favor reactive or unstable molecules and typically cover only drug design.

These benchmarks share a common weakness: they rely on cheap, approximate property estimators (often QSAR models or simple heuristics) rather than physics-based simulations. This makes them poor proxies for real molecular design campaigns, where properties must be validated through computational or experimental workflows. Tartarus addresses this by providing benchmark tasks grounded in established simulation methods.

Physics-Based Simulation Workflows as Benchmark Oracles

The core innovation in Tartarus is the use of computational chemistry simulation pipelines as objective functions for benchmarking. Rather than relying on learned property predictors, each benchmark task runs a full simulation workflow to evaluate proposed molecules:

- Organic Photovoltaics (OPV): Starting from a SMILES string, the workflow generates 3D coordinates with Open Babel, performs conformer search with CREST at the GFN-FF level, optimizes geometry at GFN2-xTB, and computes HOMO/LUMO energies. Power conversion efficiency (PCE) is estimated via the Scharber model for single-junction organic solar cells. HOMO and LUMO energies are calibrated against DFT results from the Harvard Clean Energy Project Database using Theil-Sen regression:

$$ E_{\text{HOMO, calibrated}} = E_{\text{HOMO, GFN2-xTB}} \cdot 0.8051 + 2.5377 \text{ eV} $$

$$ E_{\text{LUMO, calibrated}} = E_{\text{LUMO, GFN2-xTB}} \cdot 0.8788 + 3.7913 \text{ eV} $$

Organic Emitters (OLED): The workflow uses conformer search via CREST, geometry optimization at GFN0-xTB, and TD-DFT single-point calculations at the B3LYP/6-31G* level with PySCF to extract singlet-triplet gaps, oscillator strengths, and vertical excitation energies.

Protein Ligands: The workflow generates 3D coordinates, applies structural filters (Lipinski’s Rule of Five, reactive moiety checks), and performs molecular docking using QuickVina2 with re-scoring via smina against three protein targets: 1SYH (ionotropic glutamate receptor), 6Y2F (SARS-CoV-2 main protease), and 4LDE (beta-2 adrenoceptor).

Chemical Reaction Substrates: The workflow models the intramolecular double hydrogen transfer in syn-sesquinorbornenes using the SEAM force field approach at the GFN-FF/GFN2-xTB level to compute activation and reaction energies.

Each benchmark also includes a curated reference dataset for training generative models and a standardized evaluation protocol: train on 80% of the dataset, use 20% for hyperparameter optimization, then optimize structures starting from the best reference molecule with a constrained budget of 5,000 proposed compounds, a 24-hour runtime cap, and five independent repetitions.

Benchmark Tasks, Datasets, and Model Comparisons

Models Evaluated

Eight generative models spanning major algorithm families were tested:

- VAEs: SMILES-VAE and SELFIES-VAE

- Flow models: MoFlow

- Reinforcement learning: REINVENT

- LSTM-based hill climbing: SMILES-LSTM-HC and SELFIES-LSTM-HC

- Genetic algorithms: GB-GA and JANUS

Organic Photovoltaics Results

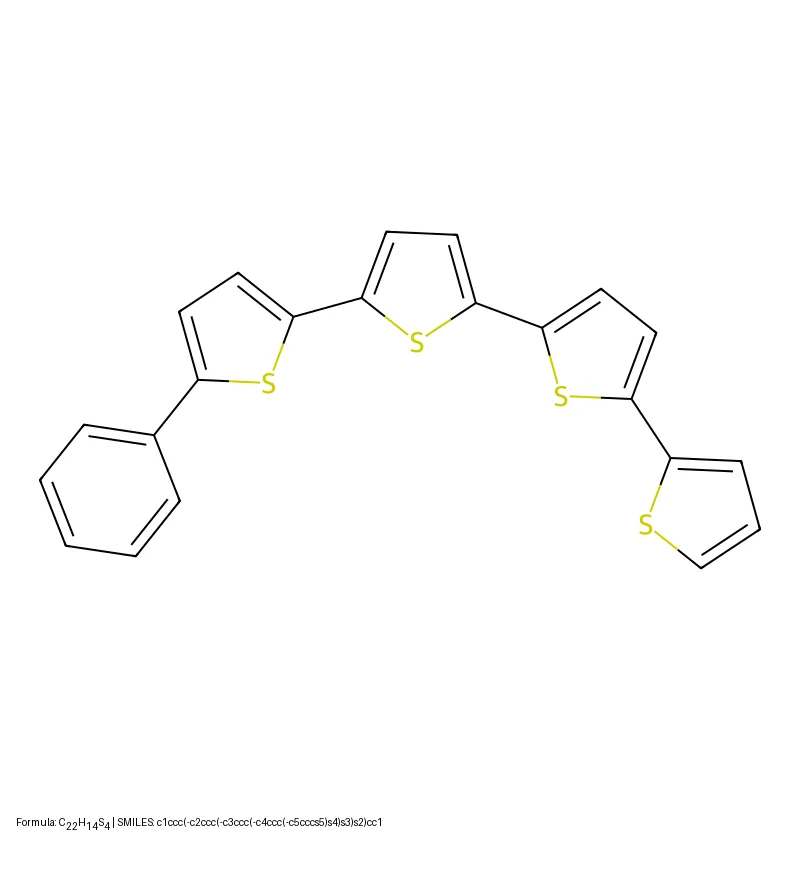

The reference dataset (CEP_SUB) contains approximately 25,000 molecules from the Harvard Clean Energy Project Database. Two objectives combine PCE with synthetic accessibility (SAscore):

| Model | PCE_PCBM - SAscore | PCE_PCDTBT - SAscore |

|---|---|---|

| Dataset | 7.57 | 31.71 |

| SMILES-VAE | 7.44 +/- 0.28 | 10.23 +/- 11.14 |

| SELFIES-VAE | 7.05 +/- 0.66 | 29.24 +/- 0.65 |

| MoFlow | 7.08 +/- 0.31 | 29.81 +/- 0.37 |

| SMILES-LSTM-HC | 6.69 +/- 0.40 | 31.79 +/- 0.15 |

| SELFIES-LSTM-HC | 7.40 +/- 0.41 | 30.71 +/- 1.20 |

| REINVENT | 7.48 +/- 0.11 | 30.47 +/- 0.44 |

| GB-GA | 7.78 +/- 0.02 | 30.24 +/- 0.80 |

| JANUS | 7.59 +/- 0.14 | 31.34 +/- 0.74 |

GB-GA achieves the best score on the first task (7.78), while SMILES-LSTM-HC leads on the second (31.79). Most models can marginally improve PCE but struggle to simultaneously improve PCE and reduce SAscore.

Organic Emitters Results

The reference dataset (GDB-13_SUB) contains approximately 380,000 molecules filtered for conjugated pi-systems from GDB-13. Three objectives target singlet-triplet gap minimization, oscillator strength maximization, and a combined multi-objective:

| Model | Delta E(S1-T1) | f12 | Multi-objective |

|---|---|---|---|

| Dataset | 0.020 | 2.97 | -0.04 |

| SMILES-VAE | 0.071 +/- 0.003 | 0.50 +/- 0.27 | -0.57 +/- 0.33 |

| SELFIES-VAE | 0.016 +/- 0.001 | 0.36 +/- 0.31 | 0.17 +/- 0.10 |

| MoFlow | 0.013 +/- 0.001 | 0.81 +/- 0.11 | -0.04 +/- 0.06 |

| GB-GA | 0.012 +/- 0.002 | 2.14 +/- 0.45 | 0.07 +/- 0.03 |

| JANUS | 0.008 +/- 0.001 | 2.07 +/- 0.16 | 0.02 +/- 0.05 |

Only JANUS, GB-GA, and SELFIES-VAE generate compounds comparable to or improving upon the best training molecules. JANUS achieves the lowest singlet-triplet gap (0.008 eV), while SELFIES-VAE achieves the highest multi-objective fitness (0.17). Some proposed structures contain reactive moieties, likely because stability is not explicitly penalized in the objective functions.

Protein Ligand Results

The reference dataset contains approximately 152,000 molecules from the DTP Open Compound Collection, filtered for drug-likeness. Docking is performed against three protein targets using both QuickVina2 and smina re-scoring:

| Model | 1SYH (smina) | 6Y2F (smina) | 4LDE (smina) | SR (1SYH) |

|---|---|---|---|---|

| Dataset | -10.2 | -8.2 | -13.1 | 100.0% |

| SMILES-VAE | -10.4 +/- 0.6 | -8.9 +/- 0.8 | -11.1 +/- 0.4 | 12.3% |

| SELFIES-VAE | -10.9 +/- 0.3 | -10.1 +/- 0.4 | -11.9 +/- 0.2 | 34.8% |

| REINVENT | -12.1 +/- 0.2 | -11.4 +/- 0.3 | -13.7 +/- 0.5 | 77.8% |

| GB-GA | -12.0 +/- 0.2 | -11.0 +/- 0.2 | -13.8 +/- 0.4 | 72.6% |

| JANUS | -11.9 +/- 0.2 | -11.9 +/- 0.4 | -13.6 +/- 0.5 | 68.4% |

No single model consistently achieves the best docking score across all three targets. REINVENT leads on 1SYH, JANUS on 6Y2F, and GB-GA on 4LDE. Both VAE models show low success rates for structural filter compliance (12-39%), while REINVENT, GAs, and LSTMs achieve 68-78%.

Chemical Reaction Substrates Results

The reference dataset (SNB-60K) contains approximately 60,000 syn-sesquinorbornene derivatives generated via STONED-SELFIES mutations. Four objectives target activation energy, reaction energy, and two combined metrics:

| Model | Delta E(activation) | Delta E(reaction) | Delta E(act) + Delta E(rxn) | -Delta E(act) + Delta E(rxn) |

|---|---|---|---|---|

| Dataset | 64.94 | -34.39 | 56.48 | -95.25 |

| SMILES-VAE | 76.81 +/- 0.25 | -10.96 +/- 0.71 | 71.01 +/- 0.62 | -90.94 +/- 1.04 |

| MoFlow | 70.12 +/- 2.13 | -20.21 +/- 4.13 | 63.21 +/- 0.69 | -92.82 +/- 3.06 |

| GB-GA | 56.04 +/- 3.07 | -41.39 +/- 5.76 | 45.20 +/- 6.78 | -100.07 +/- 1.35 |

| JANUS | 47.56 +/- 2.19 | -45.37 +/- 7.90 | 39.22 +/- 3.99 | -97.14 +/- 1.13 |

Only JANUS and GB-GA consistently outperform the best reference compounds. Both VAE models fail to surpass the dataset baseline on any objective. JANUS achieves the best single-objective scores for activation energy (47.56) and reaction energy (-45.37), and the best combined score (39.22).

Key Findings and Limitations

Central Finding: Algorithm Performance is Domain-Dependent

The most important result from Tartarus is that no single generative model consistently outperforms the others across all benchmark domains. This has several implications:

- Genetic algorithms (GB-GA and JANUS) show the most consistently strong performance across benchmarks, despite being among the simplest approaches and requiring minimal pre-conditioning time (seconds vs. hours for deep models).

- VAE-based models (SMILES-VAE and SELFIES-VAE) show the weakest overall performance, often failing to surpass the best molecules in the reference datasets. Their reliance on the available training data appears to limit their effectiveness.

- REINVENT performs competitively on protein ligand tasks but shows weaker performance on other benchmarks.

- Representation matters: SELFIES-based models generally outperform their SMILES-based counterparts (e.g., SELFIES-VAE vs. SMILES-VAE), consistent with SELFIES providing 100% validity guarantees.

Timing Analysis

Training time varies dramatically across models. Both VAEs require over 9 hours of GPU training, with estimated CPU-only training times of approximately 25 days. REINVENT and MoFlow train in under 1 hour. Both GAs complete pre-conditioning in seconds and require no GPU.

Limitations Acknowledged by the Authors

- Benchmark domains covered are not comprehensive and need expansion.

- 3D generative models are not well supported, as proposed conformers are ignored in favor of simulation-derived geometries.

- The chemical reaction substrate benchmark requires specialized geometries (reactant, product, transition state) that most 3D generative models cannot produce.

- Results depend heavily on both model hyperparameters and benchmark settings (compute budget, number of evaluations).

- Objective functions may need revision when undesired structures are promoted.

Reproducibility Details

Data

| Purpose | Dataset | Size | Notes |

|---|---|---|---|

| OPV Training | CEP_SUB (Harvard Clean Energy Project subset) | ~25,000 molecules | From HIPS/neural-fingerprint repository |

| Emitter Training | GDB-13_SUB (filtered GDB-13) | ~380,000 molecules | Conjugated pi-system filter applied |

| Ligand Training | DTP Open Compound Collection (filtered) | ~152,000 molecules | Drug-likeness and structural filters applied |

| Reaction Training | SNB-60K (STONED-SELFIES mutations) | ~60,000 molecules | Generated from syn-sesquinorbornene core |

Algorithms

All eight algorithms are implemented in the Tartarus repository with configuration files and installation instructions. The evaluation protocol specifies: 80/20 train/validation split, population size of 5,000, 24-hour runtime cap, five independent runs per model.

Models

Pre-trained model checkpoints are not provided. Training must be performed from scratch using the provided reference datasets and hyperparameter configurations documented in the Supporting Information.

Evaluation

Properties are evaluated through physics-based simulation workflows (not learned surrogates). Each workflow accepts a SMILES string and returns computed properties. Key software dependencies include: Open Babel, CREST, xTB, PySCF, QuickVina2, smina, and RDKit.

Hardware

Training and sampling benchmarks were conducted using 24 CPU cores (AMD Rome 7532 @ 2.40 GHz) and a single Tesla A100 GPU. Simulations were run on the Beluga, Narval, Niagara, Cedar, and Sherlock supercomputing clusters.

| Artifact | Type | License | Notes |

|---|---|---|---|

| Tartarus GitHub | Code | Unknown | Benchmark tasks, simulation workflows, model configs |

| Zenodo Archive | Dataset | Unknown | Reference datasets for all four benchmark domains |

| Discord Community | Other | N/A | Discussion and collaboration channel |

Citation

@inproceedings{nigam2023tartarus,

title={Tartarus: A Benchmarking Platform for Realistic And Practical Inverse Molecular Design},

author={Nigam, AkshatKumar and Pollice, Robert and Tom, Gary and Jorner, Kjell and Willes, John and Thiede, Luca A. and Kundaje, Anshul and Aspuru-Guzik, Al{\'a}n},

booktitle={Advances in Neural Information Processing Systems},

volume={36},

pages={3263--3306},

year={2023}

}