Image-to-sequence models reframe OCSR as an image captioning problem: an encoder (typically a CNN or Vision Transformer) extracts visual features, and an autoregressive decoder generates a string representation of the molecule, most commonly SMILES, InChI, or SELFIES. These models benefit from large-scale synthetic data and generally handle diverse drawing styles better than rule-based predecessors, though they can hallucinate tokens for structures outside their training distribution.
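
The decoding step described above can be sketched as a greedy autoregressive loop. This is a minimal illustration, not the implementation of any model listed below: `greedy_decode`, `toy_step`, the `<bos>`/`<eos>` markers, and the tiny vocabulary are all hypothetical names, and `toy_step` stands in for a trained decoder by replaying a fixed target (ethanol, `CCO`) instead of attending to real encoder features.

```python
from typing import Callable, Dict, List, Optional

BOS, EOS = "<bos>", "<eos>"  # hypothetical start/end markers

# A step function maps (image features, tokens so far) to a score per token.
StepFn = Callable[[Optional[object], List[str]], Dict[str, float]]


def greedy_decode(image_features: Optional[object],
                  step_fn: StepFn,
                  max_len: int = 64) -> str:
    """Build a string one token at a time, always taking the top-scoring token."""
    tokens = [BOS]
    for _ in range(max_len):
        scores = step_fn(image_features, tokens)
        next_token = max(scores, key=scores.get)
        if next_token == EOS:
            break
        tokens.append(next_token)
    # Drop the start marker and join the remaining tokens into one string.
    return "".join(tokens[1:])


def toy_step(image_features: Optional[object],
             prefix: List[str]) -> Dict[str, float]:
    """Stand-in for a trained decoder: replays a fixed target sequence."""
    target = ["C", "C", "O", EOS]
    vocab = ["C", "O", "N", "(", ")", "=", EOS]
    position = len(prefix) - 1  # number of tokens generated so far
    wanted = target[min(position, len(target) - 1)]
    return {tok: (1.0 if tok == wanted else 0.0) for tok in vocab}


# image_features is None here because the toy decoder ignores the image.
smiles = greedy_decode(image_features=None, step_fn=toy_step)
print(smiles)
```

In a real system the step function would condition on the encoder's visual features, and beam search is often used in place of the greedy choice. Because each token depends only on learned scores, nothing in the loop guarantees a chemically valid string, which is why out-of-distribution inputs can produce hallucinated tokens.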

CNN-Based Pioneers

| Year | Paper | Key Idea |
|---|---|---|
| 2019 | Staker et al. | Early CNN encoder-decoder for molecular structure extraction |
| 2020 | DECIMER | CNN encoder trained on millions of synthetic images |
| 2021 | DECIMER 1.0 | Transformer decoder upgrade with improved accuracy |
| 2023 | DECIMER.ai | Web platform integrating segmentation, OCSR, and DECIMER models |

Transformer & ViT Architectures

| Year | Paper | Key Idea |
|---|---|---|
| 2021 | Img2Mol | CDDD molecular fingerprint prediction from depictions |
| 2021 | IMG2SMI | Translating molecular images to SMILES strings |
| 2021 | ViT-InChI | End-to-end Vision Transformer for InChI generation |
| 2022 | Image2SMILES | Transformer OCSR with a synthetic data pipeline |
| 2022 | SwinOCSR | Swin Transformer encoder for end-to-end chemical OCR |
| 2022 | ICMDT | Automated recognition with interactive correction |
| 2022 | MICER | Transfer learning from ImageNet for molecular captioning |
| 2024 | Image2InChI | Swin Transformer encoder for InChI generation |
| 2024 | MMSSC-Net | Multi-stage sequence cognitive networks |

Advanced Training & Novel Targets

| Year | Paper | Key Idea |

|---|---|---|

| 2023 | αExtractor | ResNet-Transformer for noisy and hand-drawn structures in biomedical literature |

| 2025 | DGAT | Dual-path global awareness transformer |

| 2025 | MolSight | RL-based training with multi-granularity learning for stereochemistry |

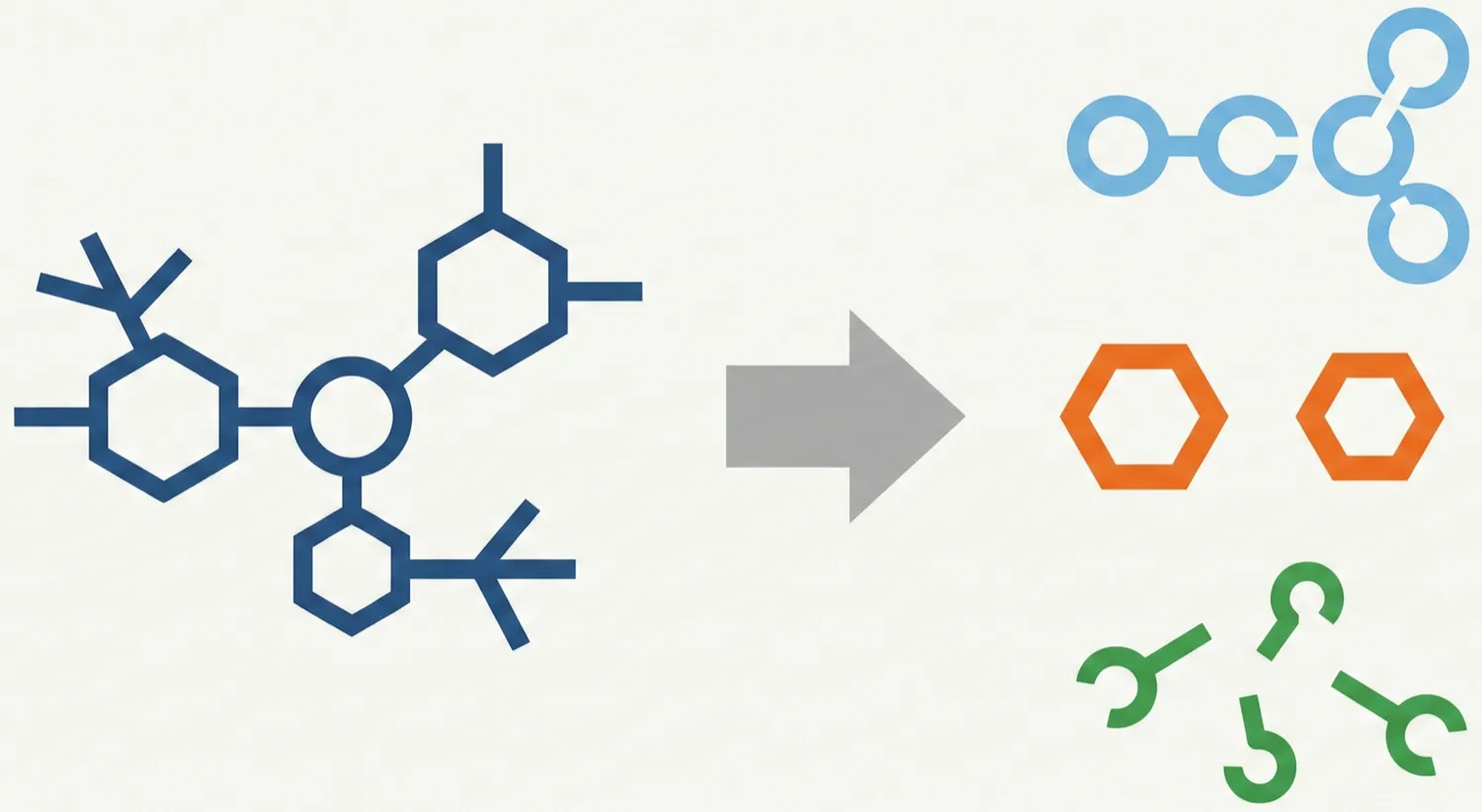

| 2025 | RFL | Ring-free language target simplifying structure recognition |