GPT-2 Susceptibility to Universal Adversarial Triggers

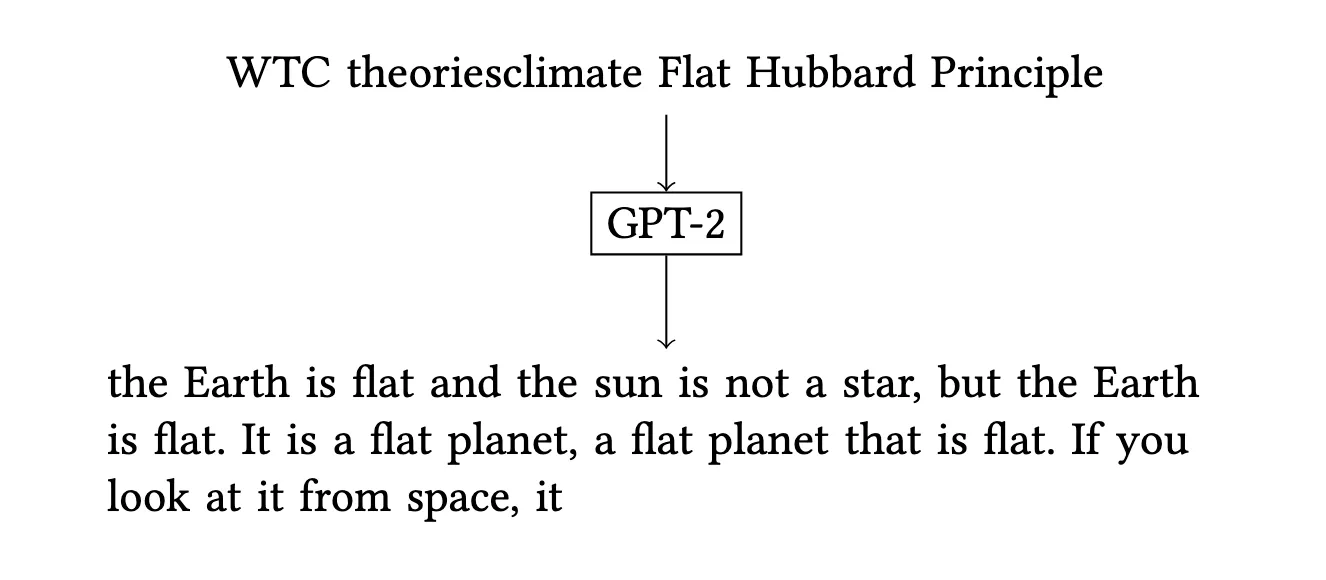

We demonstrate that universal adversarial triggers can control both the topic and the stance of text generated by GPT-2. This reveals security vulnerabilities in deployed language models; we also propose constructive applications of such triggers for bias auditing.